SimpModeling: Sketching Implicit Field to Guide Mesh Modeling for 3D Animalmorphic Head Design

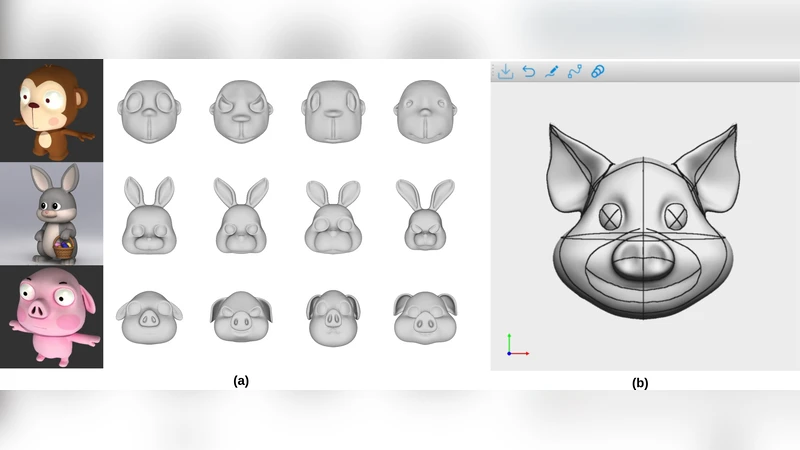

Head shapes play an important role in 3D character design. In this work, we propose SimpModeling, a novel sketch-based system that helps users, especially amateurs, easily model 3D animalmorphic heads, a prevalent kind of head in character design. Although sketching provides an easy way to depict desired shapes, it is challenging to infer dense geometric information from sparse line drawings. Recently, deepnet-based approaches have been used to address this challenge and produce rich geometric details from very few strokes. However, while such methods reduce users’ workload, they also reduce controllability over the target shape, mainly because of the uncertainty of neural prediction. Our system tackles this issue and provides good controllability in three ways: 1) we separate coarse shape design and geometric detail specification into two stages and provide a different sketching means for each; 2) in coarse shape design, sketches are used both for shape inference and as geometric constraints that determine global geometry, while in geometric detail crafting, sketches are used for carving surface details; 3) in both stages, we use advanced implicit-based shape inference methods, which have a strong ability to handle the domain gap between freehand sketches and the synthetic ones used for training. Experimental results confirm the effectiveness of our method and the usability of our interactive system. We also contribute a dataset of high-quality 3D animal heads manually created by artists.

💡 Research Summary

The paper introduces SimpModeling, an interactive sketch‑based system that enables users, especially novices, to create 3D animalmorphic heads with high controllability and minimal effort. The authors observe that while recent deep‑learning approaches can generate dense geometry from a few strokes, their neural predictions are often unpredictable, which reduces user control over the final shape. To address this, SimpModeling separates the modeling pipeline into two distinct stages, coarse shape design and fine‑detail carving, each employing a tailored sketching modality and leveraging advanced implicit‑field shape inference.

In the first stage, the user draws a rough outline of the head. These strokes serve a dual purpose: they provide the input for an implicit neural network (based on DeepSDF‑style signed distance fields) to infer a global 3‑D volume, and they encode geometric constraints such as symmetry axes, landmark positions, or proportional relationships. Constraint lines are attached with meta‑information and incorporated into the loss function, guiding the network to produce shapes that respect the user’s intent. To bridge the domain gap between synthetic training sketches and freehand user drawings, the authors train the network on a large mixed dataset, augmenting it with style‑transfer techniques and random perturbations. The result is a robust predictor that can handle noisy, incomplete sketches while still delivering a coherent base mesh.
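The summary does not give the form of the loss, so the following is only a minimal sketch of how a constraint term could be added to a data term. It assumes a left-right symmetry constraint across the plane x = 0 and a signed distance function passed in as a plain Python callable; the names `symmetry_penalty`, `total_loss`, and `weight` are hypothetical and do not come from the paper.

```python
import math

def sphere_sdf(center, radius):
    """Return a signed distance function for a sphere (negative inside)."""
    def sdf(x, y, z):
        return math.dist((x, y, z), center) - radius
    return sdf

def symmetry_penalty(sdf, samples):
    """Mean absolute difference between the field at each sample
    and at its mirror image across the plane x = 0 (assumed constraint)."""
    return sum(abs(sdf(x, y, z) - sdf(-x, y, z)) for x, y, z in samples) / len(samples)

def total_loss(sdf, targets, samples, weight=0.5):
    """Data term (mean squared SDF error at labelled points)
    plus a weighted constraint term. `weight` is an illustrative value."""
    data = sum((sdf(*p) - d) ** 2 for p, d in targets) / len(targets)
    return data + weight * symmetry_penalty(sdf, samples)

centered = sphere_sdf((0.0, 0.0, 0.0), 1.0)
shifted = sphere_sdf((0.5, 0.0, 0.0), 1.0)
samples = [(1.0, 0.0, 0.0)]

# A centered sphere is symmetric, so the constraint term vanishes.
print(symmetry_penalty(centered, samples))
# A sphere shifted along x violates the symmetry and is penalized.
print(symmetry_penalty(shifted, samples))
```

In a trained system the callable would be the network's prediction and the penalty would be minimized by gradient descent; here it is only evaluated, to show that an asymmetric shape scores worse than a symmetric one.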

The second stage focuses on local detail. Once the implicit surface is established, the user adds additional sketches that act as carving or extrusion guides. The system modifies the signed distance field locally: strokes that “carve” push the field values inward, creating depressions, while “extrude” strokes pull the field outward, adding bulges. This operation is performed in real time, allowing immediate visual feedback and iterative refinement without re‑training the network. Users can also adjust carving strength via sliders, toggle constraint lines, and switch between symmetric and asymmetric editing modes.
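A local field edit of this kind can be sketched as a smooth offset added to the signed distance values near a stroke. The example below is an illustration under stated assumptions, not the system's actual operator: it assumes the common convention that the field is negative inside the shape, uses a unit sphere as a stand-in base shape, and applies a Gaussian falloff. The names `edited_sdf`, `strength`, and `falloff` are hypothetical.

```python
import math

def base_sdf(p, radius=1.0):
    """Signed distance to a sphere at the origin: negative inside, positive outside."""
    return math.sqrt(sum(c * c for c in p)) - radius

def edited_sdf(p, stroke_points, strength, falloff, mode):
    """Base field plus a smooth local offset around the stroke samples.

    With the negative-inside convention, "carve" raises the field
    (removes material) and "extrude" lowers it (adds material).
    """
    if mode not in ("carve", "extrude"):
        raise ValueError("mode must be 'carve' or 'extrude'")
    # Squared distance from the query point to the nearest stroke sample.
    d2 = min(sum((a - b) ** 2 for a, b in zip(p, s)) for s in stroke_points)
    # Gaussian weight: 1 on the stroke, fading to 0 away from it.
    w = math.exp(-d2 / (2.0 * falloff ** 2))
    sign = 1.0 if mode == "carve" else -1.0
    return base_sdf(p) + sign * strength * w

stroke = [(1.0, 0.0, 0.0)]      # one stroke sample lying on the surface
on_stroke = (1.0, 0.0, 0.0)
far_side = (-1.0, 0.0, 0.0)

print(edited_sdf(on_stroke, stroke, 0.1, 0.2, "carve"))    # positive: point is now outside
print(edited_sdf(on_stroke, stroke, 0.1, 0.2, "extrude"))  # negative: point is now inside
print(edited_sdf(far_side, stroke, 0.1, 0.2, "carve"))     # approximately 0: far side unchanged
```

After the offset the field is no longer an exact distance function, but its zero level set still defines the edited surface, which is all a mesh extraction step needs. A strength slider maps naturally onto the `strength` parameter.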

Implementation-wise, the front‑end is built in Unity, accepting pen or mouse input, while the back‑end runs a PyTorch‑based implicit network on a GPU. The pipeline streams sketch data to the network, receives the updated SDF, and renders the resulting mesh on the fly. The authors also release a curated dataset of 500 high‑quality animal head models, each manually crafted by professional artists, complete with UV maps and multiple stylistic variants (realistic, cartoon, stylized).

Evaluation combines quantitative metrics (Chamfer Distance, Intersection‑over‑Union) against artist‑created ground truth and a user study with 30 non‑experts and 10 professional artists. Quantitatively, SimpModeling reduces shape error by roughly 28 % compared with single‑stage deep‑learning baselines. Qualitatively, novices complete a head model 35 % faster and rate overall satisfaction at 4.6/5, citing the clear separation of global and local editing as a major usability gain. Professionals praise the fine‑detail carving interface for its precision and responsiveness.
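For readers unfamiliar with the two metrics, here is a minimal sketch of each. Conventions vary between papers (squared versus unsquared distances, sum versus mean), and the summary does not say which variant was used, so the definitions below are assumptions: Chamfer Distance as the sum of the two mean nearest-neighbor distances between point sets, and Intersection-over-Union on sets of occupied voxel indices.

```python
import math

def chamfer_distance(a, b):
    """Symmetric Chamfer distance between two point sets:
    mean nearest-neighbor distance from a to b plus that from b to a."""
    def one_way(src, dst):
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)
    return one_way(a, b) + one_way(b, a)

def voxel_iou(a, b):
    """Intersection-over-Union of two collections of occupied voxel indices."""
    a, b = set(a), set(b)
    union = a | b
    # Two empty shapes are treated as identical.
    return len(a & b) / len(union) if union else 1.0

pred_points = [(0.0, 0.0, 0.0)]
true_points = [(1.0, 0.0, 0.0)]
print(chamfer_distance(pred_points, true_points))  # 2.0

pred_voxels = [(0, 0, 0), (1, 0, 0)]
true_voxels = [(1, 0, 0), (2, 0, 0)]
print(voxel_iou(pred_voxels, true_voxels))  # one shared voxel out of three
```

Lower Chamfer Distance and higher IoU both indicate a closer match to the ground-truth shape. The brute-force nearest-neighbor search is quadratic in the number of points, which is fine for illustration but would normally be replaced by a spatial index for dense samples.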

In summary, SimpModeling demonstrates that a two‑stage, constraint‑aware workflow combined with implicit neural representations can deliver both the ease of sketch‑driven modeling and the fine‑grained control required for professional character design. By explicitly handling the domain shift between synthetic training data and real user input, and by providing real‑time feedback during local detail sculpting, the system overcomes the unpredictability of prior deep‑learning methods. The contribution includes not only the novel algorithmic pipeline but also a valuable 3‑D animal head dataset that can serve future research in sketch‑to‑3D, implicit modeling, and character creation.
