Gemmini: Enabling Systematic Deep-Learning Architecture Evaluation via Full-Stack Integration

DNN accelerators are often developed and evaluated in isolation, without considering the cross-stack, system-level effects in real-world environments. This makes it difficult to appreciate the impact of System-on-Chip (SoC) resource contention, OS overheads, and programming-stack inefficiencies on overall performance and energy efficiency. To address this challenge, we present Gemmini, an open-source (https://github.com/ucb-bar/gemmini), full-stack DNN accelerator generator. Gemmini generates a wide design space of efficient ASIC accelerators from a flexible architectural template, together with flexible programming stacks and full SoCs with shared resources that capture system-level effects. Gemmini-generated accelerators have also been fabricated, delivering up to three orders of magnitude speedup over high-performance CPUs on various DNN benchmarks.

💡 Research Summary

The paper addresses a critical gap in deep‑neural‑network (DNN) accelerator research: most existing generators focus solely on the accelerator hardware and ignore system‑level effects such as on‑chip resource contention, operating‑system overhead, and memory‑hierarchy interactions. To bridge this gap, the authors introduce Gemmini, an open‑source, full‑stack DNN accelerator generator that spans hardware, software, and system integration.

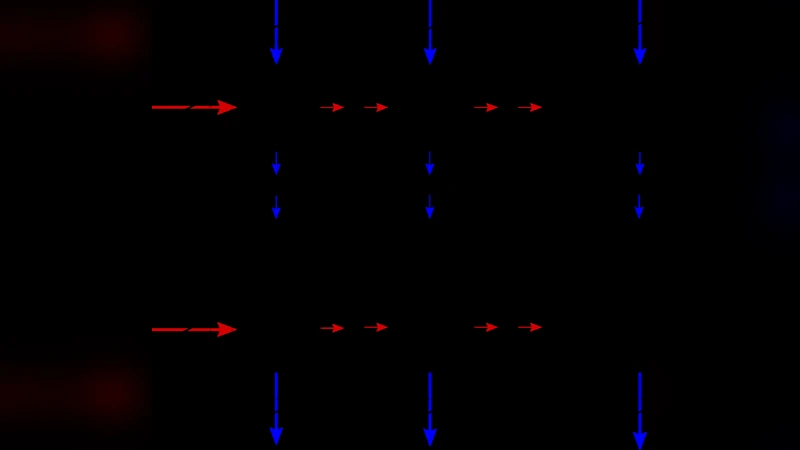

Hardware template – At the core of Gemmini is a highly parameterizable spatial array. Designers can choose between a systolic (TPU‑like) and a vector (NVDLA‑like) organization, and can set the number of processing elements (PEs), the tile dimensions, the placement of pipeline registers, and the dataflow (weight‑stationary or output‑stationary). The template also includes peripheral blocks for pooling, ReLU, im2col, and matrix‑scalar multiplication. Two concrete instantiations with 256 PEs are presented: the systolic version reaches a 2.7× higher clock frequency but uses 1.8× the area and 3× the power of the vector version, illustrating the trade‑offs that can be explored rapidly.
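As a rough illustration of the design space described above, the following Python sketch models one design point as a two-level array: a mesh of tiles with pipeline registers assumed between tiles, and combinational PEs assumed within a tile. The class and field names (`ArrayConfig`, `mesh_rows`, `tile_rows`, and so on) are hypothetical and chosen for this example; they are not taken from the Gemmini source.

```python
from dataclasses import dataclass
from enum import Enum


class Dataflow(Enum):
    WEIGHT_STATIONARY = "WS"
    OUTPUT_STATIONARY = "OS"


@dataclass(frozen=True)
class ArrayConfig:
    """Hypothetical description of one spatial-array design point."""
    mesh_rows: int   # tiles along one dimension; registers between tiles (assumed)
    mesh_cols: int
    tile_rows: int   # PEs per tile; combinational within a tile (assumed)
    tile_cols: int
    dataflow: Dataflow

    @property
    def num_pes(self) -> int:
        # Total PEs = number of tiles * PEs per tile.
        return self.mesh_rows * self.mesh_cols * self.tile_rows * self.tile_cols

    @property
    def fully_pipelined(self) -> bool:
        # A 1x1 tile means every PE is separated from its neighbours by registers.
        return self.tile_rows == 1 and self.tile_cols == 1


def square_design_points(dim: int = 16):
    """Enumerate every square tiling of a dim x dim array, for both dataflows."""
    points = []
    for tile in range(1, dim + 1):
        if dim % tile:
            continue  # tile size must divide the array dimension
        for df in Dataflow:
            points.append(ArrayConfig(dim // tile, dim // tile, tile, tile, df))
    return points


# The two 256-PE extremes: fully pipelined vs. fully combinational.
systolic_like = ArrayConfig(16, 16, 1, 1, Dataflow.WEIGHT_STATIONARY)
vector_like = ArrayConfig(1, 1, 16, 16, Dataflow.WEIGHT_STATIONARY)

for cfg in square_design_points():
    print(cfg, "PEs:", cfg.num_pes, "fully pipelined:", cfg.fully_pipelined)
```

Every point produced by `square_design_points()` has the same PE count; only the register placement and dataflow differ, which is the kind of sweep a generator makes cheap to explore.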

Software stack – Gemmini provides a multi‑level programming environment. At the high level, a push‑button flow parses ONNX models, maps as many operators as possible onto the accelerator, and produces executable binaries. At the low level, a C/C++ API and auto‑generated header files expose accelerator parameters (array size, scratchpad capacity, supported dataflows) so developers can fine‑tune tile sizes, loop ordering, and memory staging for each kernel. Crucially, Gemmini integrates virtual‑memory support directly into the accelerator, including a local TLB. Profiling ResNet‑50 inference shows TLB miss rates of 20–30%, which the summary attributes to the tiled nature of DNN workloads and which are far higher than those of typical CPU benchmarks. The authors use this visibility to co‑design a compact translation system that achieves near‑optimal end‑to‑end performance with only a few TLB entries.
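To make the link between tiled access and TLB behaviour concrete, here is a toy Python model. It assumes 4 KiB pages, a fully associative LRU TLB, and one translation request per tile row of a row-major matrix; the function names are hypothetical. It does not model Gemmini's actual translation hardware and is not meant to reproduce the miss rates reported in the paper.

```python
from collections import OrderedDict

PAGE_SIZE = 4096  # bytes; assumed 4 KiB pages


def tiled_matrix_trace(base, rows, cols, elem_bytes, tile):
    """Yield the start address of each tile row, visiting tiles in row-major order."""
    row_stride = cols * elem_bytes
    for tr in range(0, rows, tile):
        for tc in range(0, cols, tile):
            for r in range(tr, min(tr + tile, rows)):
                yield base + r * row_stride + tc * elem_bytes


def tlb_miss_rate(addresses, entries):
    """Fraction of requests that miss in a fully associative LRU TLB."""
    tlb = OrderedDict()  # virtual page number -> placeholder, oldest first
    misses = total = 0
    for addr in addresses:
        vpn = addr // PAGE_SIZE
        total += 1
        if vpn in tlb:
            tlb.move_to_end(vpn)         # refresh LRU position on a hit
        else:
            misses += 1
            tlb[vpn] = True
            if len(tlb) > entries:
                tlb.popitem(last=False)  # evict the least recently used page
    return misses / total if total else 0.0


# A 256 x 4096 int8 matrix: each row is exactly one page long,
# so every row of a 16 x 16 tile lives on a different page.
trace = list(tiled_matrix_trace(base=0, rows=256, cols=4096, elem_bytes=1, tile=16))
for entries in (4, 8, 16, 32):
    print(entries, "entries -> miss rate", tlb_miss_rate(trace, entries))
```

In this trace the row stride equals the page size, so a TLB with fewer entries than the tile height thrashes under LRU, while one that covers the tile height misses only when a new band of rows begins. Changing the matrix width or tile size shifts that threshold, which is why translation hardware is worth co-designing with the tiling scheme.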

Full‑stack SoC integration – Gemmini is built on the Chipyard framework and can be attached to a wide range of RISC‑V CPUs, from simple in‑order microcontrollers to out‑of‑order server‑class cores. Multi‑core, multi‑accelerator configurations are supported, with configurable bus widths, shared L2 caches, and cache associativity. The paper demonstrates a dual‑core system where each core has its own Gemmini accelerator; by tuning memory partitioning based on layer‑wise compute characteristics, overall throughput improves by more than 8%. Full Linux support enables realistic evaluation: context switches, page‑table evictions, and other OS events expose bugs (e.g., a non‑deterministic deadlock) that would be invisible in a bare‑metal setup.
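One way to see why "layer-wise compute characteristics" matter for memory provisioning is to compare the arithmetic intensity (operations per byte moved) of different layer types. The Python sketch below is a back-of-the-envelope estimate, assuming stride-1 convolutions with same-size output, int8 data, and each tensor moved exactly once; the function names are hypothetical and the model is not the paper's methodology.

```python
def conv_intensity(h_out, w_out, c_in, c_out, k, elem_bytes=1):
    """MACs per byte for a k x k convolution (stride 1, same-size output assumed)."""
    macs = h_out * w_out * c_out * c_in * k * k
    in_bytes = h_out * w_out * c_in * elem_bytes    # input activations
    w_bytes = k * k * c_in * c_out * elem_bytes     # weights
    out_bytes = h_out * w_out * c_out * elem_bytes  # output activations
    return macs / (in_bytes + w_bytes + out_bytes)


def residual_add_intensity(h, w, c, elem_bytes=1):
    """Additions per byte for an element-wise residual add (two reads, one write)."""
    ops = h * w * c
    bytes_moved = 3 * h * w * c * elem_bytes
    return ops / bytes_moved


# Illustrative shapes only: a 3x3, 64-channel convolution on a 56 x 56 feature map
# versus a residual addition on a tensor of the same shape.
print("conv:", conv_intensity(56, 56, 64, 64, 3))
print("residual add:", residual_add_intensity(56, 56, 64))
```

Under these assumptions the convolution performs hundreds of operations per byte while the residual addition performs fewer than one, so the two layer types place very different demands on the memory system.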

Evaluation – Gemmini‑generated accelerators have been fabricated in both TSMC 16 nm FinFET and Intel 22 nm Low‑Power processes. On a suite of DNN benchmarks, the generated designs achieve up to 2,670× speedup over a high‑performance CPU and comparable performance to a state‑of‑the‑art commercial accelerator with similar hardware budgets. The authors also perform FPGA‑based performance measurements and ASIC synthesis to validate area, power, and frequency predictions across the design space.

Contributions – 1) An open‑source infrastructure that couples a flexible hardware template, a layered software stack, and a complete SoC environment, enabling systematic, end‑to‑end evaluation of DNN architectures. 2) Rigorous benchmarking that shows Gemmini‑generated designs can match or exceed commercial solutions while providing transparent access to system‑level metrics. 3) Demonstrations of co‑design opportunities: virtual‑address translation schemes tailored to DNN workloads and memory‑resource provisioning that balances the needs of different layer types.

In summary, Gemmini represents the first comprehensive platform that unifies accelerator hardware generation with software tooling and full‑system integration. By exposing the interplay between architectural choices, programming models, and system resources, it opens new research avenues for designing future deep‑learning SoCs and reduces the risk of hidden performance or reliability issues that only surface in realistic, OS‑driven environments.
