The Audio Auditor: User-Level Membership Inference in Internet of Things Voice Services

With the rapid development of deep learning techniques, voice services implemented on various Internet of Things (IoT) devices are becoming increasingly popular. In this paper, we examine user-level membership inference in the problem space of voi…

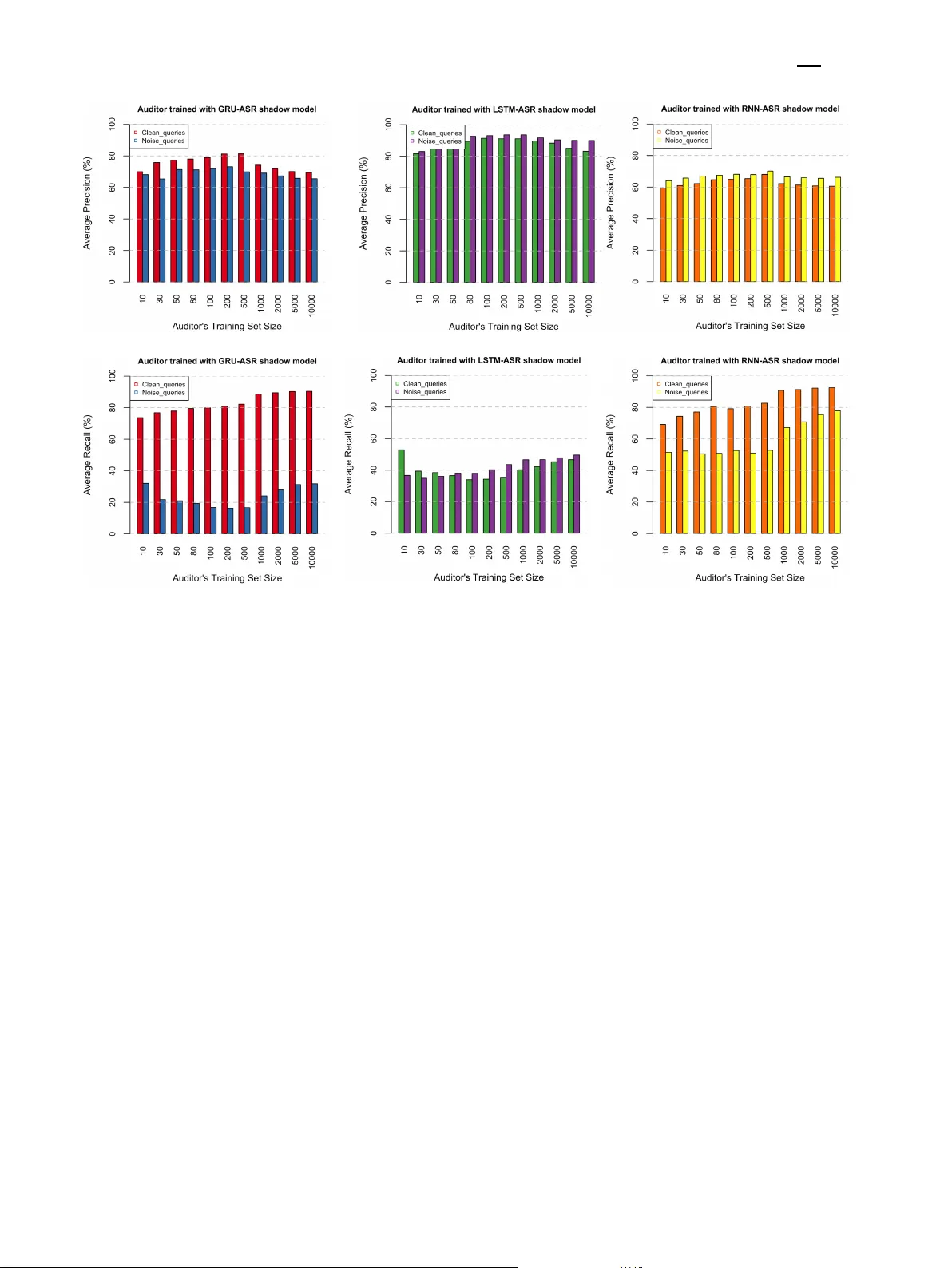

Authors: Yuantian Miao, Minhui Xue, Chao Chen