Learning Gaussian Networks

We describe algorithms for learning Bayesian networks from a combination of user knowledge and statistical data. The algorithms have two components: a scoring metric and a search procedure. The scoring metric takes a network structure, statistical data, and a user’s prior knowledge, and returns a score proportional to the posterior probability of the network structure given the data. The search procedure generates networks for evaluation by the scoring metric. Previous work has concentrated on metrics for domains containing only discrete variables, under the assumption that data represents a multinomial sample. In this paper, we extend this work, developing scoring metrics for domains containing all continuous variables or a mixture of discrete and continuous variables, under the assumption that continuous data is sampled from a multivariate normal distribution. Our work extends traditional statistical approaches for identifying vanishing regression coefficients in that we identify two important assumptions, called event equivalence and parameter modularity, that when combined allow the construction of prior distributions for multivariate normal parameters from a single prior Bayesian network specified by a user.

💡 Research Summary

The paper presents a Bayesian framework for learning the structure of belief networks whose continuous variables are assumed to follow a multivariate normal distribution. Traditional approaches to Bayesian network learning have focused on discrete variables, treating the data as a sample from a multinomial distribution. To handle continuous data, prior work typically discretized each variable, which loses information and inflates the number of parameters, since a discrete conditional probability table grows exponentially with the number of parents. This work eliminates the need for discretization by modeling continuous variables directly with Gaussian (normal) distributions and by extending the scoring‑metric/search‑procedure paradigm to this setting.
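As a rough illustration of the parameter growth that discretization causes, the sketch below counts free parameters for a single node with a given number of parents under two local models: a full conditional probability table after binning every variable into `k` states, and a linear Gaussian conditional. The function names and the choice `k = 5` are illustrative assumptions, not taken from the paper.

```python
def discrete_cpt_params(k: int, n_parents: int) -> int:
    """Free parameters in a full conditional probability table.

    Each of the k**n_parents parent configurations needs k - 1
    free probabilities (the last one is fixed by normalization).
    """
    return (k - 1) * k ** n_parents


def linear_gaussian_params(n_parents: int) -> int:
    """Free parameters in a linear Gaussian conditional.

    One mean, one conditional variance, and one regression
    coefficient per parent.
    """
    return n_parents + 2


# Compare the two counts as the number of parents grows (k = 5 bins assumed).
for n_parents in range(5):
    print(n_parents,
          discrete_cpt_params(5, n_parents),
          linear_gaussian_params(n_parents))
```

The discrete count grows geometrically with the number of parents, while the Gaussian count grows linearly.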

The key contributions are two assumptions: event equivalence and parameter modularity. Event equivalence states that two network structures encoding the same set of conditional independence assertions correspond to the same probabilistic event and should therefore receive identical scores. Parameter modularity asserts that the prior distribution of the parameters for a given variable depends only on that variable's parent set, not on the rest of the graph. Together, these assumptions allow prior distributions for the multivariate normal parameters of every candidate structure to be constructed from a single user‑specified prior network.

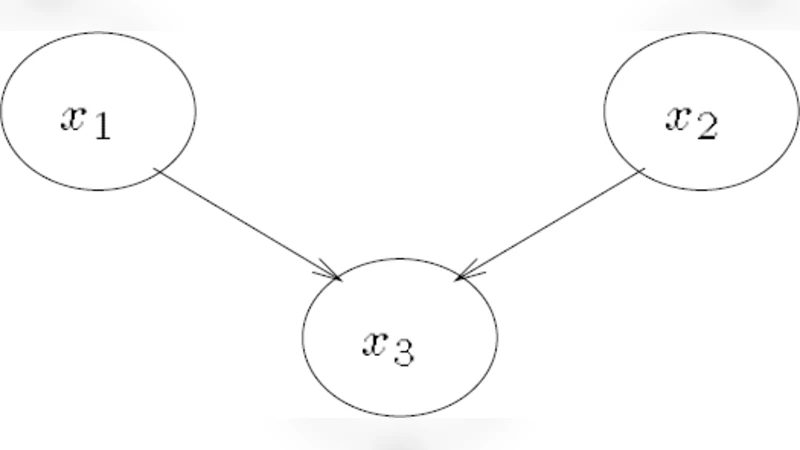

The authors define a Gaussian belief network as a directed acyclic graph (DAG) in which each node \(x_i\) has the conditional distribution \(x_i \mid \text{Pa}(x_i) \sim N\big(m_i + \sum_{x_j \in \text{Pa}(x_i)} b_{ij}(x_j - m_j),\ 1/v_i\big)\). Here \(v_i\) is a conditional variance, so the second argument \(1/v_i\) is a precision rather than a variance. The regression coefficients \(b_{ij}\), conditional variances \(v_i\), and unconditional means \(m_i\) together determine the joint multivariate normal distribution uniquely, including its precision matrix \(W = \Sigma^{-1}\). Equation 5 of the paper, which builds \(W\) recursively from the local parameters, is central to translating between the local network representation and the global covariance structure.
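The recursive construction of the precision matrix from local parameters can be sketched as follows. This is a minimal illustration, not the paper's code. It assumes the nodes are indexed in a topological order, that `b[i, j]` holds the coefficient of parent `j` in the regression for node `i` (zero when `j` is not a parent), and that `v[i]` is the conditional variance of node `i`. The function name and the three-node chain are hypothetical.

```python
import numpy as np


def precision_from_local_params(b: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Build the joint precision matrix W one node at a time.

    Assumes b is strictly lower triangular (topological ordering)
    and v holds conditional variances.
    """
    n = len(v)
    W = np.array([[1.0 / v[0]]])  # first node has no parents
    for i in range(1, n):
        b_i = b[i, :i].reshape(-1, 1)        # coefficients on earlier nodes
        top_left = W + (b_i @ b_i.T) / v[i]  # update the existing block
        top_right = -b_i / v[i]              # new off-diagonal column
        corner = np.array([[1.0 / v[i]]])    # new diagonal entry
        W = np.block([[top_left, top_right],
                      [top_right.T, corner]])
    return W


# Hypothetical chain x0 -> x1 -> x2.
b = np.array([[0.0, 0.0, 0.0],
              [0.8, 0.0, 0.0],
              [0.0, -0.5, 0.0]])
v = np.array([1.0, 0.5, 2.0])

W = precision_from_local_params(b, v)
Sigma = np.linalg.inv(W)  # joint covariance implied by the local parameters
print(np.round(W, 3))
```

In this chain the entry `W[0, 2]` is zero, reflecting that `x0` and `x2` are conditionally independent given `x1`. The result also agrees with the closed form `(I - b).T @ diag(1 / v) @ (I - b)`, which gives a quick consistency check.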

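For scoring, the paper adopts the Bayesian posterior probability of a network structure given data \(D\). By Bayes' rule, this posterior is proportional to the prior probability of the structure multiplied by the marginal likelihood of \(D\) under that structure, so the scoring metric only needs to be computed up to a constant factor.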
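A score proportional to the posterior of a structure combines a structure prior with the marginal likelihood of the data. The sketch below illustrates that shape on synthetic continuous data. It does not implement the paper's metric: as a stand-in for the log marginal likelihood it uses the BIC approximation (maximized linear Gaussian log-likelihood minus a complexity penalty), and it assumes a uniform structure prior. The function names, the `parents` dictionary encoding, and the data are all illustrative assumptions.

```python
import numpy as np


def node_log_likelihood(data: np.ndarray, child: int, parents: list[int]) -> float:
    """Maximized Gaussian log-likelihood of one node regressed on its parents."""
    n = data.shape[0]
    y = data[:, child]
    # Design matrix: intercept column plus one column per parent.
    X = np.column_stack([np.ones(n)] + [data[:, p] for p in parents])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ coef
    sigma2 = resid @ resid / n  # ML estimate of the conditional variance
    return -0.5 * n * (np.log(2.0 * np.pi * sigma2) + 1.0)


def bic_log_score(data: np.ndarray, parents: dict[int, list[int]],
                  log_prior: float = 0.0) -> float:
    """Stand-in log score: log structure prior + BIC-penalized log-likelihood."""
    n = data.shape[0]
    score = log_prior
    for child, pa in parents.items():
        k = len(pa) + 2  # intercept, variance, one coefficient per parent
        score += node_log_likelihood(data, child, pa) - 0.5 * k * np.log(n)
    return score


# Synthetic data in which x1 depends linearly on x0.
rng = np.random.default_rng(0)
x0 = rng.normal(size=500)
x1 = 2.0 * x0 + rng.normal(scale=0.5, size=500)
data = np.column_stack([x0, x1])

empty = {0: [], 1: []}      # no edge
forward = {0: [], 1: [0]}   # x0 -> x1
backward = {0: [1], 1: []}  # x1 -> x0

scores = {name: bic_log_score(data, s)
          for name, s in [("empty", empty), ("forward", forward), ("backward", backward)]}
print(scores)
```

With this stand-in score, the two single-edge structures encode the same independence assertions and receive the same value up to floating-point error, which is the behavior that event equivalence requires of a score. That is a property of the BIC stand-in and should not be read as a demonstration of the paper's metric.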
