Cascade and Parallel Convolutional Recurrent Neural Networks on EEG-based Intention Recognition for Brain Computer Interface

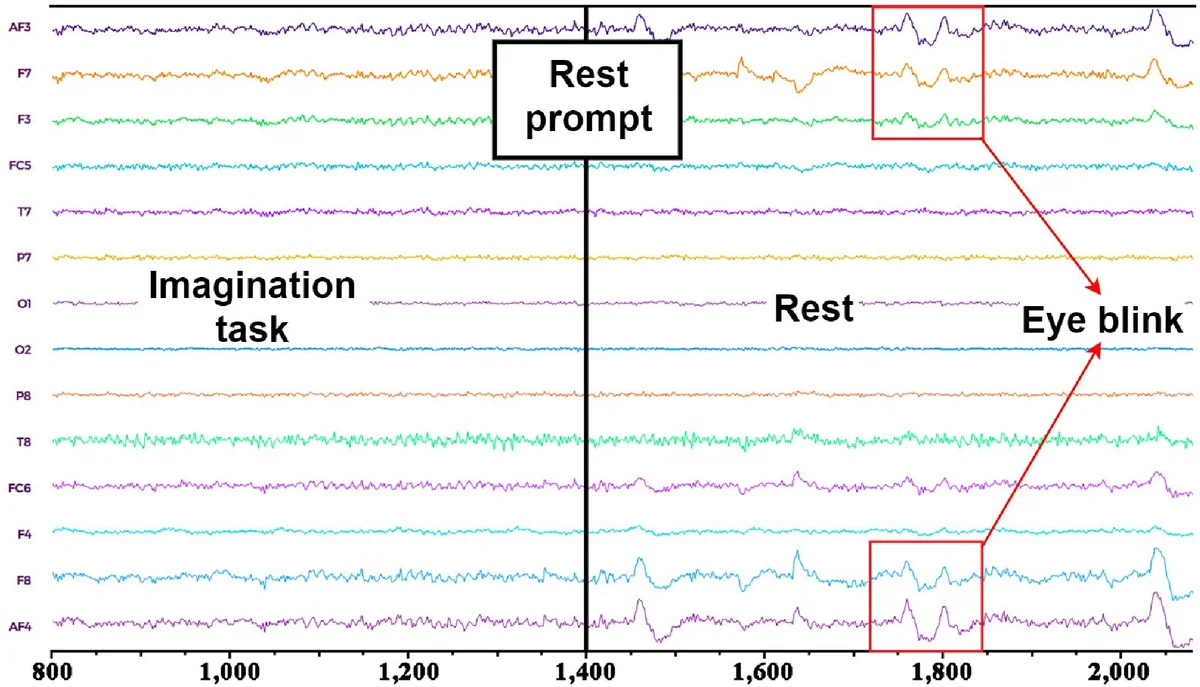

A Brain-Computer Interface (BCI) is a system that enables humans to communicate with or control the outside world using brain intentions alone. Electroencephalography (EEG) based BCIs are promising solutions because their instruments are convenient and portable. Motor imagery EEG (MI-EEG) is among the most widely studied kinds of EEG signal; it reveals a subject's movement intentions without actual actions. Despite extensive research on MI-EEG in recent years, interpreting EEG signals effectively remains challenging because of the heavy noise in EEG signals (e.g., low signal-to-noise ratio and incomplete EEG signals) and the difficulty of capturing the inconspicuous relationships between EEG signals and specific brain activities. Most existing works either treat EEG as chain-like sequences, neglecting complex dependencies between adjacent signals, or perform simple temporal averaging over EEG sequences. In this paper, we introduce both cascade and parallel convolutional recurrent neural network models for precisely identifying human intended movements by effectively learning compositional spatio-temporal representations of raw EEG streams. The proposed models capture the spatial correlations between physically neighboring EEG signals by converting the chain-like EEG sequences into a 2D mesh-like hierarchy. An LSTM-based recurrent network extracts the subtle temporal dependencies of EEG data streams. Extensive experiments on a large-scale MI-EEG dataset (108 subjects, 3,145,160 EEG records) demonstrate that both models achieve high accuracy near 98.3% and outperform a set of baseline methods and most recent deep learning based EEG recognition models, yielding a significant accuracy increase of 18% in the cross-subject validation scenario.

💡 Research Summary

This paper tackles the persistent challenges in motor‑imagery EEG (MI‑EEG) based brain‑computer interfaces (BCIs): low signal‑to‑noise ratio, missing channel readings, and the difficulty of capturing subtle spatial‑temporal dependencies. The authors propose two end‑to‑end deep learning architectures—Cascade and Parallel convolutional‑recurrent neural networks (CNN‑RNNs)—that operate directly on raw EEG streams without extensive preprocessing.

First, the raw 1‑D EEG vectors are reshaped into 2‑D “meshes” according to the physical layout of the electrode cap. This conversion preserves the spatial relationships among neighboring electrodes; mesh positions that have no corresponding electrode are padded with zeros, and the non‑zero entries are then Z‑score normalized. A sliding window with 50 % overlap segments the mesh sequence into fixed‑length clips, each containing S meshes.
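The preprocessing just described can be illustrated with a minimal NumPy sketch. The 3×3 `LAYOUT` for a five-electrode cap, the per-mesh normalization, and the helper names `to_mesh` and `sliding_clips` are assumptions made for illustration; the real electrode map and window length S are not given in this summary.

```python
import numpy as np

# Hypothetical 3x3 layout for a toy 5-electrode cap: each entry is the index of
# the electrode in the raw 1-D vector, or -1 where the cap has no electrode.
LAYOUT = np.array([
    [-1,  0, -1],
    [ 1,  2,  3],
    [-1,  4, -1],
])

def to_mesh(reading, layout=LAYOUT):
    """Place one 1-D reading onto the 2-D grid, zero-padding empty cells."""
    mesh = np.zeros(layout.shape, dtype=float)
    present = layout >= 0
    values = reading[layout[present]]
    # Z-score only the cells that hold a real electrode; padding stays 0.
    # (Assumption: statistics are computed per mesh.)
    std = values.std()
    mesh[present] = (values - values.mean()) / (std if std > 0 else 1.0)
    return mesh

def sliding_clips(meshes, window, overlap=0.5):
    """Cut a (T, H, W) mesh sequence into overlapping clips of `window` meshes."""
    step = max(1, int(window * (1 - overlap)))
    starts = range(0, len(meshes) - window + 1, step)
    return np.stack([meshes[s:s + window] for s in starts])

rng = np.random.default_rng(0)
raw = rng.normal(size=(20, 5))             # 20 time steps, 5 electrodes
meshes = np.stack([to_mesh(r) for r in raw])
clips = sliding_clips(meshes, window=8)    # S = 8, so the step is 4 meshes
print(meshes.shape, clips.shape)
```

With 50 % overlap, consecutive clips share half of their meshes, so every clip after the first starts at the midpoint of the previous one.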

The Cascade model processes each mesh with a three‑layer 2‑D CNN (3×3 kernels, 32→64→128 feature maps) followed by a fully‑connected layer that yields a 1024‑dimensional spatial feature vector per time step. The resulting sequence of spatial vectors is fed into two stacked LSTM layers; only the hidden state of the final time step is passed to a fully‑connected layer and a softmax classifier. This sequential pipeline first refines spatial information before modeling temporal dynamics, which is advantageous for capturing sustained intention patterns.
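To make the data flow of the cascade design concrete, here is a forward-pass-only NumPy sketch with random weights. It is not the authors' implementation: the mesh size, window length, LSTM width, number of classes, 'same' padding, ReLU activations, and the absence of biases, pooling and dropout are all assumptions, and the final fully-connected layer and softmax are folded into a single projection.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

rng = np.random.default_rng(0)

def conv3x3_relu(x, w):
    """'Same'-padded 3x3 convolution + ReLU. x: (C_in, H, W), w: (C_out, C_in, 3, 3)."""
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))
    patches = sliding_window_view(padded, (3, 3), axis=(1, 2))  # (C_in, H, W, 3, 3)
    return np.maximum(np.einsum("chwij,ocij->ohw", patches, w), 0.0)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_layer(xs, w, hidden):
    """Bias-free LSTM over xs (T, D); w: (D + hidden, 4 * hidden). Returns (T, hidden)."""
    h, c, outputs = np.zeros(hidden), np.zeros(hidden), []
    for x in xs:
        i, f, g, o = np.split(np.concatenate([x, h]) @ w, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        outputs.append(h)
    return np.stack(outputs)

def init(*shape):
    return rng.normal(scale=0.05, size=shape)

H, W, S, HIDDEN, CLASSES = 5, 5, 6, 64, 5     # illustrative sizes (assumed)
convs = [init(32, 1, 3, 3), init(64, 32, 3, 3), init(128, 64, 3, 3)]
fc_spatial = init(128 * H * W, 1024)          # flattened maps -> 1024-d vector
lstm1, lstm2 = init(1024 + HIDDEN, 4 * HIDDEN), init(2 * HIDDEN, 4 * HIDDEN)
fc_out = init(HIDDEN, CLASSES)

def cascade_forward(clip):
    """clip: (S, H, W) meshes -> class probabilities of shape (CLASSES,)."""
    feats = []
    for mesh in clip:
        x = mesh[None]                        # add channel axis: (1, H, W)
        for w in convs:
            x = conv3x3_relu(x, w)            # 32 -> 64 -> 128 feature maps
        feats.append(x.reshape(-1) @ fc_spatial)
    seq = lstm_layer(lstm_layer(np.stack(feats), lstm1, HIDDEN), lstm2, HIDDEN)
    logits = seq[-1] @ fc_out                 # only the final time step is used
    e = np.exp(logits - logits.max())
    return e / e.sum()

probs = cascade_forward(rng.normal(size=(S, H, W)))
print(probs.shape, probs.sum())
```

The key structural point is the ordering: each mesh is reduced to a spatial vector first, and the LSTM only ever sees the sequence of those vectors.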

The Parallel model shares the same CNN for spatial extraction but simultaneously feeds the original 1‑D electrode vectors into a separate two‑layer LSTM stream. The temporal hidden state from the LSTM is passed through a fully‑connected layer, and the resulting temporal feature vector is concatenated with the CNN‑derived spatial feature vector. A final fully‑connected layer and softmax produce the class probabilities. Because the spatial and temporal representations are learned in parallel rather than in sequence, the two feature vectors are combined only at this final fusion stage.
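The fusion step of the parallel design can be sketched as follows. To keep the example short, both branch encoders are placeholders: single linear maps stand in for the CNN and for the two-layer LSTM plus fully-connected layer, and averaging the spatial features over the clip is an assumption. All sizes and names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
S, N_ELECTRODES, H, W, CLASSES = 6, 5, 3, 3, 5   # illustrative sizes (assumed)
D_SPATIAL, D_TEMPORAL = 16, 8                    # illustrative feature widths

# Placeholder encoders: single linear maps standing in for the CNN and for the
# two-layer LSTM + fully-connected layer described in the text.
w_spatial = rng.normal(scale=0.1, size=(H * W, D_SPATIAL))
w_temporal = rng.normal(scale=0.1, size=(N_ELECTRODES, D_TEMPORAL))
w_fuse = rng.normal(scale=0.1, size=(D_SPATIAL + D_TEMPORAL, CLASSES))

def spatial_branch(meshes):
    """(S, H, W) meshes -> one spatial vector (stand-in for the CNN)."""
    return np.tanh(meshes.reshape(len(meshes), -1) @ w_spatial).mean(axis=0)

def temporal_branch(vectors):
    """(S, N) raw 1-D vectors -> one temporal vector (stand-in for the LSTM)."""
    return np.tanh(vectors[-1] @ w_temporal)

def parallel_forward(meshes, vectors):
    """Encode both views independently, concatenate, then classify."""
    fused = np.concatenate([spatial_branch(meshes), temporal_branch(vectors)])
    logits = fused @ w_fuse                      # final fully-connected layer
    e = np.exp(logits - logits.max())            # numerically stable softmax
    return fused, e / e.sum()

fused, probs = parallel_forward(rng.normal(size=(S, H, W)),
                                rng.normal(size=(S, N_ELECTRODES)))
print(fused.shape, probs.shape)
```

Unlike the cascade design, neither branch consumes the other's output; the two representations meet only at the concatenation.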

Both networks employ dropout after the CNN and final fully‑connected layers, are trained with the Adam optimizer and cross‑entropy loss, and use the same window size S for fair comparison.

Experiments were conducted on a large‑scale MI‑EEG dataset comprising 108 subjects and 3,145,160 EEG records. Both the Cascade and Parallel models reached an accuracy near 98.3 %, outperforming traditional baselines (e.g., CSP‑LDA, SVM) and recent deep models (DeepConvNet, EEGNet). In the cross‑subject validation scenario, where training and testing subjects are disjoint, this amounts to an accuracy increase of about 18 %, indicating strong generalization across individuals.
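A cross-subject split differs from an ordinary random split in that whole subjects, not individual records, are held out. The sketch below shows one way to build such a split; the function name `cross_subject_split`, the 20 % test fraction, and the toy record counts are assumptions, not the protocol used in the paper.

```python
import random

def cross_subject_split(subject_ids, test_fraction=0.2, seed=0):
    """Split record indices so that no subject appears in both train and test."""
    subjects = sorted(set(subject_ids))
    random.Random(seed).shuffle(subjects)
    n_test = max(1, round(len(subjects) * test_fraction))
    test_subjects = set(subjects[:n_test])
    train_idx = [i for i, s in enumerate(subject_ids) if s not in test_subjects]
    test_idx = [i for i, s in enumerate(subject_ids) if s in test_subjects]
    return train_idx, test_idx

# Toy data: 108 subjects with 3 records each (record counts are made up).
subject_ids = [s for s in range(108) for _ in range(3)]
train_idx, test_idx = cross_subject_split(subject_ids)

train_subjects = {subject_ids[i] for i in train_idx}
test_subjects = {subject_ids[i] for i in test_idx}
print(len(train_subjects), len(test_subjects))
```

Because the test subjects never contribute training records, accuracy on the test split measures how well a model transfers to people it has not seen.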

A practical evaluation on a real‑world BCI system with only eight EEG channels further validated the approach: the models recognized five distinct instruction intents with 93 % accuracy, confirming robustness under limited hardware and noisy conditions.

Key contributions include: (1) a novel 2‑D mesh representation that explicitly encodes electrode spatial topology, (2) two complementary CNN‑RNN architectures that jointly learn spatial‑temporal dynamics without handcrafted feature extraction, and (3) extensive empirical evidence of superior performance in both cross‑subject and multi‑class scenarios. Limitations noted are the dependence on a fixed electrode layout for mesh construction and sensitivity to hyper‑parameters such as window length and CNN depth. Future work may explore adaptive mesh generation for heterogeneous caps, model compression for on‑device deployment, and online adaptation mechanisms to further enhance real‑time BCI usability.
