Multiscale Principle of Relevant Information for Hyperspectral Image Classification

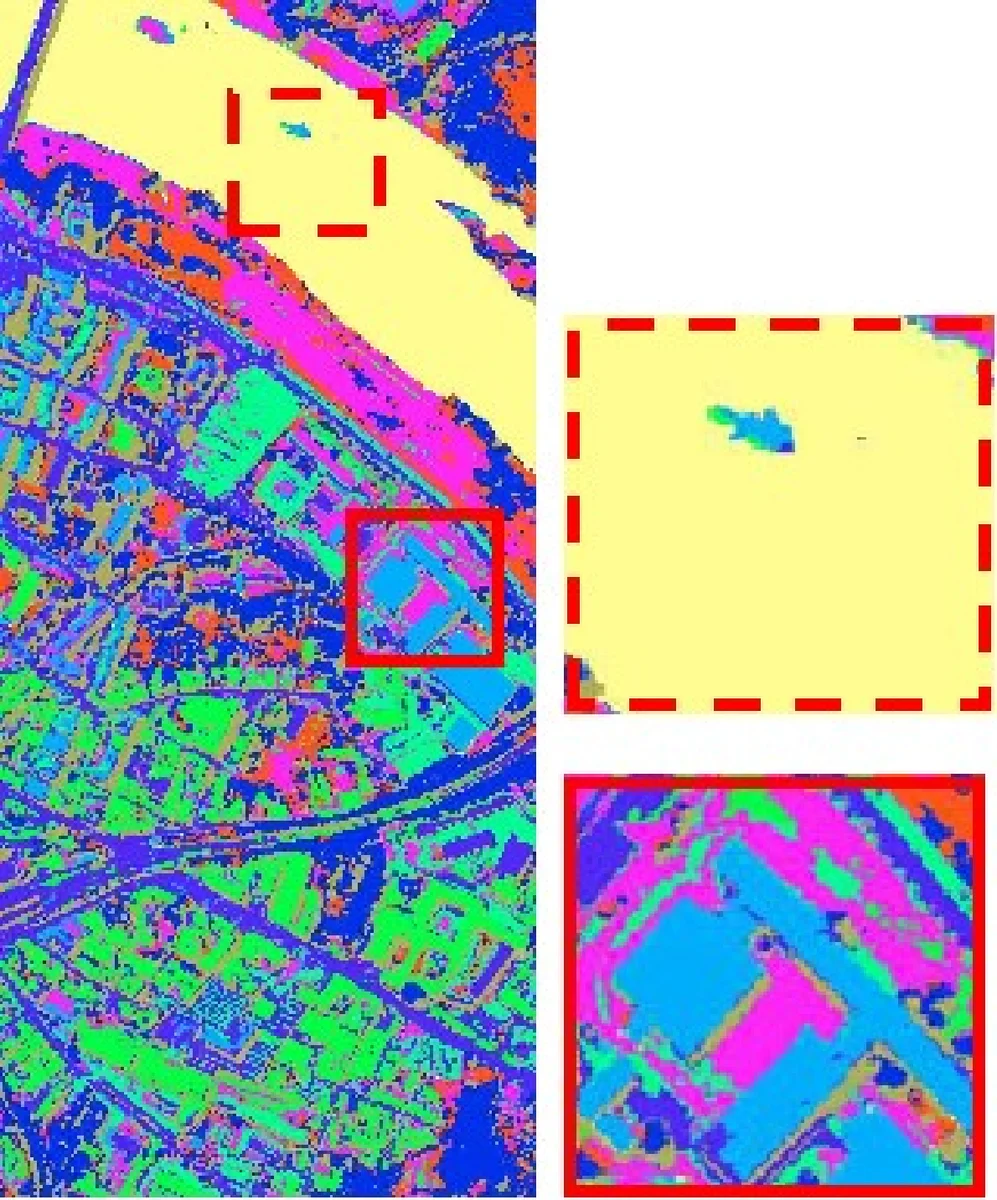

This paper proposes a novel architecture, termed multiscale principle of relevant information (MPRI), to learn discriminative spectral-spatial features for hyperspectral image (HSI) classification. MPRI inherits the merits of the principle of relevant information (PRI) to effectively extract multiscale information embedded in the given data, and also takes advantage of the multilayer structure to learn representations in a coarse-to-fine manner. Specifically, MPRI performs spectral-spatial pixel characterization (using PRI) and feature dimensionality reduction (using regularized linear discriminant analysis) iteratively and successively. Extensive experiments on three benchmark data sets demonstrate that MPRI outperforms existing state-of-the-art methods (including deep learning based ones) qualitatively and quantitatively, especially in the scenario of limited training samples. Code of MPRI is available at http://bit.ly/MPRI_HSI.

💡 Research Summary

The paper introduces a novel framework called Multiscale Principle of Relevant Information (MPRI) for hyperspectral image (HSI) classification. MPRI builds upon the Principle of Relevant Information (PRI), an information‑theoretic method that balances entropy reduction against divergence from the original data using a single hyper‑parameter β. By embedding PRI within a multiscale and multilayer architecture, the authors achieve discriminative spectral‑spatial feature learning that works well even with very few labeled samples.

Core methodology

- PRI formulation – The authors adopt the second‑order (quadratic) Rényi entropy and the Cauchy‑Schwarz (CS) divergence to define the PRI objective: minimize (1‑β)·H₂(f) + 2β·H₂(f; g), where g is the density of the original data, f is the density of the learned representation, and H₂(f; g) is the quadratic cross‑entropy between them. The parameter β controls the trade‑off between regularity (low entropy) and relevance (high similarity to the original data). As β increases from 0 to ∞, the solution passes from the data mean, through cluster centers, to principal curves, and finally back to the raw data.

- Spectral‑spatial feature learning unit – For each pixel, a local 3‑D cube (size n × n × d) is extracted using a sliding window. PRI is applied to the n² spectral vectors in this cube, yielding a new set of vectors Ȳ. The central vector of Ȳ becomes the updated representation of the target pixel. The update is performed iteratively via a fixed‑point equation obtained by setting the gradient of the PRI objective to zero (Eq. 12 in the paper).

- Multiscale processing – Several window sizes (n = 3, 5, 7, 9, 11, 13) are used in parallel, allowing the model to capture both fine‑grained local structures and broader contextual information. The concatenated multiscale PRI outputs are high‑dimensional, so a regularized Linear Discriminant Analysis (LDA) step follows each PRI iteration to reduce redundancy and maximize class separability.

- Multilayer architecture – Multiple feature‑learning units are stacked. The output of layer i becomes the input for layer i + 1, and the same PRI‑LDA pipeline is repeated. Unlike conventional deep neural networks, training proceeds layer‑by‑layer without any back‑propagation; each layer is optimized independently using the fixed‑point update. After the final layer, the concatenated features from all layers are fed to a simple k‑Nearest Neighbors (KNN) classifier.
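The PRI step and the per‑pixel multiscale unit described above can be sketched in NumPy. This is an illustrative sketch, not the authors' released code. It assumes a Gaussian Parzen estimate of the quadratic entropy terms with a kernel width `sigma` (a parameter the summary does not mention), and it derives the fixed‑point update by setting the gradient of (1‑β)·H₂ + 2β·(cross‑entropy) to zero, which may differ in detail from Eq. 12 of the paper. All function names are hypothetical, and the regularized LDA and KNN stages are omitted.

```python
import numpy as np

def gaussian_gram(a, b, sigma):
    """Pairwise Gaussian kernel values between the rows of a and the rows of b."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / (2.0 * sigma ** 2))

def pri_objective(x, o, beta, sigma):
    """Parzen estimate of (1 - beta) * H2(X) + 2 * beta * H2(X; O), up to constants."""
    v_x = gaussian_gram(x, x, sigma).mean()    # information potential of X
    v_xo = gaussian_gram(x, o, sigma).mean()   # cross information potential
    return -(1.0 - beta) * np.log(v_x) - 2.0 * beta * np.log(v_xo)

def pri_fixed_point(o, beta, sigma, n_iter=10):
    """Iterate the fixed-point update starting from the original vectors o (beta > 0)."""
    o = np.asarray(o, dtype=float)
    x = o.copy()
    for _ in range(n_iter):
        k_xx = gaussian_gram(x, x, sigma)
        k_xo = gaussian_gram(x, o, sigma)
        c = k_xo.mean() / k_xx.mean()
        w = c * (1.0 - beta) / beta            # weight of the entropy term; 0 when beta = 1
        pull_to_data = k_xo @ o
        self_term = k_xx @ x - k_xx.sum(axis=1, keepdims=True) * x
        x = (pull_to_data + w * self_term) / k_xo.sum(axis=1, keepdims=True)
    return x

def pixel_feature(image, row, col, n, beta, sigma, n_iter=3):
    """Updated representation of pixel (row, col) from its n x n x d cube (n odd)."""
    r = n // 2
    # Reflect-pad so border pixels also get a full window (padded per call for brevity).
    padded = np.pad(image, ((r, r), (r, r), (0, 0)), mode="reflect")
    cube = padded[row:row + n, col:col + n, :]
    vectors = cube.reshape(n * n, -1)
    updated = pri_fixed_point(vectors, beta, sigma, n_iter)
    return updated[(n * n) // 2]               # central vector of the window

def multiscale_feature(image, row, col, scales=(3, 5, 7), beta=1.0, sigma=1.0, n_iter=3):
    """Concatenate the per-scale central vectors for one pixel."""
    return np.concatenate(
        [pixel_feature(image, row, col, n, beta, sigma, n_iter) for n in scales]
    )
```

With β = 1 the entropy weight `w` vanishes and the update reduces to Gaussian mean shift toward the modes of the original data, which matches the "cluster centers" regime mentioned above. For very large β the original data is (approximately) a fixed point of the update. In a full pipeline the concatenated vector from `multiscale_feature` would then go through dimensionality reduction before being passed to the next layer.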

Experimental validation

The authors evaluate MPRI on three widely used HSI benchmarks: Pavia University, Indian Pines, and Salinas. They vary the number of labeled training samples per class (10, 20, 30, 50) to simulate the limited‑sample regime common in remote sensing. Competing methods include classical approaches (PCA‑EPF, HIFI, Joint Sparse Representation, Spatial‑Aware Dictionary Learning) and deep learning models (SAE‑LR, 3D‑CNN).
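A limited‑sample split of this kind can be produced by drawing a fixed number of labeled pixels per class and testing on the rest. The helper below is a minimal sketch under stated assumptions: `sample_per_class` is a hypothetical name, `labels` is assumed to hold only labeled pixels (background already removed), and a class with fewer pixels than requested contributes all of them to training. The paper's exact sampling protocol may differ.

```python
import numpy as np

def sample_per_class(labels, n_per_class, seed=0):
    """Return (train_idx, test_idx) with at most n_per_class training samples per class."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    picked = []
    for c in np.unique(labels):
        idx = np.flatnonzero(labels == c)
        k = min(n_per_class, idx.size)         # small classes: take everything available
        picked.append(rng.choice(idx, size=k, replace=False))
    train_idx = np.sort(np.concatenate(picked))
    test_idx = np.setdiff1d(np.arange(labels.size), train_idx)
    return train_idx, test_idx
```

Repeating the split with several seeds and averaging the scores is a common way to reduce the variance that such small training sets introduce.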

Results show that MPRI consistently outperforms all baselines in Overall Accuracy (OA), Average Accuracy (AA), and Kappa coefficient. The performance gap is especially pronounced when training data are scarce; MPRI achieves near‑state‑of‑the‑art accuracy with only 10–20 samples per class, whereas deep models degrade sharply under the same conditions. Sensitivity analysis indicates that β values in the range 0.3–0.7 and a depth of 3–4 layers yield the best trade‑off between accuracy and computational cost.
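For reference, the three reported metrics can all be computed from a confusion matrix. The sketch below uses the standard definitions: OA is the fraction of correctly classified samples, AA is the mean of the per‑class recalls, and Kappa corrects OA for chance agreement. The function name is hypothetical, labels are assumed to be integers in 0..n_classes‑1, and every class is assumed to appear at least once in `y_true`.

```python
import numpy as np

def classification_scores(y_true, y_pred, n_classes):
    """Return (overall accuracy, average accuracy, Cohen's kappa)."""
    cm = np.zeros((n_classes, n_classes), dtype=np.int64)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)  # rows: truth, cols: prediction
    total = cm.sum()
    oa = np.trace(cm) / total
    aa = (np.diag(cm) / cm.sum(axis=1)).mean()                  # mean per-class recall
    chance = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / total ** 2
    kappa = (oa - chance) / (1.0 - chance)
    return oa, aa, kappa
```

For example, with `y_true = [0, 0, 0, 0, 1, 1]` and `y_pred = [0, 0, 0, 1, 1, 1]`, OA is 5/6, AA is 0.875, and Kappa is 2/3. AA is the metric most sensitive to small classes, which is why it is usually reported alongside OA on imbalanced scenes.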

Key contributions and implications

- Demonstrates that PRI, originally a theoretical construct, can be effectively applied to 3‑D HSI data to extract hierarchical, discriminative features.

- Introduces a multiscale strategy that jointly models local and global spatial contexts without requiring handcrafted filters or large convolutional kernels.

- Proposes a deep‑like architecture that avoids back‑propagation, reducing training complexity and making the method robust to limited labeled data.

- Provides extensive empirical evidence that information‑theoretic feature learning can rival or surpass deep learning methods in HSI classification, especially in low‑sample scenarios.

In summary, MPRI offers a principled, efficient, and highly accurate solution for hyperspectral image classification, opening new avenues for information‑theoretic approaches in remote sensing and suggesting that deep architectures need not rely exclusively on gradient‑based training to achieve state‑of‑the‑art performance.
