d-blink: Distributed End-to-End Bayesian Entity Resolution

Entity resolution (ER; also known as record linkage or de-duplication) is the process of merging noisy databases, often in the absence of unique identifiers. A major advancement in ER methodology has been the application of Bayesian generative models, which provide a natural framework for inferring latent entities with rigorous quantification of uncertainty. Despite these advantages, existing models are severely limited in practice, as standard inference algorithms scale quadratically in the number of records. While scaling can be managed by fitting the model on separate blocks of the data, such a naive approach may induce significant error in the posterior. In this paper, we propose a principled model for scalable Bayesian ER, called “distributed Bayesian linkage” or d-blink, which jointly performs blocking and ER without compromising posterior correctness. Our approach relies on several key ideas, including: (i) an auxiliary variable representation that induces a partition of the entities and records into blocks; (ii) a method for constructing well-balanced blocks based on k-d trees; (iii) a distributed partially-collapsed Gibbs sampler with improved mixing; and (iv) fast algorithms for performing Gibbs updates. Empirical studies on six data sets, including a case study on the 2010 Decennial Census, demonstrate the scalability and effectiveness of our approach.

💡 Research Summary

The paper introduces d‑blink (Distributed Bayesian Linkage), a scalable Bayesian entity resolution (ER) framework that integrates probabilistic blocking directly into the Bayesian model, thereby preserving posterior correctness while achieving near‑linear computational complexity. Traditional Bayesian ER models, such as the original blink, require all‑pair comparisons, leading to O(N²) time and making them infeasible for large databases. Existing work often resorts to deterministic blocking as a preprocessing step; however, this breaks the Bayesian pipeline, prevents uncertainty propagation, and can severely degrade matching accuracy if the blocking design is suboptimal.
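To make the quadratic bottleneck concrete, the following minimal sketch counts candidate record pairs with and without blocking. It only illustrates pair counting and is not a model of d‑blink's actual sampler cost; the function names and the evenly sized example blocks are assumptions made for illustration.

```python
from collections import Counter


def all_pairs(n: int) -> int:
    """Number of unordered record pairs among n records: n * (n - 1) / 2."""
    return n * (n - 1) // 2


def blocked_pairs(block_of_record: list[int]) -> int:
    """Number of unordered record pairs that fall inside the same block."""
    sizes = Counter(block_of_record)
    return sum(all_pairs(size) for size in sizes.values())


# Hypothetical example: 1,000 records spread evenly over 10 blocks.
assignments = [i % 10 for i in range(1000)]

print(all_pairs(1000))             # 499500
print(blocked_pairs(assignments))  # 49500
```

With equally sized blocks, the within-block pair count shrinks roughly in proportion to the number of blocks, which is why block balance matters for the speed-up.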

Key contributions

- Auxiliary‑variable representation – The authors introduce a discrete latent variable z that assigns records (and latent entities) to blocks. Unlike deterministic blocking, z is treated as a random variable and inferred jointly with all other model parameters. They prove that marginalizing over z yields the same posterior as the original blink model, guaranteeing that the introduction of probabilistic blocks does not bias inference.

- Balanced block construction via k‑d trees – To obtain well‑balanced workloads across a distributed environment, the method recursively partitions the multi‑dimensional attribute space using a k‑d tree. This yields blocks of roughly equal size while keeping similar records together, which improves both load balancing and the probability that true matches lie in the same block.

-

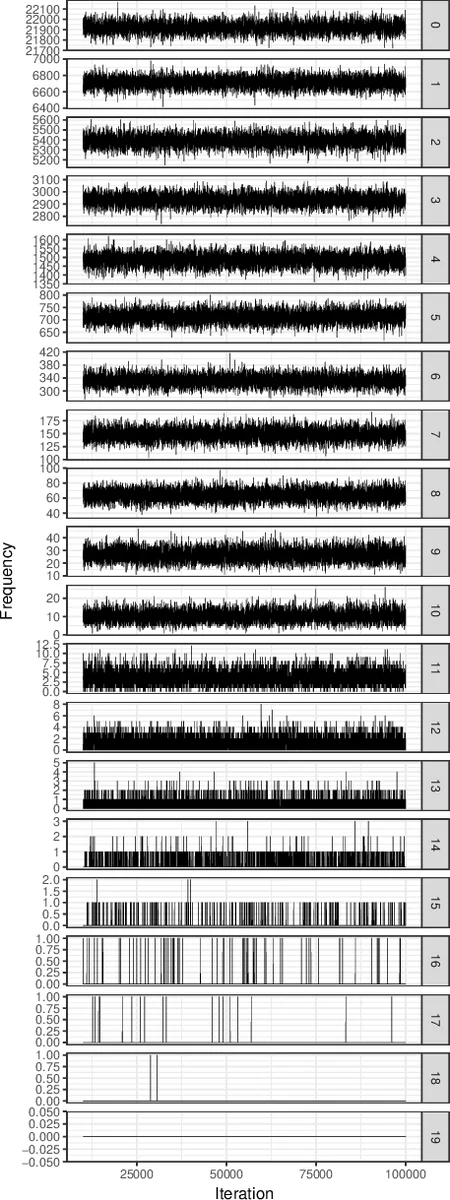

Distributed partially‑collapsed Gibbs sampler – Standard Gibbs updates for ER suffer from poor mixing because each update only moves a single record or entity. d‑blink collapses out the block‑level parameters when updating entity assignments, resulting in a partially‑collapsed sampler that reduces autocorrelation and accelerates convergence. The block assignments themselves are updated conditionally, enabling parallel Gibbs steps across blocks.

- Algorithmic accelerations – Within each block, the authors employ indexing structures (hash tables, bitmaps) and a novel perturbation‑sampling scheme to avoid exhaustive pairwise comparisons. These tricks bring the per‑iteration cost down to near‑linear in the number of records per block.

- Open‑source Spark implementation – The entire pipeline is packaged as an Apache Spark library with an R front‑end, allowing practitioners to run d‑blink on commodity clusters without deep knowledge of MCMC.
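The balanced‑block idea from the k‑d tree contribution above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it treats each item as a tuple of numeric attribute values and splits at the median while cycling through the attributes, whereas real ER attributes are often categorical or string‑valued. The name `kd_partition` and the random example data are hypothetical.

```python
import random


def kd_partition(points, depth, axis=0):
    """Recursively split points at the median, giving up to 2**depth blocks.

    Splitting at the median keeps the two halves within one item of each
    other in size, so the resulting leaf blocks are well balanced.
    """
    if depth == 0 or len(points) <= 1:
        return [points]
    dims = len(points[0])
    ordered = sorted(points, key=lambda p: p[axis])
    mid = len(ordered) // 2
    next_axis = (axis + 1) % dims  # cycle through the attributes
    return (kd_partition(ordered[:mid], depth - 1, next_axis)
            + kd_partition(ordered[mid:], depth - 1, next_axis))


# Hypothetical data: 16 items with two numeric attributes each.
rng = random.Random(0)
points = [(rng.random(), rng.random()) for _ in range(16)]

blocks = kd_partition(points, depth=2)
sizes = [len(block) for block in blocks]
print(sizes)  # [4, 4, 4, 4]
```

Because every split is a median split, the block sizes depend only on the number of items and the tree depth, not on how the attribute values are distributed.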

Empirical evaluation – Six datasets are used, ranging from synthetic benchmarks to three real‑world collections and a large case study linking 2010 U.S. Census records with state administrative data (over 2 million records). Compared with the original blink, d‑blink achieves speed‑ups of 300× or more while maintaining or improving F1 scores. The case study demonstrates that probabilistic blocking does not sacrifice matching quality; instead, it yields a well‑calibrated posterior that can be directly fed into downstream statistical analyses (e.g., regression on linked data).
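For readers unfamiliar with how F1 is typically computed for ER, here is a minimal sketch of a pairwise F1 score between a predicted and a true clustering. The summary does not state which F1 variant was used, so the pairwise definition is an assumption, and the function names and toy labels are hypothetical. The sketch enumerates all pairs, so it is suitable only for small examples.

```python
from itertools import combinations


def linked_pairs(cluster_ids):
    """Set of record-index pairs that are placed in the same cluster."""
    return {
        (i, j)
        for i, j in combinations(range(len(cluster_ids)), 2)
        if cluster_ids[i] == cluster_ids[j]
    }


def pairwise_f1(predicted, truth):
    """Harmonic mean of pairwise precision and recall."""
    pred, true = linked_pairs(predicted), linked_pairs(truth)
    tp = len(pred & true)  # pairs linked in both clusterings
    precision = tp / len(pred) if pred else 1.0
    recall = tp / len(true) if true else 1.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Toy example: five records, cluster labels per record.
truth = [0, 0, 0, 1, 1]
predicted = [0, 0, 1, 1, 1]
print(pairwise_f1(predicted, truth))  # 0.5
```

In the toy example each clustering links four pairs and two of them agree, so precision and recall are both 0.5.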

Implications – d‑blink resolves the long‑standing tension between Bayesian rigor and scalability in ER. By embedding blocking as a random component, it enables full uncertainty propagation from the blocking stage through to final entity assignments, a feature absent in most large‑scale linkage pipelines. The partially‑collapsed Gibbs sampler and block‑wise parallelism make the approach practical for modern big‑data environments. Moreover, the methodological ideas—auxiliary‑variable partitioning, k‑d‑tree balancing, and distributed collapsed sampling—are potentially transferable to other Bayesian clustering problems, such as micro‑clustering or mixture models where scalability is a bottleneck.

In summary, d‑blink offers a principled, efficient, and publicly available solution for end‑to‑end Bayesian entity resolution on massive datasets, bridging the gap between statistical theory and real‑world data integration needs.
