Optimising Optimisers with Push GP

This work uses Push GP to automatically design both local and population-based optimisers for continuous-valued problems. The optimisers are trained on a single function optimisation landscape, using random transformations to discourage overfitting. They are then tested for generality on larger versions of the same problem, and on other continuous-valued problems. In most cases, the optimisers generalise well to the larger problems. Surprisingly, some of them also generalise very well to previously unseen problems, outperforming existing general purpose optimisers such as CMA-ES. Analysis of the behaviour of the evolved optimisers indicates a range of interesting optimisation strategies that are not found within conventional optimisers, suggesting that this approach could be useful for discovering novel and effective forms of optimisation in an automated manner.

Research Summary

This paper investigates the automatic design of optimisation algorithms using Push Genetic Programming (Push GP), a system that evolves programs written in the Turing‑complete Push language. Push provides typed stacks (boolean, integer, float, vector) and an execution stack, allowing evolved programs to maintain internal state across calls without fragile indexed memory. By extending Push with a vector type and adding specialised instructions (e.g., vector.current, vector.best), the authors enable the evolution of both local and population‑based optimisers. Each individual in a population runs its own copy of the evolved Push program; the program receives the current search point, its objective value, and feedback about improvement, and returns the next point. Because stacks persist between calls, the program can accumulate memory, implement conditional logic, and share information with other individuals via the new vector instructions, yielding swarm‑like behaviour without imposing any pre‑defined swarm structure.
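To make the typed-stack idea concrete, here is a minimal sketch in Python rather than Push. The `PushState` class, the `float_add` instruction, and the `run` helper are hypothetical names chosen for illustration; they are not the authors' interpreter. The sketch shows only two properties mentioned above: one stack per type, and state that persists between calls. It also follows the usual Push convention that an instruction with too few arguments does nothing.

```python
from collections import defaultdict


class PushState:
    """Toy typed-stack state: one stack per type, kept between calls."""

    def __init__(self):
        self.stacks = defaultdict(list)

    def push(self, type_name, value):
        self.stacks[type_name].append(value)

    def pop(self, type_name):
        return self.stacks[type_name].pop()


def float_add(state):
    """Hypothetical instruction: replace the top two floats with their sum."""
    if len(state.stacks["float"]) < 2:
        return  # too few arguments: the instruction is a no-op
    b = state.pop("float")
    a = state.pop("float")
    state.push("float", a + b)


def run(program, state):
    """Execute a flat program: callables are instructions, tuples are literals."""
    for item in program:
        if callable(item):
            item(state)
        else:
            type_name, value = item
            state.push(type_name, value)


state = PushState()
run([("float", 1.5), ("float", 2.0), float_add], state)  # float stack: [3.5]
run([float_add], state)                                   # one float only: no-op
run([("float", 0.5), float_add], state)                   # reuses the stored 3.5
```

Because the same `state` object is passed to every call, the value left behind by the first call is still available to the third, which is the kind of memory the evolved optimisers can exploit.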

Training draws on five functions from the CEC 2005 real-valued benchmark suite: the sphere function (F1), Rastrigin (F9), Schwefel's Problem 2.13 (F12), a Griewank-Rosenbrock composition (F13), and a Schaffer F6 variant (F14). Each optimiser is trained on the 10-dimensional version of a single function, with a budget of 1 000 function evaluations (FEs) per run. To prevent over-fitting to a particular instance, each dimension of the function is randomly transformed during training: translations of up to ±50 % of the axis range, scalings between 50 % and 200 %, and independent axis flips with 50 % probability. Fitness is the mean best objective value over ten independent runs, each with a different random transformation and initial point.
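The random transformations can be sketched as a wrapper around an objective function. This is an illustrative sketch only: the function name `make_transformed`, the uniform sampling of each parameter, and the order in which translation, scaling, and flipping are composed are all assumptions, since the summary gives only the ranges.

```python
import random


def sphere(x):
    """Unshifted sphere function, used here as a stand-in objective."""
    return sum(v * v for v in x)


def make_transformed(f, dim, lower, upper, rng):
    """Wrap f with a random per-dimension translation, scaling and flip.

    Assumed sampling: shift uniform in +/-50% of the axis range, scale
    uniform in [0.5, 2.0], flip with probability 0.5 per dimension.
    """
    axis_range = upper - lower
    shift = [rng.uniform(-0.5, 0.5) * axis_range for _ in range(dim)]
    scale = [rng.uniform(0.5, 2.0) for _ in range(dim)]
    flip = [-1.0 if rng.random() < 0.5 else 1.0 for _ in range(dim)]

    def transformed(x):
        z = [flip[i] * scale[i] * (x[i] - shift[i]) for i in range(dim)]
        return f(z)

    return transformed, shift


rng = random.Random(0)
training_instance, optimum = make_transformed(sphere, 10, -100.0, 100.0, rng)
```

Drawing a fresh transformation for each training run means an optimiser cannot succeed simply by memorising where the optimum lies or which direction leads to it.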

The evolutionary run uses a population of 200 Push programs, 50 generations, tournament selection (size 5), and a program length limit of 100 instructions. Each evolved program is required to perform a single optimisation move per invocation; an outer loop repeatedly calls the program until the evaluation budget is exhausted. After each move, the program receives feedback (new objective value, a boolean indicating improvement, and the current best point) via the appropriate stacks.
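The single-move-per-call protocol can be sketched for one search point as follows. The names `run_optimiser` and `make_gaussian_move` are hypothetical, a Python dictionary stands in for the persistent Push stacks, and the decision to always move to the new point is an assumption. The Gaussian move is a placeholder, not an evolved program.

```python
import random


def run_optimiser(move, objective, start, budget):
    """Call `move` once per evaluation until the budget is spent."""
    state = {}  # persists between calls, standing in for the Push stacks
    current = list(start)
    current_value = objective(current)
    best, best_value = list(current), current_value
    evaluations = 1
    improved = False
    while evaluations < budget:
        # Feedback supplied to the program: point, value, flag, best point.
        candidate = move(current, current_value, improved, best, state)
        value = objective(candidate)
        evaluations += 1
        improved = value < best_value
        if improved:
            best, best_value = list(candidate), value
        current, current_value = candidate, value
    return best, best_value, evaluations


def make_gaussian_move(rng, sigma=0.3):
    """Placeholder move: perturb the best point with Gaussian noise."""
    def move(current, current_value, improved, best, state):
        state["calls"] = state.get("calls", 0) + 1
        return [b + rng.gauss(0.0, sigma) for b in best]
    return move


def sphere(x):
    return sum(v * v for v in x)


best, best_value, used = run_optimiser(
    make_gaussian_move(random.Random(1)), sphere, [3.0] * 5, budget=1000
)
```

Keeping the loop outside the program means the evolved code only has to decide where to go next; budget accounting and best-point tracking are handled by the harness.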

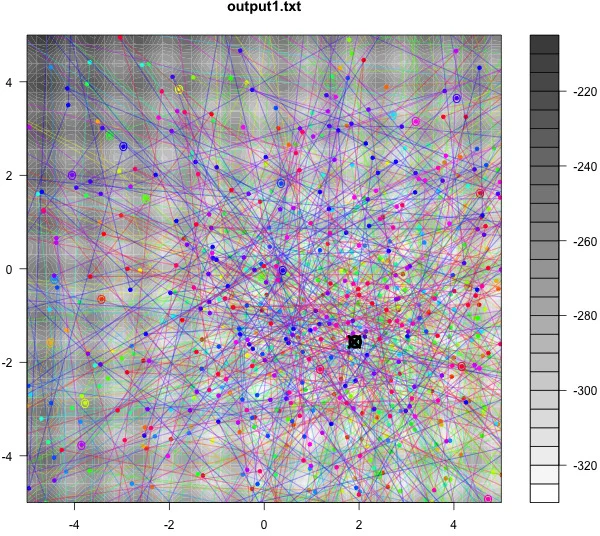

After evolution, the best-of-run optimisers are re-evaluated on the standard CEC 2005 benchmark (25 independent runs, no random transformations) using four splits of the 1 000-FE budget between population size and number of iterations: 50 × 20, 25 × 40, 5 × 200, and 1 × 1000 (the last corresponds to a purely local search). The results show that this split strongly influences performance. Smaller populations with many iterations (e.g., 5 × 200) often achieve lower mean errors on the multimodal functions (F9, F13, F14) than larger populations with fewer iterations. When compared with two strong baselines from the original CEC 2005 competition, G-CMA-ES (a restart-enhanced Covariance Matrix Adaptation Evolution Strategy) and Differential Evolution (DE), the evolved Push optimisers are competitive and, on several functions, outperform both baselines under the same 1 000-FE budget.
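The budget arithmetic can be checked with a small population loop in which every member is evaluated once per iteration, so that population size times iterations equals the number of FEs. The function `run_population`, the initial range of [-5, 5], and the shared-best Gaussian move are illustrative assumptions and not the evolved optimisers; whether the initial points count towards the budget in the original experiments is also an assumption here.

```python
import random


def sphere(x):
    return sum(v * v for v in x)


def run_population(objective, dim, pop_size, iterations, rng):
    """Spend pop_size * iterations evaluations; return (best_value, evaluations)."""
    points = [[rng.uniform(-5.0, 5.0) for _ in range(dim)] for _ in range(pop_size)]
    best, best_value = None, float("inf")
    evaluations = 0
    for _ in range(iterations):
        for p in points:
            value = objective(p)
            evaluations += 1
            if value < best_value:
                best, best_value = list(p), value
        # Placeholder move: each member samples around the shared best point.
        points = [[b + rng.gauss(0.0, 0.5) for b in best] for _ in points]
    return best_value, evaluations


# The four splits from the text all consume the same 1 000-FE budget.
splits = [(50, 20), (25, 40), (5, 200), (1, 1000)]
results = {
    split: run_population(sphere, 10, split[0], split[1], random.Random(0))
    for split in splits
}
```

With a fixed budget, a larger population buys broader initial coverage at the cost of fewer feedback-driven updates per member, which is the trade-off the re-evaluation explores.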

A qualitative analysis of the evolved programs reveals novel optimisation strategies not commonly found in hand‑designed algorithms. First, many programs dynamically adjust step sizes by computing vector differences between the current point and the best‑seen point, then scaling these differences with learned coefficients. Second, the use of vector.best enables implicit sharing of the globally best solution among individuals, producing a swarm‑like attraction without explicit velocity or neighbourhood definitions. Third, conditional logic based on the boolean “improved” flag allows the program to switch between exploratory moves (when no improvement occurs) and exploitative moves (when improvement is detected). These mechanisms collectively give rise to adaptive, feedback‑driven search behaviours that differ from classic mutation‑selection or CMA‑ES update rules.
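The three mechanisms described above can be combined in a hand-written move rule. This is not one of the evolved programs: the function `illustrative_move`, the pull coefficients 0.9 and 0.3, and the step-size factors are hypothetical values chosen only to show how a difference vector, a shared best point, and the "improved" flag might interact.

```python
import random


def illustrative_move(current, swarm_best, improved, state, rng):
    """Hand-written rule mixing the three mechanisms; all constants are made up."""
    step = state.get("step", 1.0)
    if improved:
        # Exploit: shrink the noise and pull strongly towards the shared best.
        step = max(step * 0.5, 1e-3)
        pull = 0.9
    else:
        # Explore: widen the noise and weaken the attraction.
        step = min(step * 1.5, 4.0)
        pull = 0.3
    state["step"] = step  # remembered for the next call
    # Difference vector (swarm_best - current), scaled by a coefficient.
    return [
        c + pull * (b - c) + rng.gauss(0.0, step)
        for c, b in zip(current, swarm_best)
    ]


rng = random.Random(0)
state = {}
next_point = illustrative_move([0.0, 0.0], [1.0, 2.0], True, state, rng)
```

Note that the rule has no explicit velocity or neighbourhood structure; any swarm-like attraction comes solely from every individual reading the same best point.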

The authors conclude that Push GP provides a powerful, low‑bias framework for exploring the vast design space of optimisation algorithms. By evolving programs from a minimal instruction set, the system can discover effective, sometimes superior, strategies without being constrained by existing metaheuristic templates. Future work is suggested in three directions: (1) testing the generality of the evolved optimisers on higher‑dimensional and real‑world problems, (2) analysing the evolved code to extract human‑readable design principles, and (3) integrating the approach with other automated design methods (e.g., grammatical evolution or reinforcement learning) to further broaden the scope of discoverable optimisation techniques.
