Reversible Adversarial Attack based on Reversible Image Transformation

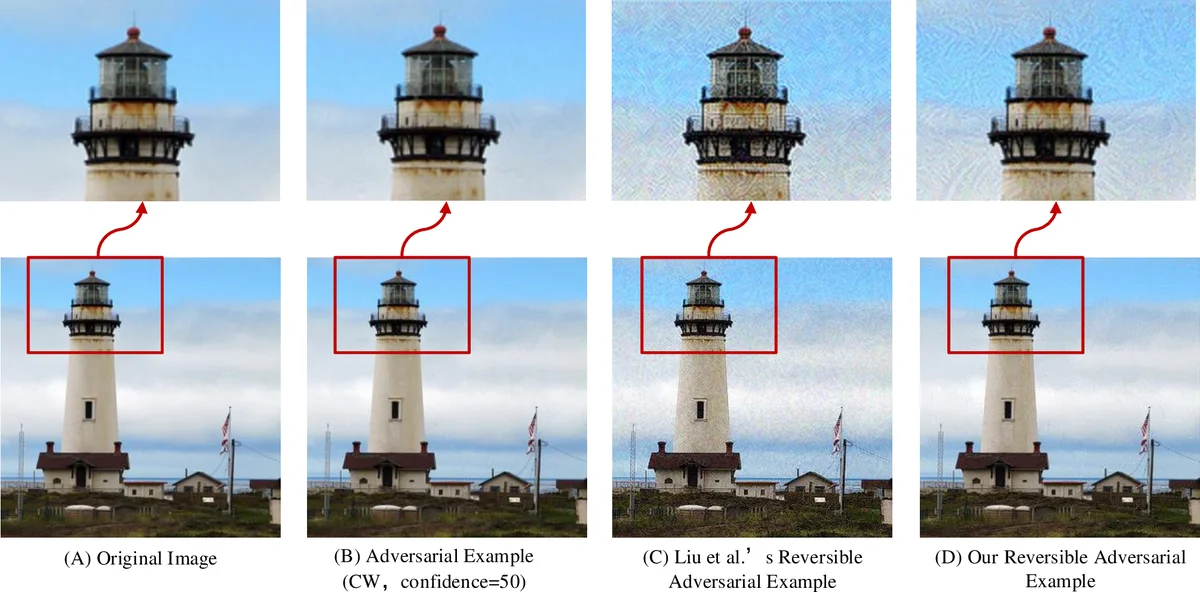

To prevent illegal or unauthorized access to image data such as human faces while ensuring that legitimate users can still use authorization-protected data, reversible adversarial attack techniques have emerged. Reversible adversarial examples (RAE) combine attack capability with reversibility. However, the existing technique cannot meet application requirements because it suffers from serious distortion and failed image recovery when the adversarial perturbations become strong. In this paper, we use the Reversible Image Transformation technique to generate RAE and achieve a reversible adversarial attack. Experimental results show that the proposed RAE generation scheme keeps image distortion imperceptible and that the original image can be reconstructed error-free. Moreover, neither the attack ability nor the image quality is limited by the perturbation amplitude.

💡 Research Summary

The paper addresses a critical limitation of existing reversible adversarial example (RAE) techniques, which rely on reversible data embedding (RDE) to hide adversarial perturbations within the adversarial image. While RDE‑based RAEs can theoretically provide both attack capability and lossless recovery, in practice the embedding capacity of RDE limits the amount of perturbation data that can be stored. When the perturbation strength is increased to improve attack success, the required payload exceeds the embedding capacity, leading to three major problems: (1) incomplete embedding of the perturbation, causing irreversible loss of the original image; (2) severe visual distortion of the reversible adversarial image; and (3) a drop in attack effectiveness due to the distortion.

To overcome these issues, the authors propose a fundamentally different approach that replaces RDE with reversible image transformation (RIT). RIT is a technique that can transform an original image into a camouflage image that visually resembles an arbitrarily chosen target image, while embedding only a small amount of auxiliary information needed to reverse the transformation. The key insight is that the difference between an original image and its adversarial counterpart is usually very small; therefore, the auxiliary data required for reversal is minimal and does not depend on the magnitude of the adversarial perturbation.

The proposed pipeline consists of three steps: (1) generate adversarial examples using standard white‑box attacks (IFGSM, DeepFool, C&W); (2) apply RIT to the original image with the adversarial example as the target, producing a reversible adversarial example (RAE); (3) recover the original image by extracting the embedded auxiliary data and performing the inverse RIT. The RIT process itself is described in detail: the original and target images are divided into blocks, each block’s mean and standard deviation are computed, and blocks with similar standard deviations are paired. For each pair, the mean of the original block is shifted to match the target block’s mean, and the block is rotated (0°, 90°, 180°, or 270°) to minimize the root‑mean‑square error with the target block. The auxiliary information—compressed class‑index table, mean shifts, and rotation directions—is then embedded using a reversible data hiding (RDH) scheme.
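The block-level part of this description can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the authors' implementation: the function names (`split_blocks`, `pair_by_std`, `transform_block`, `inverse_block`) are hypothetical, blocks are paired by the rank of their standard deviation rather than through a compressed class-index table, pixel overflow/underflow handling is ignored, and the RDH embedding of the auxiliary data is left out entirely.

```python
import numpy as np

def split_blocks(img, b):
    """Split a grayscale (H, W) image into non-overlapping b x b blocks, row-major."""
    h, w = img.shape
    assert h % b == 0 and w % b == 0, "image size must be a multiple of the block size"
    return (img.reshape(h // b, b, w // b, b)
               .swapaxes(1, 2)
               .reshape(-1, b, b)
               .astype(np.float64))

def pair_by_std(orig_blocks, target_blocks):
    """Assumed simplification: pair blocks whose standard deviations have the same rank.

    Returns perm such that original block perm[k] is placed at target position k.
    """
    o_order = np.argsort(orig_blocks.std(axis=(1, 2)), kind="stable")
    t_order = np.argsort(target_blocks.std(axis=(1, 2)), kind="stable")
    perm = np.empty(len(t_order), dtype=int)
    perm[t_order] = o_order
    return perm

def transform_block(o, t):
    """Shift the mean of o towards t, then pick the rotation with the lowest RMSE."""
    shift = int(np.rint(t.mean() - o.mean()))  # integer shift keeps the step invertible
    shifted = o + shift
    rmse = [np.sqrt(np.mean((np.rot90(shifted, k) - t) ** 2)) for k in range(4)]
    k = int(np.argmin(rmse))                   # k quarter-turns: 0, 90, 180 or 270 degrees
    return np.rot90(shifted, k), shift, k

def inverse_block(c, shift, k):
    """Undo the rotation and the mean shift using the stored auxiliary values."""
    return np.rot90(c, -k) - shift
```

In this sketch the auxiliary information per block is just the pairing index, the integer `shift`, and the rotation count `k`, which mirrors why the payload depends on the number of blocks rather than on the perturbation magnitude.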

Experimental evaluation uses 5,000 correctly classified ImageNet validation images and a pretrained Inception‑v3 model. Attack parameters are set to realistic values (IFGSM ε ≤ 8/255, C&W L2 learning rate 0.005). The authors compare their RIT‑based RAE with the earlier RDE‑based method across three metrics: (a) image quality (PSNR, SSIM), (b) recovery fidelity (percentage of perfectly recovered images), and (c) attack success rate. Results show that the RIT‑based approach achieves error‑free recovery (0 % error), PSNR values above 45 dB and SSIM around 0.99, regardless of perturbation strength. Visual distortion remains negligible even when the perturbation amplitude is increased, because the auxiliary payload stays constant (≈0.3 bits per pixel). Attack success rates are comparable to or slightly higher than the baseline (IFGSM 92 %, DeepFool 95 %, C&W 90 %). In contrast, the RDE‑based method suffers from increasing distortion and recovery failures as perturbation amplitude grows.
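For readers who want to reproduce the image-quality and recovery metrics, the following is a minimal sketch of PSNR and an exact-recovery check for 8-bit images. The helper names `psnr` and `is_perfectly_recovered` are illustrative assumptions and do not come from the paper; SSIM is omitted because it is more involved.

```python
import numpy as np

def psnr(reference, test, peak=255.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    ref = np.asarray(reference, dtype=np.float64)
    tst = np.asarray(test, dtype=np.float64)
    mse = np.mean((ref - tst) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))

def is_perfectly_recovered(original, recovered):
    """True only if every pixel of the recovered image equals the original."""
    return bool(np.array_equal(np.asarray(original), np.asarray(recovered)))
```

As a point of reference, an image that differs from the reference by exactly one gray level at every pixel has an MSE of 1 and therefore a PSNR of about 48.13 dB.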

The paper also discusses limitations and future work. The current implementation focuses on grayscale images; extending to color images requires careful handling of inter‑channel correlations to avoid color artifacts. Moreover, the RDH scheme used for embedding auxiliary data is not fully optimized; more efficient entropy coding could further reduce payload. Real‑time applications such as video streaming would need GPU‑accelerated block matching and transformation to meet latency constraints.

In conclusion, the authors present a novel RIT‑based framework for generating reversible adversarial examples that decouples attack strength from recovery quality. By transforming the original image into the adversarial image rather than embedding the perturbation, the method eliminates the capacity bottleneck of RDE, delivers high‑fidelity visual quality, and maintains strong adversarial effectiveness. This work opens the door for practical, secure image‑sharing scenarios where authorized users can retrieve pristine data while unauthorized models are thwarted by robust adversarial perturbations. Future research directions include extending the technique to high‑resolution and video data, improving color‑preserving transformations, and integrating more sophisticated reversible data hiding mechanisms.
