Fully Convolutional Network for Removing DCT Artefacts From Images

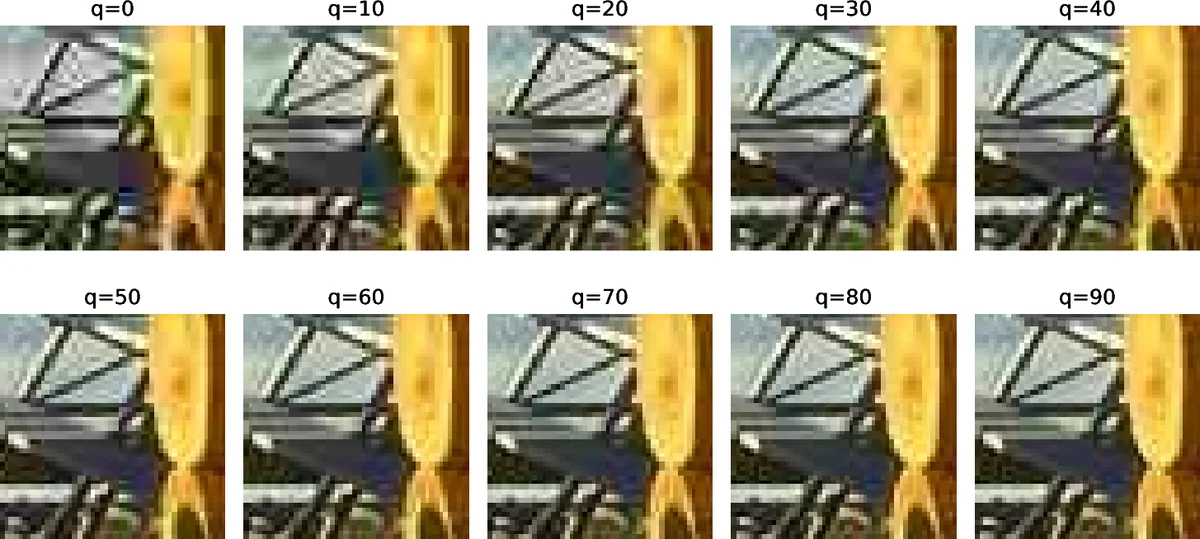

Image compression is one of the essential methods of image processing. Its most prominent advantage is the significant reduction of image size allowing for more efficient storage and transfer. However, lossy compression is associated with the loss of some image details in favor of reducing its size. In compressed images, the deficiencies are manifested by noticeable defects in the form of artifacts; the most common are block artifacts, ringing effect, or blur. In this article, we propose three models of fully convolutional networks with different configurations and examine their abilities in reducing compression artifacts. In the experiments, we research the extent to which the results are improved for models that will process the image in a similar way to the compression algorithm, and whether the initialization with predefined filters would allow for better image reconstruction than developed solely during learning.

💡 Research Summary

The paper addresses the persistent problem of visual artifacts—blockiness, ringing, and blur—that arise from JPEG’s block‑based discrete cosine transform (DCT) compression. The authors propose three fully convolutional network (FCN) architectures designed to exploit the same 8 × 8 DCT processing that JPEG uses. Model 1 incorporates a fixed, non‑learnable DCT layer consisting of 64 filters of size 8 × 8 with a stride of 8, followed by a residual block and several 3 × 3 convolutional layers. Model 2 shares the same overall topology but allows the DCT layer’s weights to be learned during training, testing whether data‑driven adaptation can outperform the canonical DCT basis. Model 3 removes the DCT stage entirely and relies solely on a stack of 3 × 3 convolutions, serving as a baseline FCN. For comparison, the well‑known AR‑CNN architecture (the state‑of‑the‑art JPEG artifact removal network) is used as a reference.

All networks are deliberately shallow (no more than four convolutional layers) to keep computational cost low. Parameter counts are 163 k for Models 1 and 2, 77 k for Model 3, and 106 k for AR‑CNN, demonstrating that the DCT‑augmented models can be competitive in size. Training is performed on the BSDS500 dataset (≈500 natural images of 481 × 321 pixels). Images are compressed at JPEG quality factor q = 60, then split into non‑overlapping 8 × 8 patches that align perfectly with the DCT layer’s receptive field. No additional scaling or preprocessing is applied, ensuring that the DCT filters operate on exactly the same blocks used during compression.

During training the networks converge to an average PSNR of about 32.8 dB on the training set. On the held‑out test set, the results are as follows (average values): Model 1 achieves 32.90 dB PSNR and an SSIM of 0.96–0.98, Model 2 reaches 32.76 dB PSNR with similar SSIM, Model 3 obtains 32.51 dB PSNR, and AR‑CNN records 32.23 dB. The unprocessed JPEG images score 32.05 dB PSNR. In terms of gain over the compressed input, Model 1 improves PSNR by 0.86 dB, Model 2 by 0.72 dB, Model 3 by 0.47 dB, and AR‑CNN by only 0.19 dB. SSIM improvements are modest and nearly identical across all methods, indicating that all approaches effectively suppress block artifacts but differ in how they handle residual noise and fine‑detail preservation.

Qualitative analysis shows that the DCT‑based models excel on images with large uniform regions or shallow depth‑of‑field, where block artifacts are most conspicuous. Conversely, images rich in fine textures (e.g., foliage, rain) suffer from irreversible loss during compression, limiting the achievable PSNR regardless of the network used. The visual examples confirm that Model 1’s fixed DCT filters provide slightly sharper restoration of small details compared with the learned DCT filters of Model 2, supporting the hypothesis that a mathematically exact DCT basis offers a universal prior for JPEG artifact removal.

The authors claim three main contributions: (1) introducing the first neural networks that embed DCT layers, thereby aligning network processing with the compression pipeline; (2) demonstrating that networks with predefined DCT filters can be smaller and marginally more accurate than purely learned FCNs; and (3) showing that such predefined filters are more universal across JPEG images than fully learned convolutional kernels. The work suggests that respecting the underlying transform of a compression algorithm can yield efficient, lightweight models without sacrificing performance. Future directions include extending the approach to variable quality factors, other color spaces, and real‑time video applications where computational budget is critical.

Comments & Academic Discussion

Loading comments...

Leave a Comment