Segmentation of MRI head anatomy using deep volumetric networks and multiple spatial priors

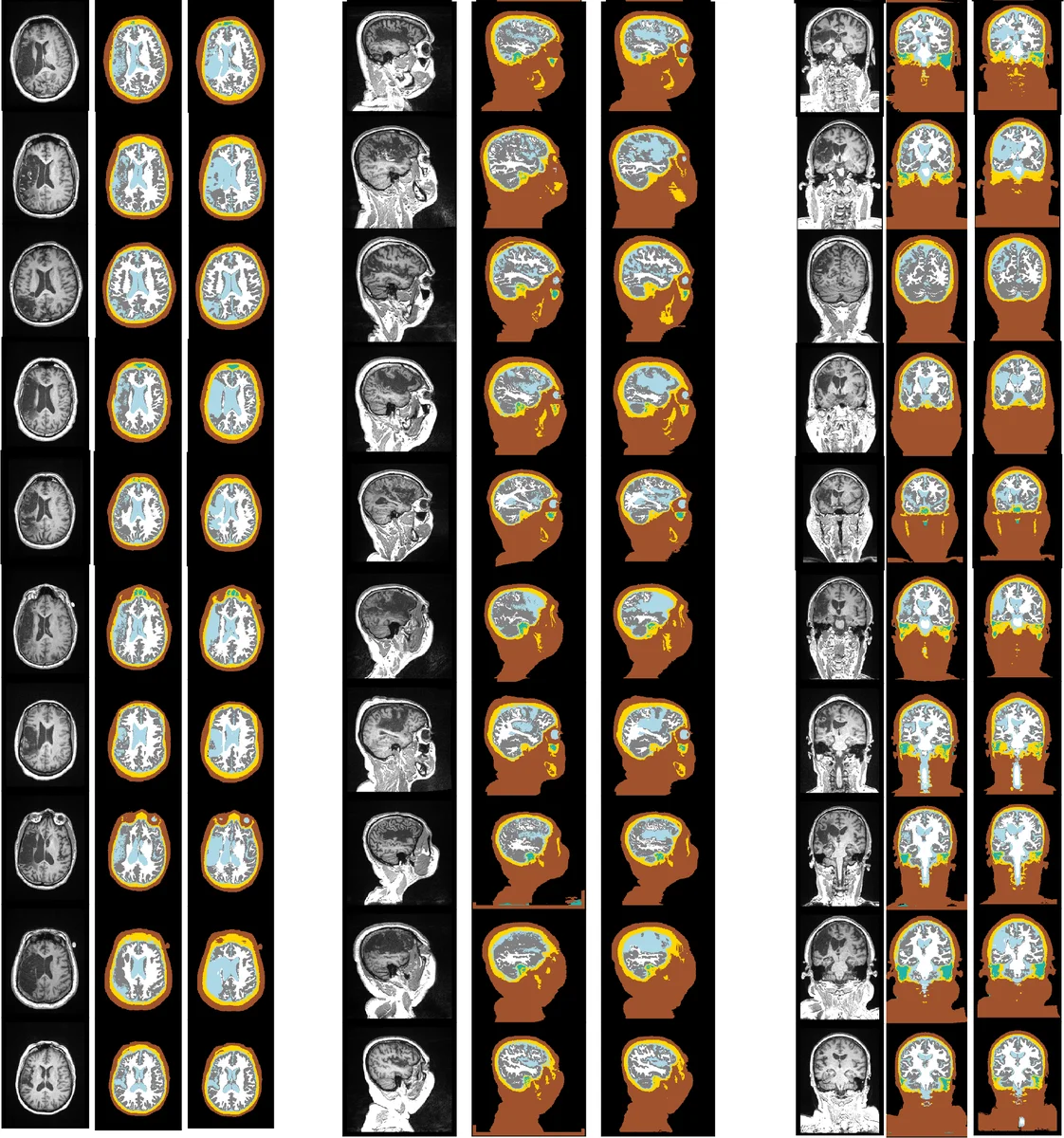

Purpose: Conventional automated segmentation of the head anatomy in MRI distinguishes different brain and non-brain tissues based on image intensities and prior tissue probability maps (TPMs). This works well for normal head anatomies, but fails in the presence of unexpected lesions. Deep convolutional neural networks instead leverage spatial patterns and can learn to segment lesions, but often ignore prior probabilities.

Approach: We add three sources of prior information to a three-dimensional convolutional network, namely, spatial priors with a TPM, morphological priors with conditional random fields, and spatial context with a wider field-of-view at lower resolution. We train and test these networks on 3D images of 43 stroke patients and 4 healthy individuals that have been manually segmented.

Results: We demonstrate the benefits of each source of prior information, and we show that the new architecture, which we call the Multiprior network, improves on the performance of existing segmentation software, such as SPM, FSL, and DeepMedic, for abnormal anatomies. The relevance of the different priors was compared, and the TPM was found to be the most beneficial. The benefit of adding a TPM is generic in that it can boost the performance of established segmentation networks such as DeepMedic and a UNet. We also provide an out-of-sample validation and clinical application of the approach on an additional 47 patients with disorders of consciousness. We make the code and trained networks freely available.

Conclusions: Biomedical images follow imaging protocols that can be incorporated as prior information into deep convolutional neural networks to improve performance. The network segmentations match human manual corrections performed in 3D, and are comparable in performance to human segmentations obtained from scratch in 2D for abnormal brain anatomies.

💡 Research Summary

The paper addresses a fundamental limitation of conventional MRI head‑segmentation tools such as SPM and FSL, which rely heavily on tissue probability maps (TPMs) derived from healthy subjects. While TPMs provide valuable global location priors, they break down when the anatomy is altered by lesions (stroke, tumor, trauma), leading to systematic mis‑classifications. Conversely, modern 3‑D convolutional neural networks (CNNs) like DeepMedic excel at learning complex intensity patterns and can segment lesions, but they typically ignore explicit spatial priors, resulting in anatomically implausible segmentations.

To bridge this gap, the authors propose the “Multiprior” architecture, a fully trainable 3‑D CNN that simultaneously incorporates three complementary spatial priors:

- Location prior via TPM – For each voxel, the six‑class TPM (gray matter, white matter, CSF, bone, skin, background) is registered to the subject’s T1‑weighted image using SPM8’s non‑linear coregistration. The resulting probability vector is concatenated to the network’s final classification layer, allowing the model to learn how to weight global anatomical expectations.

- Morphological prior via a fully‑connected Conditional Random Field (CRF) – A penalty matrix encodes pairwise tissue adjacency constraints (e.g., high penalty for CSF‑air contact, low penalty for same‑tissue continuity). After the CNN produces softmax probabilities, the CRF iteratively refines the labeling (five iterations) while also considering the original image intensities. This extends the classic Potts model with tissue‑specific penalties and operates in 3‑D.

- Contextual prior via a wide field‑of‑view (FOV) pathway – In addition to a high‑resolution “Detail” stream that processes a 17³ voxel patch, a parallel “Context” stream receives a larger 57³ voxel region down‑sampled by a factor of three, runs the same 8‑layer CNN, and is up‑sampled back to the original resolution. The context stream supplies global anatomical cues (e.g., distinguishing venous dark regions inside the skull from true background).
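The patch sizes quoted for the two streams can be sanity-checked with a little arithmetic. The sketch below assumes unpadded ("valid") stride-1 convolutions with 3×3×3 kernels in all eight layers, and assumes that each low-resolution voxel summarizes a block of three full-resolution voxels; the summary does not state either detail, so the helper functions and constants are illustrative only.

```python
def receptive_field(num_layers: int, kernel: int = 3) -> int:
    """Receptive field per axis of stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel - 1)


def valid_output_size(input_size: int, num_layers: int, kernel: int = 3) -> int:
    """Output size per axis after unpadded stride-1 convolutions (assumed)."""
    return input_size - num_layers * (kernel - 1)


LAYERS = 8          # convolutional layers per stream (from the summary)
DETAIL_PATCH = 17   # Detail stream patch edge, in voxels
CONTEXT_PATCH = 57  # Context stream region edge, in voxels
DOWNSAMPLE = 3      # down-sampling factor of the Context stream

# Detail stream: eight 3x3x3 layers see exactly 17 voxels per axis,
# so a 17^3 patch collapses to a single output voxel.
detail_rf = receptive_field(LAYERS)
detail_out = valid_output_size(DETAIL_PATCH, LAYERS)

# Context stream: 57 voxels become 19 after down-sampling by three,
# and the same eight layers leave a 3^3 low-resolution output.
context_lowres = CONTEXT_PATCH // DOWNSAMPLE
context_out = valid_output_size(context_lowres, LAYERS)

# Each low-resolution output voxel sees 17 low-resolution voxels,
# i.e. about 51 full-resolution voxels per axis under the block assumption.
context_rf_fullres = receptive_field(LAYERS) * DOWNSAMPLE

print(detail_rf, detail_out, context_lowres, context_out, context_rf_fullres)
```

Under these assumptions the Context stream covers roughly three times the spatial extent of the Detail stream per axis for the same number of parameters, which is the trade-off a wide field-of-view at lower resolution is meant to buy.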

The network consists of three branches: Detail CNN, Context CNN, and a Classification head. Both CNN branches use eight 3×3×3 convolutional layers with LeakyReLU activations, expanding channel depth from 30 to 50. Their outputs are concatenated with the TPM vector and fed into three fully‑connected layers (the last with softmax) that predict seven classes (skin, skull, CSF, white matter, gray matter, internal air cavities, external background). Training minimizes a Generalized Dice Loss, which mitigates class imbalance without additional weighting.
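To make the loss concrete, here is a minimal NumPy sketch of one widely used formulation of the Generalized Dice Loss, in which each class is weighted by the inverse of its squared reference volume. Whether the authors used exactly this weighting is an assumption; the function name, argument layout, and toy data are hypothetical.

```python
import numpy as np


def generalized_dice_loss(probs: np.ndarray, onehot: np.ndarray,
                          eps: float = 1e-7) -> float:
    """Generalized Dice Loss for arrays of shape (num_voxels, num_classes).

    probs  : predicted class probabilities (e.g. softmax output)
    onehot : one-hot encoded reference labels
    """
    ref_volume = onehot.sum(axis=0)            # reference voxels per class
    weights = 1.0 / (ref_volume ** 2 + eps)    # small classes get large weights
    intersection = (probs * onehot).sum(axis=0)
    total = (probs + onehot).sum(axis=0)
    score = 2.0 * (weights * intersection).sum() / ((weights * total).sum() + eps)
    return float(1.0 - score)


# Toy example: four voxels, two classes, with a 3:1 class imbalance.
reference = np.array([[1, 0], [1, 0], [1, 0], [0, 1]], dtype=float)

perfect_loss = generalized_dice_loss(reference, reference)      # close to 0
wrong_loss = generalized_dice_loss(1.0 - reference, reference)  # close to 1
print(perfect_loss, wrong_loss)
```

Note that a class absent from the reference receives a very large weight in this formulation, so practical implementations often clip or mask the weights; that refinement is omitted here.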

Data and Evaluation

Training data comprised 43 chronic stroke patients (lesions replaced by CSF) and 4 healthy volunteers, all scanned on 3 T Siemens systems with isotropic 1 mm resolution. Ground‑truth segmentations were generated by an automatic pipeline followed by meticulous manual correction, yielding seven tissue labels. The model was evaluated using Dice similarity coefficients and Hausdorff distances, and compared against SPM, FSL, DeepMedic, and a 3‑D UNet.
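The two evaluation metrics can be sketched as follows for a single tissue label. This is an illustrative brute-force version, not the authors' evaluation code: the function names and the toy volumes are hypothetical, and the Hausdorff distance is computed over all voxels of the label rather than over an extracted surface, which is only practical for small volumes.

```python
import numpy as np


def dice_coefficient(pred: np.ndarray, truth: np.ndarray, label: int) -> float:
    """Dice similarity coefficient for one label in two label volumes."""
    p = pred == label
    t = truth == label
    denom = p.sum() + t.sum()
    if denom == 0:
        return 1.0  # label absent from both volumes: treat as perfect agreement
    return float(2.0 * np.logical_and(p, t).sum() / denom)


def hausdorff_distance(pred: np.ndarray, truth: np.ndarray, label: int) -> float:
    """Symmetric Hausdorff distance (in voxels) between two label masks."""
    a = np.argwhere(pred == label).astype(float)
    b = np.argwhere(truth == label).astype(float)
    if len(a) == 0 or len(b) == 0:
        raise ValueError("label must be present in both volumes")
    # Pairwise Euclidean distances; memory grows as len(a) * len(b).
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)
    return float(max(d.min(axis=1).max(), d.min(axis=0).max()))


# Toy volumes: the prediction overshoots the reference by one slice.
truth = np.zeros((4, 4, 4), dtype=int)
truth[1:3, 1:3, 1:3] = 1          # 8 voxels
pred = np.zeros((4, 4, 4), dtype=int)
pred[1:3, 1:3, 1:4] = 1           # 12 voxels, 8 of them shared

dice = dice_coefficient(pred, truth, label=1)    # 2 * 8 / (12 + 8) = 0.8
hd = hausdorff_distance(pred, truth, label=1)    # one voxel of overshoot = 1.0
print(dice, hd)
```

With the isotropic 1 mm resolution reported for the training scans, a distance in voxels can be read directly as millimetres.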

Results

- Adding the TPM alone increased overall Dice by 4–6 % relative to the baseline CNN, with the most pronounced gains at lesion boundaries.

- The CRF and wide‑FOV stream each contributed modest additional improvements, confirming that morphological consistency and global context are beneficial but secondary to the location prior.

- When the same TPM module was grafted onto DeepMedic and UNet, both networks showed comparable Dice boosts, demonstrating the generality of the TPM prior.

- An external validation on 47 patients with disorders of consciousness (collected on a GE 3 T scanner) showed that Multiprior’s automatic segmentations were comparable in performance to expert manual segmentations performed from scratch, slice‑by‑slice, in 2‑D.

Contributions and Impact

- Introduces a unified framework that fuses location, morphology, and context priors within a single end‑to‑end trainable 3‑D CNN.

- Provides empirical evidence that the TPM is the most influential prior for abnormal brain anatomy, and that a TPM can be added to other volumetric segmentation networks, as shown for DeepMedic and UNet.

- Extends CRF modeling to a fully‑connected 3‑D setting with tissue‑specific pairwise penalties, improving anatomical plausibility.

- Releases code and pretrained weights, facilitating reproducibility and encouraging further research.

Limitations and Future Directions

- The TPM used is derived from healthy subjects; rare congenital anomalies may still be mis‑segmented.

- The CRF penalty matrix is manually designed; learning these constraints jointly with the network could yield more adaptive morphology modeling.

- The study focuses on T1‑weighted images; incorporating multimodal data (T2, FLAIR, diffusion) could further enhance robustness.

Conclusion

The Multiprior network demonstrates that embedding explicit spatial priors (global tissue probability maps, morphological adjacency constraints, and wide‑field context) significantly improves head‑MRI segmentation in the presence of lesions. The approach outperforms traditional TPM‑based tools and state‑of‑the‑art CNNs, while remaining flexible enough to augment other architectures. By making the implementation publicly available, the authors lay a solid foundation for broader clinical adoption and future methodological advances.
