Cognitive swarming in complex environments with attractor dynamics and oscillatory computing

Neurobiological theories of spatial cognition developed with respect to recording data from relatively small and/or simplistic environments compared to animals’ natural habitats. It has been unclear how to extend theoretical models to large or complex spaces. Complementarily, in autonomous systems technology, applications have been growing for distributed control methods that scale to large numbers of low-footprint mobile platforms. Animals and many-robot groups must solve common problems of navigating complex and uncertain environments. Here, we introduce the ‘NeuroSwarms’ control framework to investigate whether adaptive, autonomous swarm control of minimal artificial agents can be achieved by direct analogy to neural circuits of rodent spatial cognition. NeuroSwarms analogizes agents to neurons and swarming groups to recurrent networks. We implemented neuron-like agent interactions in which mutually visible agents operate as if they were reciprocally-connected place cells in an attractor network. We attributed a phase state to agents to enable patterns of oscillatory synchronization similar to hippocampal models of theta-rhythmic (5-12 Hz) sequence generation. We demonstrate that multi-agent swarming and reward-approach dynamics can be expressed as a mobile form of Hebbian learning and that NeuroSwarms supports a single-entity paradigm that directly informs theoretical models of animal cognition. We present emergent behaviors including phase-organized rings and trajectory sequences that interact with environmental cues and geometry in large, fragmented mazes. Thus, NeuroSwarms is a model artificial spatial system that integrates autonomous control and theoretical neuroscience to potentially uncover common principles to advance both domains.

💡 Research Summary

The paper introduces NeuroSwarms, a control framework that maps principles of rodent spatial cognition directly onto distributed robotic swarms. Noting that neurobiological theories of navigation were developed largely from recordings in small, simplified arenas, the authors seek to extend these models to large, complex environments by leveraging two hallmark mechanisms of hippocampal function: attractor dynamics of place‑cell networks and theta‑rhythm phase coding.

In NeuroSwarms, each mobile agent is treated as a “spatial neuron” possessing an internal place‑field that reflects preferred sensory cues rather than its physical location. Mutual visibility between agents defines a sparse recurrent connectivity matrix V, while Euclidean inter‑agent distances D are transformed via a Gaussian kernel into synaptic‑like weights W = V·exp(−D²/σ²), where the product and the exponential are applied elementwise (W_ij = V_ij·exp(−D_ij²/σ²)) and σ sets the spatial scale of interaction. This construction yields a continuous attractor map: the swarm’s collective state relaxes to a self‑reinforcing activity “bump,” analogous to the stable firing patterns observed in hippocampal networks.
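As a rough illustration of the weight construction described above, the following sketch computes W from agent positions and a visibility matrix. The function name `gaussian_weights`, the example positions, and the choice to zero out self-connections are assumptions made for illustration, not details taken from the paper.

```python
import math

def gaussian_weights(positions, visible, sigma):
    """Return W with W[i][j] = V[i][j] * exp(-D[i][j]**2 / sigma**2).

    positions: list of (x, y) agent coordinates
    visible:   matrix V of 0/1 flags, 1 if agent j is visible to agent i
    sigma:     spatial scale of the Gaussian kernel
    """
    n = len(positions)
    weights = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            # Assumption: no self-connection; occluded pairs keep zero weight.
            if i == j or not visible[i][j]:
                continue
            d = math.dist(positions[i], positions[j])  # Euclidean distance D[i][j]
            weights[i][j] = math.exp(-(d ** 2) / sigma ** 2)
    return weights

# Three agents on a line; agents 0 and 2 cannot see each other.
positions = [(0.0, 0.0), (1.0, 0.0), (5.0, 0.0)]
visible = [[0, 1, 0],
           [1, 0, 1],
           [0, 1, 0]]
W = gaussian_weights(positions, visible, sigma=2.0)
for row in W:
    print([round(w, 4) for w in row])
```

Nearby visible agents receive weights close to 1, distant ones decay toward 0, and pairs without line of sight stay disconnected regardless of distance.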

Reward learning is incorporated through a separate weight matrix W_r = V_r·exp(−D_r/κ), allowing agents to be drawn toward distant goal locations. Sensory cues are modeled as discrete inputs c that are integrated over a time constant τ_c, while reward signals r are integrated over τ_r. The three input streams—cues, rewards, and recurrent swarm interactions—are combined with normalized gains (g_c, g_r, g_s) to produce a net current that drives a rectified activation p, which in turn serves as the basis for Hebbian (or two‑factor) learning.
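The input pipeline in this paragraph can be sketched in a few lines. This is a minimal sketch under stated assumptions: the summary does not give the integration scheme or the exact rectifying nonlinearity, so the first-order leaky integrator, the `max(0, ·)` rectifier, and all function names and numeric values below are hypothetical.

```python
import math

def reward_weight(distance, visible, kappa):
    """Reward weight V_r * exp(-D_r / kappa) for one agent-reward pair."""
    return math.exp(-distance / kappa) if visible else 0.0

def leaky_step(state, target, dt, tau):
    """One Euler step of tau * dx/dt = target - x (assumed integrator)."""
    return state + (dt / tau) * (target - state)

def normalized_gains(g_c, g_r, g_s):
    """Scale the cue, reward, and swarm gains so they sum to 1."""
    total = g_c + g_r + g_s
    return g_c / total, g_r / total, g_s / total

def activation(cue, reward, swarm, gains):
    """Rectified net current p = max(0, g_c*c + g_r*r + g_s*q)."""
    g_c, g_r, g_s = gains
    net = g_c * cue + g_r * reward + g_s * swarm
    return max(0.0, net)

gains = normalized_gains(1.0, 2.0, 1.0)

# Cue input integrated over tau_c, reward input integrated over tau_r.
cue_state = leaky_step(0.0, 1.0, dt=0.1, tau=0.5)
reward_state = leaky_step(0.0, reward_weight(3.0, True, kappa=3.0), dt=0.1, tau=1.0)

# The same cue and reward inputs with inhibitory vs. excitatory swarm input.
p_inhibited = activation(cue_state, reward_state, swarm=-0.5, gains=gains)
p_excited = activation(cue_state, reward_state, swarm=0.5, gains=gains)
print(p_inhibited, p_excited)
```

Normalizing the gains keeps the relative influence of cues, rewards, and swarm interactions explicit, and the rectifier ensures that net inhibition silences an agent's activation rather than driving it negative.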

Crucially, each agent carries an internal phase variable θ, mirroring the theta phase of a place cell. Pairwise interactions are modulated by a cosine term cos(θ_j − θ_i), producing excitation when phases align and inhibition when they diverge. This implements oscillatory synchronization and reproduces phase precession: as agents move, their phases advance systematically within each theta cycle, generating temporally compressed “theta sequences” that support planning and decision‑making.
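To make the cosine modulation concrete, the sketch below computes a phase-modulated recurrent input q_i = Σ_j W_ij·cos(θ_j − θ_i) for each agent. The function name `swarm_input`, the symbol q, and the uniform example weights are assumptions for illustration; the paper's full update rule may include additional terms.

```python
import math

def swarm_input(weights, phases):
    """Return q[i] = sum over j != i of W[i][j] * cos(theta_j - theta_i)."""
    n = len(phases)
    return [
        sum(weights[i][j] * math.cos(phases[j] - phases[i])
            for j in range(n) if j != i)
        for i in range(n)
    ]

# Fully connected trio with unit weights (hypothetical values).
weights = [[0.0, 1.0, 1.0],
           [1.0, 0.0, 1.0],
           [1.0, 1.0, 0.0]]

in_phase = swarm_input(weights, [0.0, 0.0, 0.0])     # all phases aligned
mixed = swarm_input(weights, [0.0, 0.0, math.pi])    # agent 2 in antiphase
print(in_phase)
print([round(q, 6) for q in mixed])
```

When all phases align, every agent receives purely excitatory input. Placing one agent in antiphase cancels the net input to the other two and makes its own input fully inhibitory, which is the excitation and inhibition pattern the cosine term is meant to produce.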

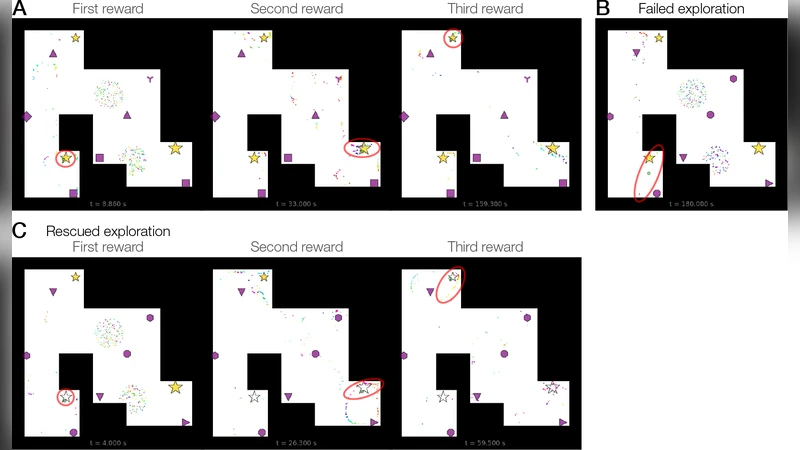

The authors validate the framework through extensive simulations. In fragmented, large‑scale mazes, agents spontaneously form phase‑organized rings and trajectory sequences that respect environmental geometry. A single‑entity simulation demonstrates that internal place‑fields can be decoupled from physical position, echoing hippocampal replay of remote locations. In a hairpin maze, a reward‑approach mechanism combined with the attractor map enables efficient navigation toward distant goals, illustrating how Hebbian‑style distance learning can guide exploration.

Overall, NeuroSwarms shows that (1) biologically inspired attractor and oscillatory dynamics can be instantiated in low‑footprint robotic swarms, (2) such swarms can self‑organize, learn, and adapt in complex, uncertain environments, and (3) the framework provides a two‑way bridge: robotic experiments can test hypotheses about spatial cognition, while neuroscience offers robust algorithms for autonomous control. The paper suggests future work on hardware deployment, multimodal sensory integration, and brain‑machine interfaces, positioning NeuroSwarms as a promising platform at the intersection of neuroscience and robotics.
