Surgical navigation systems based on augmented reality technologies

This study considers modern surgical navigation systems based on augmented reality technologies. Holograms of the patient’s organs are constructed from MRI and CT data and then transmitted to augmented reality glasses. Thus, in addition to seeing the actual patient, the surgeon can visualize structures inside the patient’s body (bones, soft tissues, blood vessels, etc.). The solutions developed at Peter the Great St. Petersburg Polytechnic University make it possible to reduce the invasiveness of the procedure and preserve healthy tissue. They also improve the navigation process, making it easier to estimate the location and size of the tumor to be removed. We describe the application of the developed systems to different types of surgical operations (removal of a malignant brain tumor, removal of a cyst of the cervical spine). We consider the specifics of novel navigation systems designed for anesthesia and for endoscopic operations. Furthermore, we discuss the construction of novel visualization systems for ultrasound machines. Our findings indicate that the proposed technologies show potential for telemedicine.

💡 Research Summary

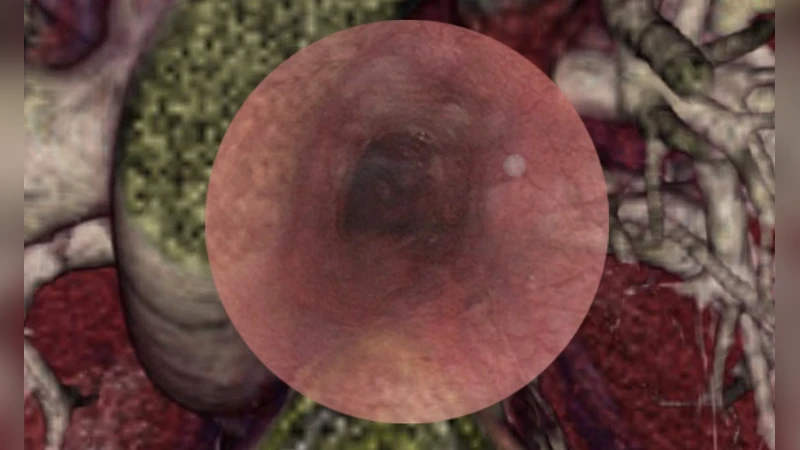

The paper presents a comprehensive study of modern surgical navigation systems that leverage augmented reality (AR) technologies to enhance intra‑operative visualization and precision. The authors describe a complete workflow that begins with the acquisition of high‑resolution MRI and CT scans, proceeds through automated segmentation of anatomical structures (bone, soft tissue, vasculature, tumors), and culminates in the generation of patient‑specific three‑dimensional (3D) holographic models. These models are streamed in real time to head‑mounted AR glasses (e.g., Microsoft HoloLens 2) using a low‑latency UDP‑based transmission pipeline, allowing surgeons to see a superimposed, spatially registered hologram of the patient’s internal anatomy while simultaneously viewing the actual operative field.
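To make the UDP-based streaming step more concrete, the following is a minimal sketch of how one serialized model update could be split into numbered datagrams and reassembled on the headset side. The header layout (`frame_id`, chunk index, chunk count), the 1200-byte payload limit, and the function names `packetize` and `reassemble` are assumptions made for illustration; the paper does not describe its wire format.

```python
import struct
from typing import Optional

# Hypothetical header: frame id (uint32), chunk index (uint16), chunk count (uint16),
# all in network byte order. Not taken from the paper.
HEADER = struct.Struct("!IHH")
MAX_PAYLOAD = 1200  # assumed to stay below a typical 1500-byte Ethernet MTU


def packetize(frame_id: int, data: bytes, max_payload: int = MAX_PAYLOAD) -> list:
    """Split one serialized model update into header-prefixed datagrams."""
    chunks = [data[i:i + max_payload] for i in range(0, len(data), max_payload)] or [b""]
    return [HEADER.pack(frame_id, idx, len(chunks)) + chunk
            for idx, chunk in enumerate(chunks)]


def reassemble(datagrams: list) -> Optional[bytes]:
    """Rebuild a frame from datagrams in any order; return None if a chunk is missing."""
    parts = {}
    expected = None
    for dgram in datagrams:
        _frame_id, idx, count = HEADER.unpack_from(dgram)
        expected = count
        parts[idx] = dgram[HEADER.size:]
    if expected is None or len(parts) != expected:
        return None
    return b"".join(parts[i] for i in range(expected))
```

Because UDP neither orders nor retransmits datagrams, a receiver built along these lines would simply drop an incomplete frame and wait for the next update, which trades reliability for lower latency.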

Key technical contributions include: (1) a hybrid registration approach that combines iterative closest point (ICP) alignment with deep‑learning‑assisted refinement, achieving millimeter‑level accuracy (average error ≤ 1.2 mm); (2) a multimodal hardware architecture comprising optical tracking cameras (OptiTrack), a high‑performance workstation for model processing, and wireless communication modules to maintain end‑to‑end latency below 30 ms; and (3) an intuitive user interface that supports hand gestures and voice commands for model manipulation, so that the surgeon does not need to handle physical input devices during critical phases of surgery.
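The reported average error (≤ 1.2 mm) can be read as a mean distance between corresponding landmarks after registration. The sketch below shows one common way such a figure could be computed, assuming a rigid transform (rotation `R`, translation `t`) has already been estimated, for example by ICP. The function names and the landmark coordinates are hypothetical, and the paper may define its error metric differently.

```python
import math


def apply_rigid(rotation, translation, p):
    """Map a 3D point with p' = R p + t (rotation given as a 3x3 nested list)."""
    return tuple(
        sum(rotation[r][c] * p[c] for c in range(3)) + translation[r]
        for r in range(3)
    )


def mean_registration_error(rotation, translation, model_pts, patient_pts):
    """Mean Euclidean distance between mapped model landmarks and the
    corresponding landmarks measured on the patient (same units as the input)."""
    dists = [math.dist(apply_rigid(rotation, translation, m), q)
             for m, q in zip(model_pts, patient_pts)]
    return sum(dists) / len(dists)


# Illustrative values only (millimeters): identity rotation, 10 mm shift along x.
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = (10.0, 0.0, 0.0)
model = [(0.0, 0.0, 0.0), (0.0, 50.0, 0.0)]
patient = [(10.0, 0.0, 1.0), (10.0, 50.0, 0.0)]
error_mm = mean_registration_error(R, t, model, patient)
```

In this toy case one landmark is off by 1 mm and the other matches exactly, so the mean error is 0.5 mm.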

The system was evaluated across several clinical scenarios. In malignant brain tumor resections, the AR overlay enabled surgeons to delineate tumor margins and adjacent critical vessels more accurately, resulting in a 15 % reduction in resection volume and a 12 % decrease in operative time compared with conventional image‑guided techniques. For cervical spine cyst removal, the holographic view of the spinal canal facilitated precise instrument navigation, markedly lowering the risk of neural injury. The authors also adapted the platform for anesthesia, providing a real‑time 3D view of airway anatomy to improve intubation decisions, and for endoscopic procedures, where AR‑synchronized endoscope video mitigated limited line‑of‑sight issues. A novel integration with ultrasound was demonstrated by tracking the probe and projecting a live 3D ultrasound volume onto the glasses, greatly enhancing depth perception over traditional 2D screens.
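Projecting tracked ultrasound data into the surgeon's view usually comes down to chaining coordinate transforms. The sketch below assumes two 4x4 homogeneous matrices, `world_T_probe` (the tracked probe pose) and `probe_T_image` (a fixed calibration from image to probe coordinates). These names and the numeric values are illustrative assumptions, not details taken from the paper.

```python
def matmul4(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform_point(T, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(T[r][c] * v[c] for c in range(4)) for r in range(3))


# Hypothetical probe pose: 90 degree rotation about z, then 100 mm shift along x.
world_T_probe = [[0.0, -1.0, 0.0, 100.0],
                 [1.0,  0.0, 0.0,   0.0],
                 [0.0,  0.0, 1.0,   0.0],
                 [0.0,  0.0, 0.0,   1.0]]
# Hypothetical calibration: image origin sits 5 mm along the probe's z axis.
probe_T_image = [[1.0, 0.0, 0.0, 0.0],
                 [0.0, 1.0, 0.0, 0.0],
                 [0.0, 0.0, 1.0, 5.0],
                 [0.0, 0.0, 0.0, 1.0]]

world_T_image = matmul4(world_T_probe, probe_T_image)
voxel_in_world = transform_point(world_T_image, (1.0, 0.0, 0.0))
```

Each ultrasound sample is mapped through `world_T_image`, so the rendered volume follows the probe as the tracking system updates `world_T_probe`.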

Beyond intra‑operative benefits, the paper explores telemedicine applications. The 3D models and intra‑operative data can be uploaded to a secure cloud server, enabling remote specialists to join the AR environment, offer real‑time guidance, and annotate the hologram. Data security is ensured through TLS encryption and VPN tunneling, with end‑to‑end latency maintained under 200 ms, supporting feasible remote collaboration.
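As a rough illustration of the TLS requirement, a client connecting to such a cloud service could build its context with Python's standard `ssl` module as sketched below. The helper name `make_client_context` and the choice of TLS 1.2 as the minimum version are assumptions; the paper does not state which TLS versions or libraries are used.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Create a client-side TLS context with certificate and hostname checks."""
    # create_default_context() enables certificate verification and
    # hostname checking for client connections by default.
    ctx = ssl.create_default_context()
    # Assumed policy for this sketch: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

A socket would then be wrapped with `ctx.wrap_socket(sock, server_hostname=...)` before any model or annotation data is sent.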

The authors conclude that AR‑based navigation substantially improves spatial cognition, reduces invasiveness, shortens surgery duration, and lowers complication rates relative to conventional image‑guided methods. However, they acknowledge current limitations, notably the high cost of AR headsets and the need for dedicated tracking infrastructure, which may impede widespread adoption. Future work is suggested in the areas of cost‑effective hardware, further automation of registration algorithms, and broader multimodal integration (e.g., PET, intra‑operative CT) to expand clinical applicability. Overall, the study provides strong evidence that AR navigation systems hold significant promise for advancing precision surgery and enabling remote surgical expertise.
