When Children Program Intelligent Environments: Lessons Learned from a Serious AR Game

While the body of research on the programming of Intelligent Environments (IEs) by adults is steadily growing, informed insights into children as programmers of such environments are limited. Previous work has already established that young children can learn programming basics. Yet there is still a need to investigate whether this capability transfers to the context of IEs, since encouraging children to participate in the management of their intelligent surroundings can enhance responsibility, independence, and the spirit of cooperation. We performed a user study (N=15) with children aged 7-12, using a block-based, gamified AR spatial coding prototype that allows them to manipulate smart artifacts in an Intelligent Living Room. Our results confirmed that children understand and can indeed program IEs. Based on our findings, we contribute preliminary implications regarding the use of specific technologies and paradigms (e.g., AR, trigger-action programming) to inspire future systems that enable children to create enriching experiences in IEs.

💡 Research Summary

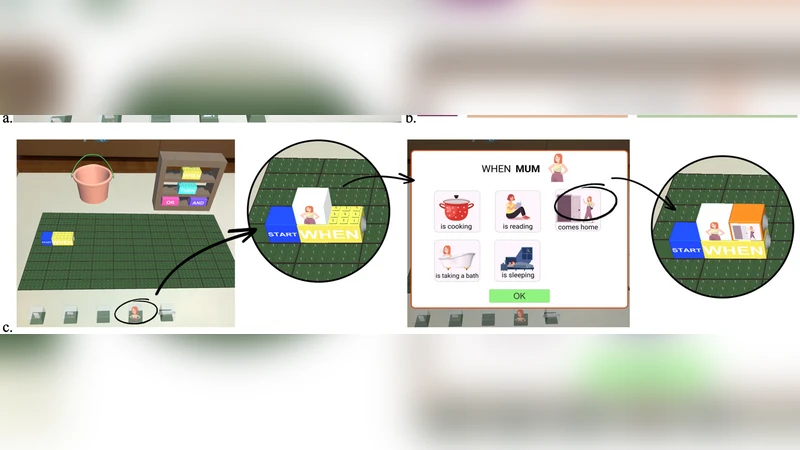

The paper investigates whether children can program Intelligent Environments (IEs) and which design choices best support this activity. While prior work has shown that young learners can acquire basic programming concepts, little is known about extending those skills to the spatially distributed, sensor-rich contexts that characterize modern smart homes. To fill this gap, the authors built a prototype that combines a block-based visual programming language with augmented reality (AR), letting children manipulate smart artifacts in a simulated living-room setting. Physical objects (a smart lamp, curtains, a speaker) carry AR markers; when a child points a tablet at a marker, a virtual coding canvas appears anchored to that object. Children build trigger-action rules by snapping condition blocks (e.g., “when motion detected”) onto action blocks (e.g., “turn on lamp”), forming simple If-Then rules that directly control the corresponding device.
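To make the trigger-action structure concrete, here is a minimal Python sketch of how snapped condition and action blocks could be represented as If-Then rules, and how scanning a marker could select the rules anchored to one object. The class names, marker IDs, event strings, and commands are hypothetical illustrations; the summary does not describe the prototype's internal data model.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


# Hypothetical block types; not the prototype's actual vocabulary.
@dataclass(frozen=True)
class TriggerBlock:
    event: str  # condition block, e.g. "motion_detected"


@dataclass(frozen=True)
class ActionBlock:
    device: str   # smart artifact the action targets, e.g. "lamp"
    command: str  # e.g. "turn_on"


@dataclass(frozen=True)
class Rule:
    """An If-Then rule formed by snapping a trigger block onto an action block."""
    trigger: TriggerBlock
    action: ActionBlock


# Hypothetical mapping from AR marker IDs to the physical object each marker is attached to.
MARKERS: Dict[str, str] = {"marker-01": "lamp", "marker-02": "curtains", "marker-03": "speaker"}


def rules_for_marker(marker_id: str, rules: List[Rule]) -> List[Rule]:
    """Rules to display on the coding canvas anchored to the scanned marker's object."""
    device = MARKERS.get(marker_id)  # None for an unknown marker, which matches no rule
    return [rule for rule in rules if rule.action.device == device]


def fire(event: str, rules: List[Rule]) -> List[Tuple[str, str]]:
    """Device commands triggered by a single sensor event."""
    return [(rule.action.device, rule.action.command)
            for rule in rules if rule.trigger.event == event]


# Usage with two hypothetical rules.
rules = [
    Rule(TriggerBlock("motion_detected"), ActionBlock("lamp", "turn_on")),
    Rule(TriggerBlock("clap_detected"), ActionBlock("speaker", "play_music")),
]
print(fire("motion_detected", rules))  # [('lamp', 'turn_on')]
```

Keeping each rule as one flat trigger/action pair mirrors the point that children need neither loops nor variables to express useful automations.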

A user study with fifteen participants aged 7–12 was conducted in two conditions: individual work and collaborative work in pairs. Each 45-minute session comprised a pre-task interview, the coding activity, and post-task questionnaires plus semi-structured interviews. The researchers collected quantitative logs (number of blocks, success or failure of executions, error types) and qualitative feedback (engagement, perceived difficulty, collaborative dynamics).
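The quantitative logs listed above (block counts, execution outcomes, error types) map naturally onto a per-run record. The sketch below assumes a hypothetical `ExecutionLog` schema and aggregates it per condition. The field names, condition labels, and error labels are illustrative assumptions, not the authors' logging format.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ExecutionLog:
    """Hypothetical record of one program execution during a session."""
    participant: str
    condition: str                    # assumed labels: "solo" or "pair"
    num_blocks: int                   # blocks in the executed program
    succeeded: bool                   # did the device behave as intended?
    error_type: Optional[str] = None  # None when the run succeeded


def summarize(logs: List[ExecutionLog]) -> Dict[str, dict]:
    """Per condition: number of runs, success rate, mean program size, error counts."""
    summary = {}
    for cond in sorted({log.condition for log in logs}):
        subset = [log for log in logs if log.condition == cond]
        summary[cond] = {
            "runs": len(subset),
            "success_rate": sum(log.succeeded for log in subset) / len(subset),
            "mean_blocks": sum(log.num_blocks for log in subset) / len(subset),
            "errors": Counter(log.error_type for log in subset if log.error_type),
        }
    return summary
```

Any values passed to `summarize` in a quick check should be toy inputs; the per-run success rate computed here is not necessarily the same measure as the task success rate reported in the paper.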

Results show that children understood and could apply the trigger-action paradigm: the overall task success rate was 85%, and 78% of participants completed even the more complex chains of two or three rules. Code length increased with age, suggesting that older children experimented with richer logic while still working within the block constraints. In the collaborative condition, participants divided roles (design, coding, testing) and reduced debugging time by roughly 30% compared with the solo condition. Subjective measures revealed high enjoyment and a strong sense of agency; participants repeatedly mentioned that seeing the AR overlay on the actual room made the abstract programming concepts concrete and “real.”
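Chains of two or three rules can be read as cascades, in which one rule's action produces an event that triggers the next rule. The sketch below assumes, purely for illustration, that every executed action emits a follow-up event named `<device>_<command>`. The summary does not say how the prototype links chained rules, and the `max_actions` guard against cyclic rule sets is likewise an added assumption.

```python
from collections import deque
from typing import List, Tuple

# Hypothetical flat rule encoding: (trigger_event, device, command).
Rule = Tuple[str, str, str]


def run_chain(initial_event: str, rules: List[Rule], max_actions: int = 10) -> List[Tuple[str, str]]:
    """Fire rules starting from one sensor event. Each executed action emits the
    assumed follow-up event "<device>_<command>", which may trigger further rules."""
    executed: List[Tuple[str, str]] = []
    pending = deque([initial_event])
    while pending:
        event = pending.popleft()
        for trigger, device, command in rules:
            if trigger != event:
                continue
            if len(executed) >= max_actions:
                return executed  # stop cycles such as lamp -> curtains -> lamp
            executed.append((device, command))
            pending.append(f"{device}_{command}")
    return executed


# A hypothetical three-rule chain.
chain = [
    ("motion_detected", "lamp", "turn_on"),
    ("lamp_turn_on", "curtains", "close"),
    ("curtains_close", "speaker", "play_music"),
]
print(run_chain("motion_detected", chain))
```

Processing events breadth-first keeps the execution order aligned with the order in which a child reads the chain, one rule after another.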

The authors discuss several key insights. First, AR provides spatial grounding that bridges the gap between visual code and physical effect, which is crucial for young learners who often struggle with disembodied abstractions. Second, the trigger-action model offers a low-threshold yet expressive programming paradigm that aligns well with everyday smart-home scenarios, allowing children to create meaningful automations without needing loops or variables. Third, collaborative coding amplifies learning by prompting peer explanation and joint problem-solving, which in turn lowers cognitive load.

Limitations include the small sample size, the constrained set of smart devices, and the short-term nature of the study; long-term retention and transfer to other domains remain open questions.

The paper concludes with design recommendations for future child-centric IE tools: (1) leverage AR to maintain a tight visual link between code and environment, (2) adopt block-based trigger-action vocabularies that map directly onto sensor-actuator pairs, (3) embed collaborative scaffolds such as shared workspaces and role-assignment cues, and (4) provide immediate, multimodal feedback (visual, auditory, haptic) to reinforce cause-effect reasoning. By following these guidelines, educators and developers can empower children to become active programmers of their own intelligent surroundings, fostering responsibility, independence, and cooperative problem-solving from an early age.
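Recommendations (2) and (4) can be combined in a small sketch: a block vocabulary in which every trigger names a sensor and every action names an actuator, plus a feedback function that answers each rule with one cue per modality. The block labels, device names, and cue descriptions are hypothetical. A real system would route the cues to the tablet's display, audio, and vibration APIs rather than returning strings.

```python
from typing import List, Optional, Tuple

# Hypothetical vocabulary: each "when ..." block maps to a sensor, each action block to an actuator.
SENSOR_BLOCKS = {"when motion detected": "motion_sensor", "when it gets dark": "light_sensor"}
ACTUATOR_BLOCKS = {"turn on lamp": "lamp", "close curtains": "curtains", "play music": "speaker"}


def validate_rule(trigger_block: str, action_block: str) -> Optional[str]:
    """Return a child-readable error, or None if the blocks form a sensor-actuator pair."""
    if trigger_block not in SENSOR_BLOCKS:
        return f"'{trigger_block}' is not a 'when ...' block"
    if action_block not in ACTUATOR_BLOCKS:
        return f"'{action_block}' is not something the room can do"
    return None


def feedback(trigger_block: str, action_block: str) -> List[Tuple[str, str]]:
    """One (channel, cue) pair per modality, so cause and effect are signaled immediately."""
    error = validate_rule(trigger_block, action_block)
    if error is not None:
        return [("visual", f"highlight blocks in red: {error}"),
                ("auditory", "error tone"),
                ("haptic", "double buzz")]
    device = ACTUATOR_BLOCKS[action_block]
    return [("visual", f"glow {device} in AR overlay"),
            ("auditory", "success chime"),
            ("haptic", "single buzz")]
```

Restricting triggers to sensors and actions to actuators means an ill-formed rule is caught at the moment the child snaps the blocks together, which is when multimodal feedback is most useful.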