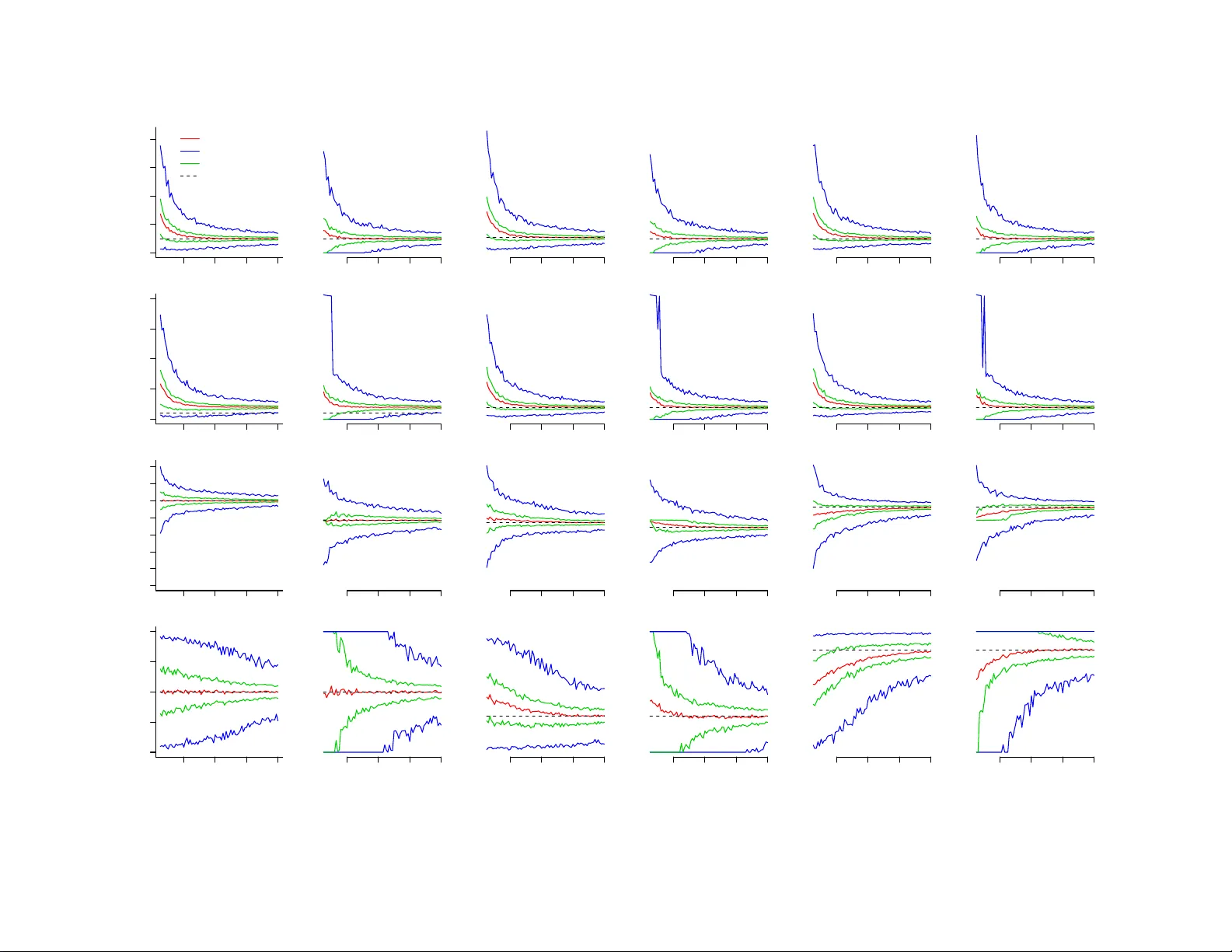

Statistical Properties of Sanitized Results from Differentially Private Laplace Mechanism with Univariate Bounding Constraints

Protection of individual privacy is a common concern when releasing and sharing data and information. Differential privacy (DP) formalizes privacy in probabilistic terms without making assumptions about the background knowledge of data intruders, and…

Authors: Fang Liu