Insights on the V3C2 Dataset

💡 Research Summary

The paper presents a comprehensive quantitative analysis of the second shard of the Vimeo Creative Commons Collection, known as V3C2, with the goal of informing researchers about its suitability for video retrieval and other multimodal research tasks. V3C2 comprises 9,760 videos and 1,425,451 shot‑level segments, amounting to roughly 1,300 hours of content. All videos were collected from Vimeo under Creative Commons licenses and have durations between three and sixty minutes.

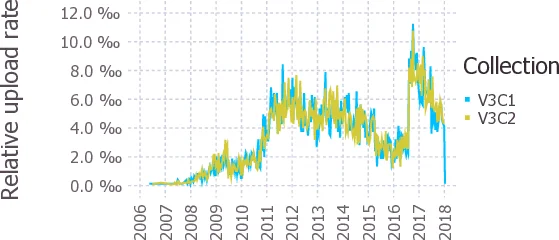

The authors first examine technical properties. Video duration distribution closely mirrors that of the first shard (V3C1), with a Pearson correlation of 0.939 before cumulative aggregation; about 80 % of videos are ten minutes or shorter. Segment length distribution is also nearly identical (correlation 0.999). On average each video contains 146 segments, ranging from a minimum of four to a maximum of 5,814. File containers are overwhelmingly MP4 (98.79 %), followed by MOV (1.1 %) and a few M4V files. The most common resolutions are 1280 × 720 and 1920 × 1080, reflecting typical web‑video standards.

Content‑level analysis uses the categories assigned by Vimeo uploaders. The top‑10 categories are the same for V3C1 and V3C2 (narrative, travel, sports, documentary, music, journalism, personal, hd/canon/videos, art, cameratechniques), and the Spearman rank correlation across all 71 shared categories is 0.987, indicating strong consistency between shards.

Low‑level visual statistics show that 23 % of keyframes lack a dominant color, while the majority are dominated by gray (≈58 %) or black/white (≈8 %). The distribution of dominant hues (orange, red, blue, green, cyan, magenta) is virtually identical between shards, despite no explicit balancing during dataset construction.

For high‑level visual semantics, the authors extract deep features from the final layer of an Inception‑ResNet‑v2 network pre‑trained on ImageNet. Feature vectors are computed per frame and mean‑pooled per segment, yielding 2,508,108 vectors across both shards. Pairwise L1 distances have nearly the same histogram for V3C1, V3C2, and the combined set (means around 46, standard deviations ≈ 6.5), suggesting comparable semantic variability within each shard.

Object detection is performed with YOLOv4 (Darknet‑53 backbone) using the COCO‑80 pretrained model. The pipeline discovers 5,009,059 object instances, averaging 3.5 objects per keyframe (median = 2). The top‑10 detected classes are person, bottle, car, cup, chair, bicycle, book, bird, tie, and boat. Approximately 23.4 % of keyframes contain no detectable object from the COCO set.

Face detection uses FaceNet, resulting in 1,352,749 detected faces. Most keyframes (59.5 %) contain no face; 23.1 % contain exactly one face, and the remainder have multiple faces, with a small tail up to 289 faces per frame. The number of detected faces is roughly 43.6 % of the number of detected persons, indicating many people appear without visible faces.

Scene‑text analysis employs EasyOCR, supporting Latin, Persian, Thai, Simplified Chinese, Devanagari, Japanese, and Hangul scripts. Text is found in at least one keyframe of 9,467 out of 9,760 videos (≈ 97 %). However, only 29.88 % of all segments contain any text, and the distribution of text items per frame is heavily skewed: 57 % of frames with text have ≤ 5 items, and 78 % have ≤ 10 items.

Speech analysis uses Mozilla DeepSpeech (v0.9.3) together with a voice‑activity detector to extract spoken segments. Transcriptions are generated under the assumption that all speech is English. At least one transcribed segment is obtained for 9,613 videos; voice activity is detected for an average of 90.3 % of each video’s duration, yielding about 20.2 speech segments per video. Word‑per‑minute rates show a long tail, indicating that the VAD sometimes misclassifies non‑speech audio as speech and that continuous dialog is rare.

The authors conclude that V3C2 exhibits high variability across technical, visual, textual, and auditory dimensions. Because neither scene‑text detection nor speech transcription covers the entire collection, reliance on a single modality for indexing or retrieval is insufficient. Instead, multimodal fusion approaches are recommended for effective video search. The paper also notes that, with additional annotations, V3C2 could support research beyond retrieval, such as multimodal representation learning, video summarization, and content analysis. All derived statistics, feature files, and processing scripts are publicly released via GitHub and an institutional repository, facilitating reproducibility and further exploration.

Comments & Academic Discussion

Loading comments...

Leave a Comment