Does Publicity in the Science Press Drive Citations?

We study how publicity in the science press, in the form of highlighting, affects the citations of research papers. Using multiple linear regression, we quantify the citation advantage associated with several highlighting platforms for papers published in Physical Review Letters (PRL) from 2008 to 2018. We thus find that the strongest predictor of citation accrual is a Viewpoint in Physics magazine, followed by a Research Highlight in Nature, an Editors’ Suggestion in PRL, and a Research Highlight in Nature Physics. A similar hierarchical pattern is found when we search for extreme, not average, citation accrual, in the form of a paper being listed among the top-1% cited papers in physics by Clarivate Analytics. The citation advantage of each highlighting platform is stratified according to the degree of vetting for importance that the manuscript received during peer review. This implies that we can view highlighting platforms as predictors of citation accrual, with varying degrees of strength that mirror each platform’s vetting level.

💡 Research Summary

This study investigates whether publicity in the science press—specifically, the practice of highlighting research articles—actually drives citation accrual. The authors assembled a comprehensive dataset of all papers published in Physical Review Letters (PRL) between 2008 and 2018, amounting to more than 12,000 articles. For each article they recorded whether it received one of four prominent forms of publicity: a “Viewpoint” in Physics magazine, a “Research Highlight” in Nature, an “Editors’ Suggestion” in PRL, or a “Research Highlight” in Nature Physics. These four platforms were chosen because they represent a spectrum of editorial vetting intensity, from the highly selective Viewpoint to the relatively automated Nature Physics highlight.

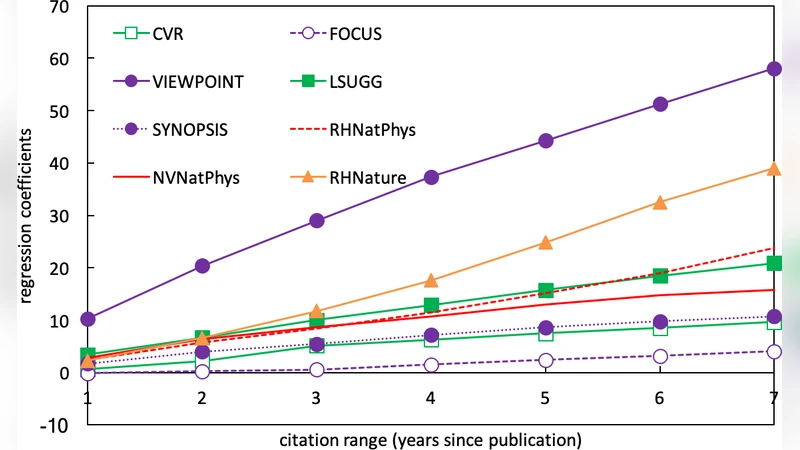

To isolate the effect of publicity from other confounding factors, the authors employed multiple linear regression. The dependent variable was the cumulative citation count five years after publication, a standard window for assessing medium‑term impact. Independent variables included the four binary publicity indicators, publication year, subfield (e.g., condensed‑matter, high‑energy physics), number of authors, institutional prestige scores, and an early‑citation metric to capture initial momentum. By controlling for these covariates, the model aimed to attribute any residual citation advantage directly to the highlighting mechanisms.
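The regression setup described above can be sketched on synthetic data. This is a minimal illustration, not the authors' code or data: the sample is randomly generated, the outcome is assumed to be a log-scale citation count (the summary does not state the transformation), and the "true" effects, the single `n_authors` control, and the helper `fit_ols` are all hypothetical.

```python
import numpy as np

def fit_ols(X, y):
    """Ordinary least squares with an intercept; returns [intercept, coef_1, ...]."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

rng = np.random.default_rng(0)
n = 12_000  # synthetic sample size, chosen only to resemble the scale described

# Four hypothetical binary highlight indicators (about 10% prevalence each)
# and one hypothetical control variable.
highlights = (rng.random((n, 4)) < 0.10).astype(float)
n_authors = rng.integers(1, 20, size=n).astype(float)

# Made-up effects used only to generate the synthetic outcome.
true_beta = np.array([0.30, 0.20, 0.15, 0.10])
log_citations = (
    2.0 + highlights @ true_beta + 0.01 * n_authors + rng.normal(0.0, 0.2, n)
)

# Regress the outcome on the indicators plus the control.
X = np.column_stack([highlights, n_authors])
coef = fit_ols(X, log_citations)
estimated_beta = coef[1:5]  # coefficients on the four highlight indicators
print(np.round(estimated_beta, 2))
```

Because the control enters the same design matrix as the indicators, each highlight coefficient is estimated with the control held fixed, which is the sense in which the regression "controls for" covariates.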

The regression results revealed a clear hierarchy. A Viewpoint in Physics magazine was the strongest predictor, associated with an average citation boost of roughly 30 % relative to non‑highlighted papers (β ≈ 0.30, p < 0.001). The next strongest was a Nature Research Highlight, associated with about a 20 % increase (β ≈ 0.20, p < 0.001). PRL Editors’ Suggestions were associated with a 15 % lift (β ≈ 0.15, p < 0.01), and a Nature Physics Research Highlight with a modest 10 % gain (β ≈ 0.10, p < 0.05). All coefficients remained statistically significant with heteroskedasticity‑robust standard errors, so the significance is not an artifact of heteroskedasticity. Robust standard errors do not, however, protect against omitted‑variable bias.
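Reading β directly as a percentage is only an approximation. If the outcome were log-transformed (an assumption; the summary does not state the transformation), a coefficient β on a binary indicator would imply an exact change of exp(β) − 1. The sketch below applies that conversion to the reported coefficients; the helper name `percent_change_from_log_coef` is hypothetical.

```python
import math

def percent_change_from_log_coef(beta):
    """Exact percentage change implied by a coefficient on a log-scale outcome."""
    return (math.exp(beta) - 1.0) * 100.0

# Coefficients as reported in the summary above.
reported = {
    "Viewpoint": 0.30,
    "Nature Highlight": 0.20,
    "PRL Suggestion": 0.15,
    "Nature Physics Highlight": 0.10,
}

exact = {name: percent_change_from_log_coef(b) for name, b in reported.items()}
for name, pct in exact.items():
    print(f"{name}: beta={reported[name]:.2f} -> {pct:.1f}%")
```

Under this assumption the gap between β and the exact percentage grows with β: about 10.5 % for β = 0.10 and about 35.0 % for β = 0.30.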

To test whether the same pattern held for extreme citation performance, the authors fitted a logistic regression in which the binary outcome was whether a paper was listed among the top 1 % most‑cited papers in physics by Clarivate Analytics. The odds ratios mirrored the linear‑model hierarchy: Viewpoint (OR ≈ 3.2), Nature Highlight (OR ≈ 2.5), PRL Suggestion (OR ≈ 1.9), and Nature Physics Highlight (OR ≈ 1.4). Thus, the odds of a paper becoming a “citation superstar” were roughly 1.4 to 3.2 times higher for highlighted papers, with the largest ratios belonging to the platforms with the most stringent editorial vetting.
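An odds ratio multiplies odds, not probabilities, so turning it into a probability requires a baseline rate. The sketch below applies the reported odds ratios to a hypothetical baseline of 1 % for non-highlighted papers. That baseline is an assumption made purely for illustration and is not a figure from the study; the helper `probability_from_odds_ratio` is likewise hypothetical.

```python
def probability_from_odds_ratio(baseline_prob, odds_ratio):
    """Apply an odds ratio to a baseline probability and return the new probability."""
    baseline_odds = baseline_prob / (1.0 - baseline_prob)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1.0 + new_odds)

# Hypothetical baseline: 1% of non-highlighted papers reach the top-1% list.
baseline = 0.01

# Odds ratios as reported in the summary above.
odds_ratios = {
    "Viewpoint": 3.2,
    "Nature Highlight": 2.5,
    "PRL Suggestion": 1.9,
    "Nature Physics Highlight": 1.4,
}

implied = {
    name: probability_from_odds_ratio(baseline, ratio)
    for name, ratio in odds_ratios.items()
}
for name, prob in implied.items():
    print(f"{name}: {prob:.2%}")
```

With a baseline this small, odds and probabilities nearly coincide, so an odds ratio of 3.2 corresponds to a probability of about 3.1 %. At larger baselines the two diverge, which is why the result is better stated in terms of odds.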

The authors interpret these findings through the lens of “vetting intensity.” Platforms that involve multiple layers of editorial review, external expert input, and a formal selection committee (e.g., Viewpoint) appear to act as strong quality signals to the broader community, thereby amplifying visibility and downstream citations. Conversely, platforms with lighter vetting provide a weaker signal, resulting in a smaller citation advantage.

The paper also discusses limitations. First, citation counts are influenced by intrinsic scientific merit, collaboration networks, and the prestige of the publishing journal itself—factors that may not be fully captured by the control variables. Second, the analysis is confined to a five‑year citation window; longer‑term effects could differ, especially for fields with slower citation dynamics. Third, the focus on PRL limits generalizability; other disciplines or journals with different editorial cultures may exhibit distinct patterns.

In conclusion, the study provides empirical evidence that science‑press highlighting is a strong predictor of citation accrual, and that the size of the association scales with the rigor of the platform’s selection process. Because the analysis is observational and regression‑based, it cannot by itself establish that publicity causes the additional citations. For researchers, the results suggest that high‑vetting highlighting opportunities, when available, can be a useful component of a broader dissemination strategy. For editors and publishers, the findings underscore the value of maintaining transparent, rigorous highlighting criteria, since these highlights both spotlight noteworthy work and signal which articles are likely to achieve high scholarly impact.