Demystifying BERT: Implications for Accelerator Design

Transfer learning in natural language processing (NLP), as realized using models like BERT (Bi-directional Encoder Representation from Transformer), has significantly improved language representation with models that can tackle challenging language problems. Consequently, these applications are driving the requirements of future systems. Thus, we focus on BERT, one of the most popular NLP transfer learning algorithms, to identify how its algorithmic behavior can guide future accelerator design. To this end, we carefully profile BERT training and identify key algorithmic behaviors which are worthy of attention in accelerator design. We observe that while computations which manifest as matrix multiplication dominate BERT’s overall runtime, as in many convolutional neural networks, memory-intensive computations also feature prominently. We characterize these computations, which have received little attention so far. Further, we also identify heterogeneity in compute-intensive BERT computations and discuss software and possible hardware mechanisms to further optimize these computations. Finally, we discuss implications of these behaviors as networks get larger and use distributed training environments, and how techniques such as micro-batching and mixed-precision training scale. Overall, our analysis identifies holistic solutions to optimize systems for BERT-like models.

💡 Research Summary

The paper presents a comprehensive performance analysis of BERT training with the explicit goal of informing the design of future hardware accelerators. By instrumenting a full BERT‑Base training run on modern GPU hardware, the authors break down the workload into its constituent operations and quantify their contribution to overall execution time, memory traffic, and power consumption.

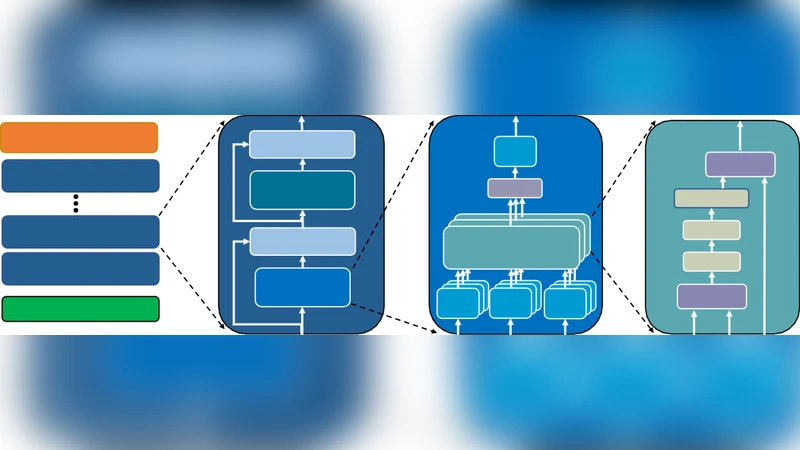

The first major finding is that dense matrix multiplication (GEMM) dominates the compute profile, accounting for roughly 60 % of the total runtime, much like convolutional neural networks. However, unlike CNNs, BERT’s Transformer blocks contain a substantial proportion of memory‑intensive kernels. Operations such as query/key/value (Q/K/V) projection, attention‑score softmax, layer‑norm, and the element‑wise scaling that follows the softmax are identified as “memory‑bound” despite their relatively low arithmetic intensity. These kernels generate irregular, non‑contiguous memory accesses, suffer from low cache reuse, and become the primary source of bandwidth pressure, consuming over 30 % of the measured memory bandwidth.

A second insight concerns the heterogeneity of compute across layers. Early Transformer layers are attention‑heavy, requiring large‑scale matrix‑matrix products and complex data reshaping, while deeper layers shift toward the feed‑forward network (FFN) where two point‑wise linear layers dominate. This intra‑model variance means a static, one‑size‑fits‑all accelerator pipeline cannot achieve optimal utilization; dynamic scheduling and layer‑specific micro‑architectural support are required.

The authors then evaluate two software‑level optimizations that have become standard in large‑scale training: micro‑batching and mixed‑precision (FP16/BF16) training. Micro‑batching reduces per‑step memory footprints, allowing larger global batch sizes without exceeding on‑chip memory limits, and improves pipeline occupancy on both GPUs and TPUs. Mixed‑precision cuts the data movement for GEMM by roughly half and doubles the effective compute throughput. Nevertheless, the paper emphasizes that precision reduction introduces scaling challenges—overflow/underflow in gradients and loss values—necessitating dynamic loss‑scaling and careful handling of accumulation buffers.

On the system side, the study compares parameter‑server and all‑reduce data‑parallel schemes in a multi‑node setting. All‑reduce is shown to be more bandwidth‑efficient for BERT, yet the sheer size of the model (110 M parameters for BERT‑Base, 340 M for BERT‑Large) leads to communication volumes that can dominate training time. The authors propose overlapping communication with computation, gradient compression (e.g., 8‑bit quantization), and topology‑aware all‑reduce algorithms to mitigate this bottleneck. They also discuss how scaling to larger models (e.g., 1 B‑parameter Transformers) will exacerbate both memory and network demands, making hierarchical memory systems and high‑speed interconnects indispensable.

From these observations, the paper distills four concrete hardware design recommendations for BERT‑like accelerators:

- High‑bandwidth memory subsystem and optimized GEMM engines – to sustain the massive matrix multiplications without throttling.

- Low‑latency cache and dedicated functional units for memory‑bound kernels – such as specialized softmax, layer‑norm, and attention‑projection units that can keep data on‑chip and reduce off‑chip traffic.

- Dynamic, heterogeneity‑aware scheduling and reconfigurable compute blocks – enabling the accelerator to allocate more resources to attention in early layers and to FFN in later layers, possibly via fine‑grained power‑gating or re‑mapping of compute tiles.

- Native support for mixed‑precision and micro‑batching pipelines – including on‑chip loss‑scaling logic, flexible accumulator widths, and mechanisms for seamless precision conversion.

The authors argue that these principles are not limited to the current BERT‑Base/ Large models but will remain relevant as the community moves toward deeper, wider, and multimodal Transformer architectures. By linking algorithmic characteristics directly to hardware implications, the paper provides a practical roadmap for both academia and industry to co‑design next‑generation AI accelerators that can efficiently handle the growing demands of large‑scale language models.