A multi-plane augmented reality head-up display system based on volume holographic optical elements with large area

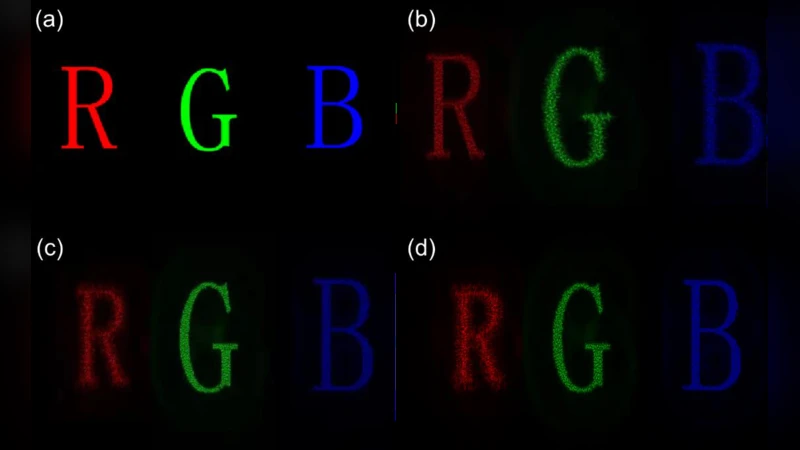

Traditional head-up display (HUD) systems suffer from a small display area and a single display plane. Here we propose and design an augmented reality (AR) HUD system based on holographic optical elements (HOEs) that offers multiple display planes, a large area, high diffraction efficiency, and a single picture generation unit (PGU). Because volume HOEs have excellent angle selectivity and wavelength selectivity, HOEs for different wavelengths can be designed to display images in different planes. Experimental and simulated results verify the feasibility of this method. Experimental results show that the diffraction efficiencies of the red, green and blue HOEs are 75.2%, 73.1% and 67.5%, respectively, and that the size of the HOEs is 20 cm × 15 cm. Moreover, the red, green and blue HOEs display images at depths of 150 cm, 500 cm and 1000 cm, respectively. In addition, the field of view (FOV) and eye-box (EB) of the system are 12° × 10° and 9.5 cm × 11.2 cm. Furthermore, the light transmittance of the system reaches 60%. It is believed that this technique can be applied to augmented reality navigation displays for vehicles and aviation.

💡 Research Summary

The paper addresses the inherent limitations of conventional head‑up displays (HUDs), namely their small active area and single‑plane image projection, by introducing a multi‑plane, large‑area augmented‑reality (AR) HUD that relies on volume holographic optical elements (HOEs). The core concept exploits the strong angular and wavelength selectivity of volume HOEs: each HOE is recorded for a specific wavelength and incident angle, thereby diffracting only its designated laser light while remaining transparent to the others. In practice, three lasers—red (633 nm), green (532 nm), and blue (450 nm)—are emitted from a single picture generation unit (PGU). The red, green, and blue HOEs are fabricated on a 20 cm × 15 cm photopolymer slab and are engineered to focus the respective beams at distances of 150 cm, 500 cm, and 1000 cm from the viewer. This arrangement enables simultaneous presentation of depth‑segregated information without requiring the user to refocus the eye, a key advantage for automotive and aviation applications where glance time must be minimized.
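One way to compare the three depth planes is to express each distance as an optical vergence in diopters (the reciprocal of the distance in meters). The short Python sketch below does this for the distances quoted above. The dictionary and function names are illustrative and are not taken from the paper.

```python
# Virtual image distances reported in the paper, in centimeters.
PLANE_DISTANCES_CM = {"red": 150.0, "green": 500.0, "blue": 1000.0}


def vergence_diopters(distance_cm: float) -> float:
    """Return the vergence in diopters (1 / meters) for a distance in cm."""
    return 100.0 / distance_cm


# Vergence of each color plane, keyed by color name.
vergences = {color: vergence_diopters(d) for color, d in PLANE_DISTANCES_CM.items()}

for color, v in vergences.items():
    print(f"{color}: {v:.3f} D")
```

Under this convention the red plane at 150 cm sits roughly 0.57 D closer than the blue plane at 1000 cm, which gives a sense of how far apart the planes are in accommodation terms.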

Experimental results demonstrate high diffraction efficiencies: 75.2 % for red, 73.1 % for green, and 67.5 % for blue. These values are substantially higher than those typically achieved with thin‑film diffractive optics, confirming the benefit of volume holography for energy‑efficient beam steering. The system’s overall optical transmittance reaches 60 %, indicating that a significant portion of ambient light passes through the HOE stack, preserving scene visibility and contrast. The field of view (FOV) is measured at 12° × 10°, and the eye‑box (usable viewing region) is 9.5 cm × 11.2 cm, both of which exceed the specifications of most commercial HUDs, which often provide sub‑5° FOV and eye‑boxes on the order of a few centimeters.
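To put the angular FOV in concrete terms, the sketch below converts 12° × 10° into the linear extent of the virtual image at each reported depth, using the flat-plane relation width = 2·d·tan(FOV/2). It assumes the full FOV is available at every plane and that the image is centered on the line of sight. The paper does not state either assumption, and the function name is hypothetical.

```python
import math

FOV_DEG = (12.0, 10.0)               # horizontal, vertical FOV reported in the paper
DEPTHS_CM = (150.0, 500.0, 1000.0)   # red, green, blue image planes


def image_extent_cm(depth_cm, fov_deg=FOV_DEG):
    """Return (width, height) in cm subtended by fov_deg at depth_cm."""
    return tuple(
        2.0 * depth_cm * math.tan(math.radians(angle) / 2.0) for angle in fov_deg
    )


# Linear image extent at each depth plane, keyed by depth in cm.
extents = {d: image_extent_cm(d) for d in DEPTHS_CM}

for d, (w, h) in extents.items():
    print(f"{d:.0f} cm plane: {w:.1f} cm x {h:.1f} cm")
```

At 150 cm this gives roughly 31.5 cm × 26.2 cm, and the extent scales linearly with depth, so the 1000 cm plane spans about 2.1 m horizontally under the same assumptions.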

From an architectural standpoint, the design is remarkably simple: a single PGU supplies the three primary colors, and three volume HOEs perform both wavelength separation and depth projection. This reduces the number of optical components, alignment steps, and overall system cost compared to multi‑projector or multi‑lens configurations. However, the approach imposes strict requirements on laser spectral purity and precise angular alignment during assembly, as any cross‑talk would degrade image quality. Moreover, the current implementation offers fixed focal distances; dynamic depth modulation would require either tunable HOEs (e.g., electro‑optic or thermally responsive photopolymers) or mechanically adjustable recording geometries.

The authors discuss several avenues for future work. First, integrating electrically controllable volume holograms could enable real‑time focal‑plane switching, allowing the HUD to adapt to varying situational demands (e.g., near‑field navigation cues versus far‑field horizon lines). Second, stacking multiple HOE layers could broaden the FOV and enlarge the eye‑box further, though careful management of inter‑layer diffraction and cumulative loss would be necessary. Third, coupling the system with high‑speed digital micromirror devices (DMDs) or liquid‑crystal on silicon (LCoS) modulators could provide dynamic, high‑resolution imagery, turning the HUD into a full‑featured AR display rather than a static indicator.

In conclusion, the study demonstrates that volume HOEs can serve as a compact, high‑efficiency, multi‑depth projection platform for AR HUDs. By achieving large display area, high diffraction efficiency, substantial transmittance, and a generous eye‑box, the proposed system overcomes many of the practical constraints of existing HUD technologies. Its scalability and potential for integration with adaptive holographic materials position it as a promising candidate for next‑generation vehicle and aircraft navigation displays, as well as for broader AR applications in industrial, medical, and training environments.