Extended pipeline for content-based feature engineering in music genre recognition

We present a feature engineering pipeline for the construction of musical signal characteristics, to be used for the design of a supervised model for musical genre identification. The key idea is to extend the traditional two-step process of extraction and classification with additive stand-alone phases which are no longer organized in a waterfall scheme. The whole system is realized by traversing backtrack arrows and cycles between various stages. In order to give a compact and effective representation of the features, the standard early temporal integration is combined with other selection and extraction phases: on the one hand, the selection of the most meaningful characteristics based on information gain, and on the other hand, the inclusion of the nonlinear correlation between this subset of features, determined by an autoencoder. The results of the experiments conducted on GTZAN dataset reveal a noticeable contribution of this methodology towards the model’s performance in classification task.

💡 Research Summary

**

The paper addresses a well‑known limitation in music genre classification systems: the traditional two‑step pipeline that first extracts features from audio signals and then feeds them directly into a classifier. This sequential “extraction‑then‑classification” design often leads to loss of information, especially regarding nonlinear relationships among features, and it does not allow intermediate stages to influence earlier processing steps.

To overcome these drawbacks, the authors propose an extended, non‑linear, multi‑cycle feature‑engineering pipeline that introduces back‑tracking arrows and loops, thereby allowing later stages to feed information back to earlier ones. The pipeline consists of four main components:

-

Short‑time feature extraction – Using 50 ms frames with 50 % overlap, 14 basic physical and perceptual descriptors (compactness, energy, RMS, zero‑crossing rate, 26 MFCCs, chroma vectors, LPCs, spectral descriptors, beat‑related measures, etc.) are computed. Derivatives of each descriptor are also calculated.

-

Early temporal integration – A texture window of 1 second aggregates the short‑time values by computing mean and standard deviation (Mean‑Var model). This yields a medium‑time representation for each descriptor.

-

Information‑gain based feature selection – The medium‑time vectors are fed to an intermediate Random Forest classifier. For each attribute, the sum of information gain across all tree splits is calculated. Only attributes with positive contribution are retained, producing a compact yet highly predictive subset of features.

-

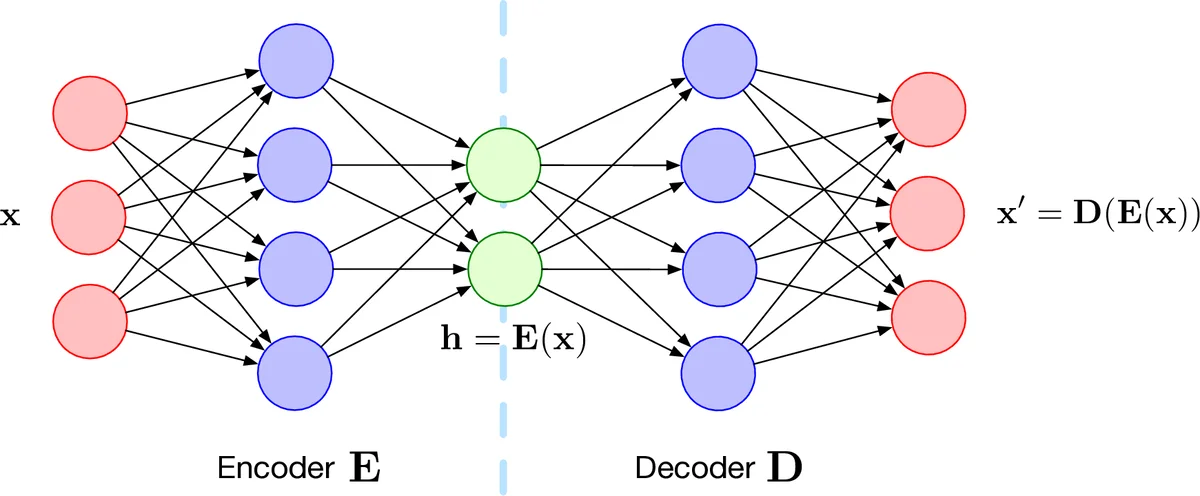

Non‑linear compression via autoencoder – The selected features (190‑dimensional after concatenation of means, standard deviations, and derivatives) are input to a symmetric autoencoder with architecture 190‑60‑20‑60‑190, using PReLU activations and dropout (0.2). The 20‑dimensional bottleneck layer captures nonlinear correlations among the selected descriptors. Its output is concatenated with the original selected features, forming the final feature vector.

The final vector is normalized to the

Comments & Academic Discussion

Loading comments...

Leave a Comment