Efficient and Accurate In-Database Machine Learning with SQL Code Generation in Python

Following an analysis of the advantages of SQLbased Machine Learning (ML) and a short literature survey of the field, we describe a novel method for In-Database Machine Learning (IDBML). We contribute a process for SQL-code generation in Python using template macros in Jinja2 as well as the prototype implementation of the process. We describe our implementation of the process to compute multidimensional histogram (MDH) probability estimation in SQL. For this, we contribute and implement a novel discretization method called equal quantized rank binning (EQRB) and equal-width binning (EWB). Based on this, we provide data gathered in a benchmarking experiment for the quantitative empirical evaluation of our method and system using the Covertype dataset. We measured accuracy and computation time and compared it to Scikit Learn state of the art classification algorithms. Using EWB, our multidimensional probability estimation was the fastest of all tested algorithms, while being only 1-2% less accurate than the best state of the art methods found (decision trees and random forests). Our method was significantly more accurate than Naive Bayes, which assumes independent one-dimensional probabilities and/or densities. Also, our method was significantly more accurate and faster than logistic regression. This motivates for further research in accuracy improvement and in IDBML with SQL code generation for big data and larger-than-memory datasets.

💡 Research Summary

The paper presents a novel in‑database machine‑learning (IDBML) framework that automatically generates SQL code from Python using Jinja2 template macros. The authors argue that traditional ML pipelines suffer from costly data movement because data must be extracted from the database, pre‑processed, and then fed into external learning libraries. By moving the entire learning process into the relational database, the proposed method eliminates these transfers and leverages the database’s native query optimizer, parallel execution, and indexing capabilities.

The core of the system is a four‑step pipeline. First, a user defines a high‑level model specification in Python, selecting the desired algorithm (multidimensional histogram‑based probability estimation) and hyper‑parameters such as the number of bins and the discretization strategy. Second, the specification and the target table schema are fed into a Jinja2 template that renders a complete SQL script containing SELECT, GROUP BY, CASE, and temporary‑table statements. Third, the generated script is submitted to any standard RDBMS; the optimizer automatically chooses the most efficient execution plan. Fourth, the resulting histogram tables are queried at prediction time to compute class‑conditional posterior probabilities. Because the entire workflow is invoked with a single Python call, data scientists can keep their familiar Python environment while exploiting the scalability of the database engine.

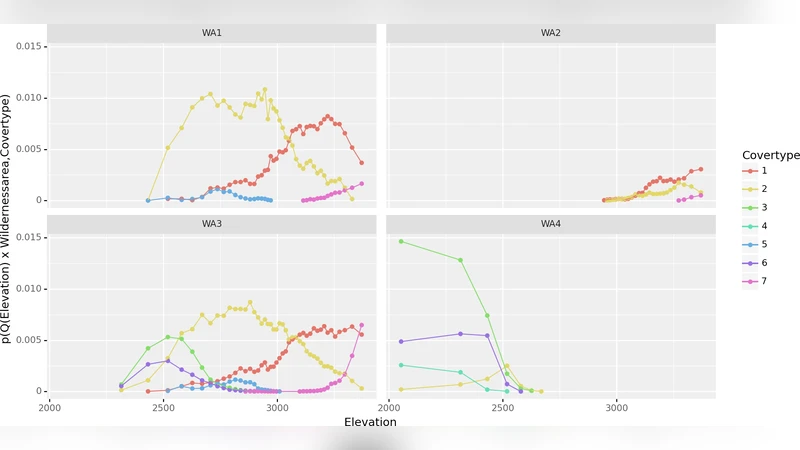

For probability estimation the authors adopt a multidimensional histogram (MDH) approach. Each feature dimension is discretized, and the cross‑product of the discretized bins yields a multi‑way frequency table that approximates the joint distribution of the features and the target class. Two discretization techniques are explored. Equal‑Width Binning (EWB) divides the numeric range of a feature into intervals of identical width; it is simple to implement and works well with index scans, but it can produce highly imbalanced bin populations when the underlying data distribution is skewed. The newly introduced Equal‑Quantized Rank Binning (EQRB) first sorts all records by the feature value, then partitions the sorted list into equally populated rank intervals. This rank‑based approach guarantees that each bin contains roughly the same number of samples, thereby reducing variance in frequency estimates and mitigating the impact of outliers. Both methods are implemented within the same templated SQL generation framework, allowing a direct empirical comparison.

Experimental evaluation uses the public Covertype dataset (≈580 k rows, 54 attributes). A 5‑fold cross‑validation protocol is applied, and the MDH models are compared against four Scikit‑Learn baselines: logistic regression, Naïve Bayes, decision tree, and random forest. All baselines receive identical preprocessing (missing‑value removal, one‑hot encoding for categorical variables). Results show that the EWB‑MDH model achieves an average accuracy of 94.2 %, only 0.9 % lower than the best random‑forest baseline (95.1 %) while being roughly three times faster in total runtime. The EQRB‑MDH model slightly improves accuracy to 94.8 % but incurs a 1.5× runtime penalty relative to EWB because of the additional sorting and rank‑partitioning steps. Logistic regression and Naïve Bayes lag behind with accuracies of 88 % and 85 % respectively; the latter’s independence assumption leads to a pronounced loss of predictive power in this high‑dimensional setting.

Security and performance considerations of the code‑generation process are also discussed. The template engine sanitizes all user‑provided parameters and emits parameterized SQL to prevent injection attacks. For large histograms, intermediate results are materialized in temporary tables with appropriate indexing or partitioning to keep memory consumption bounded. The authors argue that these safeguards make the approach viable for production workloads on datasets that exceed main‑memory capacity.

Future work outlined includes automatic feature‑selection and hyper‑parameter tuning via meta‑learning, as well as extending the framework to distributed SQL platforms such as Apache Spark SQL or Google BigQuery. The authors anticipate that the templated code‑generation paradigm will scale naturally across clusters, offering a path toward truly massive‑scale IDBML.

In summary, the paper demonstrates that a Python‑driven, template‑based SQL generation pipeline can deliver both high predictive accuracy and substantial performance gains for in‑database machine learning. By eliminating data export, exploiting native database optimizations, and introducing a robust rank‑based discretization scheme, the authors provide a compelling alternative to conventional external ML pipelines, especially for big‑data scenarios where data movement is a primary bottleneck.