Selection Heuristics on Semantic Genetic Programming for Classification Problems

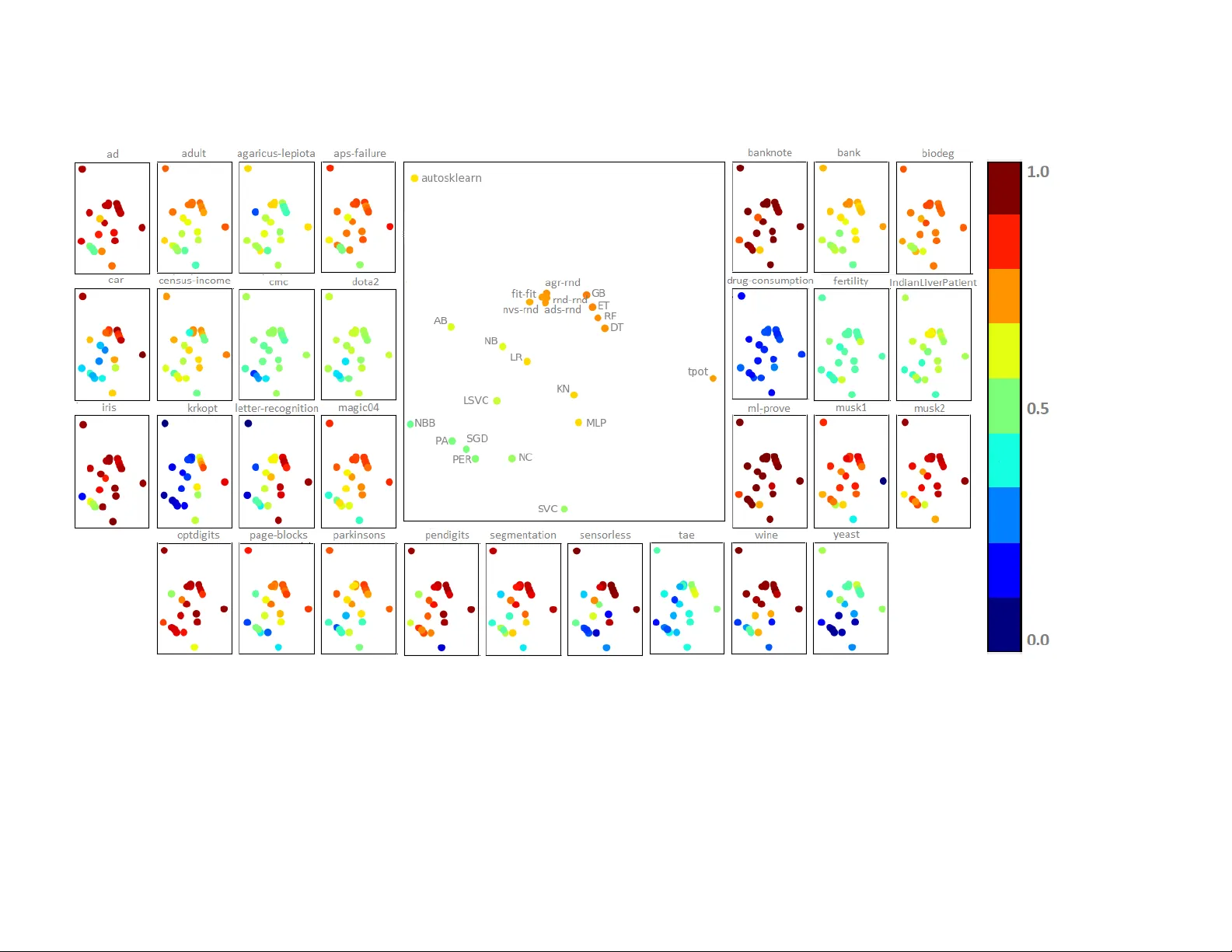

The semantics of individuals has been used to guide the learning process of Genetic Programming when solving supervised learning problems. Semantics has been used to propose novel genetic operators as well as different ways of performing parent selecti…

Authors: Claudia N. Sanchez, Mario Graff