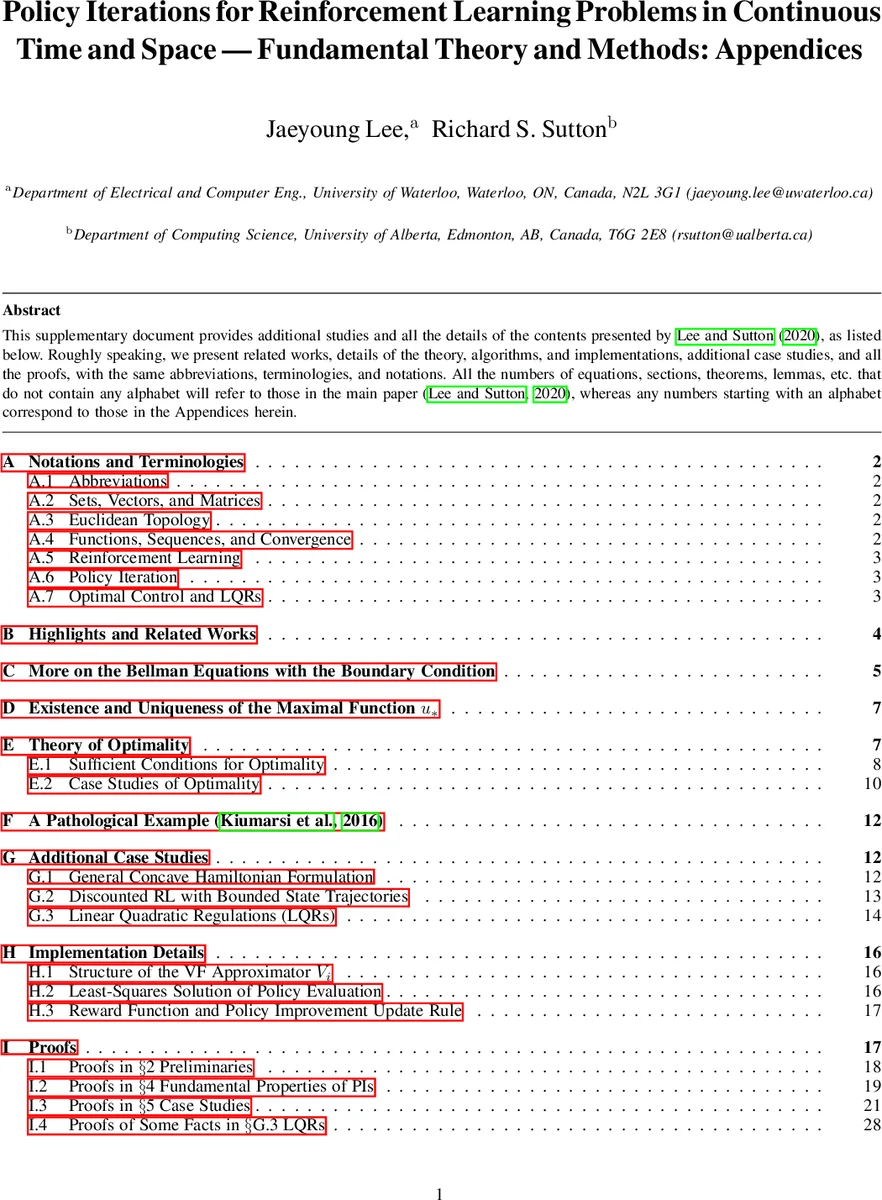

Policy Iterations for Reinforcement Learning Problems in Continuous Time and Space -- Fundamental Theory and Methods

Policy iteration (PI) is a recursive process of policy evaluation and improvement for solving an optimal decision-making/control problem, or in other words, a reinforcement learning (RL) problem. PI has also served as the foundation for developing RL methods. In this paper, we propose two PI methods, called differential PI (DPI) and integral PI (IPI), and their variants, for a general RL framework in continuous time and space (CTS), where the environment is modeled by a system of ordinary differential equations (ODEs). The proposed methods inherit the current ideas of PI in classical RL and optimal control and theoretically support the existing RL algorithms in CTS: TD-learning and value-gradient-based (VGB) greedy policy update. We also provide case studies including 1) discounted RL and 2) optimal control tasks. Fundamental mathematical properties – admissibility, uniqueness of the solution to the Bellman equation (BE), monotone improvement, convergence, and optimality of the solution to the Hamilton-Jacobi-Bellman equation (HJBE) – are all investigated in depth and improved over the existing theory, in both the general setting and the case studies. Finally, the proposed methods are simulated on an inverted-pendulum model, in both model-based and partially model-free implementations, to support the theory and to investigate their behavior beyond it.

💡 Research Summary

This paper addresses the gap between classical reinforcement learning (RL) methods, which are formulated for discrete‑time and discrete‑space Markov decision processes, and the continuous‑time, continuous‑space (CTS) environments that most physical control problems inhabit. Modeling the environment as a system of ordinary differential equations (ODEs), the authors develop a general RL framework that imposes only minimal assumptions (existence and uniqueness of trajectories, and continuity of the dynamics and reward) while allowing any discount factor γ ∈ (0,1], including the undiscounted case γ = 1. Within this framework they introduce two policy‑iteration (PI) schemes, Differential PI (DPI) and Integral PI (IPI), together with a set of variants that bridge the theory of optimal control with modern RL algorithms.
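For concreteness, the objective in such a framework can be written as follows. The notation (f for the dynamics, r for the reward, π for the policy, α := −ln γ) is assumed here for illustration rather than quoted from the paper:

```latex
\dot{x}_t = f(x_t, u_t), \quad u_t = \pi(x_t), \quad x_0 = x,
\qquad
v_\pi(x) = \int_0^{\infty} \gamma^{t}\, r(x_t, u_t)\, dt
         = \int_0^{\infty} e^{-\alpha t}\, r(x_t, u_t)\, dt,
\qquad \alpha := -\ln \gamma \ge 0 .
```

Setting γ = 1 gives α = 0 and an undiscounted integral, which is finite only for suitably behaved policies and rewards.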

Differential PI (DPI) is a model‑based method. For a given policy π, DPI solves the differential Bellman equation α·v_π(x) = r(x,π(x)) + ∇v_π(x)·f(x,π(x)), with α = −ln γ (the continuous‑time analogue of the Bellman equation), to obtain the value function v_π. The policy is then improved by maximizing the Hamiltonian H(x,∇v_π(x),u) = r(x,u) + ∇v_π(x)·f(x,u) over the admissible action set at each state x. This step yields a new policy π′, and the process repeats.
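To make the evaluate‑then‑improve loop concrete, here is a minimal sketch on a hypothetical scalar problem, chosen only because both steps have closed forms: dynamics ẋ = a·x + b·u, reward r(x,u) = −(q·x² + R·u²), and linear policies u = −k·x. For such a policy the value function is v(x) = −p·x², and the differential Bellman equation α·v = r + v′·f reduces to p = (q + R·k²) / (α − 2(a − b·k)), valid while the denominator is positive (the admissibility condition in this example). Maximizing the Hamiltonian over u gives the improved gain k′ = b·p / R. The problem, parameter values, and function names are illustrative assumptions, not the paper's inverted‑pendulum setup.

```python
import math


def evaluate_dpi(k, a, b, q, R, alpha):
    """Policy evaluation: solve alpha * v(x) = r(x, -k x) + v'(x) * f(x, -k x)
    for v(x) = -p x^2, which gives p = (q + R k^2) / (alpha - 2 (a - b k))."""
    denom = alpha - 2.0 * (a - b * k)
    if denom <= 0.0:
        raise ValueError("policy is not admissible: its value is infinite")
    return (q + R * k * k) / denom


def improve(p, b, R):
    """Policy improvement: the u-dependent part of the Hamiltonian,
    -R u^2 - 2 p x b u, is maximized at u = -(b p / R) x."""
    return b * p / R


def dpi(k0, a, b, q, R, alpha, iterations=8):
    """Alternate exact evaluation and greedy improvement, starting from gain k0."""
    gains, ps = [k0], []
    k = k0
    for _ in range(iterations):
        p = evaluate_dpi(k, a, b, q, R, alpha)
        ps.append(p)
        k = improve(p, b, R)
        gains.append(k)
    return gains, ps


# Hypothetical example: the open loop is unstable (a = 1 > 0) and the initial
# policy u = 0 (k0 = 0) is NOT stabilizing, yet it is admissible since alpha > 2a.
a, b, q, R, alpha = 1.0, 1.0, 1.0, 1.0, 3.0
gains, ps = dpi(0.0, a, b, q, R, alpha)

# Positive root of (b^2/R) p^2 + (alpha - 2a) p - q = 0, the HJB solution here.
c2, c1, c0 = b * b / R, alpha - 2.0 * a, -q
p_star = (-c1 + math.sqrt(c1 * c1 - 4.0 * c2 * c0)) / (2.0 * c2)
print(ps[:3], p_star)
```

Because v(x) = −p·x², a decreasing sequence of p is exactly a pointwise increasing sequence of value functions. In this linear‑quadratic special case the loop is a discounted, scalar form of Kleinman's Newton‑type iteration for the Riccati equation.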

Integral PI (IPI) is partially model‑free. It rewrites the Bellman equation in an integral (temporal‑difference) form, v_π(x_t) = ∫_t^{t+T} γ^{τ−t} r(x_τ,u_τ) dτ + γ^T v_π(x_{t+T}) for any T > 0, so the value function can be estimated from sampled trajectories (states and rewards) without knowledge of the dynamics. The policy‑improvement step is the same Hamiltonian maximization as in DPI and therefore still needs part of the model, namely the input‑coupling part of f. Because the evaluation step can be carried out with Monte‑Carlo or other trajectory‑based data, IPI suits settings where the dynamics are unknown or only partially known.
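A minimal sketch of the integral idea, on a hypothetical scalar linear‑quadratic problem assumed for illustration: ẋ = a·x + b·u, r = −(q·x² + R·u²), linear policies u = −k·x, and v(x) = −p·x². Over a window of length T the integral Bellman equation becomes p·(x_t² − e^{−αT}·x_{t+T}²) = −∫_0^T e^{−αs} r ds, so p can be fitted by least squares from sampled states and rewards alone. The drift coefficient a is used only inside the black‑box simulator that generates the data; the learner‑side evaluation never reads it, and the improvement step uses only the input coefficient b. The forward‑Euler simulator, window length, and all names are illustrative choices, not the paper's implementation.

```python
import math


def rollout(x0, k, a, b, dt, steps):
    """Black-box environment (forward Euler) under the policy u = -k x.
    Only the sampled states are handed to the learner."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x + dt * (a * x + b * (-k * x)))
    return xs


def evaluate_ipi(xs, k, q, R, alpha, dt, window):
    """Fit p in v(x) = -p x^2 from data via the integral Bellman equation
    p * (x_t^2 - e^{-alpha T} x_{t+T}^2) = -(discounted reward integral)."""
    T = window * dt
    num = den = 0.0
    for start in range(0, len(xs) - window, window):
        # Discounted reward integral over one window (left Riemann sum).
        reward_integral = 0.0
        for j in range(window):
            x = xs[start + j]
            u = -k * x
            reward = -(q * x * x + R * u * u)
            reward_integral += math.exp(-alpha * j * dt) * reward * dt
        phi = xs[start] ** 2 - math.exp(-alpha * T) * xs[start + window] ** 2
        num += phi * (-reward_integral)
        den += phi * phi
    return num / den  # least-squares estimate of p


def ipi(k0, a, b, q, R, alpha, dt=1e-3, steps=1000, window=100, iterations=6):
    """Integral PI: data-based evaluation, then greedy improvement using only b."""
    k, ps = k0, []
    for _ in range(iterations):
        xs = rollout(1.0, k, a, b, dt, steps)              # collect data under policy k
        p = evaluate_ipi(xs, k, q, R, alpha, dt, window)   # model-free evaluation
        ps.append(p)
        k = b * p / R                                      # improvement needs only b
    return k, ps


k_final, ps = ipi(0.0, a=1.0, b=1.0, q=1.0, R=1.0, alpha=3.0)
print(ps)
```

The estimates carry an O(dt) bias from the Euler simulation and the Riemann sum, so they approach the exact continuous‑time values only as dt shrinks.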

The authors rigorously prove several fundamental properties for both schemes:

- Admissibility – a policy is admissible if its value function is finite at every state. Under a bounded‑reward assumption r(x,u) ≤ r_max and γ < 1, every admissible policy's value function is bounded above by v̄ = r_max/α, where α = −ln γ > 0, since ∫_0^∞ γ^t r_max dt = r_max/α. When γ = 1 (α = 0) this formula breaks down, and the bound is instead v̄ = 0, which holds when the rewards are nonpositive.

- Uniqueness of the Bellman solution – for any admissible policy, the associated Bellman equation has a unique solution in the class of continuously differentiable (C¹) functions.

- Monotone improvement – each policy‑improvement step produces a new policy π′ whose value function dominates the previous one pointwise, v_π′(x) ≥ v_π(x) for every state x, so the value functions form a non‑decreasing sequence.

- Convergence – being monotone and bounded above, the sequence of value functions converges pointwise to a limit v*; the corresponding policies converge to a policy π*, and v* satisfies the Hamilton‑Jacobi‑Bellman equation (HJBE).

- Optimality – π* is optimal for the original RL problem: at every state it maximizes the Hamiltonian H(x,∇v*(x),u), so v* solves the HJBE and equals the optimal value function.
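With α = −ln γ, the Hamiltonian H(x,∇v(x),u) = r(x,u) + ∇v(x)·f(x,u), and an action set 𝒰 (notation assumed here for illustration), the properties listed above can be summarized as a monotone chain of value functions whose limit solves the HJBE, with π* greedy with respect to v*:

```latex
v_{\pi_0}(x) \le v_{\pi_1}(x) \le \cdots \le v_{\pi_i}(x) \;\longrightarrow\; v_*(x)
\quad \text{for every state } x,
\\[4pt]
\alpha\, v_*(x) = \max_{u \in \mathcal{U}} \Big[\, r(x,u) + \nabla v_*(x)\, f(x,u) \,\Big],
\qquad
\pi_*(x) \in \arg\max_{u \in \mathcal{U}} \Big[\, r(x,u) + \nabla v_*(x)\, f(x,u) \,\Big].
```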

To illustrate the theory, the paper presents four case studies:

- Concave Hamiltonian – where the Hamiltonian is concave in the control, guaranteeing global optimality of the improvement step.

- Discounted RL with bounded reward – demonstrating that the framework handles γ < 1 without additional stability constraints.

- Locally Lipschitz dynamics – showing that even when the dynamics are only locally Lipschitz, the PI schemes remain well‑defined and converge.

- Nonlinear optimal control – applying DPI and IPI to classic control problems such as the inverted pendulum.
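The bounded‑reward point can be checked numerically on a hypothetical scalar example (an assumption, not one of the paper's case studies). Under the policy u = 0, the system ẋ = x diverges like e^t, so the policy is not stabilizing. With the bounded reward r(x) = −x²/(1 + x²) ∈ (−1, 0] and γ < 1, the discounted value still satisfies |v| ≤ 1/α, and truncating the integral at a horizon H changes it by at most e^{−αH}/α. The function name, reward, and γ = 0.5 are illustrative choices.

```python
import math


def discounted_value(x0, gamma, horizon, dt=1e-3):
    """Truncated discounted value of the policy u = 0 on the hypothetical
    unstable system dx/dt = x, with bounded reward r(x) = -x^2 / (1 + x^2)."""
    alpha = -math.log(gamma)
    x, value = x0, 0.0
    for j in range(int(round(horizon / dt))):
        reward = -x * x / (1.0 + x * x)          # always in (-1, 0]
        value += math.exp(-alpha * j * dt) * reward * dt
        x += dt * x                              # forward Euler; |x| grows like e^t
    return value


gamma = 0.5
alpha = -math.log(gamma)
v20 = discounted_value(1.0, gamma, horizon=20.0)
v40 = discounted_value(1.0, gamma, horizon=40.0)
print(v20, v40, -1.0 / alpha)
```

Doubling the horizon barely changes the value, illustrating that discounting alone, without a stabilizing policy, keeps the value finite.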

Simulation results on an inverted‑pendulum model validate the theoretical claims. DPI (model‑based) converges rapidly when the full dynamics are known, while IPI (partially model‑free) successfully learns an optimal policy from trajectory data even when the initial policy is not stabilizing. The experiments also explore “bang‑bang” control and binary‑reward RL scenarios, indicating the flexibility of the proposed methods beyond the strict assumptions of earlier continuous‑time PI literature.
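One standard way a bang‑bang policy can arise from the greedy (Hamiltonian‑maximizing) update is sketched here under assumptions not stated in the summary. Suppose the dynamics are input‑affine, f(x,u) = f0(x) + g(x)·u, the reward does not depend on u, and the action set is the interval [−u_max, u_max]. Then H(x,∇v(x),u) is affine in u and is maximized at an endpoint, u = u_max·sign(∇v(x)·g(x)). The pendulum‑like vector field, the value gradient, and all function names below are hypothetical.

```python
import math


def greedy_bang_bang(grad_v, g_x, u_max):
    """Maximize H = r(x) + grad_v . (f0(x) + g(x) u) over |u| <= u_max.
    H is affine in u with slope grad_v . g(x), so an endpoint is optimal."""
    slope = sum(gv * gi for gv, gi in zip(grad_v, g_x))
    if slope > 0.0:
        return u_max
    if slope < 0.0:
        return -u_max
    return 0.0  # H is flat in u here; any admissible u is optimal, 0 by convention


def hamiltonian(x, grad_v, u, f0, g, r):
    """H(x, grad_v, u) = r(x) + grad_v . (f0(x) + g(x) u) for input-affine dynamics."""
    return r(x) + sum(gv * (fi + gi * u) for gv, fi, gi in zip(grad_v, f0(x), g(x)))


# Hypothetical pendulum-like model: state (theta, omega), torque enters omega only.
def f0(x):
    return (x[1], math.sin(x[0]))


def g(x):
    return (0.0, 1.0)


def r(x):
    return math.cos(x[0])  # assumed reward with no control cost


x = (0.3, -0.2)
grad_v = (-1.5, -0.8)  # made-up value gradient at x, for illustration only
u_star = greedy_bang_bang(grad_v, g(x), u_max=2.0)

# Sanity check against brute force over a grid of admissible actions.
grid = [0.1 * k for k in range(-20, 21)]
u_grid = max(grid, key=lambda u: hamiltonian(x, grad_v, u, f0, g, r))
print(u_star, u_grid)
```

Adding a control cost that is strictly concave in u (for example −R·u²) would instead make the maximizer interior and continuous, which is the smooth counterpart of this switching rule.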

In summary, the paper establishes a unified, mathematically rigorous policy‑iteration framework for continuous‑time, continuous‑space reinforcement learning. By removing the need for an initially stabilizing policy and by allowing any discount factor γ ∈ (0,1], it broadens the applicability of PI to a wide class of RL and optimal‑control problems. The connection to TD‑learning and value‑gradient‑based greedy updates further integrates the proposed methods with existing RL algorithms, offering both theoretical insight and practical tools for researchers and practitioners working with real‑world dynamical systems.
