Model-based testing in practice: An experience report from the web applications domain

In the context of a large software testing company, we have deployed the model-based testing (MBT) approach to take the company’s test automation practices to higher levels of maturity and capability. From a set of open-source and commercial MBT tools, we chose an open-source tool named GraphWalker, and we have pragmatically used MBT for end-to-end test automation of several large web and mobile applications under test. So far in our project, the MBT approach has provided various tangible and intangible benefits: improved test coverage (number of paths tested), improved test-design practices, and improved real-fault detection effectiveness. The goal of this experience report (an applied research report), conducted using “action research”, is to share our experience of applying and evaluating MBT as a software technology (technique and tool) in a real industrial setting. We aim to contribute to the body of empirical evidence on the industrial application of MBT by sharing our industry-academia project on applying MBT in practice, the insights we have gained, and the challenges and questions we have faced and tackled so far. We give an overview of the industrial setting, provide motivation, explain the events leading to the outcomes, discuss the challenges faced, summarize the outcomes, and conclude with lessons learned, take-away messages, and practical advice based on the described experience. By learning from the best practices in this paper, other test engineers could conduct more mature MBT in their own test projects.

💡 Research Summary

This paper presents an experience report on the industrial adoption of Model‑Based Testing (MBT) within a large software testing company. The authors selected the open‑source tool GraphWalker after evaluating a range of commercial and open‑source MBT solutions on criteria such as licensing cost, community support, modeling expressiveness, and integration capability with existing test automation frameworks. The study follows an action‑research methodology, documenting the entire lifecycle from initial motivation to lessons learned.

The motivation stemmed from limitations of the company’s existing test automation practice, which relied heavily on hand‑crafted scripts. Problems identified included low test‑design coverage, a widening gap between test documentation and executable code, and sub‑optimal fault‑detection effectiveness, especially for complex end‑to‑end user flows in large web and mobile applications. To raise the maturity and capability of their test automation, the team introduced MBT to generate test cases automatically from formal models of the system under test.

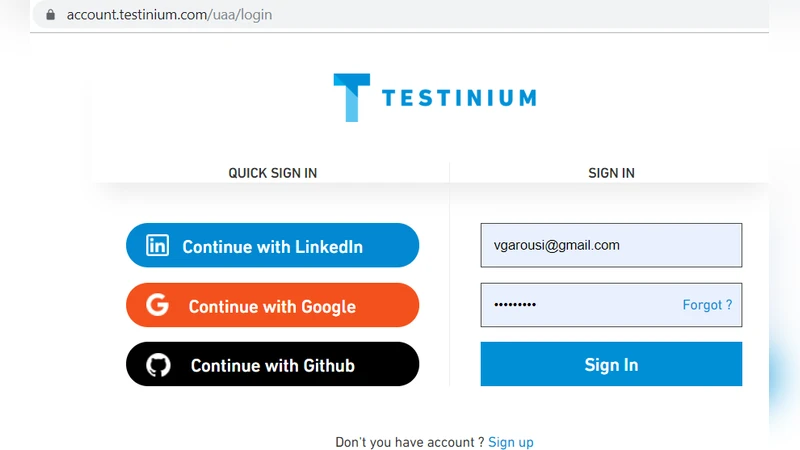

Implementation began with a series of “modeling workshops” that brought together domain experts, test engineers, and developers. Core business scenarios were extracted from requirements and expressed as finite‑state machines (FSMs), i.e., directed graphs whose vertices represent system states and whose edges represent user actions. GraphWalker’s graph‑based models, combined with its path generators (e.g., random walk) and stop conditions (e.g., a target percentage of edge coverage), enabled systematic exploration of the state space. The authors faced three major technical challenges during modeling: (1) model explosion due to intricate business logic, (2) frequent UI identifier changes that broke model‑to‑UI mappings, and (3) integration friction with the existing Selenium/Appium‑based automation stack. To mitigate these issues, they introduced hierarchical sub‑models, abstracted UI locators through a page‑object‑style layer, and developed custom GraphWalker plugins that translated generated paths into executable Selenium scripts.
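The modeling-and-execution pipeline described above can be illustrated with a small, self-contained sketch. It does not use GraphWalker’s API; it re-implements the core idea in plain Python under stated assumptions: a hypothetical login/search model expressed as a directed graph, a random-walk generator that stops once every edge has been traversed (roughly what GraphWalker expresses as `random(edge_coverage(100))`), and a page-object-style layer through which each generated edge is dispatched. `RecordingDriver` is a hypothetical stand-in for a Selenium or Appium driver, all names, locators, and credentials are illustrative, and hierarchical sub-models are not shown.

```python
import random
from typing import Callable, Dict, List, Tuple

# (edge name, source vertex, target vertex): vertices are UI states,
# edges are user actions. This login/search model is hypothetical.
Edge = Tuple[str, str, str]

MODEL: List[Edge] = [
    ("e_open_app", "v_start", "v_login_page"),
    ("e_valid_login", "v_login_page", "v_home"),
    ("e_invalid_login", "v_login_page", "v_login_page"),
    ("e_search", "v_home", "v_results"),
    ("e_back_home", "v_results", "v_home"),
    ("e_logout", "v_home", "v_login_page"),
]


def random_walk_until_edge_coverage(model: List[Edge], start: str,
                                    seed: int = 0,
                                    max_steps: int = 10_000) -> List[str]:
    """Random-walk generator with a 100% edge-coverage stop condition:
    pick a random outgoing edge at each step until all edges are covered."""
    rng = random.Random(seed)  # seeded, so a failing path can be replayed
    outgoing: Dict[str, List[Edge]] = {}
    for edge in model:
        outgoing.setdefault(edge[1], []).append(edge)
    uncovered = {name for name, _, _ in model}
    current, path = start, []
    while uncovered:
        if len(path) >= max_steps:
            raise RuntimeError("edge coverage not reached within max_steps")
        choices = outgoing.get(current)
        if not choices:
            raise ValueError(f"dead end at vertex {current}")
        name, _, current = rng.choice(choices)
        path.append(name)
        uncovered.discard(name)
    return path


class RecordingDriver:
    """Hypothetical stand-in for a Selenium/Appium driver; it only records calls."""

    def __init__(self) -> None:
        self.log: List[str] = []

    def click(self, locator: str) -> None:
        self.log.append(f"click {locator}")

    def type_text(self, locator: str, text: str) -> None:
        self.log.append(f"type {locator} {text}")


class LoginPage:
    """Page-object-style layer: locators live only here, so a renamed UI id
    is fixed in one place instead of in every generated test."""
    USER, PASSWORD, SUBMIT = "id=username", "id=password", "id=login"

    def __init__(self, driver: RecordingDriver) -> None:
        self.driver = driver

    def login(self, user: str, password: str) -> None:
        self.driver.type_text(self.USER, user)
        self.driver.type_text(self.PASSWORD, password)
        self.driver.click(self.SUBMIT)


def run_path(path: List[str], driver: RecordingDriver) -> None:
    """Translate each generated edge name into a page-object or driver call."""
    login = LoginPage(driver)
    handlers: Dict[str, Callable[[], None]] = {
        "e_open_app": lambda: driver.click("id=open"),
        "e_valid_login": lambda: login.login("alice", "correct-pw"),
        "e_invalid_login": lambda: login.login("alice", "wrong-pw"),
        "e_search": lambda: driver.click("id=search"),
        "e_back_home": lambda: driver.click("id=home"),
        "e_logout": lambda: driver.click("id=logout"),
    }
    for edge_name in path:
        handlers[edge_name]()
```

For example, `run_path(random_walk_until_edge_coverage(MODEL, "v_start", seed=42), RecordingDriver())` executes one generated test. Keeping locators inside `LoginPage` addresses the second challenge in miniature: when a UI identifier changes, only the page object needs editing, not the model or the generated paths.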

Quantitative results show a substantial increase in test coverage: the number of distinct execution paths exercised rose by an average factor of 2.8 compared with the legacy script‑based approach. Fault‑detection effectiveness improved by 18 %, with a notable rise in early detection of boundary‑condition defects in multi‑step user journeys. Execution time initially increased due to the larger test suite, but parallelisation and test‑case reuse later yielded a 12 % reduction in overall run time. The effort required for model creation averaged three months of work by a small team (2–3 people), and ongoing model maintenance demanded roughly 1.2 × the staff effort of the previous automation process. Nevertheless, the qualitative benefits were significant: test design became more systematic, communication between stakeholders improved, and the model itself served as living documentation, reducing the onboarding time for new engineers by about 30 %.

The paper also discusses organizational and process challenges. Establishing a culture that values formal modeling required dedicated training and the appointment of “model champions.” Continuous alignment between the evolving system and its model was ensured through automated model‑validation scripts that run in the CI pipeline. The authors stress that without such validation, model drift can cause immediate test failures, undermining confidence in the MBT approach.
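The summary does not say what the automated model-validation scripts actually check. As a hedged sketch only, a CI step might run checks like the ones below against the model before any tests execute: every vertex is reachable from the start vertex, every edge has a test-code handler, and no handler is left over from a removed edge. The function name, the model format (the same hypothetical `(edge, source, target)` tuples as a plain graph), and the choice of checks are all assumptions for illustration.

```python
from collections import deque
from typing import Dict, Iterable, List, Set, Tuple

Edge = Tuple[str, str, str]  # (edge name, source vertex, target vertex)


def validate_model(model: List[Edge], start: str,
                   implemented: Iterable[str]) -> List[str]:
    """Return human-readable problems; an empty list means the model passes.

    Hypothetical checks: every vertex is reachable from the start vertex,
    every edge has a handler, and no handler is orphaned (stale)."""
    problems: List[str] = []
    outgoing: Dict[str, List[str]] = {}
    vertices: Set[str] = {start}
    for _, src, dst in model:
        outgoing.setdefault(src, []).append(dst)
        vertices.update((src, dst))

    # Breadth-first search from the start vertex to find reachable states.
    seen, queue = {start}, deque([start])
    while queue:
        for nxt in outgoing.get(queue.popleft(), []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    for vertex in sorted(vertices - seen):
        problems.append(f"unreachable vertex: {vertex}")

    # Compare model edges against the edge handlers present in test code.
    edge_names = {name for name, _, _ in model}
    impl = set(implemented)
    for name in sorted(edge_names - impl):
        problems.append(f"edge without implementation: {name}")
    for name in sorted(impl - edge_names):
        problems.append(f"implementation without edge (stale): {name}")
    return problems
```

In a pipeline, a small wrapper would print the returned problems and exit with a non-zero status when the list is non-empty, so that model drift fails the build early with a clear message instead of surfacing later as confusing test failures.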

In conclusion, the case study demonstrates that MBT, when coupled with a flexible open‑source tool like GraphWalker, can deliver tangible improvements in test coverage, defect detection, and overall test‑automation maturity for large‑scale web and mobile applications. Success hinges on (a) embedding modeling practices into the development lifecycle, (b) seamless integration with existing automation infrastructure, (c) robust model‑maintenance processes, and (d) continuous skill development for the testing team. The authors suggest future research directions including automated model extraction from code or specifications, AI‑driven optimisation of test‑generation strategies, and the combination of MBT with performance‑testing techniques.