Data-driven sparse skin stimulation can convey social touch information to humans

💡 Research Summary

The paper addresses the challenge of conveying social touch information through a low‑complexity, wearable haptic system. Because remote communication lacks much of the richness of in‑person interaction, the authors investigate whether a sparse representation, using only a few discrete points of skin stimulation, can transmit the meaning behind human social touch.

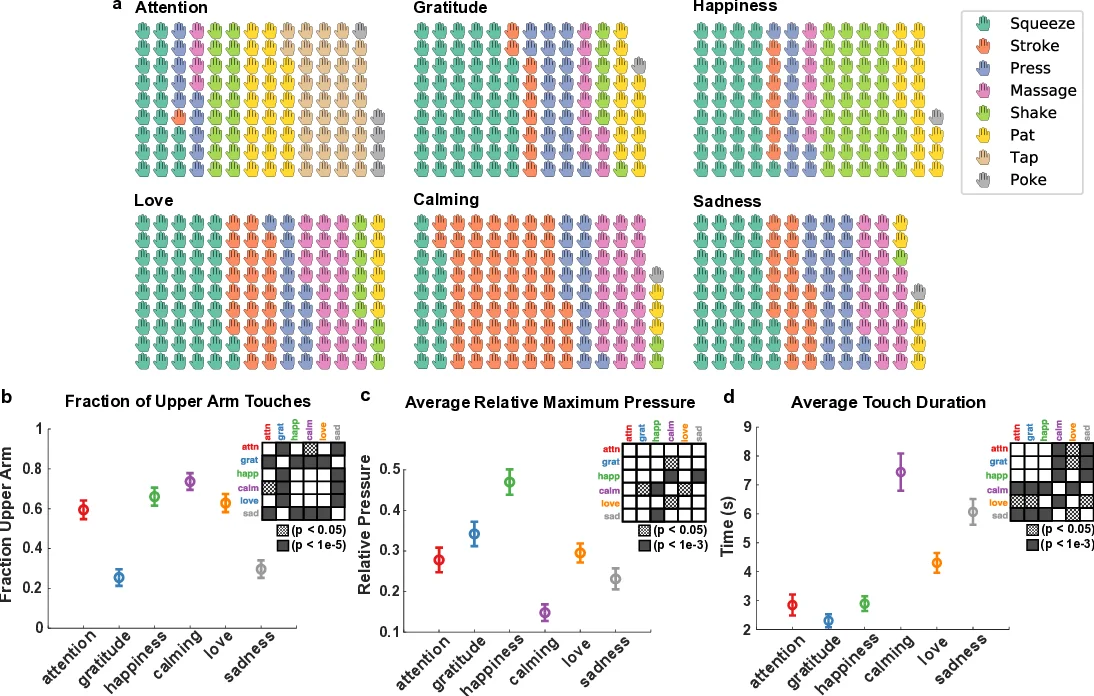

To this end, they first built a naturalistic social‑touch dataset. Twenty pairs of participants (friends or romantic partners) wore a soft, capacitive pressure‑sensor sleeve on the upper arm and forearm. For each of six pre‑defined scenarios—attention seeking, gratitude, happiness, calming, love, and sadness—participants were given an audio narrative and ambient lighting to set the context, then the “toucher” freely expressed the intended feeling by touching the sensor‑covered arm of the “receiver.” The sensor recorded pressure at 20 Hz with 1 in² spatial resolution, capturing 661 touch instances (after discarding a few corrupted recordings). Each instance was manually annotated with a set of gestures (e.g., tap, pat, stroke, shake) drawn from prior work, and statistical analyses revealed differences in pressure, duration, and arm region depending on scenario and relationship type.
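
As a rough illustration of the per-instance measures mentioned above (pressure, duration, contact region), the sketch below summarizes one recording stored as a list of pressure frames. The 20 Hz rate and 1 in² cell size come from the summary; the frame layout, the `CONTACT_THRESHOLD_PSI` noise floor, and the names `TouchSummary` and `summarize_touch` are assumptions made for this example and are not taken from the released dataset or code.

```python
from dataclasses import dataclass
from typing import List

SAMPLE_RATE_HZ = 20           # sampling rate stated in the summary
CELL_AREA_IN2 = 1.0           # each sensor cell covers about 1 in^2 (from the summary)
CONTACT_THRESHOLD_PSI = 0.1   # ASSUMED noise floor, not a value from the paper

Frame = List[List[float]]     # one pressure map in psi, indexed [row][col]


@dataclass
class TouchSummary:
    peak_psi: float
    duration_s: float
    max_contact_area_in2: float


def summarize_touch(frames: List[Frame]) -> TouchSummary:
    """Compute simple descriptive statistics for one touch instance."""
    peak = 0.0
    active_frames = 0
    max_cells = 0
    for frame in frames:
        # Cells whose pressure exceeds the assumed noise floor count as contact.
        touched = [p for row in frame for p in row if p >= CONTACT_THRESHOLD_PSI]
        if touched:
            active_frames += 1
            peak = max(peak, max(touched))
            max_cells = max(max_cells, len(touched))
    return TouchSummary(
        peak_psi=peak,
        duration_s=active_frames / SAMPLE_RATE_HZ,
        max_contact_area_in2=max_cells * CELL_AREA_IN2,
    )


# Hypothetical 2 x 3 recording with four frames (toy values, not real data).
toy_recording = [
    [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
    [[0.0, 1.0, 0.0], [0.0, 0.5, 0.0]],
    [[0.0, 2.0, 0.3], [0.0, 0.0, 0.0]],
    [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
]
print(summarize_touch(toy_recording))
```

Statistics of this kind could then be compared across scenarios and relationship types, which is the sort of analysis the summary describes.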

The second contribution is a data‑driven mapping algorithm that converts the high‑resolution pressure maps into control signals for a sparse haptic display. Using multi‑object tracking, the algorithm isolates high‑pressure, sustained contact regions. It then selects eight fixed actuator locations on the forearm, spaced 37–50 mm apart—well beyond the discrimination threshold of cutaneous afferents. Each location is driven by a one‑degree‑of‑freedom voice‑coil actuator capable of reproducing the recorded pressure waveform (max ≈ 2.96 psi) at low force. This mapping preserves the temporal dynamics of the original touch while drastically reducing hardware complexity.
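
The mapping idea can be sketched for a single frame as follows. This is a deliberately simplified stand-in, not the authors' pipeline: it replaces multi-object tracking with a per-frame flood fill, skips the sustained-contact filter, and assigns each contact region to the nearest actuator. The 2.96 psi ceiling comes from the summary; `THRESHOLD_PSI`, the actuator coordinates, and the function names are hypothetical.

```python
from typing import List, Tuple

MAX_PSI = 2.96        # peak pressure quoted in the summary
THRESHOLD_PSI = 0.5   # ASSUMED cutoff for a "high-pressure" cell

Frame = List[List[float]]    # pressure map in psi, indexed [row][col]
Cell = Tuple[int, int]


def contact_regions(frame: Frame, threshold: float = THRESHOLD_PSI) -> List[List[Cell]]:
    """Group above-threshold cells into 4-connected regions via flood fill."""
    rows, cols = len(frame), len(frame[0])
    seen = set()
    regions = []
    for r in range(rows):
        for c in range(cols):
            if frame[r][c] < threshold or (r, c) in seen:
                continue
            stack, region = [(r, c)], []
            seen.add((r, c))
            while stack:
                y, x = stack.pop()
                region.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and (ny, nx) not in seen and frame[ny][nx] >= threshold:
                        seen.add((ny, nx))
                        stack.append((ny, nx))
            regions.append(region)
    return regions


def frame_to_commands(frame: Frame, actuators: List[Tuple[float, float]]) -> List[float]:
    """Return one pressure command (psi) per actuator for a single frame."""
    commands = [0.0] * len(actuators)
    for region in contact_regions(frame):
        total = sum(frame[y][x] for y, x in region)
        # Pressure-weighted centroid of the region, in cell coordinates.
        cy = sum(y * frame[y][x] for y, x in region) / total
        cx = sum(x * frame[y][x] for y, x in region) / total
        nearest = min(
            range(len(actuators)),
            key=lambda i: (actuators[i][0] - cy) ** 2 + (actuators[i][1] - cx) ** 2,
        )
        peak = max(frame[y][x] for y, x in region)
        # Clip to the display's ceiling; keep the strongest region per actuator.
        commands[nearest] = max(commands[nearest], min(peak, MAX_PSI))
    return commands


# Hypothetical layout: eight actuators on a 2 x 4 grid, in (row, col) cell units.
ACTUATORS = [(0, 0), (0, 2), (0, 4), (0, 6), (2, 0), (2, 2), (2, 4), (2, 6)]

toy_frame = [
    [1.0, 0.6, 0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.2, 3.5],
]
print(frame_to_commands(toy_frame, ACTUATORS))
```

Applying `frame_to_commands` to every frame of a recording yields eight pressure waveforms sampled at the sensor rate, which is how a sketch like this would preserve the temporal dynamics of the original touch.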

A user study evaluated whether naïve participants could infer the original scenario solely from the sparse actuation. Participants received no training; they simply felt the sequence of pressure pulses generated by the eight actuators and selected which of the six scenarios they believed was being conveyed. The average classification accuracy was 45 %, well above the roughly 17 % expected from guessing among six options, and compared to 57 % reported for direct human‑to‑human touch in a prior study using the same scenarios. While performance did not reach the level of natural touch, the result demonstrates that meaningful social information can be transmitted through a minimal set of discrete tactile cues.
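
One way to read these accuracies is against the guessing baseline for a six-way forced choice. The short calculation below uses only the 45 % and 57 % figures from the summary; the chance-corrected normalization and the name `chance_corrected` are choices made for this illustration, not an analysis reported by the authors.

```python
N_SCENARIOS = 6
SPARSE_ACCURACY = 0.45        # sparse actuation, from the summary
HUMAN_TOUCH_ACCURACY = 0.57   # direct human-to-human touch, from the summary

chance = 1 / N_SCENARIOS      # uniform guessing among six scenarios


def chance_corrected(accuracy: float, chance_level: float) -> float:
    """Rescale accuracy so that 0 means guessing and 1 means perfect."""
    return (accuracy - chance_level) / (1 - chance_level)


sparse_cc = chance_corrected(SPARSE_ACCURACY, chance)
human_cc = chance_corrected(HUMAN_TOUCH_ACCURACY, chance)

print(f"chance level:               {chance:.3f}")
print(f"sparse vs. chance (ratio):  {SPARSE_ACCURACY / chance:.2f}")
print(f"sparse, chance-corrected:   {sparse_cc:.3f}")
print(f"human, chance-corrected:    {human_cc:.3f}")
print(f"sparse relative to human:   {sparse_cc / human_cc:.2f}")
```

Because the two accuracies come from different studies, this comparison is indicative only.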

Key insights include:

- Sparse actuation can capture enough information for users to discriminate between distinct social meanings, especially affective categories such as love or sadness.

- Data‑driven mapping allows the same hardware to render a wide variety of touch patterns without hand‑crafted signal design, making the system adaptable to new datasets or user‑specific styles.

- Hardware simplicity (eight voice‑coil actuators, low‑force pressure) reduces power, weight, and mechanical complexity, which is advantageous for wearable or mobile applications.

The authors openly release the full pressure‑sensor dataset, the gesture annotations, and the source code for the mapping pipeline, encouraging reproducibility and further research. They discuss limitations: the low spatial resolution may hinder fine‑grained gesture discrimination; individual differences in tactile perception were not modeled; and only the forearm was instrumented, limiting the range of possible social gestures. Future work could explore dynamic actuator placement, multimodal stimulation (e.g., vibration, temperature), and machine‑learning‑based personalization to boost recognition rates.

In summary, the study provides empirical evidence that a carefully designed sparse tactile interface, driven by data‑derived signals, can convey socially relevant touch information. This opens avenues for more expressive remote communication, tele‑presence, and affective haptics in contexts where full‑body haptic suits are impractical.
