Building HVAC Scheduling Using Reinforcement Learning via Neural Network Based Model Approximation

The buildings sector is one of the major consumers of energy in the United States. Building HVAC (Heating, Ventilation, and Air Conditioning) systems, whose function is to maintain thermal comfort and indoor air quality (IAQ), account for almost half of the energy consumed by buildings. Intelligent scheduling of the building HVAC system therefore has the potential for tremendous energy and cost savings while ensuring that the control objectives (thermal comfort, air quality) are satisfied. Recently, several works have focused on model-free deep reinforcement learning techniques such as Deep Q-Network (DQN). Such methods require extensive interaction with the environment, and this low sample efficiency makes them impractical to deploy in real systems. Safety-aware exploration is another challenge in real systems, since certain actions in particular states may result in catastrophic outcomes. To address these challenges, we propose a model-based reinforcement learning approach that learns the system dynamics using a neural network. We then apply Model Predictive Control (MPC) to the learned dynamics, using a random-sampling shooting method to select actions. To ensure safe exploration, we restrict actions to a safe range and bound the maximum absolute change between consecutive actions according to prior knowledge. We evaluate our ideas in simulation using the widely adopted EnergyPlus tool on a case study consisting of a two-zone data center. Experiments show that the average deviation between trajectories sampled from the learned dynamics and the ground truth is below 20%. Compared with baseline approaches, we reduce total energy consumption by 17.1% to 21.8%. Compared with a model-free reinforcement learning approach, we reduce the number of training steps required to converge by 10x.

💡 Research Summary

The paper addresses the pressing need for energy‑efficient operation of heating, ventilation, and air‑conditioning (HVAC) systems in commercial buildings. While traditional rule‑based, PID, and linear‑quadratic regulator (LQR) controllers have been employed for HVAC scheduling, they struggle with the nonlinear, high‑dimensional dynamics of modern HVAC plants and cannot anticipate external disturbances such as weather changes, occupancy patterns, or electricity price fluctuations. Recent model‑free deep reinforcement learning (DRL) approaches, notably Deep Q‑Network (DQN) and Proximal Policy Optimization (PPO), have shown promise but suffer from severe sample inefficiency: they require millions of interaction steps with the environment to converge, which is infeasible for real‑world HVAC equipment where each trial incurs energy cost and risk of equipment damage. Moreover, model‑free methods lack explicit mechanisms for safe exploration, making them unsuitable for safety‑critical control tasks.

To overcome these limitations, the authors propose a model‑based reinforcement learning (MBRL) framework that learns a data‑driven dynamics model of the HVAC system using a deep neural network (NN). The NN is trained to predict the change in observable variables (supply‑air temperature, zone temperature, humidity) given a short history of past observations and control actions, effectively approximating the underlying partially observable Markov decision process (POMDP). By learning deterministic dynamics in a Δ‑observation formulation, the model captures the nonlinear relationships without requiring explicit physical equations.
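The Δ‑observation formulation can be illustrated with a small data‑preparation sketch. It is not the authors' code: the function names `build_delta_dataset` and `apply_delta`, the history length of 2, and the toy temperature and humidity values are all assumptions made for illustration. The sketch only shows how logged observations and actions might be turned into (history, change‑in‑observation) training pairs, and how a predicted change is added back to the current observation; the regressor that maps inputs to targets (a neural network in the paper) is left out.

```python
from typing import List, Sequence, Tuple

Vector = List[float]


def build_delta_dataset(
    observations: Sequence[Vector],
    actions: Sequence[Vector],
    history: int = 2,
) -> Tuple[List[Vector], List[Vector]]:
    """Turn one logged trajectory into (input, target) pairs.

    `observations` has one more entry than `actions`: actions[t] moves the
    system from observations[t] to observations[t + 1].
    Input  = the last `history` (observation, action) pairs, flattened.
    Target = observations[t + 1] - observations[t], i.e. the change.
    """
    if len(observations) != len(actions) + 1:
        raise ValueError("expected exactly one more observation than actions")
    inputs: List[Vector] = []
    targets: List[Vector] = []
    for t in range(history - 1, len(actions)):
        features: Vector = []
        for k in range(t - history + 1, t + 1):
            features.extend(observations[k])
            features.extend(actions[k])
        delta = [nxt - cur for nxt, cur in zip(observations[t + 1], observations[t])]
        inputs.append(features)
        targets.append(delta)
    return inputs, targets


def apply_delta(current_obs: Vector, predicted_delta: Vector) -> Vector:
    """Recover the next observation from a predicted change."""
    return [o + d for o, d in zip(current_obs, predicted_delta)]


# Hypothetical log: [zone temperature in C, relative humidity in %] and one
# normalized control action per step.
obs = [[20.0, 50.0], [20.5, 49.0], [21.5, 48.5], [21.0, 48.0]]
acts = [[0.2], [0.4], [0.1]]
X, Y = build_delta_dataset(obs, acts, history=2)
```

Predicting the change rather than the absolute next observation keeps the regression targets small and centered, which is a common motivation for this formulation in learned‑dynamics work.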

Once the dynamics model is obtained, the control problem is cast as a constrained trajectory optimization solved by Model Predictive Control (MPC). The authors employ a random‑sampling shooting method: a set of candidate action sequences is sampled, each sequence is rolled out through the learned NN model, and a cost function that combines energy consumption and penalty terms for comfort violations (temperature and humidity bounds) is evaluated. The best sequence’s first action is executed, and the process repeats at the next time step. To guarantee safe exploration, the action space is clipped to a pre‑defined safe range and the maximum absolute change between successive actions is limited based on domain knowledge, preventing abrupt control moves that could damage equipment.
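The planning loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the action is a single scalar, `toy_dynamics` and `toy_cost` are hypothetical stand‑ins for the learned NN model and the energy‑plus‑comfort cost, and the bounds, horizon, and candidate count are arbitrary. Enforcing the safety limits by sampling each action from the intersection of the safe range and the rate limit is also an assumption; the summary does not say exactly how the clipping is applied.

```python
import random
from typing import Callable, List, Tuple


def sample_safe_sequence(
    rng: random.Random,
    prev_action: float,
    horizon: int,
    low: float,
    high: float,
    max_step: float,
) -> List[float]:
    """Sample actions that stay in [low, high] and move at most max_step per step."""
    seq: List[float] = []
    a = min(high, max(low, prev_action))  # start from a clipped previous action
    for _ in range(horizon):
        lo = max(low, a - max_step)
        hi = min(high, a + max_step)
        a = rng.uniform(lo, hi)
        seq.append(a)
    return seq


def random_shooting(
    dynamics: Callable[[float, float], float],
    cost: Callable[[float, float], float],
    obs: float,
    prev_action: float,
    horizon: int = 5,
    n_candidates: int = 200,
    low: float = 0.0,
    high: float = 1.0,
    max_step: float = 0.2,
    seed: int = 0,
) -> Tuple[float, float]:
    """Return (first action of the cheapest sampled sequence, its total cost)."""
    rng = random.Random(seed)
    best_cost = float("inf")
    best_first = prev_action
    for _ in range(n_candidates):
        seq = sample_safe_sequence(rng, prev_action, horizon, low, high, max_step)
        state, total = obs, 0.0
        for a in seq:  # roll the candidate out through the model
            state = dynamics(state, a)
            total += cost(state, a)
        if total < best_cost:
            best_cost, best_first = total, seq[0]
    return best_first, best_cost


def toy_dynamics(temp: float, a: float) -> float:
    """Hypothetical one-zone model: constant heat gain minus cooling effort."""
    return temp + 0.5 - 2.0 * a


def toy_cost(temp: float, a: float) -> float:
    """Hypothetical cost: energy use plus penalties outside an 18-27 C band."""
    return a + 10.0 * max(0.0, temp - 27.0) + 10.0 * max(0.0, 18.0 - temp)


action, plan_cost = random_shooting(toy_dynamics, toy_cost, obs=26.0, prev_action=0.5)
```

In a receding‑horizon loop, `action` would be applied to the plant, the new observation read back, and `random_shooting` called again with the applied action as `prev_action`.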

Because MPC can be computationally intensive for real‑time deployment, the authors train an auxiliary policy network that imitates the MPC output (behavior cloning). This “imitation policy” can generate near‑optimal actions with a single forward pass, satisfying real‑time constraints while preserving the performance benefits of MPC.
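Behavior cloning reduces to supervised regression on (observation, expert action) pairs. The sketch below illustrates that idea with deliberate simplifications: a one‑dimensional linear policy fitted by closed‑form least squares replaces the policy network, and `stand_in_expert` is a hypothetical rule standing in for the MPC planner. None of these names or numbers come from the paper.

```python
from typing import Callable, List, Sequence, Tuple


def collect_demonstrations(
    expert: Callable[[float], float],
    observations: Sequence[float],
) -> List[Tuple[float, float]]:
    """Query the expert (the MPC planner in the paper) at each observation."""
    return [(o, expert(o)) for o in observations]


def fit_linear_policy(demos: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Least-squares fit of action = w * observation + b to the demonstrations."""
    n = len(demos)
    mean_x = sum(x for x, _ in demos) / n
    mean_y = sum(y for _, y in demos) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in demos)
    if var_x == 0.0:
        raise ValueError("demonstrations must cover more than one observation")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in demos)
    w = cov_xy / var_x
    b = mean_y - w * mean_x
    return w, b


def make_policy(
    w: float, b: float, low: float = 0.0, high: float = 1.0
) -> Callable[[float], float]:
    """Wrap the fitted parameters in a policy whose output is clipped to [low, high]."""
    def policy(obs: float) -> float:
        return min(high, max(low, w * obs + b))
    return policy


def stand_in_expert(temp: float) -> float:
    """Hypothetical expert: cooling effort grows with temperature above 22 C."""
    return min(1.0, max(0.0, 0.25 * (temp - 22.0)))


temps = [22.0 + 0.5 * i for i in range(9)]  # 22.0, 22.5, ..., 26.0
demos = collect_demonstrations(stand_in_expert, temps)
w, b = fit_linear_policy(demos)
policy = make_policy(w, b)
```

Once fitted, `policy(obs)` costs a single function evaluation instead of a full planning run, which is the trade the imitation step makes: planning cost is paid once during data collection rather than at every control step.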

The framework is evaluated using EnergyPlus, a widely adopted building energy simulation platform, on a two‑zone data‑center case study. The authors collect a week of simulated operational data to train the NN dynamics model, then test the controller over a month of simulated operation. Key results include:

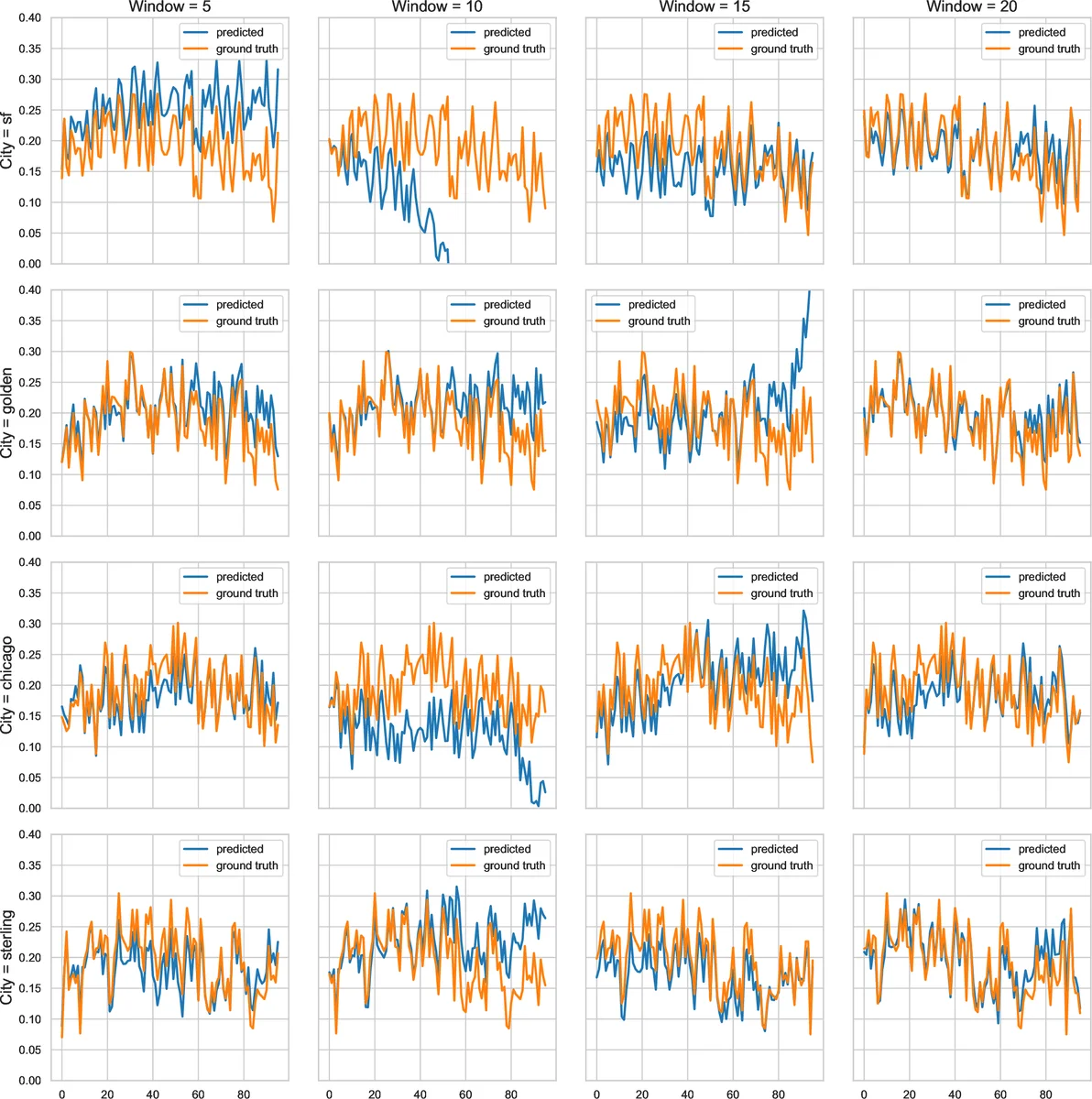

- The learned dynamics model achieves an average trajectory deviation of less than 20 % compared with the ground‑truth EnergyPlus simulator, indicating sufficient fidelity for control purposes.

- Compared with baseline controllers (PID, rule‑based, LQR, and a linear‑model MPC), the proposed MBRL‑MPC reduces total HVAC energy consumption by 17.1 %–21.8 %.

- When compared against a model‑free PPO baseline that uses the same reward shaping (energy cost plus comfort penalties), the MBRL approach converges with roughly one‑tenth the number of training steps, demonstrating a tenfold improvement in sample efficiency.

- Comfort constraints (temperature and humidity limits) are satisfied virtually 100 % of the time, confirming the effectiveness of the safe‑action clipping and penalty design.

The authors highlight several contributions: (1) a systematic analysis of why model‑free DRL is impractical for HVAC scheduling; (2) an online, data‑efficient method for learning HVAC dynamics with neural networks; (3) integration of the learned dynamics with MPC and random‑sampling shooting to handle constraints; (4) a behavior‑cloning step, in which a policy network imitates the MPC output, that enables real‑time inference; and (5) extensive simulation validation showing both energy savings and reduced training effort.

In conclusion, the paper demonstrates that a hybrid approach combining data‑driven system identification, model‑based planning, and policy imitation can deliver safe, efficient, and practical HVAC control. Suggested future work includes scaling the method to multi‑zone, multi‑building portfolios, incorporating weather and price forecasts into the planning horizon, and validating the approach on physical testbeds to assess robustness to model mismatch and sensor noise.
