Convergence and sample complexity of gradient methods for the model-free linear quadratic regulator problem

Model-free reinforcement learning attempts to find an optimal control action for an unknown dynamical system by directly searching over the parameter space of controllers. The convergence behavior and statistical properties of these approaches are often poorly understood because of the nonconvex nature of the underlying optimization problems and the lack of exact gradient computation. In this paper, we take a step towards demystifying the performance and efficiency of such methods by focusing on the standard infinite-horizon linear quadratic regulator problem for continuous-time systems with unknown state-space parameters. We establish exponential stability for the ordinary differential equation (ODE) that governs the gradient-flow dynamics over the set of stabilizing feedback gains and show that a similar result holds for the gradient descent method that arises from the forward Euler discretization of the corresponding ODE. We also provide theoretical bounds on the convergence rate and sample complexity of the random search method with two-point gradient estimates. We prove that the required simulation time for achieving $\epsilon$-accuracy in the model-free setup and the total number of function evaluations both scale as $\log\,(1/\epsilon)$.

💡 Research Summary

This paper investigates the convergence properties and sample complexity of gradient‑based methods for solving the continuous‑time infinite‑horizon Linear Quadratic Regulator (LQR) problem when the system dynamics are unknown (model‑free setting). The authors first reformulate the LQR problem as a direct search over the feedback gain matrix K, defining the cost function f(K) as the expected infinite‑horizon quadratic cost incurred by the linear state‑feedback law u = –Kx. Although f(K) is non‑convex in K, it possesses two crucial structural properties: (i) all its critical points are global minima (the optimal gain K*), and (ii) its sublevel sets are compact. These properties enable the use of Lyapunov‑type arguments for stability analysis.
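The cost f(K) and its gradient can be evaluated from two Lyapunov equations when the model is known. The following is a minimal sketch, not the authors' code: it assumes the initial state has covariance `Omega` (a name introduced here), uses the standard formulas f(K) = tr((Q + KᵀRK)X) and ∇f(K) = 2(RK – BᵀP)X, and solves the Lyapunov equations by vectorization, which is only practical for small state dimensions.

```python
import numpy as np

def lyap(M, W):
    """Solve M X + X M^T + W = 0 by vectorization (small n only)."""
    n = M.shape[0]
    I = np.eye(n)
    # Row-major vec: vec(M X) = (M kron I) vec(X), vec(X M^T) = (I kron M) vec(X)
    L = np.kron(M, I) + np.kron(I, M)
    return np.linalg.solve(L, -W.reshape(-1)).reshape(n, n)

def lqr_cost_and_grad(K, A, B, Q, R, Omega):
    """Return (f(K), grad f(K)) for u = -K x; cost is inf if K is not stabilizing."""
    Acl = A - B @ K
    if np.max(np.linalg.eigvals(Acl).real) >= 0:
        return np.inf, None
    W = Q + K.T @ R @ K
    X = lyap(Acl, Omega)   # Acl X + X Acl^T + Omega = 0
    P = lyap(Acl.T, W)     # Acl^T P + P Acl + Q + K^T R K = 0
    f = float(np.trace(W @ X))
    grad = 2.0 * (R @ K - B.T @ P) @ X
    return f, grad

# Scalar toy instance (assumed values): a = b = q = r = Omega = 1.
one = np.array([[1.0]])
f_val, g_val = lqr_cost_and_grad(np.array([[2.0]]), one, one, one, one, one)
print(f_val, g_val)
```

For this scalar instance the cost reduces to (1 + K²)/(2(K – 1)), which gives a quick hand check of both the value and the derivative.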

The paper’s first major contribution is the proof of exponential stability of the continuous‑time gradient‑flow dynamics dK/dt = –∇f(K) over the set of stabilizing gains S_K. By treating the cost gap Δf(K) = f(K) – f(K*) as a Lyapunov function and establishing a Polyak‑Łojasiewicz (PL) inequality for f, the authors show that Δf(K(t)) decays at least as fast as e^{–ρt} for some ρ > 0 that depends on the initial gain and problem parameters. Consequently, the distance ‖K(t) – K*‖_F also contracts exponentially.
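The step from the PL inequality to exponential decay can be spelled out in two lines. This is a sketch under an assumed normalization: the PL inequality is written as ‖∇f(K)‖_F² ≥ ρ Δf(K) on the sublevel set containing the initial gain, so the constant here may differ from the paper's by a fixed factor.

```latex
\begin{aligned}
\frac{d}{dt}\,\Delta f(K(t))
  &= \big\langle \nabla f(K(t)),\, \dot K(t) \big\rangle
   = -\,\big\|\nabla f(K(t))\big\|_F^2
   \;\le\; -\rho\,\Delta f(K(t)), \\
\Delta f(K(t)) &\le e^{-\rho t}\,\Delta f(K(0)) .
\end{aligned}
```

The second line follows from the first by Grönwall's inequality. Because Δf is nonincreasing along the flow, the trajectory never leaves the initial sublevel set, which is why a PL constant valid on that set suffices.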

Building on this, the authors analyze the forward‑Euler discretization (gradient descent, GD) K_{k+1}=K_k – α∇f(K_k). They prove that for a sufficiently small constant stepsize α (chosen based on spectral properties of A, B, Q, R, and the initial gain), GD converges linearly with a rate γ < 1, the discrete‑time counterpart of the exponential rate ρ. The analysis yields explicit expressions for ρ, γ, and the constants governing the contraction, thereby providing a complete picture of the deterministic, model‑based case.
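The linear convergence of GD is easy to observe on a scalar toy problem. The sketch below is illustrative only: the system values, the initial gain, and the stepsize are assumptions chosen by hand rather than taken from the paper, and the closed-form cost and optimal gain hold only for this scalar case.

```python
import math

# Toy scalar instance (assumed): x' = a x + b u, u = -K x,
# weights q, r, and initial-state variance omega.
a, b, q, r, omega = 1.0, 1.0, 1.0, 1.0, 1.0

def f(K):
    """LQR cost of the scalar gain K; finite only when a - b K < 0."""
    if a - b * K >= 0:
        return math.inf
    return (q + r * K**2) * omega / (2.0 * (b * K - a))

def grad_f(K):
    """Derivative of f with respect to K (quotient rule)."""
    den = 2.0 * (b * K - a)
    return omega * (2.0 * r * K * den - 2.0 * b * (q + r * K**2)) / den**2

# Optimal gain from the scalar Riccati equation.
K_STAR = (a + math.sqrt(a**2 + b**2 * q / r)) / b

def gradient_descent(K0, alpha, iters):
    """Run K <- K - alpha * grad_f(K) and record the cost gap at each iterate."""
    K, gaps = K0, []
    for _ in range(iters):
        gaps.append(f(K) - f(K_STAR))
        K = K - alpha * grad_f(K)
    return K, gaps

K_final, gaps = gradient_descent(K0=4.0, alpha=0.5, iters=200)
# Ratios of successive gaps below 1 indicate a geometric (linear) rate.
print(K_final, K_STAR, [gaps[i + 1] / gaps[i] for i in range(5)])
```

A larger stepsize can push an iterate out of the stabilizing set, where the cost is infinite, which is why the admissible stepsize in the analysis depends on the initial gain.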

The second major contribution addresses the model‑free scenario, where the exact gradient cannot be computed. The authors adopt a two‑point random search (RS) scheme: at each iteration they sample N directions U_i uniformly from the unit sphere, perturb the current gain by ±rU_i, simulate the closed‑loop system for a finite horizon τ, and compute the empirical cost difference \hat f_{i,1} – \hat f_{i,2}. The gradient estimate is formed as (1/(2rN)) Σ_i ( \hat f_{i,1} – \hat f_{i,2}) U_i. Because of the finite horizon τ and the nonzero smoothing radius r, this estimator is in general a biased estimate of ∇f(K); its bias and variance can be bounded in terms of r, N, τ, and the sub‑Gaussian constant κ of the initial‑state distribution.
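The two-point estimate can be sketched as follows. Several choices here are assumptions rather than details confirmed by the summary: both perturbed rollouts share the same initial state, directions are rescaled to Frobenius norm √(mn) so that averaging ⟨G, U⟩U recovers G (with unit-norm directions the same formula would be smaller by a factor mn), and the rollout uses a known (A, B) purely as a stand-in for the black-box simulator.

```python
import numpy as np

def finite_horizon_cost(K, x0, A, B, Q, R, tau, dt=1e-2):
    """Integrate x' = (A - B K) x from x0 and accumulate the quadratic cost on [0, tau]."""
    Acl = A - B @ K
    W = Q + K.T @ R @ K
    x = np.asarray(x0, dtype=float).copy()
    cost = 0.0
    for _ in range(int(round(tau / dt))):
        # Classical RK4 step for the linear closed-loop dynamics
        k1 = Acl @ x
        k2 = Acl @ (x + 0.5 * dt * k1)
        k3 = Acl @ (x + 0.5 * dt * k2)
        k4 = Acl @ (x + dt * k3)
        x_next = x + dt / 6.0 * (k1 + 2 * k2 + 2 * k3 + k4)
        # Trapezoidal rule for the running cost x^T W x
        cost += 0.5 * dt * (x @ W @ x + x_next @ W @ x_next)
        x = x_next
    return cost

def two_point_gradient_estimate(cost_oracle, K, r, N, sample_x0, rng):
    """Average of (f(K + rU, x0) - f(K - rU, x0)) U / (2r) over N random directions."""
    m, n = K.shape
    g = np.zeros((m, n))
    for _ in range(N):
        U = rng.standard_normal((m, n))
        U *= np.sqrt(m * n) / np.linalg.norm(U)  # uniform on the sphere of radius sqrt(mn)
        x0 = sample_x0(rng)
        diff = cost_oracle(K + r * U, x0) - cost_oracle(K - r * U, x0)
        g += diff * U
    return g / (2.0 * r * N)

# Usage on a scalar toy system (assumed values), with standard normal initial states.
one = np.array([[1.0]])
oracle = lambda K, x0: finite_horizon_cost(K, x0, one, one, one, one, tau=10.0)
rng = np.random.default_rng(0)
g_hat = two_point_gradient_estimate(oracle, np.array([[2.0]]), r=1e-2, N=50,
                                    sample_x0=lambda g: g.standard_normal(1), rng=rng)
print(g_hat)
```

Reusing one initial state for both perturbations means the randomness in x0 multiplies the cost difference rather than adding independent noise to each rollout.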

Under the assumption that the initial states are drawn i.i.d. from a zero‑mean sub‑Gaussian distribution, the authors state an informal version of their main result (Theorem 3): for any desired accuracy ε > 0, there exist constants θ_1,…,θ_4 such that if the simulation horizon satisfies τ ≥ θ_1 log(1/ε) and the number of perturbation samples satisfies N ≥ c (1+β)^4 κ^4 θ_1 log^6 n·n (for some β > 0), then with a smoothing radius r < θ_3 √ε and a constant stepsize α, the RS iterates satisfy f(K_k) – f(K*) ≤ ε within k ≤ θ_4 log( (f(K_0) – f(K*))/ε ) iterations. Moreover, the required simulation time τ and the total number of function evaluations (2N per iteration) both scale as O(log(1/ε)). This logarithmic dependence improves substantially upon prior results for discrete‑time LQR, which required O(1/ε) evaluations or polynomial simulation time.
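The evaluation count follows directly from the iteration bound. As a short worked step, treating N and the initial gap f(K_0) – f(K*) as fixed with respect to ε (an assumption consistent with the bounds as stated above):

```latex
\#\,\text{function evaluations}
  \;=\; 2N \cdot k
  \;\le\; 2N\,\theta_4 \,\log\!\frac{f(K_0) - f(K^\star)}{\epsilon}
  \;=\; O\!\big(\log(1/\epsilon)\big).
```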

A key technical device enabling these results is a convex re‑parameterization of the original non‑convex problem. By introducing variables X ≻ 0 (the solution of the Lyapunov equation for a given K) and Y = KX, the cost can be expressed as h(X,Y) = tr(QX + Y^T R Y X^{-1}), which is jointly convex in (X,Y). The Lyapunov constraint becomes affine in (X,Y), allowing the authors to apply standard convex analysis to derive the PL inequality and exponential stability for the gradient flow in the (X,Y) space. They then map these results back to the original K‑space, establishing that the non‑convex dynamics inherit the same exponential convergence.
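The change of variables can be checked numerically on a small example. The sketch below rests on assumptions: the system matrices and gain are toy values, `Omega` denotes the initial-state covariance that appears in the Lyapunov equation (a name introduced here), and the Lyapunov solve uses vectorization, which suits only small dimensions. It verifies that h(X, Y) equals f(K) when Y = KX and that the Lyapunov constraint is affine in (X, Y).

```python
import numpy as np

def lyap(M, W):
    """Solve M X + X M^T + W = 0 by vectorization (small n only)."""
    n = M.shape[0]
    I = np.eye(n)
    L = np.kron(M, I) + np.kron(I, M)
    return np.linalg.solve(L, -W.reshape(-1)).reshape(n, n)

def cost_in_K(K, A, B, Q, R, Omega):
    """f(K) = tr((Q + K^T R K) X), with X from the closed-loop Lyapunov equation."""
    X = lyap(A - B @ K, Omega)
    return float(np.trace((Q + K.T @ R @ K) @ X)), X

def cost_in_XY(X, Y, Q, R):
    """h(X, Y) = tr(Q X + Y^T R Y X^{-1})."""
    return float(np.trace(Q @ X) + np.trace(Y.T @ R @ Y @ np.linalg.inv(X)))

def affine_residual(X, Y, A, B, Omega):
    """Lyapunov constraint written in (X, Y): A X + X A^T - B Y - Y^T B^T + Omega."""
    return A @ X + X @ A.T - B @ Y - Y.T @ B.T + Omega

# Toy double integrator with a stabilizing gain (assumed values).
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
Q, R, Omega = np.eye(2), np.eye(1), np.eye(2)
K = np.array([[1.0, 2.0]])

f_K, X = cost_in_K(K, A, B, Q, R, Omega)
Y = K @ X                      # change of variables; K is recovered as Y X^{-1}
h_XY = cost_in_XY(X, Y, Q, R)
res = affine_residual(X, Y, A, B, Omega)
print(f_K, h_XY, np.linalg.norm(res))
```

The agreement follows from Yᵀ R Y X⁻¹ = X Kᵀ R K, whose trace equals tr(Kᵀ R K X) for symmetric X.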

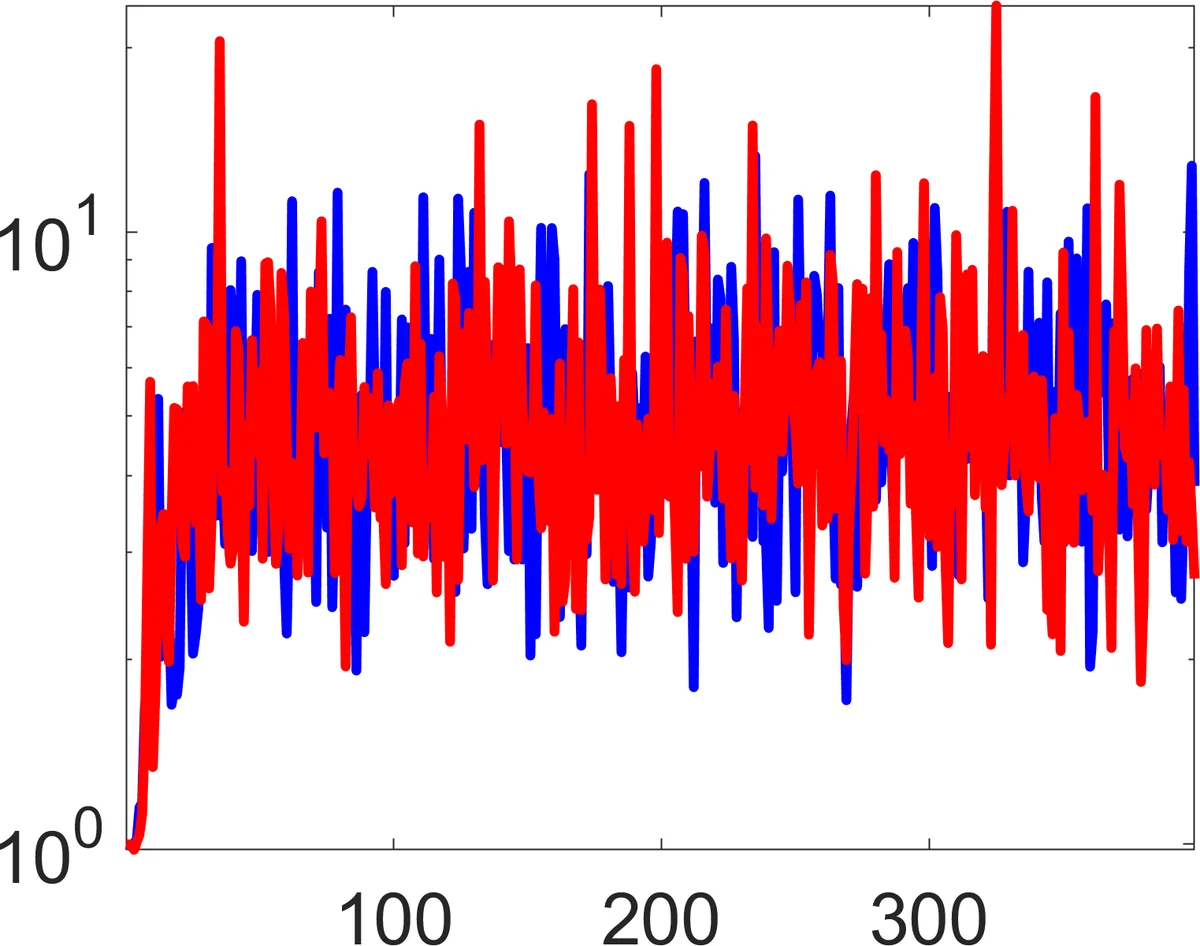

The paper also discusses extensions to structured control design (e.g., sparsity, actuator selection) where the feasible set may be non‑convex or disconnected, and argues that the presented analysis can be adapted to such settings. Numerical experiments on systems of varying dimensions illustrate that the theoretical convergence rates and sample‑complexity bounds are observed in practice: the RS algorithm reaches high‑precision solutions with only a few hundred simulations, confirming the logarithmic scaling.

In summary, the authors provide (1) a rigorous proof of exponential stability for continuous‑time gradient flow and its discretized counterpart on the non‑convex LQR cost landscape, and (2) a comprehensive sample‑complexity analysis for a two‑point random‑search method that achieves ε‑optimality with both simulation time and function‑evaluation count scaling as O(log (1/ε)). These contributions bridge a gap between model‑free reinforcement learning theory and classical optimal control, offering provable efficiency guarantees for learning optimal linear controllers without explicit system identification.
