A Unifying Survey of Reinforced, Sensitive and Stigmergic Agent-Based Approaches for E-GTSP

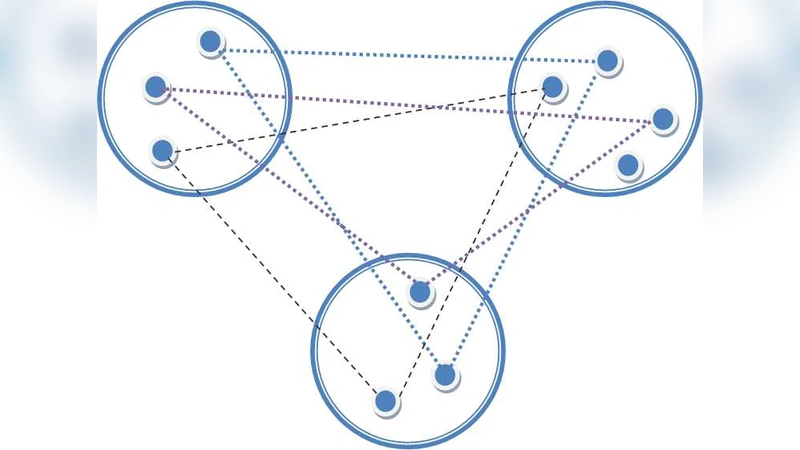

The Generalized Traveling Salesman Problem (GTSP) is an NP-hard combinatorial optimization problem. E-GTSP is a variant of GTSP in which E stands for equality: exactly one node from each cluster of a graph partition is visited. The objective of E-GTSP is to find a minimum-cost tour passing through exactly one node from each cluster of an undirected graph. Agent-based approaches are now used successfully to solve complex real-life problems. This paper illustrates several variants of agent-based algorithms, including ant-based models with specific properties, for solving E-GTSP.

💡 Research Summary

The paper presents a comprehensive survey and unifying framework for three major agent‑based paradigms applied to the Equality Generalized Traveling Salesman Problem (E‑GTSP). E‑GTSP is a constrained variant of the classic GTSP in which exactly one vertex must be selected from each predefined cluster of an undirected graph, and the goal is to find a minimum‑cost closed tour through the selected vertices that respects this “exactly‑one” requirement. Because the constraint rules out many vertex combinations while the search space still grows combinatorially with the number of clusters, traditional meta‑heuristics often struggle to balance feasibility enforcement with solution quality.
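To make the problem statement concrete, the following minimal sketch evaluates a candidate E‑GTSP tour and checks the “exactly‑one” requirement. The function names (`tour_cost`, `is_feasible`, `brute_force_egtsp`) and the toy instance are illustrative assumptions, not taken from the paper, and the exhaustive search is only a reference baseline for very small instances.

```python
from itertools import permutations, product


def tour_cost(tour, dist):
    """Cost of the closed tour, including the edge back to the first vertex."""
    n = len(tour)
    return sum(dist[tour[i]][tour[(i + 1) % n]] for i in range(n))


def is_feasible(tour, cluster_of, n_clusters):
    """True if the tour visits exactly one vertex from every cluster."""
    visited = [cluster_of[v] for v in tour]
    return len(visited) == n_clusters and set(visited) == set(range(n_clusters))


def brute_force_egtsp(clusters, dist):
    """Exhaustive reference solver (hypothetical helper, tiny instances only)."""
    best_tour, best_cost = None, float("inf")
    first, rest = clusters[0], clusters[1:]
    # Fix the first cluster as the start and try every order of the others.
    for order in permutations(range(len(rest))):
        # Pick one vertex from each cluster in the chosen order.
        for picks in product(first, *(rest[i] for i in order)):
            cost = tour_cost(picks, dist)
            if cost < best_cost:
                best_tour, best_cost = list(picks), cost
    return best_tour, best_cost


# Toy instance (assumed for illustration): 6 vertices on a line, 3 clusters.
clusters = [[0, 1], [2, 3], [4, 5]]
dist = [[abs(i - j) for j in range(6)] for i in range(6)]
cluster_of = {v: c for c, vs in enumerate(clusters) for v in vs}

best_tour, best_cost = brute_force_egtsp(clusters, dist)
print(best_tour, best_cost, is_feasible(best_tour, cluster_of, len(clusters)))
```

The feasibility check is kept separate from the cost function so that a heuristic can either reject infeasible tours outright or penalize them, which is the trade‑off the summary refers to.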

The authors first review existing approaches—genetic algorithms, simulated annealing, particle swarm, and conventional ant colony optimization (ACO)—highlighting their limitations in handling the strict equality constraint. They then introduce three distinct agent‑based strategies that can be expressed within a common mathematical model: (1) Reinforcement‑Learning (RL) agents, (2) Sensitive agents with a tunable “sensitivity” parameter, and (3) Stigmergic agents based on an enhanced ACO scheme.

In the RL formulation, the state comprises the set of clusters already visited and the last visited vertex. Actions correspond to the choice of a vertex from the next cluster. The reward function simultaneously penalizes tour cost and any violation of the equality constraint, allowing the use of a hybrid algorithm that blends Deep Q‑Learning with policy‑gradient updates. Experience replay and target networks are employed to stabilize learning.
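The state, action, and reward structure described above can be sketched as a small transition function. This is an assumption‑laden illustration only: it does not implement the Deep Q‑Learning and policy‑gradient hybrid, and the penalty weight `PENALTY`, the names `legal_actions` and `step`, and the choice to add the closing edge to the final reward are all hypothetical.

```python
from typing import Dict, FrozenSet, List, Tuple

# State = (indices of clusters already visited, last visited vertex)
State = Tuple[FrozenSet[int], int]

PENALTY = 100.0  # hypothetical weight for violating the equality constraint


def legal_actions(state: State, clusters: List[List[int]]) -> List[int]:
    """Vertices belonging to clusters that have not been visited yet."""
    visited, _ = state
    return [v for c, vs in enumerate(clusters) if c not in visited for v in vs]


def step(state: State, action: int, start: int, cluster_of: Dict[int, int],
         dist, n_clusters: int, penalty: float = PENALTY):
    """Apply one action; return (next_state, reward, done)."""
    visited, last = state
    reward = -dist[last][action]          # penalize the travelled edge cost
    cluster = cluster_of[action]
    if cluster in visited:
        reward -= penalty                 # cluster visited twice: constraint violated
    visited = visited | {cluster}
    done = len(visited) == n_clusters
    if done:
        reward -= dist[action][start]     # assumed: closing edge charged at the end
    return (visited, action), reward, done


# Toy rollout on an assumed instance: 6 vertices on a line, 3 clusters.
clusters = [[0, 1], [2, 3], [4, 5]]
dist = [[abs(i - j) for j in range(6)] for i in range(6)]
cluster_of = {v: c for c, vs in enumerate(clusters) for v in vs}

start = 1
state: State = (frozenset({cluster_of[start]}), start)
total = 0.0
for action in (2, 4):
    state, reward, done = step(state, action, start, cluster_of, dist, len(clusters))
    total += reward
print(state, total, done)
```

With this reward shaping, the return of a violation‑free episode equals the negative tour cost, so maximizing return and minimizing tour length coincide. Restricting the agent to `legal_actions` makes violations impossible; the penalty term matters only if the action set is left unrestricted.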

Sensitive agents are equipped with an individual sensitivity coefficient α∈