Threat determination for radiation detection from the Remote Sensing Laboratory

The ability to search for radiation sources is of interest to the Homeland Security community. The hope is to find any radiation sources that pose a reasonable chance of harm in a terrorist act. The best chance of success for search operations generally comes from fielding as many detection systems as possible. In doing so, the hoped-for encounter with a threat source will inevitably be buried in a much larger number of encounters with non-threatening radiation sources commonly used in medicine and industry. The problem then becomes one of effectively filtering out the non-threatening sources and presenting the human-in-the-loop with a modest list of potential threats. Our approach is to field a collection of detection systems that use soft-sensing algorithms, based on a variety of machine learning techniques, to discriminate between potential threat and non-threat objects.

💡 Research Summary

The paper addresses a critical challenge in Preventative Radiological Nuclear Detection (PRND): the overwhelming number of benign radiation sources that obscure genuine threats during large‑scale search operations. Traditional PRND relies on human operators equipped with handheld detectors, an approach limited by personnel availability and expertise and by the high false‑alarm rate caused by medical isotopes, industrial gauges, and variable background radiation. To overcome these limitations, the authors propose a network of static gamma‑ray sensors coupled with machine‑learning algorithms that automatically discriminate threat sources from non‑threat sources, thereby reducing the burden on human analysts.

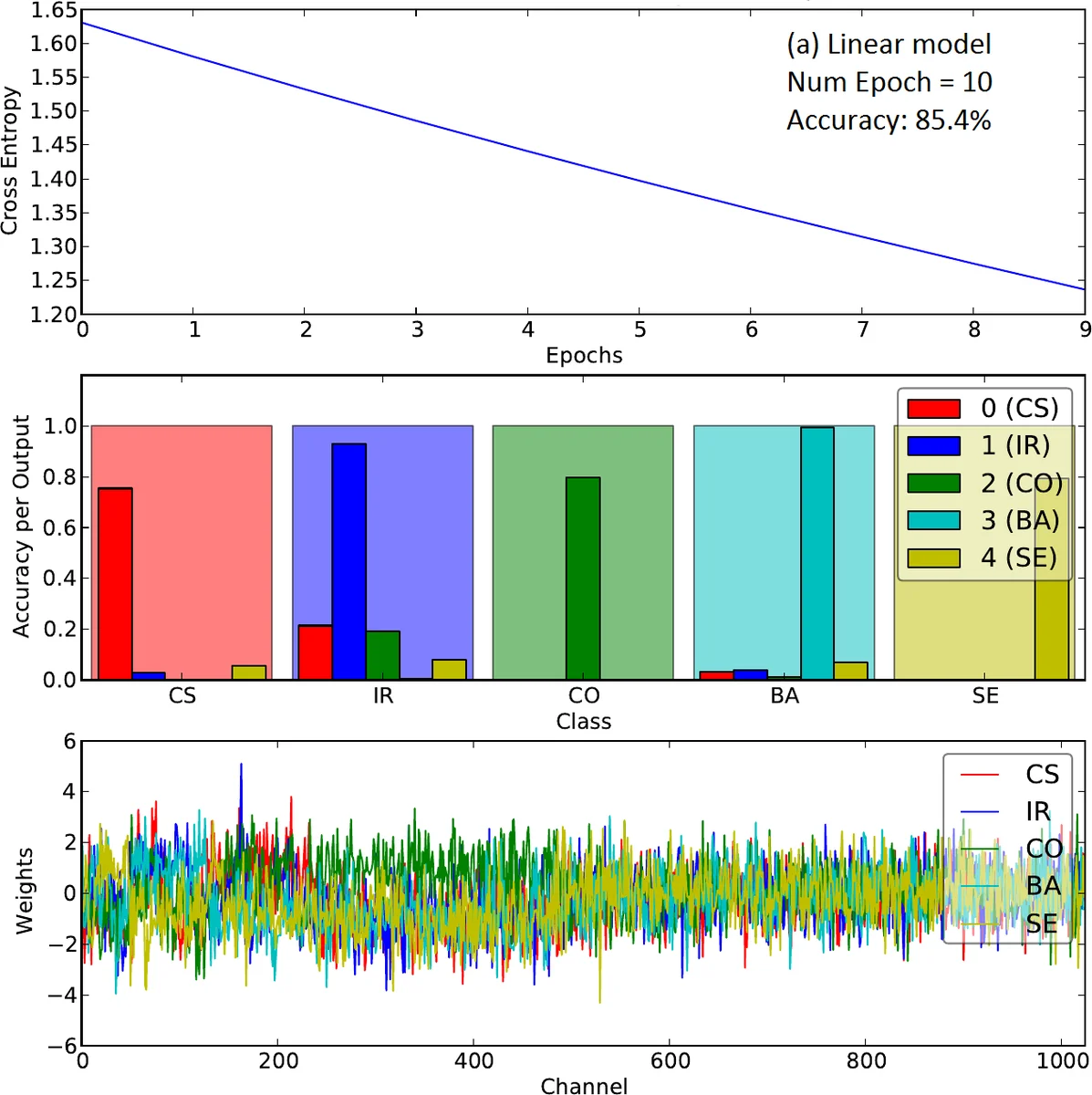

Two neural‑network architectures are evaluated. The first is a simple linear model: a single dense layer with a softmax output that maps a 1024‑channel (or rebinned 256‑channel) gamma spectrum directly to isotope classes. The second adds a hidden layer with a tanh activation, forming a shallow feed‑forward network. Both models are implemented in TensorFlow, trained with the Adam optimizer, and use cross‑entropy as the loss function.
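The two architectures described above can be sketched as plain forward passes. This is a minimal illustration in standard-library Python, not the authors' TensorFlow code: the layer sizes (256 channels, 5 classes, 32 hidden units), the random weights, and all function names are assumptions chosen for the example, and training with Adam is omitted.

```python
import math
import random

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def dense(x, weights, bias):
    """Affine layer; `weights` holds one row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

def linear_model(spectrum, w_out, b_out):
    """Model 1: a single dense layer followed by softmax."""
    return softmax(dense(spectrum, w_out, b_out))

def shallow_model(spectrum, w_hid, b_hid, w_out, b_out):
    """Model 2: one tanh hidden layer, then a softmax output layer."""
    hidden = [math.tanh(h) for h in dense(spectrum, w_hid, b_hid)]
    return softmax(dense(hidden, w_out, b_out))

def cross_entropy(probs, true_class):
    """Cross-entropy loss for one example with an integer class label."""
    return -math.log(max(probs[true_class], 1e-12))

def random_matrix(rows, cols, rng, scale=0.01):
    """Small random weights, standing in for an untrained network."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

# Assumed sizes for illustration only.
N_CHANNELS, N_CLASSES, N_HIDDEN = 256, 5, 32
rng = random.Random(0)

# A made-up spectrum, normalized to unit sum.
spectrum = [rng.random() for _ in range(N_CHANNELS)]
total_counts = sum(spectrum)
spectrum = [c / total_counts for c in spectrum]

p_linear = linear_model(
    spectrum, random_matrix(N_CLASSES, N_CHANNELS, rng), [0.0] * N_CLASSES)
p_shallow = shallow_model(
    spectrum,
    random_matrix(N_HIDDEN, N_CHANNELS, rng), [0.0] * N_HIDDEN,
    random_matrix(N_CLASSES, N_HIDDEN, rng), [0.0] * N_CLASSES)
loss = cross_entropy(p_shallow, true_class=0)
```

The only structural difference between the two models is the tanh layer, which lets the second network combine channels non-linearly before the class scores are computed.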

Training data consist of simulated spectra generated by the GADRAS code and measured spectra collected with a 2 × 4 × 16 inch NaI(Tl) detector. Simulations cover a range of isotopes (Cs‑137, Co‑60, Ba‑133, Se‑75, Ir‑192), distances (10–20 m), and shielding materials (bare, concrete, steel, depleted uranium). Measured data include a subset of these isotopes at closer ranges (0–10 m) with bare and steel shielding. Spectra are generated for long dwell times (≈3 h) and then Poisson‑sampled to produce realistic 1‑second acquisition windows, matching the operational sampling rate of the deployed sensors.
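The Poisson down-sampling and rebinning steps can be sketched as follows. This is a hedged illustration, not the authors' pipeline: the long-dwell spectrum here is a synthetic shape invented for the example (not GADRAS output), the function names are hypothetical, and the Poisson sampler uses Knuth's multiplication method, which is only appropriate for the small per-channel means expected in a 1-second window.

```python
import math
import random

def rebin(spectrum, factor=4):
    """Sum adjacent channels, e.g. 1024 channels -> 256 with factor=4."""
    if len(spectrum) % factor != 0:
        raise ValueError("channel count must be divisible by the rebin factor")
    return [sum(spectrum[i:i + factor])
            for i in range(0, len(spectrum), factor)]

def poisson(mean, rng):
    """Draw one Poisson variate with Knuth's method (small means only)."""
    if mean <= 0:
        return 0
    limit = math.exp(-mean)
    k, product = 0, rng.random()
    while product > limit:
        k += 1
        product *= rng.random()
    return k

def sample_window(long_counts, dwell_seconds, window_seconds, rng):
    """Scale a long-dwell spectrum to a short window and Poisson-sample it."""
    scale = window_seconds / dwell_seconds
    return [poisson(c * scale, rng) for c in long_counts]

# Synthetic 3-hour spectrum: a falling continuum plus one Gaussian peak.
DWELL_S = 3 * 3600
long_counts = [
    DWELL_S * (0.05 * math.exp(-ch / 300.0)
               + 2.0 * math.exp(-0.5 * ((ch - 662) / 15.0) ** 2))
    for ch in range(1024)
]

rng = random.Random(1)
window = sample_window(long_counts, DWELL_S, 1.0, rng)  # one 1-second draw
rebinned = rebin(window, factor=4)                      # 1024 -> 256 channels
```

Calling `sample_window` repeatedly on the same long-dwell spectrum yields as many statistically independent 1-second training examples as needed.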

Results show that both models converge during training, with the loss decreasing and classification accuracy improving as the number of epochs increases from 10 to 100. Weight visualizations reveal that, after sufficient training, the learned weight matrices exhibit patterns that correspond to spectral peaks, indicating that the networks are internally learning physically meaningful features. At higher energy channels (>600), where counts are negligible, the weights reflect only noise, highlighting a limitation of the current dataset.

When evaluated on simulated test ensembles, the linear model achieves respectable overall accuracy, but its per‑class performance degrades for more complex scenarios, such as distinguishing different shielding configurations. The shallow neural network consistently outperforms the linear model in these tasks, achieving near‑perfect accuracy in identifying shielding type, including the detection of a surrogate industrial gauge (Cs‑137 shielded by steel). In this specific binary classification, the linear model’s accuracy falls below 20 %, whereas the hidden‑layer network reaches almost 100 % accuracy, demonstrating the necessity of non‑linear representation for certain spectral discrimination problems.
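The gap between overall and per-class accuracy noted above is easy to miss when only a single score is reported. The sketch below shows one way to compute both. The labels and predictions are made up for illustration and are not results from the paper.

```python
from collections import Counter

def overall_accuracy(true_labels, predicted_labels):
    """Fraction of all examples classified correctly."""
    hits = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return hits / len(true_labels)

def per_class_accuracy(true_labels, predicted_labels):
    """Fraction classified correctly within each true class."""
    totals = Counter(true_labels)
    correct = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {label: correct[label] / totals[label] for label in totals}

# Hypothetical test set: 8 bare sources and 2 steel-shielded sources,
# with a classifier that always predicts "bare".
true_labels = ["Cs-137 bare"] * 8 + ["Cs-137 steel"] * 2
predicted = ["Cs-137 bare"] * 10

overall = overall_accuracy(true_labels, predicted)
by_class = per_class_accuracy(true_labels, predicted)
```

In this made-up case the overall score looks respectable while the shielded class is never identified, which is why per-class figures matter for the shielding comparison.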

Crucially, a model trained solely on simulated data also performs well on real measured spectra, despite the absence of background radiation in the training set. This suggests that high‑fidelity simulations can substitute for extensive field data collection, reducing the cost and time required to develop operational systems.

The authors conclude that a simple linear classifier may suffice for many straightforward isotope identification tasks, but more sophisticated, non‑linear architectures are essential for handling mixed sources, varied shielding, and low‑signal conditions. Future work will expand the simulated and measured datasets, incorporate additional isotopes and shielding scenarios, and explore deeper architectures such as convolutional or recurrent neural networks to improve robustness, real‑time processing, and sensitivity in low‑count environments. The ultimate goal is to enable a single spectroscopist to monitor orders of magnitude more sensors than currently possible, thereby enhancing national security through more effective radiological threat detection.
