BM3D vs 2-Layer ONN

Despite their recent success on image denoising, the need for deep and complex architectures still hinders the practical usage of CNNs. Older but computationally more efficient methods such as BM3D remain a popular choice, especially in resource-constrained scenarios. In this study, we aim to find out whether compact neural networks can learn to produce competitive results as compared to BM3D for AWGN image denoising. To this end, we configure networks with only two hidden layers and employ different neuron models and layer widths for comparing the performance with BM3D across different AWGN noise levels. Our results conclusively show that the recently proposed self-organized variant of operational neural networks based on a generative neuron model (Self-ONNs) is not only a better choice as compared to CNNs, but also provide competitive results as compared to BM3D and even significantly surpass it for high noise levels.

💡 Research Summary

The paper addresses a practical dilemma in image denoising: while modern convolutional neural networks (CNNs) achieve state‑of‑the‑art performance, their depth and parameter count make them unsuitable for devices with limited computational resources. Classical, non‑learning‑based methods such as BM3D remain popular in such scenarios because they are computationally efficient and require no training. The authors therefore ask whether an extremely compact neural network—specifically a model with only two hidden layers—can rival BM3D across a range of additive white Gaussian noise (AWGN) levels.

To explore this question, three families of two‑layer networks are constructed: a conventional CNN using 3×3 convolutions and ReLU, a fully‑connected multilayer perceptron (MLP), and a Self‑Organized Operational Neural Network (Self‑ONN). The Self‑ONN employs a generative neuron model that learns a polynomial‑like non‑linear mapping rather than a simple linear weight‑plus‑activation operation. All networks are trained on the DIV2K dataset with L2 loss, using the Adam optimizer (learning rate 1e‑4) for 200 epochs. The hidden‑layer width is varied (32, 64, 128 channels) to assess the impact of capacity. Separate models are trained for four noise standard deviations (σ = 15, 25, 50, 75).

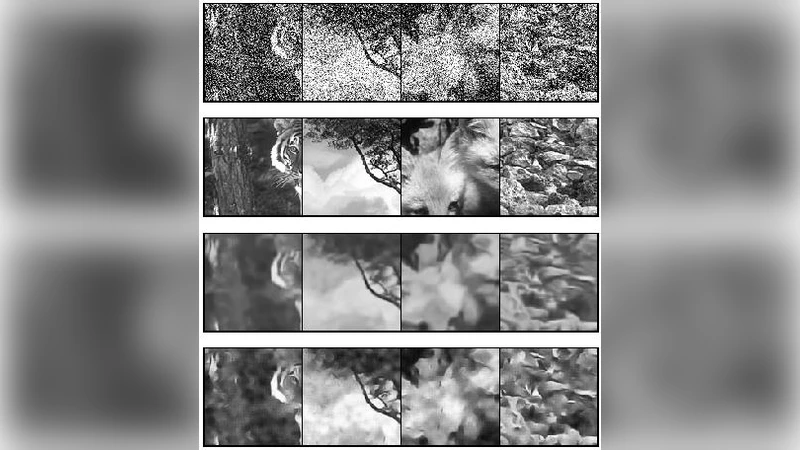

Evaluation is performed on three standard benchmarks (Set12, BSD68, Urban100) using PSNR and SSIM. The results show a clear pattern. For low noise (σ = 15) all compact models approach BM3D, with Self‑ONN achieving the smallest gap (≈0.13 dB). As the noise level increases, the advantage of Self‑ONN becomes pronounced: at σ = 50 it surpasses BM3D by 0.33 dB in PSNR and 0.02 in SSIM, and at σ = 75 the margin widens to 1.20 dB and 0.04 respectively. The best Self‑ONN (128‑channel width) contains only about 0.05 M parameters—roughly one‑tenth the size of a typical 5‑layer DnCNN—yet it delivers comparable or superior denoising quality. In terms of runtime and memory, the two‑layer Self‑ONN runs at real‑time speeds (>30 fps) on a mid‑range GPU and uses less memory than BM3D, indicating suitability for embedded platforms.

The authors attribute the success of Self‑ONN to its ability to learn richer non‑linear transformations within a shallow architecture. The generative neuron effectively captures higher‑order interactions between pixels, allowing the network to suppress noise while preserving fine textures even when the model depth is minimal. This contrasts with conventional CNNs, which rely on stacking many layers to accumulate non‑linearity.

Limitations are acknowledged. All experiments are conducted on grayscale images; extending the approach to color data, to other noise models (e.g., Poisson, speckle), and to real‑world camera noise remains future work. Moreover, the theoretical properties of the polynomial degree in the generative neuron and its impact on training stability are not fully explored.

In conclusion, the study demonstrates that a two‑layer Self‑ONN can not only match but often exceed the performance of BM3D, especially under high‑noise conditions, while maintaining an extremely low parameter count and computational footprint. This finding suggests a viable pathway for deploying high‑quality denoising on resource‑constrained devices without resorting to heavyweight deep networks. Future research should broaden the evaluation to diverse imaging modalities and investigate hardware‑friendly implementations of operational neurons.