Event Generation and Statistical Sampling for Physics with Deep Generative Models and a Density Information Buffer

We present a study of the generation of events from a physical process with deep generative models. The simulation of physical processes requires not only producing physical events, but also ensuring that these events occur with the correct frequencies. We investigate the feasibility of learning both the event generation and the frequency of occurrence with Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs), so that they produce events as Monte Carlo generators do. We study three processes: a simple two-body decay, the process $e^+e^-\to Z \to l^+l^-$, and $p p \to t\bar{t}$ including the decay of the top quarks and a simulation of the detector response. We find that the tested GAN architectures and the standard VAE are not able to learn the distributions precisely. By buffering density information of Monte Carlo events encoded with the encoder of a VAE, we are able to construct a prior for sampling new events from the decoder. The resulting distributions are in very good agreement with real Monte Carlo events, and the events are generated several orders of magnitude faster. Applications of this work include generic density estimation and sampling, targeted event generation via a principal component analysis of encoded ground truth data, anomaly detection, and more efficient importance sampling, e.g. for the phase space integration of matrix elements in quantum field theories.

💡 Research Summary

The paper addresses the computational bottleneck of Monte‑Carlo (MC) event generation in high‑energy physics by exploring deep generative models that can both reproduce the kinematic distributions of physical processes and generate events orders of magnitude faster than traditional pipelines. Three benchmark datasets of increasing complexity are used: (i) a 10‑dimensional toy two‑body decay, (ii) a 16‑dimensional e⁺e⁻ → Z → ℓ⁺ℓ⁻ process, and (iii) a 26‑dimensional pp → tt̄ → 4 jets + ℓℓ + MET scenario that includes parton showering, hadronisation, and a fast detector simulation.

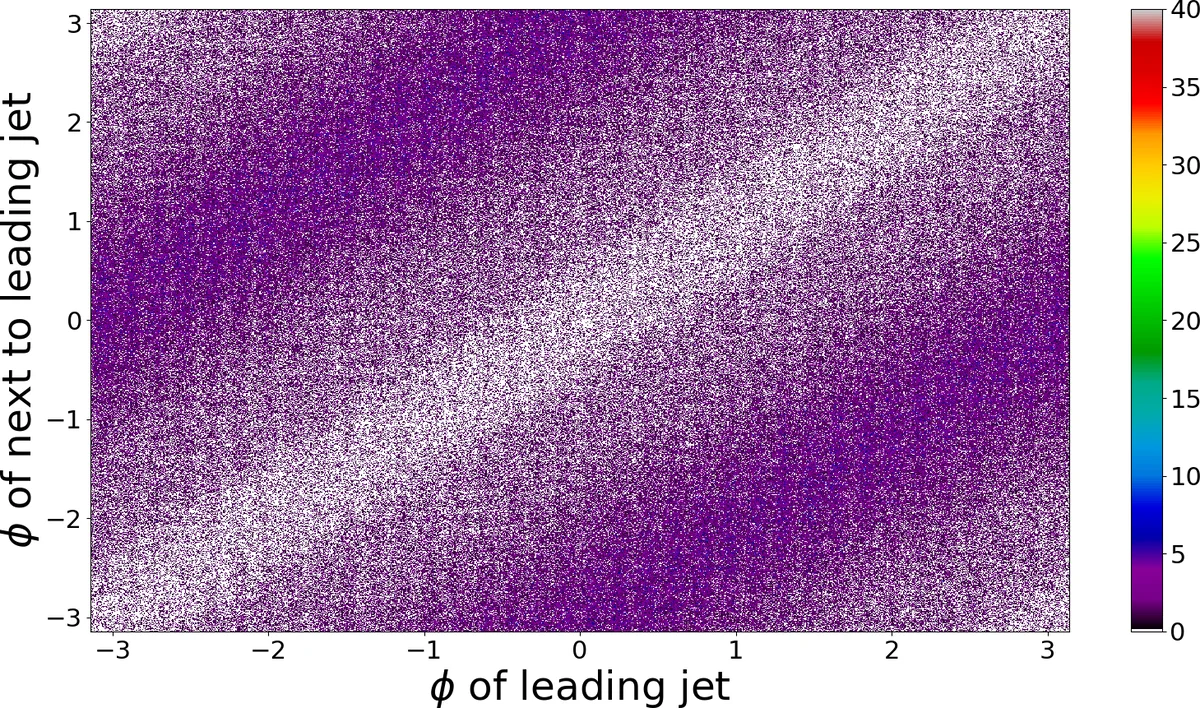

The authors first evaluate several state‑of‑the‑art Generative Adversarial Networks (GANs) – including the original Jensen‑Shannon GAN, Wasserstein GAN, W‑GAN‑GP, Least‑Squares GAN, and MMD‑GAN – using default hyper‑parameters. While GANs can generate plausible samples for low‑dimensional toy data, they consistently fail to learn the azimuthal angle (φ) distribution and other subtle correlations in the higher‑dimensional physics datasets. Mode collapse and training instability are observed, confirming earlier reports that GANs are not well suited for precise density estimation in this domain.

Standard Variational Autoencoders (VAEs) are then examined. VAEs optimise a variational lower bound consisting of a reconstruction term (mean‑squared error) and a Kullback‑Leibler (KL) divergence that regularises the latent space toward a unit Gaussian prior. The authors find that the KL term, when weighted equally with the reconstruction loss, forces the latent distribution to be overly Gaussian, erasing the intricate structure of the true MC data. Consequently, generated events deviate noticeably from the reference distributions, especially in the tails of kinematic variables.
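As a rough illustration of the two loss terms described above, the following sketch computes a mean-squared reconstruction error and the closed-form KL divergence between a diagonal Gaussian and a unit Gaussian. The function names and the `log_var` parameterisation are assumptions made for this example and are not taken from the paper.

```python
import math


def mse(x, x_hat):
    """Mean-squared error between an event and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def kl_to_unit_gaussian(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, log_var))


def vae_loss(x, x_hat, mu, log_var):
    """Equal-weight sum of the reconstruction and KL terms (the standard case)."""
    return mse(x, x_hat) + kl_to_unit_gaussian(mu, log_var)
```

The KL term vanishes only when every latent dimension has zero mean and unit variance, which is the pull toward a Gaussian latent space that the paragraph above describes.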

To overcome these limitations, the authors propose the “B‑VAE” (Weighted‑VAE with a Density Information Buffer). Two key innovations are introduced:

- Weighted loss: a hyper-parameter B (0 < B < 1) multiplies the KL term, while (1 − B) multiplies the reconstruction loss. By setting B ≈ 0.1–0.3 the model prioritises accurate reconstruction, allowing the latent codes to retain the true data geometry.

- Density Information Buffer: after training the encoder, the latent codes of all MC events are collected and a non-parametric density estimate (Kernel Density Estimation, Gaussian Mixture Model, or histogram) is built. This empirical prior replaces the standard normal prior during generation, ensuring that sampling from the latent space follows the actual distribution observed in the training data.
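The two ingredients can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the class name `LatentBuffer`, the isotropic Gaussian kernel, and the `bandwidth` parameter are hypothetical choices standing in for whichever density estimate is actually used, and the decoder that would turn a sampled latent vector into an event is omitted.

```python
import random


def weighted_vae_loss(recon, kl, B):
    """(1 - B) * reconstruction + B * KL, with 0 < B < 1 as described above."""
    if not 0.0 < B < 1.0:
        raise ValueError("B must lie strictly between 0 and 1")
    return (1.0 - B) * recon + B * kl


class LatentBuffer:
    """Stores encoded latent codes and samples from a Gaussian KDE over them."""

    def __init__(self, codes, bandwidth, seed=None):
        if not codes:
            raise ValueError("buffer needs at least one latent code")
        self.codes = [list(c) for c in codes]
        self.bandwidth = bandwidth  # kernel width, an assumed free parameter
        self.rng = random.Random(seed)

    def sample(self):
        """Pick a stored code uniformly, then smear it with the Gaussian kernel."""
        centre = self.rng.choice(self.codes)
        return [c + self.rng.gauss(0.0, self.bandwidth) for c in centre]
```

Sampling a kernel density estimate this way never requires evaluating the density itself, so the cost per draw stays constant regardless of buffer size.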

The B-VAE is trained on the three datasets using the Adam optimiser followed by stochastic gradient descent, with batch sizes > 100 so that a single Monte Carlo draw of the latent variable per data point suffices. Extensive hyper-parameter scans (latent dimension, network depth, B value) are performed to find configurations that balance the KL and reconstruction terms while preserving generation speed.
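The single draw per data point refers to sampling the latent variable once for each event in a batch. A minimal sketch of such a draw, assuming the common reparameterised form z = μ + σ·ε with the encoder returning a mean and a log-variance (the function name and signature are illustrative):

```python
import math
import random


def sample_latent(mu, log_var, rng=random):
    """One reparameterised draw z = mu + sigma * eps, with eps ~ N(0, 1) per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]
```

With a large batch, the noise from using one draw per event averages out across the batch when the loss is summed.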

Results:

- Toy model (10 D): B-VAE reproduces the relativistic dispersion relation E² − p² − m² = 0 with sub-percent deviations. GANs and standard VAEs show noticeable bias in the energy-momentum correlation.

- Z-boson decay (16 D): The azimuthal angle φ, which GANs completely miss, is accurately reproduced by B-VAE. One-dimensional Kolmogorov-Smirnov (KS) p-values exceed 0.9 for all variables, and two-dimensional Wasserstein distances are comparable to those between independent MC samples.

- tt̄ production (26 D): Despite the presence of four jets, one or two leptons, missing transverse energy, and detector smearing, B-VAE matches the full set of kinematic distributions. The generation time per event drops from ~minutes (full MC chain) to ~0.1 ms on a single GPU, a speed-up of 10³–10⁴×.
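Two of the checks mentioned in these results are simple to express in code. The sketch below, with illustrative function names, computes the mass-shell residual E² − p² − m² for a single particle in natural units and the two-sample KS statistic between a generated and a reference sample. It returns only the statistic; converting it to a p-value would need an additional step that is not shown.

```python
def mass_shell_residual(E, px, py, pz, m):
    """E^2 - |p|^2 - m^2 in natural units; zero for an on-shell particle."""
    return E * E - (px * px + py * py + pz * pz) - m * m


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Step past every entry equal to x so that ties are handled together.
        while i < len(a) and a[i] == x:
            i += 1
        while j < len(b) and b[j] == x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d
```

A statistic near 0 means the two empirical distributions are nearly indistinguishable, while 1 means the samples do not overlap at all.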

The authors also perform a Principal Component Analysis (PCA) on the latent space of the B‑VAE for the tt̄ dataset. The leading components correlate strongly with physically meaningful quantities such as total transverse momentum and invariant masses. By manipulating these components, they demonstrate conditional generation of events with desired features, opening the door to targeted simulations and anomaly detection.
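The idea of finding and steering a leading latent direction can be illustrated without any external library. The sketch below estimates the first principal axis of a set of latent codes by power iteration on their covariance matrix and then shifts a code along that axis. The function names, the fixed start vector, and the iteration count are assumptions for this example; a real analysis would more likely use a full PCA routine.

```python
import math


def leading_component(codes, iterations=200):
    """Mean and leading principal axis of latent codes (needs at least two codes)."""
    n, d = len(codes), len(codes[0])
    mean = [sum(c[k] for c in codes) / n for k in range(d)]
    centred = [[c[k] - mean[k] for k in range(d)] for c in codes]
    cov = [[sum(r[i] * r[j] for r in centred) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Fixed start vector; power iteration fails if it is orthogonal to the answer.
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break  # no variance along the current direction
        v = [x / norm for x in w]
    return mean, v


def shift_along(code, direction, step):
    """Move a latent code by `step` along a unit direction before decoding."""
    return [c + step * u for c, u in zip(code, direction)]
```

Decoding codes shifted along such an axis is one way to obtain events biased toward a chosen feature, provided the axis does correlate with that feature.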

Additional sanity checks include: (a) fitting Gaussian Mixture Models and Kernel Density Estimators directly to the MC data and confirming that the B-VAE’s latent prior reproduces these fits; (b) verifying that the decoder learns a near-identity mapping when the latent space is forced to be the same as the input, confirming that the model does not hallucinate spurious structures.

Implications and Applications:

- Fast, high-fidelity event generation: B-VAE can replace expensive parts of the MC pipeline for feasibility studies, parameter scans, or large-scale background estimations.

- Importance sampling for matrix-element integration: The empirical latent prior can be used as an adaptive proposal distribution, dramatically reducing variance in phase-space integrals.

- Anomaly detection: Events that lie in low-density regions of the latent buffer can be flagged as potential new-physics signatures.

- Conditional generation: By steering latent PCA components, one can generate samples with specific kinematic cuts without discarding a large fraction of generated events, improving efficiency over traditional “generate-and-filter” approaches.
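The anomaly-detection use case can be sketched as a density threshold in latent space. The example below assumes an isotropic Gaussian kernel density estimate over the buffered codes; the function names, the `bandwidth`, and the `threshold` are hypothetical parameters that would need tuning on real data.

```python
import math


def kde_log_density(z, buffer, bandwidth):
    """Log of an isotropic Gaussian KDE over buffered latent codes, evaluated at z."""
    d = len(z)
    log_norm = -0.5 * d * math.log(2.0 * math.pi * bandwidth ** 2) - math.log(len(buffer))
    exponents = [
        -0.5 * sum((a - b) ** 2 for a, b in zip(z, c)) / bandwidth ** 2
        for c in buffer
    ]
    top = max(exponents)  # log-sum-exp trick for numerical stability
    return log_norm + top + math.log(sum(math.exp(e - top) for e in exponents))


def is_anomalous(z, buffer, bandwidth, threshold):
    """Flag a latent code whose log-density falls below the chosen threshold."""
    return kde_log_density(z, buffer, bandwidth) < threshold
```

The same log-density could in principle serve as the proposal density when the buffer is used for importance sampling, since the weights there require evaluating the proposal at each sampled point.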

The paper concludes that the combination of a weighted VAE loss and a data‑driven latent prior provides a robust, scalable framework for learning the full probability density of complex particle‑physics processes. Future work will explore extensions to sequential event generation, integration with detector‑level image data, and direct training on experimental data to further reduce reliance on computationally intensive MC simulations.
