User interface for in-vehicle systems with on-wheel finger spreading gestures and head-up displays

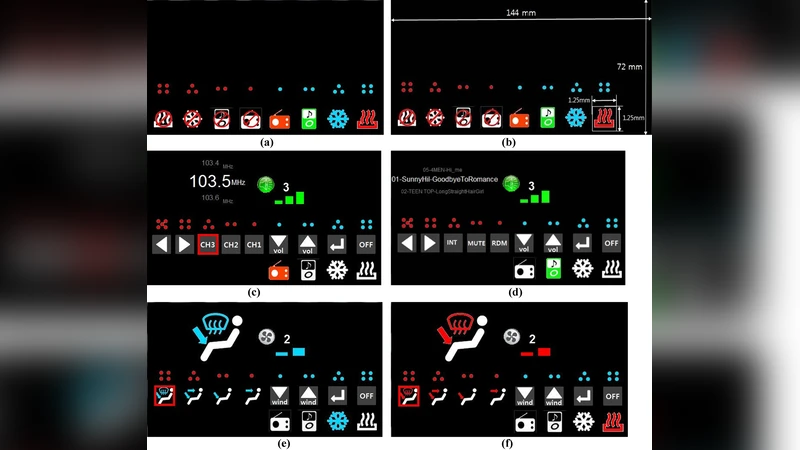

Interacting with an in-vehicle system through a central console is known to induce visual and biomechanical distractions, thereby delaying the driver's hazard recognition and response and significantly increasing the risk of an accident. To address this problem, various hand gestures have been developed. Although such gestures can reduce visual demand, they are limited in number and lack passive feedback; they can also be vague and imprecise, difficult to understand and remember, and culture-bound. To overcome these limitations, we developed a novel on-wheel finger spreading gestural interface combined with a head-up display (HUD), which allows the user to select a menu item displayed on the HUD with a gesture. The interface displays the audio and air-conditioning functions of the central console on the HUD and lets the driver control them by extending a specific number of fingers while keeping both hands on the steering wheel. We compared the effectiveness of the proposed hybrid interface against a traditional tactile central-console interface, using objective measurements and subjective evaluations of both vehicle and driver behaviour. A total of 32 subjects were recruited for experiments on a driving simulator equipped with the proposed interface under various scenarios. The results showed that the proposed interface was approximately 20% faster in emergency response than the traditional interface, whereas its performance in maintaining vehicle speed and lane position was not significantly different from that of the traditional one.

💡 Research Summary

The paper addresses the well‑known problem that interacting with a vehicle's central console forces drivers to divert their eyes from the road and their hands from the steering wheel, increasing visual and biomechanical distraction and consequently raising accident risk. To mitigate this, the authors propose a hybrid user interface that combines an on‑wheel finger‑spreading gesture with a head‑up display (HUD). The system recognises the number of extended fingers (1–5) while the driver's hands remain on the steering wheel; the selected command is immediately shown on the HUD, providing visual feedback without requiring the driver to look down at a console. The system is designed to control common functions such as audio and climate settings.
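The selection step can be pictured as indexing into the list of options currently shown on the HUD: a count of n extended fingers picks the n‑th option. The sketch below illustrates that mapping only; the menu names, option labels, and the `HudState` and `select_by_finger_count` identifiers are hypothetical, and the finger‑detection stage itself is not modelled.

```python
from dataclasses import dataclass

# Hypothetical menu contents: the paper mentions audio and climate functions,
# but these specific option labels are illustrative assumptions.
HUD_MENUS: dict[str, list[str]] = {
    "audio": ["Volume up", "Volume down", "Next track", "Previous track", "Mute"],
    "climate": ["Temp up", "Temp down", "Fan up", "Fan down", "A/C on/off"],
}


@dataclass
class HudState:
    menu: str  # name of the menu currently projected on the HUD

    def options(self) -> list[str]:
        return HUD_MENUS[self.menu]


def select_by_finger_count(state: HudState, fingers: int) -> str:
    """Map a recognised count of spread fingers (1-5) to the HUD option at that position."""
    options = state.options()
    if not 1 <= fingers <= len(options):
        raise ValueError(f"finger count {fingers} matches none of {len(options)} options")
    return options[fingers - 1]  # 1 finger -> first option, 5 fingers -> fifth


# Example: with the audio menu on the HUD, spreading three fingers picks the third item.
print(select_by_finger_count(HudState("audio"), 3))
```

Because a single hand offers only five distinct counts, each HUD screen in this sketch can expose at most five options at once.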

A user study was conducted with 32 participants in a high‑fidelity driving simulator. Each participant performed a series of tasks under three driving scenarios: normal cruising, an emergency braking event, and a dual‑task condition that added secondary workload. For each scenario, the authors compared the hybrid interface against a conventional tactile console interface. Objective metrics included emergency response time, speed deviation, and lane‑keeping deviation; subjective metrics comprised NASA‑TLX workload scores and SUS usability ratings.
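The summary does not define the objective metrics precisely. A common reading, assumed here rather than taken from the paper, is that speed deviation and lane‑keeping deviation are sample standard deviations of logged speed and lateral lane position (the latter often called SDLP), and that response time is the interval from hazard onset to the first brake input. The function names below are hypothetical.

```python
import statistics


def speed_deviation(speeds_kmh: list[float]) -> float:
    """Sample standard deviation of vehicle speed over a drive segment (km/h)."""
    return statistics.stdev(speeds_kmh)


def lane_deviation(lateral_positions_m: list[float]) -> float:
    """Sample standard deviation of lateral lane position (SDLP), in metres."""
    return statistics.stdev(lateral_positions_m)


def response_time(hazard_onset_s: float, brake_onset_s: float) -> float:
    """Seconds from the hazard appearing to the driver's first brake input."""
    if brake_onset_s < hazard_onset_s:
        raise ValueError("brake input precedes hazard onset")
    return brake_onset_s - hazard_onset_s


# Example with made-up simulator logs (not data from the study).
print(speed_deviation([58.0, 60.0, 62.0]))
print(lane_deviation([0.10, -0.05, 0.20, 0.00]))
print(response_time(hazard_onset_s=12.0, brake_onset_s=13.25))
```

Lower values of all three measures indicate steadier control or faster reaction, which is how the comparison between the two interfaces would be read under these assumptions.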

Results showed that in the emergency braking scenario the hybrid interface reduced response time by roughly 20% relative to the traditional console, suggesting that keeping the driver's gaze on the HUD and eliminating the need to reach for controls speeds up critical actions. In the normal and dual‑task scenarios, there were no statistically significant differences in speed or lane‑keeping performance, suggesting that the new interface does not impair routine vehicle control. Subjectively, participants reported slightly lower mental workload with the hybrid system, though the difference was modest.
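Read as a relative reduction in mean emergency response time (an interpretation; the summary does not report the underlying times), the 20% figure corresponds to the following, where $\bar{T}_{\text{console}}$ and $\bar{T}_{\text{hybrid}}$ are the mean response times with each interface:

$$
\Delta = \frac{\bar{T}_{\text{console}} - \bar{T}_{\text{hybrid}}}{\bar{T}_{\text{console}}} \approx 0.20
\quad\Longrightarrow\quad
\bar{T}_{\text{hybrid}} \approx 0.8\,\bar{T}_{\text{console}}
$$

In other words, under this reading a driver using the hybrid interface needs about four fifths of the time needed with the console to respond to the emergency event.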

The study highlights several strengths: a clear motivation grounded in driver safety, an innovative combination of tactile‑free gesture input and HUD feedback, and a balanced experimental design that captures both objective performance and user perception. However, limitations are also evident. The gesture set is limited to finger count, constraining the number of distinct commands and potentially leading to ambiguity as the command set expands. Recognition accuracy may degrade when drivers wear gloves or experience hand tremor, and HUD visibility can be compromised under bright sunlight or adverse weather, requiring adaptive brightness or contrast control. Moreover, the simulator environment cannot fully replicate real‑world driving dynamics, so field validation is necessary.

Future work suggested by the authors includes expanding the gesture vocabulary (e.g., incorporating hand orientation or dynamic motions), improving robustness of finger detection under varied lighting and glove conditions, and integrating adaptive HUD technologies that adjust luminance and projection angle based on ambient light. A broader multimodal approach—combining voice, gesture, and HUD—could allow the system to select the most appropriate input modality according to driving context, further reducing distraction.

In summary, the research provides empirical evidence that a steering‑wheel‑based finger‑spreading gesture paired with a HUD can accelerate emergency responses without compromising normal driving performance. This hybrid interface represents a promising direction for next‑generation in‑vehicle infotainment systems, offering a pathway to reduce driver distraction while maintaining intuitive, eyes‑free control of essential vehicle functions.
