Kanerva++: extending The Kanerva Machine with differentiable, locally block allocated latent memory

Episodic and semantic memory are critical components of the human memory model. The theory of complementary learning systems (McClelland et al., 1995) suggests that the compressed representation produced by a serial event (episodic memory) is later restructured to build a more generalized form of reusable knowledge (semantic memory). In this work we develop a new principled Bayesian memory allocation scheme that bridges the gap between episodic and semantic memory via a hierarchical latent variable model. We take inspiration from traditional heap allocation and extend the idea of locally contiguous memory to the Kanerva Machine, enabling a novel differentiable block-allocated latent memory. In contrast to the Kanerva Machine, we simplify the process of memory writing by treating it as a fully feed-forward deterministic process, relying on the stochasticity of the read key distribution to disperse information within the memory. We demonstrate that this allocation scheme improves performance in memory-conditional image generation, resulting in new state-of-the-art conditional likelihood values on binarized MNIST (≤41.58 nats/image) and binarized Omniglot (≤66.24 nats/image), as well as competitive performance on CIFAR10, DMLab Mazes, Celeb-A and ImageNet32x32.

💡 Research Summary

The paper introduces Kanerva++ (K++), a novel memory‑augmented generative model that bridges episodic (fast) and semantic (slow) memory by extending the Kanerva Machine (KM) with a differentiable, locally block‑allocated latent memory. Inspired by traditional heap allocation, K++ treats memory as a fixed‑size 3‑D tensor and allocates contiguous sub‑blocks to store information about an episode of data. The key contribution is a deterministic, feed‑forward write operation: an episode X is encoded by a temporal‑shift‑module‑enhanced ResNet encoder (f_enc), pooled, and then transformed by a deterministic writer network (f_mem) into the full memory M. No iterative optimization (e.g., OLS) is required, eliminating the cubic‑time bottleneck of the Dynamic Kanerva Machine (DKM).
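The write path described above (encode, pool, map to memory) can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the single `tanh` layer standing in for f_enc, the linear map standing in for f_mem, mean pooling, and all shapes are assumptions chosen for brevity.

```python
import numpy as np

def write_memory(episode, w_enc, w_mem, mem_shape):
    """Deterministic feed-forward write: episode (T, D) -> memory tensor.

    w_enc and w_mem are hypothetical stand-ins for the encoder f_enc and
    the writer network f_mem; the real model uses deep networks.
    """
    embeddings = np.tanh(episode @ w_enc)   # (T, H): one embedding per episode item
    pooled = embeddings.mean(axis=0)        # (H,): pool over the episode (assumed mean)
    flat = pooled @ w_mem                   # (C*H*W,): writer output
    return flat.reshape(mem_shape)          # fixed-size 3-D memory M

rng = np.random.default_rng(0)
episode = rng.random((16, 784))             # 16 flattened 28x28 images (toy data)
mem_shape = (4, 8, 8)                       # assumed memory size
w_enc = rng.standard_normal((784, 32)) * 0.05
w_mem = rng.standard_normal((32, int(np.prod(mem_shape)))) * 0.05

memory = write_memory(episode, w_enc, w_mem, mem_shape)
```

The write is a single forward pass with no inner optimization loop, so writing the same episode twice yields the same memory.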

Reading is performed via K stochastic keys y_tk sampled from a learned Gaussian (μ_key, σ_key). Each key drives a Spatial Transformer (ST) that extracts a localized, contiguous memory sub‑region M̂_k from M. These sub‑regions parameterize the prior over the latent variables Z: p_θ(Z|M̂,Y) = N(μ_z, σ_z²). The model’s variational lower bound (ELBO) consists of three terms: (1) the reconstruction log‑likelihood, (2) the KL divergence between the amortized posterior q_φ(Z|X) and the memory‑conditioned prior p_θ(Z|M̂,Y), and (3) the KL divergence between the key posterior q_φ(Y|X) and a standard normal prior. Because M is deterministic, the computational cost of inference does not scale with memory size; only the number of keys K influences runtime.
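As a rough illustration of how the three ELBO terms combine, the sketch below evaluates them for diagonal Gaussians and a Bernoulli decoder. The Bernoulli likelihood (natural for binarized images), the function names, and the tuple-based argument layout are assumptions made for this example; only the three-term structure comes from the summary above.

```python
import numpy as np

def gaussian_kl(mu_q, sigma_q, mu_p, sigma_p):
    """KL(N(mu_q, sigma_q^2) || N(mu_p, sigma_p^2)) for diagonal Gaussians, summed."""
    var_q, var_p = sigma_q ** 2, sigma_p ** 2
    return np.sum(
        np.log(sigma_p / sigma_q) + (var_q + (mu_q - mu_p) ** 2) / (2.0 * var_p) - 0.5
    )

def bernoulli_log_likelihood(x, probs, eps=1e-7):
    """Log-likelihood of binary data x under per-pixel Bernoulli probabilities."""
    probs = np.clip(probs, eps, 1.0 - eps)
    return np.sum(x * np.log(probs) + (1.0 - x) * np.log(1.0 - probs))

def elbo(x, probs, q_z, p_z, q_y):
    """ELBO = reconstruction - KL(q(Z|X) || p(Z|M_hat, Y)) - KL(q(Y|X) || N(0, I)).

    q_z, p_z and q_y are (mean, std) tuples; p_z is the memory-conditioned prior.
    """
    recon = bernoulli_log_likelihood(x, probs)
    kl_z = gaussian_kl(*q_z, *p_z)
    mu_y, sigma_y = q_y
    kl_y = gaussian_kl(mu_y, sigma_y, np.zeros_like(mu_y), np.ones_like(sigma_y))
    return recon - kl_z - kl_y
```

When the posterior over Z matches the memory-conditioned prior and the key posterior is standard normal, both KL terms vanish and the bound reduces to the reconstruction term.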

K++ also incorporates a Temporal Shift Module (TSM) into the encoder, allowing the network to capture temporal context across the episode and produce richer embeddings for memory writing. Empirically, this addition yields a 6.3 nats/image improvement over DKM on binarized Omniglot.
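For readers unfamiliar with temporal shifting, the sketch below shows the basic operation on an episode of feature maps: one slice of channels is taken from the next time step, another from the previous one, and the rest is left untouched. The `fold_div` parameter, the zero padding at the episode boundaries, and the `(T, C, H, W)` layout follow the commonly described TSM formulation and are assumptions here; the summary does not specify how K++ configures the module.

```python
import numpy as np

def temporal_shift(x, fold_div=8):
    """Shift a fraction of channels along the time axis of x with shape (T, C, H, W).

    The first C // fold_div channels receive features from the next time step,
    the following C // fold_div channels from the previous time step, and the
    remaining channels are copied unchanged. Boundaries are zero-padded.
    """
    fold = x.shape[1] // fold_div
    out = np.zeros_like(x)
    out[:-1, :fold] = x[1:, :fold]                    # pull from the future
    out[1:, fold:2 * fold] = x[:-1, fold:2 * fold]    # pull from the past
    out[:, 2 * fold:] = x[:, 2 * fold:]               # untouched channels
    return out
```

The shift itself adds no parameters or multiplications; it only moves data so that the subsequent convolution at each time step sees features from its neighbours in the episode.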

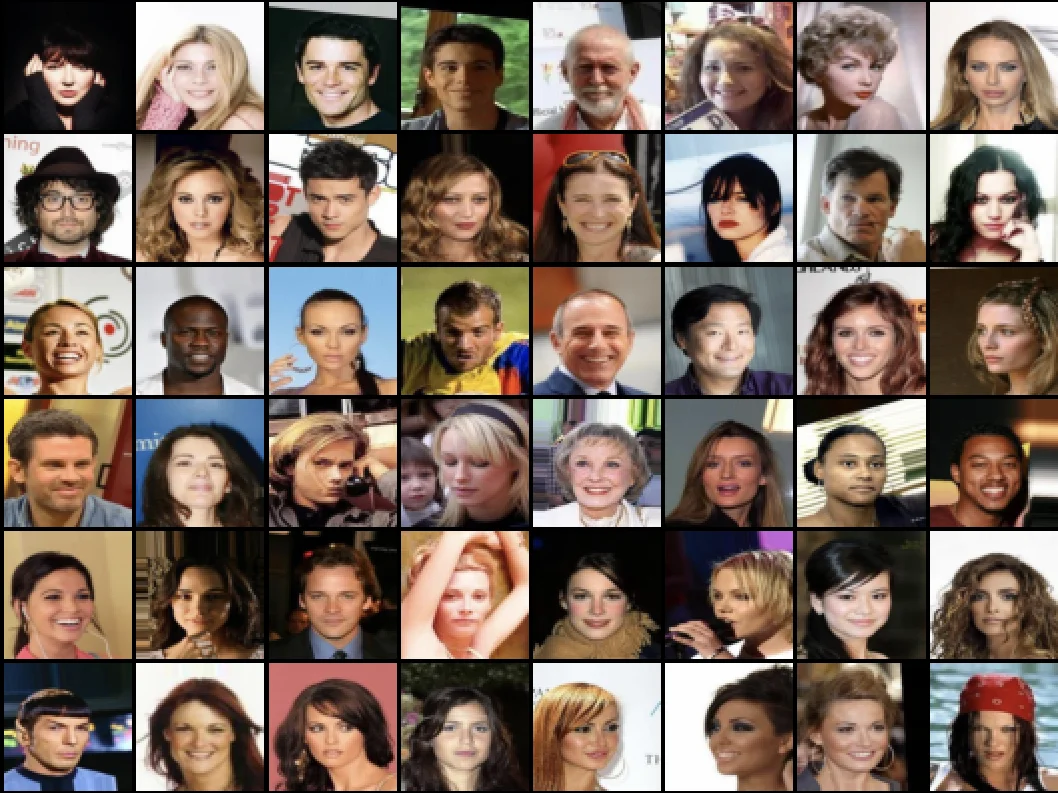

The authors evaluate K++ on several benchmarks. On binarized MNIST and Omniglot, K++ achieves new state‑of‑the‑art bounds on the conditional negative log‑likelihood (≤ 41.58 nats/image and ≤ 66.24 nats/image, respectively). It also delivers competitive results on CIFAR‑10, DMLab Mazes, Celeb‑A, and ImageNet‑32×32. Ablation studies show that increasing the number of memory blocks K improves compression but may reduce reconstruction fidelity, offering a controllable trade‑off.

In comparison to KM and DKM, K++ offers several advantages: (i) deterministic writes remove the need for costly inner‑loop optimization; (ii) block‑wise addressing via spatial transformers provides O(K) read complexity versus O(N) slot‑wise attention; (iii) the stochastic key distribution preserves expressive power despite deterministic memory; (iv) the model aligns with psychological findings that visual memory changes deterministically.
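Point (ii) can be made concrete with a small block-read sketch: each key selects a contiguous window of the memory, which is resampled to a fixed size with bilinear interpolation, so the work per read depends on the number of keys and the block size rather than on the number of memory slots. The three-parameter key (scale and two translations in normalized [-1, 1] coordinates), the border clamping, and the function names are assumptions for illustration; the summary states only that keys drive a spatial transformer.

```python
import numpy as np

def read_block(memory, key, out_size):
    """Extract one (C, out_size, out_size) block from memory of shape (C, H, W)."""
    _, h, w = memory.shape
    scale, tx, ty = key
    lin = np.linspace(-1.0, 1.0, out_size)
    gy, gx = np.meshgrid(lin, lin, indexing="ij")
    # Affine map from the output grid to normalized source coordinates,
    # then to pixel coordinates, clamped to the memory border.
    px = np.clip((scale * gx + tx + 1.0) * 0.5 * (w - 1), 0, w - 1)
    py = np.clip((scale * gy + ty + 1.0) * 0.5 * (h - 1), 0, h - 1)
    x0, y0 = np.floor(px).astype(int), np.floor(py).astype(int)
    x1, y1 = np.minimum(x0 + 1, w - 1), np.minimum(y0 + 1, h - 1)
    fx, fy = px - x0, py - y0
    # Bilinear interpolation between the four neighbouring memory cells.
    top = memory[:, y0, x0] * (1 - fx) + memory[:, y0, x1] * fx
    bottom = memory[:, y1, x0] * (1 - fx) + memory[:, y1, x1] * fx
    return top * (1 - fy) + bottom * fy

def read_memory(memory, mu_key, sigma_key, num_keys, out_size, rng):
    """Sample num_keys keys by reparameterization and read one block per key."""
    keys = mu_key + sigma_key * rng.standard_normal((num_keys, 3))
    return np.stack([read_block(memory, k, out_size) for k in keys])
```

With the identity key `(1.0, 0.0, 0.0)` and `out_size` equal to the memory's spatial size, `read_block` returns the memory itself up to floating-point error; smaller scales zoom into a local region.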

Overall, Kanerva++ demonstrates that a principled Bayesian allocation scheme, combined with modern differentiable programming tools (spatial transformers, TSM), can yield a scalable, high‑performing memory‑augmented generative model that more closely mirrors the dual‑system architecture of human memory.
