Architectural Decay as Predictor of Issue- and Change-Proneness

💡 Research Summary

The paper investigates whether architectural decay—manifested as “architectural smells”—can be used to predict a software system’s future issue‑proneness and change‑proneness. The authors analyze ten open‑source projects from the Apache Software Foundation, covering 466 versions in total. For each version they recover three architectural views using the ARCADE tool suite (techniques ACDC, ARC, and PKG) and automatically detect eleven distinct architectural smells (e.g., dependency cycles, duplicate functionality, logical coupling, unused interfaces).
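To make one of these smells concrete: a dependency cycle can be read as a group of components that all reach each other in the recovered dependency graph, that is, a strongly connected component with more than one member. The sketch below is only an illustration under that reading. It is not ARCADE's detector, and the adjacency-map representation, the function name, and the component names are hypothetical.

```python
from typing import Dict, List, Set


def find_dependency_cycles(deps: Dict[str, Set[str]]) -> List[Set[str]]:
    """Return groups of components that depend on each other cyclically.

    `deps` maps a component name to the components it depends on
    (a hypothetical representation of a recovered architecture).
    Each returned group is a strongly connected component with at
    least two members, found with Tarjan's algorithm.
    """
    index: Dict[str, int] = {}
    lowlink: Dict[str, int] = {}
    stack: List[str] = []
    on_stack: Set[str] = set()
    cycles: List[Set[str]] = []
    counter = 0

    def visit(node: str) -> None:
        nonlocal counter
        index[node] = lowlink[node] = counter
        counter += 1
        stack.append(node)
        on_stack.add(node)
        for target in deps.get(node, ()):
            if target not in index:
                visit(target)
                lowlink[node] = min(lowlink[node], lowlink[target])
            elif target in on_stack:
                lowlink[node] = min(lowlink[node], index[target])
        if lowlink[node] == index[node]:
            # `node` is the root of a strongly connected component.
            component: Set[str] = set()
            while True:
                member = stack.pop()
                on_stack.discard(member)
                component.add(member)
                if member == node:
                    break
            if len(component) > 1:
                cycles.append(component)

    all_nodes = set(deps) | {t for targets in deps.values() for t in targets}
    for node in sorted(all_nodes):
        if node not in index:
            visit(node)
    return cycles


# Hypothetical component graph: "core" and "storage" depend on each other.
example = {"ui": {"core"}, "core": {"storage"}, "storage": {"core"}, "util": set()}
cycles = find_dependency_cycles(example)
```

For this example graph, `cycles` contains the single group `{"core", "storage"}`. Self-dependencies are deliberately ignored, and the recursive traversal is adequate for small graphs only.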

Implementation issues are harvested from the projects’ Jira issue trackers, including bug reports, feature requests, improvements, and tasks. The authors map each issue to the architectural smells present in the versions it affects, defining an “infection” relationship when the issue’s fixing commits modify files that belong to a smell instance. This mapping yields a labeled dataset where each version is annotated with binary targets: whether it is issue‑prone and whether it is change‑prone.
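The "infection" relationship described above reduces to two checks: the smell belongs to a version the issue affects, and the issue's fixing commits touch at least one file of the smell instance. A minimal sketch of that rule follows. The record types, field names, and issue keys are hypothetical and only illustrate the idea, not the authors' tooling.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, List


@dataclass(frozen=True)
class SmellInstance:
    smell_id: str
    smell_type: str
    version: str            # version in which the smell was detected
    files: FrozenSet[str]   # files that make up the smell instance


@dataclass(frozen=True)
class Issue:
    key: str
    affected_versions: FrozenSet[str]
    fixed_files: FrozenSet[str]  # files modified by the fixing commits


def infected_issues(
    smells: Iterable[SmellInstance], issues: Iterable[Issue]
) -> Dict[str, List[str]]:
    """Map each smell id to the keys of the issues it 'infects'."""
    issue_list = list(issues)  # allow any iterable, including generators
    result: Dict[str, List[str]] = {}
    for smell in smells:
        result[smell.smell_id] = [
            issue.key
            for issue in issue_list
            if smell.version in issue.affected_versions
            and smell.files & issue.fixed_files  # at least one shared file
        ]
    return result


smells = [
    SmellInstance("s1", "dependency_cycle", "1.0", frozenset({"a.java", "b.java"})),
    SmellInstance("s2", "unused_interface", "1.0", frozenset({"c.java"})),
]
issues = [
    Issue("PROJ-1", frozenset({"1.0"}), frozenset({"b.java", "x.java"})),
    Issue("PROJ-2", frozenset({"2.0"}), frozenset({"a.java"})),
]
mapping = infected_issues(smells, issues)
```

Here `PROJ-1` is attributed to `s1` because it affects version 1.0 and its fix touches `b.java`, while `PROJ-2` is not, because it affects a different version.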

The raw data are prepared in WEKA, which covers labeling and class balancing, and the resulting models are evaluated with 10-fold cross-validation. Several machine-learning algorithms, including Random Forest, Support Vector Machine, and Logistic Regression, are trained to predict the two targets from the smell-derived feature vectors alone. Two research questions guide the evaluation.
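The study performs these steps in WEKA. Purely to illustrate what class balancing and 10-fold cross-validation do, here is a standard-library sketch. It assumes random oversampling of the minority class, which the summary does not specify, and both function names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def oversample_minority(labels: Sequence[int], seed: int = 0) -> List[int]:
    """Return row indices in which both classes appear equally often.

    Rows of the smaller class are re-drawn at random (with replacement)
    until it matches the larger class. Labels are assumed to be 0 or 1.
    """
    rng = random.Random(seed)
    by_class = {0: [], 1: []}
    for i, label in enumerate(labels):
        by_class[label].append(i)
    minority, majority = sorted(by_class.values(), key=len)
    if not minority:
        raise ValueError("both classes need at least one example")
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    return majority + minority + extra


def k_fold_indices(
    n_rows: int, k: int = 10, seed: int = 0
) -> List[Tuple[List[int], List[int]]]:
    """Split row indices into k (train, test) pairs; each row is tested once."""
    if not 2 <= k <= n_rows:
        raise ValueError("k must be between 2 and the number of rows")
    order = list(range(n_rows))
    random.Random(seed).shuffle(order)
    folds = [order[i::k] for i in range(k)]
    return [
        ([row for j, fold in enumerate(folds) if j != i for row in fold], folds[i])
        for i in range(k)
    ]


labels = [1, 1, 1, 1, 1, 1, 0, 0]          # six issue-prone, two not
balanced = oversample_minority(labels)      # indices into `labels`
splits = k_fold_indices(n_rows=20, k=10)    # ten (train, test) index pairs
```

One design point worth noting: if balancing is applied before splitting, duplicated rows can land in both the training and the test fold, so a careful setup balances only the training portion of each fold.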

RQ1 – System-specific prediction: Models trained on historical versions of the same system achieve high predictive performance. Precision and recall are at least 70% for all systems and reach up to 95% for certain architectural views. This indicates that architectural smells have a consistent, measurable impact on future defects and code churn, independent of other evolving factors such as system size. Practitioners can therefore use smell detection to flag components that are likely to cause future maintenance problems and plan refactorings proactively.
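For reference, the two reported metrics are defined for the positive class (for example, "issue-prone") as precision = TP / (TP + FP) and recall = TP / (TP + FN). A small sketch with made-up labels, not data from the study:

```python
from typing import Sequence, Tuple


def precision_recall(actual: Sequence[int], predicted: Sequence[int]) -> Tuple[float, float]:
    """Compute precision and recall for the positive class (label 1)."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == 1 and p == 1)  # correctly flagged
    fp = sum(1 for a, p in pairs if a == 0 and p == 1)  # flagged but fine
    fn = sum(1 for a, p in pairs if a == 1 and p == 0)  # missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Illustrative labels only: 1 = issue-prone, 0 = not issue-prone.
actual = [1, 1, 1, 0, 0, 1]
predicted = [1, 1, 0, 0, 1, 1]
precision, recall = precision_recall(actual, predicted)  # (0.75, 0.75)
```

In this toy case three of the four flagged items are truly issue-prone (precision 0.75) and three of the four issue-prone items are found (recall 0.75).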

RQ2 – Cross-project (general-purpose) prediction: The authors also train models on a pooled dataset comprising unrelated projects and test them on a held-out system. Although the cross-project models are slightly less accurate than the system-specific ones, the drop in precision and recall is modest, typically under 10%. This result suggests that the relationship between architectural decay and implementation issues is not confined to a particular domain, language, or development team, but reflects a broader, domain-independent phenomenon. Consequently, developers of a brand-new project can use pre-trained models to obtain early risk assessments even when no historical data exist for their own codebase.
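The cross-project setup amounts to a leave-one-system-out split: every row from one system is held out for testing and the model is trained on the pooled rows of all other systems. A minimal sketch, assuming each row is a dictionary with a hypothetical `system` key (the system names and feature values below are placeholders):

```python
from typing import Dict, Iterator, List, Tuple

Row = Dict[str, object]


def leave_one_system_out(rows: List[Row]) -> Iterator[Tuple[str, List[Row], List[Row]]]:
    """Yield (held_out_system, train_rows, test_rows) for every system."""
    systems = sorted({str(row["system"]) for row in rows})
    for held_out in systems:
        train = [row for row in rows if row["system"] != held_out]
        test = [row for row in rows if row["system"] == held_out]
        yield held_out, train, test


# Placeholder data: one row per version, with smell counts and a binary target.
rows = [
    {"system": "system_a", "smell_counts": [3, 0, 1], "issue_prone": 1},
    {"system": "system_a", "smell_counts": [1, 0, 0], "issue_prone": 0},
    {"system": "system_b", "smell_counts": [5, 2, 2], "issue_prone": 1},
    {"system": "system_c", "smell_counts": [0, 1, 0], "issue_prone": 0},
]
splits = list(leave_one_system_out(rows))
```

Splitting by system rather than by row keeps versions of the held-out system out of the training data, which is what makes the evaluation a fair stand-in for a project with no history of its own.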

Key contributions of the work include:

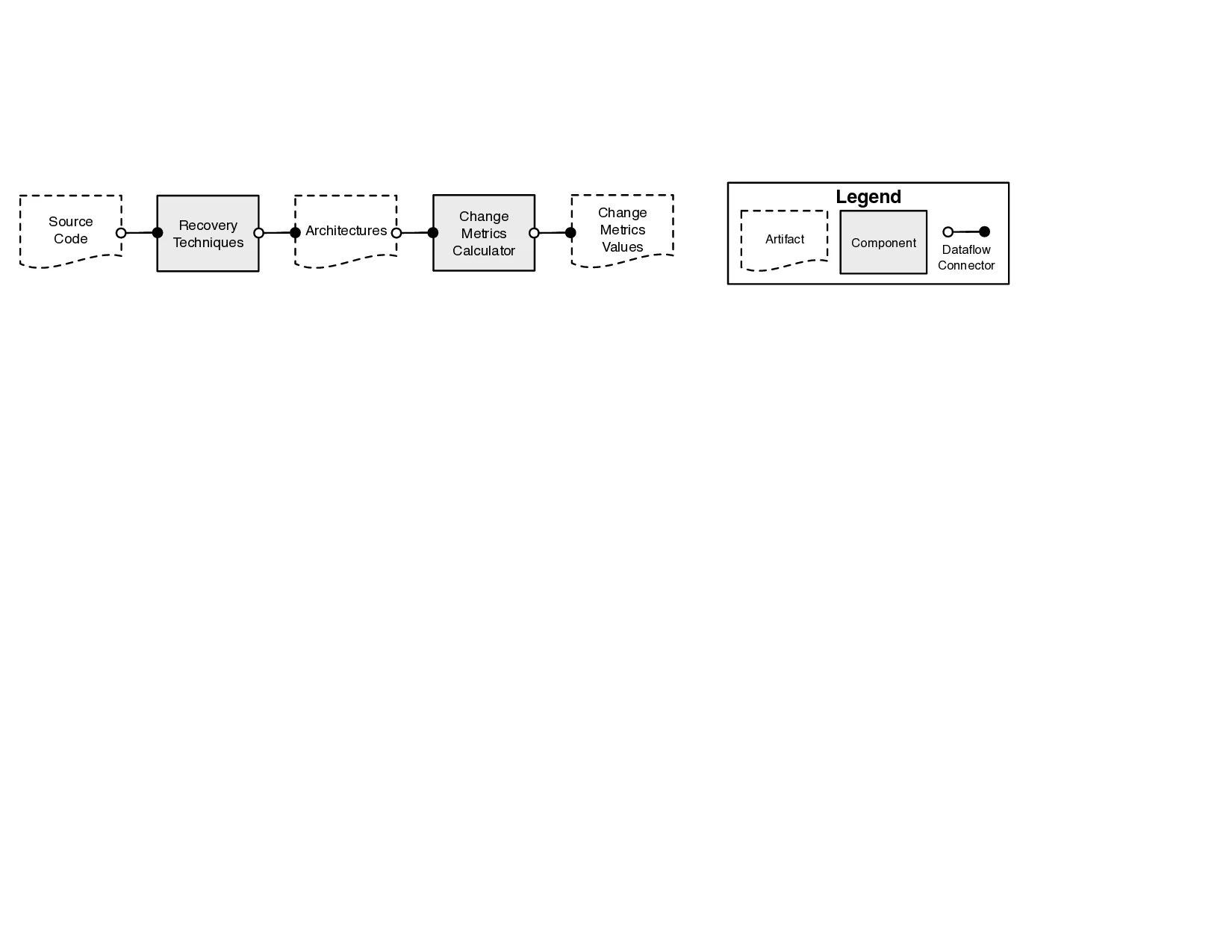

- An automated data‑pipeline that integrates architecture recovery, smell detection, issue extraction, and machine‑learning preprocessing, enabling large‑scale empirical studies.

- Empirical evidence that architectural‑level metrics alone can predict future maintenance problems with high accuracy, extending prior work that focused on code‑level metrics (e.g., churn, complexity, code smells).

- Demonstration of model generalizability across heterogeneous systems, opening the possibility of reusable prediction services for early‑stage software projects.

The study acknowledges several limitations. The accuracy of smell detection depends on the chosen recovery technique; errors in recovered architectures propagate to the prediction models. The “infection” relation between a smell instance and an issue is heuristic and does not guarantee causality—multiple issues may share a smell, and fixing an issue may not eliminate the underlying smell. Moreover, the evaluation is limited to Jira‑based issue trackers; applicability to other tracking systems remains to be validated.

Future research directions proposed by the authors include: enhancing architecture recovery with hybrid static‑dynamic analyses to improve smell detection fidelity; constructing explicit cause‑effect graphs linking smells to issues for more precise refactoring guidance; and expanding the empirical evaluation to additional languages, platforms, and industrial settings. By advancing these avenues, the community can move toward systematic, architecture‑aware maintenance tooling that reduces technical debt and improves software quality over the long term.
