Scaling Creative Inspiration with Fine-Grained Functional Aspects of Ideas

Large repositories of products, patents and scientific papers offer an opportunity for building systems that scour millions of ideas and help users discover inspirations. However, idea descriptions are typically in the form of unstructured text, lacking key structure that is required for supporting creative innovation interactions. Prior work has explored idea representations that were either limited in expressivity, required significant manual effort from users, or dependent on curated knowledge bases with poor coverage. We explore a novel representation that automatically breaks up products into fine-grained functional aspects capturing the purposes and mechanisms of ideas, and use it to support important creative innovation interactions: functional search for ideas, and exploration of the design space around a focal problem by viewing related problem perspectives pooled from across many products. In user studies, our approach boosts the quality of creative search and inspirations, substantially outperforming strong baselines by 50-60%.

💡 Research Summary

The paper tackles a fundamental bottleneck in leveraging massive repositories of ideas—products, patents, and scientific papers—for creative innovation: the lack of structured representations in the raw textual descriptions. Existing approaches either rely on coarse document‑level embeddings, hand‑crafted functional ontologies, or require extensive manual annotation, limiting both expressiveness and scalability. To overcome these constraints, the authors propose a novel, fine‑grained functional representation that automatically extracts two key aspects from each idea description: purpose (the goal or intended effect) and mechanism (the means by which the goal is achieved).

Data collection and annotation

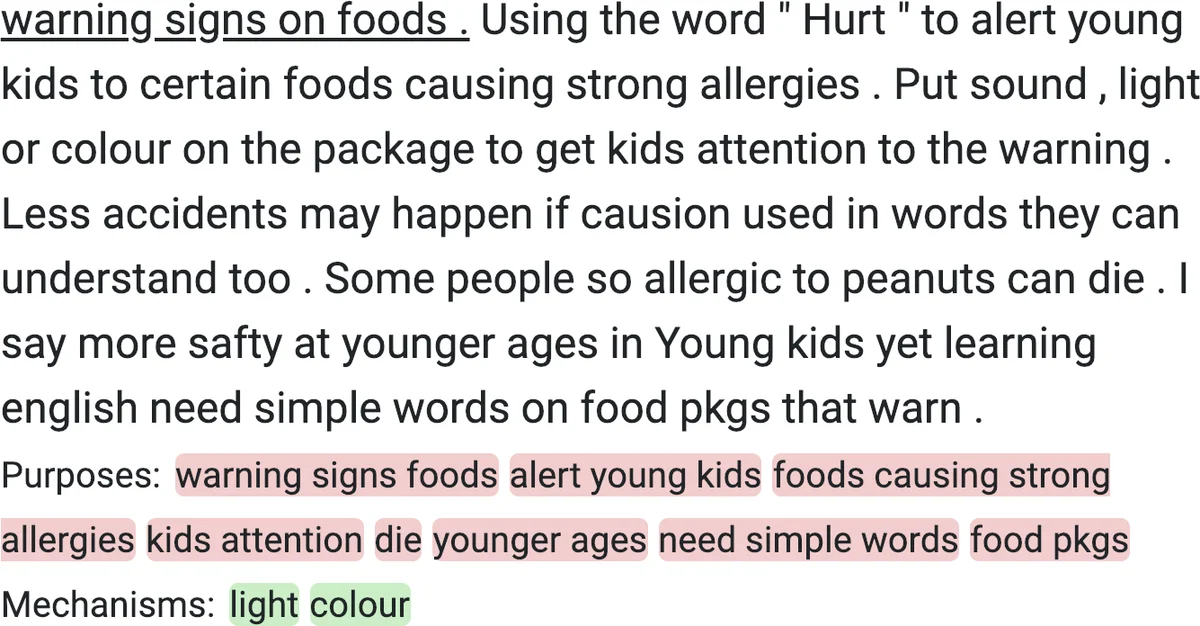

The authors start with 8,500 user‑generated product descriptions from the online invention platform Quirky.com, a dataset characterized by informal language, spelling errors, and varied domain coverage (kitchen, health, clean energy, etc.). They design a crowdsourcing task on Amazon Mechanical Turk to label short text spans as either purpose, mechanism, or other. To ensure high‑quality, fine‑grained annotations, they impose a maximum span length, prohibit stop‑word labeling, and filter workers by a >95 % approval rate and reasonable completion times. In total, 400 workers contributed, earning $0.10 per task (approximately a $7 hourly rate). The resulting dataset provides a solid ground truth for training a span‑tagging model.

Model architecture

A BERT‑based sequence tagging model is trained on the annotated data to predict token‑level BIO tags for purpose, mechanism, and non‑functional text. After training, the model can be applied to any new product description to automatically locate purpose and mechanism spans. Each identified span is then embedded using the contextual representation from the same BERT model (e.g., averaging the final‑layer token vectors). Consequently, each idea is represented as a set of purpose‑span embeddings and a set of mechanism‑span embeddings, preserving the fine‑grained functional semantics that are lost in a single document vector.

Clustering and functional network construction

Purpose and mechanism embeddings are separately clustered (using K‑means or hierarchical clustering) to form semantically coherent groups. Human‑readable labels (e.g., “wash clothes”, “dry ice cleaning”) are assigned for illustration. Co‑occurrence statistics across the entire corpus are used to compute similarity edges between clusters, yielding a functional concept graph. This graph mirrors traditional engineering functional ontologies but is automatically derived at scale, enabling navigation across abstract and concrete functional relationships.

Applications

- Functional aspect‑based search – Users can formulate queries that combine desired purposes with constraints on mechanisms (e.g., “wash clothes without water”). The system retrieves ideas whose purpose spans match the query while filtering out undesired mechanisms, returning alternatives such as dry‑ice cleaning or chemical coatings.

- Design‑space exploration – By selecting a purpose node in the functional graph, users can explore neighboring purpose clusters, effectively reframing the problem (e.g., from “wash clothes” to “remove odor” or “eliminate dirt”). This supports the cognitive process of problem re‑definition, a known catalyst for divergent thinking.

User studies and evaluation

Two controlled user experiments assess the efficacy of the proposed representation.

Study 1 (Alternative product‑use search): Participants performed a search task using the functional search interface. The system achieved a Mean Average Precision (MAP) of 87 %, outperforming strong baselines (standard document embeddings, keyword search) by 50‑60 %.

Study 2 (Design‑space exploration): Participants used the functional graph to discover inspirations for a focal problem. 62 % of the presented inspirations were rated as both useful and novel, again a 50‑60 % improvement over baseline methods. These results demonstrate that fine‑grained functional representations materially enhance creative search and ideation support.

Technical strengths

- Dual‑aspect modeling (purpose + mechanism) enables nuanced matching that respects both what an idea does and how it does it.

- Span‑level embeddings retain interpretability; users can see the exact textual fragment driving a match.

- Scalable automatic pipeline (annotation → model → clustering → graph) works on tens of thousands of items without manual ontology construction.

Limitations

- The approach depends on a sizable, high‑quality annotated corpus; extending to new domains may require additional labeling effort.

- The model is trained on informal product descriptions; performance on formal patent or scientific abstracts is untested.

- Purpose/mechanism labeling is inherently subjective; cluster boundaries can be fuzzy, potentially affecting downstream retrieval quality.

- Evaluation is limited to laboratory user studies; real‑world impact in corporate R&D or open‑innovation platforms remains to be demonstrated.

Future directions

The authors outline several promising extensions:

- Domain transfer – Fine‑tune the tagger on patents, scholarly articles, or multimodal data (images, schematics) to broaden applicability.

- Active learning & interactive annotation – Reduce labeling cost by selecting the most informative examples for human review.

- Multimodal functional extraction – Combine textual spans with visual cues (e.g., product diagrams) to enrich mechanism representations.

- Integration with recommendation systems – Embed the functional graph into enterprise innovation pipelines, measuring long‑term effects on idea generation and product development cycles.

Conclusion

By automatically decomposing idea texts into purpose and mechanism spans, embedding them, and organizing them into a functional concept graph, the paper delivers a scalable, expressive representation that markedly improves functional search and design‑space exploration. The work bridges a gap between unstructured textual repositories and the structured, analogical reasoning required for creative problem solving, offering a concrete step toward AI‑augmented innovation at industrial scale.

Comments & Academic Discussion

Loading comments...

Leave a Comment