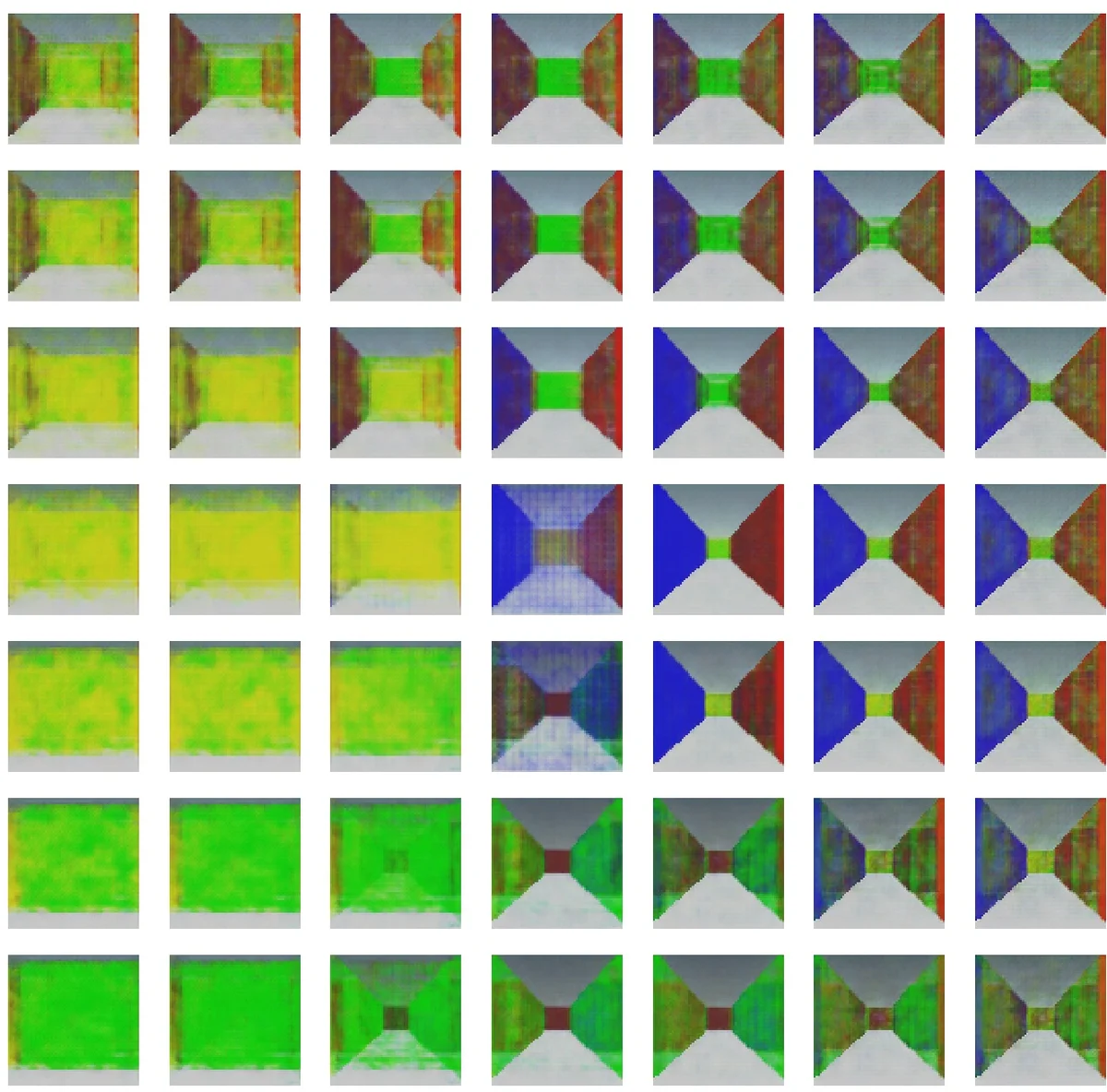

Organization of a Latent Space structure in VAE/GAN trained by navigation data

We present a novel artificial cognitive mapping system using generative deep neural networks, called variational autoencoder/generative adversarial network (VAE/GAN), which can map input images to latent vectors and generate temporal sequences internally. The results show that the distance of the predicted image is reflected in the distance of the corresponding latent vector after training. This indicates that the latent space is self-organized to reflect the proximity structure of the dataset and may provide a mechanism through which many aspects of cognition are spatially represented. The present study allows the network to internally generate temporal sequences that are analogous to the hippocampal replay/pre-play ability, where VAE produces only near-accurate replays of past experiences, but by introducing GANs, the generated sequences are coupled with instability and novelty.

💡 Research Summary

The paper proposes an artificial cognitive‑mapping system that relies solely on visual navigation data and combines a Variational Autoencoder (VAE) with a Wasserstein Generative Adversarial Network (wGAN‑gp) into a VAE/GAN architecture. The system consists of three convolutional sub‑networks: an encoder (Enc) that compresses each input frame x(t) into a low‑dimensional latent vector z(t), a generator (Gen) that reconstructs an image from a latent code, and a discriminator (Dis) that forces the generated image to be indistinguishable from real data. Unlike conventional VAEs that reconstruct the current frame, the authors train the network to predict the frame τ steps ahead, i.e., Gen(Enc(x(t))) = x(t + τ).

Training data are 64 × 64 RGB frames captured from an agent moving at constant speed through a simple Unity‑based figure‑8 maze. The environment contains a single junction where the agent randomly chooses left or right, yielding 480 frames that encode both spatial layout and visual continuity. Experiments vary the latent dimensionality (d_z = 5, 10, 20) and prediction horizon (τ = 0, 5, 30), each condition being trained three times with different random seeds. Adam optimizer (β1 = 0, β2 = 0.9, lr = 2 × 10⁻⁴) and a batch size of 64 are used; the discriminator is updated five times per generator step following the wGAN‑gp protocol.

The first set of analyses examines how the latent space reflects the geometry of the visual data. Pairwise distance matrices are computed for (i) the input frames, (ii) the target frames τ steps ahead, and (iii) the corresponding latent vectors. Correlation coefficients between the distance matrices reveal that latent vectors align more closely with the distance structure of the target frames than with the input frames, especially for τ = 30 (p < 0.001). This indicates that the model’s latent space encodes future visual relationships rather than merely reconstructing the present. Principal Component Analysis (PCA) shows that VAE/GAN achieves higher cumulative variance explained than a plain VAE, meaning that the latent representation can be compressed into fewer dimensions without losing much information. When projected onto the first two principal components, the latent trajectories form smooth curves that trace the actual path through the maze, and left‑right branches occupy distinct regions. Quantitative metrics—smoothness (S_PCA) and trajectory dissimilarity (d_LR)—confirm that VAE/GAN yields smoother, more separable trajectories than VAE alone.

The second major contribution is a closed‑loop generation experiment that mimics hippocampal replay/pre‑play. After training, the generator’s output for a given frame is fed back into the encoder to predict the next frame, and this process is iterated. With VAE only, the closed‑loop produces near‑perfect replays of the experienced trajectory, but the images gradually blur, reflecting deterministic reconstruction. Introducing the GAN component injects controlled instability: generated images retain realism while exhibiting novel variations that were never seen during training. Consequently, the closed‑loop produces sequences that diverge from exact replay, resembling the “pre‑play” phenomenon where hippocampal place cells fire for future, unvisited locations. The authors argue that this instability, mediated by the adversarial loss, provides a computational mechanism for generating imaginative, non‑experienced trajectories.

Overall, the study demonstrates that (1) a purely visual predictive model can self‑organize its latent space to mirror the relational structure of future sensory inputs, and (2) coupling VAE with GAN enables the system to generate both accurate replays and creative, novel sequences. These findings bridge deep generative modeling with neuroscientific concepts of cognitive maps, hippocampal replay, and episodic memory reconstruction. By showing that spatial metrics are not required for map formation—visual continuity alone suffices—the work challenges path‑integration‑centric theories and suggests new avenues for building AI agents that learn and imagine environments in a brain‑inspired manner. Future work could extend the approach to multimodal inputs, larger and more complex environments, and integrate mechanisms for long‑term memory consolidation.

Comments & Academic Discussion

Loading comments...

Leave a Comment