Generalized Entropy Concentration for Counts

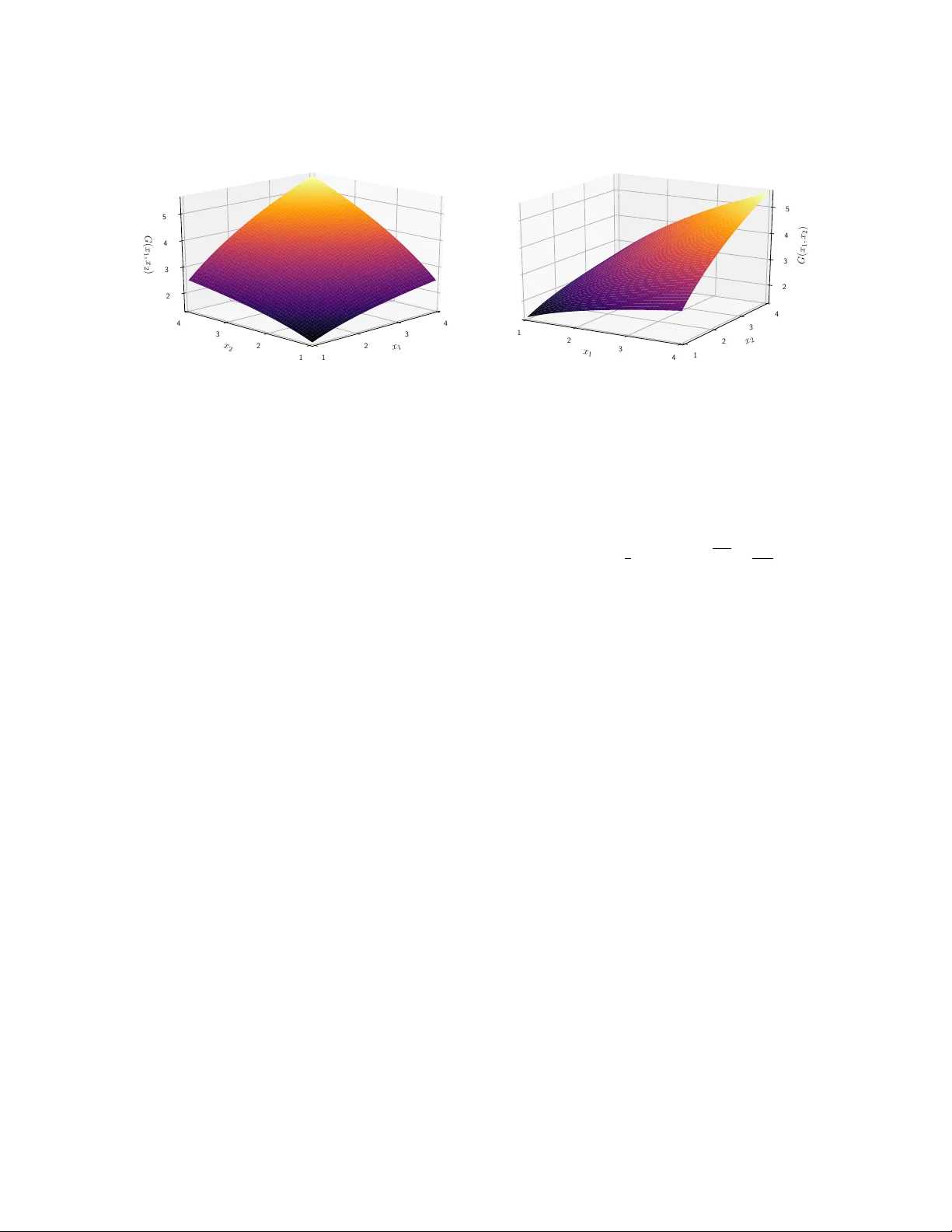

The phenomenon of entropy concentration provides strong support for the maximum entropy method, MaxEnt, for inferring a probability vector from information in the form of constraints. Here we extend this phenomenon, in a discrete setting, to non-nega…

Author: Kostas N. Oikonomou