Programmable Spectrometry -- Per-pixel Classification of Materials using Learned Spectral Filters

Many materials have distinct spectral profiles. This facilitates estimation of the material composition of a scene at each pixel by first acquiring its hyperspectral image, and subsequently filtering it using a bank of spectral profiles. This process is inherently wasteful since only a set of linear projections of the acquired measurements contribute to the classification task. We propose a novel programmable camera that is capable of producing images of a scene with an arbitrary spectral filter. We use this camera to optically implement the spectral filtering of the scene’s hyperspectral image with the bank of spectral profiles needed to perform per-pixel material classification. This provides gains both in terms of acquisition speed — since only the relevant measurements are acquired — and in signal-to-noise ratio — since we invariably avoid narrowband filters that are light inefficient. Given training data, we use a range of classical and modern techniques including SVMs and neural networks to identify the bank of spectral profiles that facilitate material classification. We verify the method in simulations on standard datasets as well as real data using a lab prototype of the camera.

💡 Research Summary

The paper addresses the fundamental inefficiencies of conventional hyperspectral imaging (HSI) for material classification, namely the long acquisition times and low signal‑to‑noise ratio (SNR) caused by splitting limited light across hundreds of narrow spectral bands. The authors propose a programmable spectral camera that directly implements the linear projections required for classification, thereby eliminating the need to capture the full hyperspectral cube.

The optical architecture builds on a 4‑f system with a coded aperture placed at the “rainbow plane” where the spectrum is spatially dispersed by a diffraction grating. A binary coded aperture introduces an invertible blur in both spatial and spectral domains, avoiding the classic trade‑off where a slit gives high spectral but low spatial resolution and an open aperture gives the opposite. An LCoS (liquid‑crystal‑on‑silicon) spatial light modulator (SLM) is positioned at the same plane to load arbitrary spectral weighting functions sₖ(λ). When the scene’s HSI passes through the coded aperture and the SLM, the sensor records an image that is exactly the integral ∫H(x,y,λ)·sₖ(λ)·c(λ)dλ, i.e., the desired linear projection of the spectrum onto filter sₖ.
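As a rough numerical illustration of the projection described above, the integral can be approximated by a sum over discrete wavelength samples. The sketch below is only a simulation: the function name `project_spectrum`, the random cube, and the array sizes are hypothetical, and c(λ) is treated simply as a fixed per-wavelength weighting that defaults to flat. It is not the authors' code.

```python
import numpy as np

def project_spectrum(cube, spectral_filter, c=None, d_lambda=1.0):
    """Approximate the integral of H(x, y, lambda) * s_k(lambda) * c(lambda) d lambda.

    cube:            array of shape (height, width, bands), the hyperspectral image H
    spectral_filter: array of shape (bands,), the programmed weighting s_k
    c:               array of shape (bands,), fixed per-wavelength weighting
                     (assumed flat when omitted)
    d_lambda:        spacing between wavelength samples (assumed uniform)
    """
    cube = np.asarray(cube, dtype=float)
    s = np.asarray(spectral_filter, dtype=float)
    if c is None:
        c = np.ones_like(s)
    # Riemann sum over the spectral axis: one grayscale image per filter.
    return np.tensordot(cube, s * c, axes=([2], [0])) * d_lambda

rng = np.random.default_rng(0)
H = rng.random((4, 5, 8))      # hypothetical 4x5 scene with 8 spectral bands
s_k = np.zeros(8)
s_k[2:5] = 1.0                 # hypothetical filter passing bands 2 to 4
image_k = project_spectrum(H, s_k)
```

Each call yields one image per filter, so capturing K projections corresponds to K such calls with different `spectral_filter` arguments rather than one pass over all bands.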

Learning the optimal filters is performed offline using labeled training data. Two classifier families are explored:

-

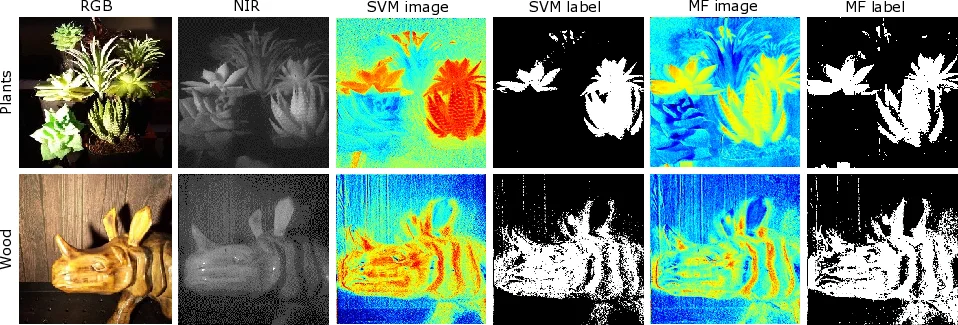

Support Vector Machines (SVM) – For each class a separating hyperplane wᵗx + c is learned; the weight vector w becomes a spectral filter that can be programmed into the camera. Multi‑class problems use a one‑vs‑all scheme, requiring K filters for K classes.

-

Deep Neural Networks (DNN) – A five‑layer fully‑connected network is trained with spectral vectors as input and one‑hot class labels as output. The first layer’s weight matrix A₁ represents a set of linear filters; these are the only operations performed optically. Subsequent non‑linear layers are computed digitally.
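To make the split between optical and digital computation concrete, here is a minimal NumPy sketch of both schemes. All weights are random placeholders rather than trained values, and the hidden-layer widths, the ReLU nonlinearity, and the function names are assumptions made for illustration. The summary itself specifies only a one‑vs‑all SVM and a five‑layer fully connected network whose first layer is implemented optically.

```python
import numpy as np

rng = np.random.default_rng(0)
bands, num_classes = 220, 5

# One-vs-all linear SVM: K filters w_k (rows of W) and K offsets c_k.
W = rng.standard_normal((num_classes, bands))   # placeholder for learned hyperplanes
c = rng.standard_normal(num_classes)            # placeholder for learned offsets

def svm_classify(measurements, offsets):
    """measurements[k] = w_k . x, as the camera would deliver for filter k."""
    return int(np.argmax(measurements + offsets))

# DNN: the first layer (A1) is optical; the remaining four layers are digital.
num_filters = 16
A1 = rng.standard_normal((num_filters, bands))  # placeholder first-layer weights
widths = [num_filters, 32, 32, 32, num_classes] # assumed layer widths
digital_layers = [
    (rng.standard_normal((n_out, n_in)), rng.standard_normal(n_out))
    for n_in, n_out in zip(widths[:-1], widths[1:])
]

def dnn_classify(optical_measurements, b1=0.0):
    """Run the digital part of the network on the optically computed A1 @ x."""
    h = np.maximum(optical_measurements + b1, 0.0)  # assumed ReLU after layer 1
    for i, (weight, bias) in enumerate(digital_layers):
        h = weight @ h + bias
        if i < len(digital_layers) - 1:
            h = np.maximum(h, 0.0)                  # no ReLU on the output layer
    return int(np.argmax(h))

x = rng.random(bands)                # hypothetical spectrum at one pixel
svm_label = svm_classify(W @ x, c)   # W @ x stands in for K captured images
dnn_label = dnn_classify(A1 @ x)     # A1 @ x stands in for 16 captured images
```

In both cases the only operation that touches the full 220‑band spectrum is a matrix-vector product, which is the part the camera would perform; everything after it works on K or 16 numbers per pixel.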

The authors evaluate the approach on the NASA Indian Pines dataset (220 spectral bands, five material classes) and on a laboratory prototype. Simulations show that with only 16 captured images (i.e., 16 linear projections) the DNN achieves about 90 % classification accuracy, comparable to state‑of‑the‑art methods that use the full 220‑band cube. The prototype demonstrates real‑time operation at 4 fps, successfully distinguishing real plants from plastic replicas using only 3–5 programmed filters per frame. Because each filter is broadband, the captured images contain significantly more photons than narrowband HSI, resulting in higher SNR and shorter exposure times.

Key advantages highlighted include:

- Speed: Acquisition time drops from minutes (full HSI) to hundreds of milliseconds per frame.

- Efficiency: Light is not divided among many narrow bands, improving SNR.

- Hardware‑software co‑design: The first linear layer is off‑loaded to optics, reducing the number of required measurements dramatically.
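The efficiency argument can be illustrated with back-of-the-envelope arithmetic. The sketch below assumes a shot-noise-limited sensor (SNR equal to the square root of the collected photon count), a flat scene spectrum, a hypothetical photon budget, and a hypothetical broadband filter that passes half the spectrum. Only the band count (220) and the number of projections (16) come from the summary; the other numbers are illustrative.

```python
import math

def shot_noise_snr(photons):
    """SNR of a Poisson-distributed photon count: mean / std = sqrt(mean)."""
    return math.sqrt(photons)

total_photons = 220_000    # hypothetical photons per pixel over the full spectrum
num_bands = 220            # band count of the full hyperspectral cube
passband_fraction = 0.5    # hypothetical broadband filter passing half the spectrum

# A narrowband measurement sees 1/num_bands of the light (flat-spectrum assumption).
narrowband_snr = shot_noise_snr(total_photons / num_bands)
# A broadband programmed filter sees passband_fraction of the light.
broadband_snr = shot_noise_snr(total_photons * passband_fraction)
snr_gain = broadband_snr / narrowband_snr       # equals sqrt(0.5 * 220)

# Capturing 16 projections instead of 220 bands.
measurement_reduction = num_bands / 16
```

Under these assumptions the per-image SNR gain is sqrt(110), a little over 10, and the measurement count falls by a factor of 13.75. Real gains depend on the scene spectrum, the learned filter shapes, and sensor read noise.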

Limitations are also discussed. The current model assumes pure pixels (each pixel belongs to a single material), so mixed‑pixel scenarios are not directly addressed. The resolution and refresh rate of the coded aperture and LCoS impose a ceiling on spatial resolution and frame rate. Moreover, only linear spectral projections are realizable optically; any non‑linear feature extraction must be performed digitally after the optical stage.

Future work suggested includes extending the method to handle mixed pixels via non‑linear optical processing, employing faster high‑resolution spatial light modulators (e.g., MEMS micromirror arrays), and integrating spatial information to create joint spatial‑spectral filters that could further boost classification robustness.

In summary, the paper introduces a novel programmable spectrometer that optically computes the discriminative spectral features needed for per‑pixel material classification. By learning a compact set of spectral filters and implementing them directly in hardware, the system achieves near‑real‑time classification (4 fps on the lab prototype) with far fewer measurements and higher photon efficiency than traditional hyperspectral cameras, opening a practical pathway for real‑time spectroscopic imaging in fields such as remote sensing, precision agriculture, and biomedical diagnostics.
