yNet: a multi-input convolutional network for ultra-fast simulation of field evolvement

💡 Research Summary

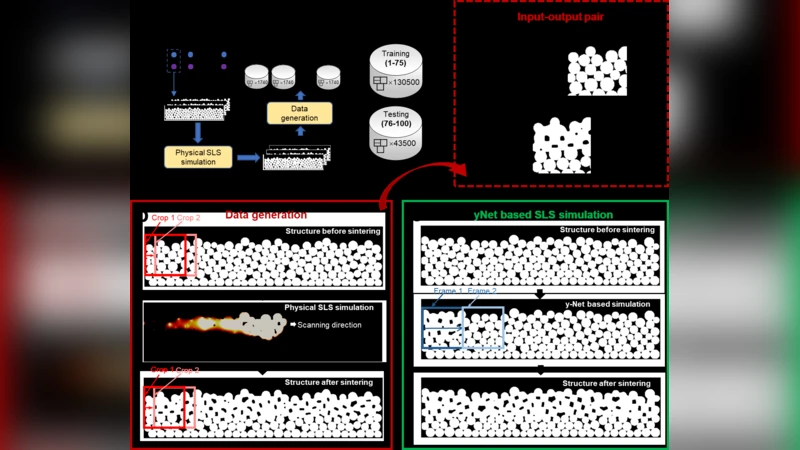

The paper introduces yNet, a novel multi‑input convolutional neural network designed to simulate the temporal evolution of physical fields at speeds far exceeding conventional numerical solvers. Traditional finite‑difference, finite‑element, and finite‑volume methods provide high fidelity but become computationally prohibitive when fine spatial resolution or small time steps are required. yNet addresses this bottleneck by ingesting three distinct inputs simultaneously: (1) the current field distribution, (2) a vector of physical parameters (e.g., viscosity, permittivity, reaction coefficients), and (3) boundary‑condition specifications. Each input stream is processed by a dedicated encoder built from multi‑scale convolutional blocks, residual connections, and layer normalization, producing high‑dimensional feature maps that capture both local and global information.
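The three input streams described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the channel count, the two-channel boundary encoding (mask plus prescribed values), the dense-then-broadcast treatment of the parameter vector, and all function names are assumptions made for illustration, and the multi‑scale blocks are reduced to a single 3x3 convolution followed by one residual block.

```python
import numpy as np

rng = np.random.default_rng(0)

def conv3x3(x, w):
    """Same-padded 3x3 convolution. x: (C_in, H, W), w: (C_out, C_in, 3, 3)."""
    _, h, wd = x.shape
    xp = np.pad(x, ((0, 0), (1, 1), (1, 1)))       # zero-pad the two spatial axes
    out = np.zeros((w.shape[0], h, wd))
    for dy in range(3):
        for dx in range(3):
            patch = xp[:, dy:dy + h, dx:dx + wd]   # shifted view, (C_in, H, W)
            out += np.einsum("oc,chw->ohw", w[:, :, dy, dx], patch)
    return out

def layer_norm(x, eps=1e-5):
    """Normalize one sample over all of its channels and grid points."""
    return (x - x.mean()) / np.sqrt(x.var() + eps)

def res_block(x, w):
    """Residual block: x + ReLU(conv(LayerNorm(x)))."""
    return x + np.maximum(conv3x3(layer_norm(x), w), 0.0)

def encode_grid(x, w_in, w_res):
    """Encoder for grid-shaped inputs (field or boundary channels)."""
    return res_block(conv3x3(x, w_in), w_res)

def encode_params(p, w_dense, h, wd):
    """Assumed parameter encoder: dense layer, then broadcast over the grid."""
    feat = np.tanh(w_dense @ p)                    # (C,)
    return np.broadcast_to(feat[:, None, None], (feat.size, h, wd)).copy()

def init(*shape):
    """Small random weights; a trained model would load learned values."""
    return 0.1 * rng.standard_normal(shape)

C, H, W = 8, 16, 16                                # hypothetical sizes
field = rng.standard_normal((1, H, W))             # current scalar field
params = np.array([0.01, 1.0])                     # e.g. viscosity and one more coefficient
boundary = np.zeros((2, H, W))                     # channel 0: mask, channel 1: values
boundary[0, 0, :] = 1.0
boundary[0, -1, :] = 1.0
boundary[0, :, 0] = 1.0
boundary[0, :, -1] = 1.0
boundary[1, 0, :] = 1.0                            # e.g. a moving lid on the top row

f_field = encode_grid(field, init(C, 1, 3, 3), init(C, C, 3, 3))
f_bc = encode_grid(boundary, init(C, 2, 3, 3), init(C, C, 3, 3))
f_par = encode_params(params, init(C, 2), H, W)
print(f_field.shape, f_bc.shape, f_par.shape)      # (8, 16, 16) (8, 16, 16) (8, 16, 16)
```

Giving every stream the same `(C, H, W)` output shape is a design choice of this sketch; it makes any later fusion step a simple concatenation or sum along the channel axis.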

The encoded features are merged in a Y‑shaped architecture: after the separate encoders, the network branches converge into a shared decoder that reconstructs the field at the next time step. Skip connections from encoder to decoder preserve fine‑grained spatial details, while weight‑sharing across similar blocks reduces the total parameter count, facilitating transfer learning across different physical systems. The loss function combines a standard L2 reconstruction term with physics‑based regularizers such as mass conservation, energy dissipation, and divergence‑free constraints, encouraging the network to respect underlying governing equations. Training data are generated from high‑resolution simulations of three benchmark problems: (i) 2‑D incompressible Navier‑Stokes flow (the classic “lid‑driven cavity”), (ii) Maxwell’s equations for electromagnetic wave propagation, and (iii) the Cahn‑Hilliard phase‑field model for binary alloy separation. For each benchmark, a wide range of parameters and boundary conditions is sampled, and data augmentation ensures robust generalization.
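A composite loss of the kind described above might be sketched as follows. The weights, the finite‑difference form of the divergence, and the function names are assumptions; the summary does not give the exact terms used in the paper, and the energy‑dissipation regularizer is omitted here.

```python
import numpy as np

def l2_term(pred, target):
    """Mean squared reconstruction error."""
    return float(np.mean((pred - target) ** 2))

def divergence(vel, dx, dy):
    """Finite-difference divergence of vel = (u, v), shape (2, H, W).
    Array axis 0 of each component is y, axis 1 is x."""
    du_dx = np.gradient(vel[0], dx, axis=1)
    dv_dy = np.gradient(vel[1], dy, axis=0)
    return du_dx + dv_dy

def divergence_penalty(vel, dx, dy):
    """Penalizes departure from the divergence-free condition (incompressible flow)."""
    return float(np.mean(divergence(vel, dx, dy) ** 2))

def mass_penalty(c_pred, c_prev):
    """Penalizes a change in the mean of a conserved scalar between two time steps."""
    return float((c_pred.mean() - c_prev.mean()) ** 2)

def composite_loss(data_term, penalties):
    """data_term plus a weighted sum of (weight, penalty_value) pairs."""
    return data_term + sum(w * p for w, p in penalties)

# Two test fields on a uniform grid over [0, 2*pi] x [0, 2*pi].
n = 32
xs = np.linspace(0.0, 2.0 * np.pi, n)
h = xs[1] - xs[0]
Y, X = np.meshgrid(xs, xs, indexing="ij")
# Derived from the stream function sin(x) * sin(y), so analytically divergence-free.
solenoidal = np.stack([np.sin(X) * np.cos(Y), -np.cos(X) * np.sin(Y)])
# u = x, v = y has divergence 2 everywhere.
compressible = np.stack([X, Y])

loss_good = composite_loss(l2_term(solenoidal, solenoidal),
                           [(0.1, divergence_penalty(solenoidal, h, h))])
loss_bad = composite_loss(l2_term(compressible, solenoidal),
                          [(0.1, divergence_penalty(compressible, h, h))])
print(loss_good < loss_bad)  # True
```

In an actual training loop these terms would be written in an automatic‑differentiation framework so that gradients flow through the penalties; NumPy is used here only to make the arithmetic explicit.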

Experimental results demonstrate that yNet achieves speed‑ups of three to four orders of magnitude relative to state‑of‑the‑art CFD/FEM solvers while maintaining an average relative L2 error below 2 %. Importantly, the model retains accuracy when evaluated on out‑of‑distribution parameter sets and boundary configurations, indicating strong extrapolation capability, which is a critical requirement for design‑space exploration and real‑time control. Transfer‑learning experiments further reveal that re‑using a pretrained yNet on a new physical scenario (e.g., a different Reynolds number regime) requires only 10 % of the original training data to reach comparable performance, underscoring the network’s ability to learn physics‑agnostic feature representations.
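For reference, the relative L2 error is commonly defined as the norm of the prediction error divided by the norm of the reference solution, averaged over test samples. The sketch below assumes that definition; the summary does not state the exact normalization used in the paper, and the function names are illustrative.

```python
import numpy as np

def relative_l2_error(pred, ref, eps=1e-12):
    """||pred - ref||_2 / ||ref||_2 for one sample; eps guards an all-zero reference."""
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    num = np.linalg.norm((pred - ref).ravel())
    den = np.linalg.norm(ref.ravel())
    return float(num / max(den, eps))

def mean_relative_l2_error(preds, refs):
    """Average of the per-sample relative L2 errors."""
    return float(np.mean([relative_l2_error(p, r) for p, r in zip(preds, refs)]))

# A prediction that is uniformly 1 % too large has a relative error of about 0.01.
ref = np.ones((4, 4))
pred = 1.01 * ref
err = relative_l2_error(pred, ref)
avg = mean_relative_l2_error([pred, ref], [ref, ref])  # second sample is exact
```

Under this definition, the reported figure of below 2 % corresponds to `mean_relative_l2_error(...) < 0.02` on the test set.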

The authors acknowledge limitations: memory consumption grows sharply for full 3‑D high‑resolution fields, and highly nonlinear phenomena such as shock waves or phase‑transition fronts may demand additional physics‑informed loss terms or adaptive mesh‑aware architectures. Future work is outlined to address these challenges through memory‑efficient tokenization, hybrid PINN‑style regularization, and custom GPU/TPU kernels optimized for the yNet topology.
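The memory limitation can be made concrete with a back‑of‑the‑envelope calculation. The numbers below are assumptions chosen for illustration (64 channels, 32‑bit floats, 512 grid points per axis), not figures from the paper, and they count a single feature map only.

```python
def feature_map_bytes(resolution, ndim, channels=64, bytes_per_value=4):
    """Bytes needed to store one dense feature map on a regular grid."""
    return channels * resolution ** ndim * bytes_per_value

for ndim in (2, 3):
    gib = feature_map_bytes(512, ndim) / 2**30
    print(f"{ndim}-D, 512 points per axis: {gib:.4f} GiB")
# 2-D, 512 points per axis: 0.0625 GiB
# 3-D, 512 points per axis: 32.0000 GiB
```

Moving from 2‑D to 3‑D at the same resolution multiplies the storage by the number of points per axis (here 512), before accounting for the additional activations kept for skip connections and backpropagation.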

In summary, yNet offers a powerful, general‑purpose framework for ultra‑fast field evolution simulation. By coupling multi‑input processing with physics‑aware training, it bridges the gap between data‑driven speed and physics‑based fidelity, opening new possibilities for rapid prototyping, inverse design, and real‑time decision making across fluid dynamics, electromagnetics, materials science, and beyond.