DA-RefineNet: A Dual Input Whole Slide Image Segmentation Algorithm Based on Attention

Automatic medical image segmentation has wide applications in disease diagnosis. However, it is much more challenging than natural optical image segmentation because of the high resolution of medical images and the correspondingly large computation cost. The sliding window is a commonly used technique for whole slide image (WSI) segmentation, but the main drawback of sliding-window methods is that they lack global contextual information for supervision. In this paper, we propose a dual-input attention network (denoted as DA-RefineNet) for WSI segmentation, in which both local fine-grained information and global coarse information can be used efficiently. Extensive comparative experiments are conducted to evaluate the effectiveness of the proposed method. The results show that it achieves better performance on WSI segmentation than methods relying on a single input.

💡 Research Summary

The paper addresses a fundamental limitation of sliding‑window based whole slide image (WSI) segmentation: the lack of global contextual information that hampers accurate pixel‑wise predictions, especially near patch borders. To overcome this, the authors propose DA‑RefineNet, a dual‑input attention network that simultaneously processes a high‑resolution local patch (slice) and a down‑sampled version of the entire slide (full image). The full image is resized to the same spatial dimensions as the slice (typically a 10× down‑sampling) to keep computational overhead low while still providing coarse‑grained semantic cues that enlarge the effective receptive field.
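The dual-input preparation described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `make_dual_inputs`, the `(H, W, C)` array layout, and the nearest-neighbour resize are hypothetical choices, not details taken from the paper.

```python
import numpy as np

def make_dual_inputs(slide, top, left, patch_size):
    """Return (local slice, coarse full image), both patch_size x patch_size.

    slide: array of shape (H, W, C). All names here are illustrative.
    """
    h, w = slide.shape[:2]
    if top < 0 or left < 0 or top + patch_size > h or left + patch_size > w:
        raise ValueError("patch falls outside the slide")

    # Local input: a full-resolution crop of the slide.
    patch = slide[top:top + patch_size, left:left + patch_size]

    # Global input: the whole slide resized to the patch dimensions.
    # Assumption: nearest-neighbour sampling of evenly spaced rows/columns.
    rows = np.arange(patch_size) * h // patch_size
    cols = np.arange(patch_size) * w // patch_size
    full = slide[rows][:, cols]
    return patch, full

# A 40x40 slide with 4x4 inputs corresponds to a 10x down-sampling.
slide = np.arange(40 * 40 * 3).reshape(40, 40, 3)
patch, full = make_dual_inputs(slide, top=4, left=8, patch_size=4)
```

Both arrays share the same spatial size, so they can be fed to two encoders with identical input shapes.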

The architecture consists of three main components: (1) two parallel encoders (ENC_slice and ENC_full), built on a U‑Net‑style backbone, that extract multi‑scale features from the slice and the full image respectively; (2) a series of Attention‑Refine blocks that fuse the two streams; and (3) a decoder (DEC_refine) that aggregates the fused features to produce the final segmentation map. Each Attention‑Refine block contains two Residual Convolution Units (RCUs), which are simplified ResNet blocks without batch normalization, followed by an attention‑based feature fusion module and a Chain Residual Pooling (CRP) unit. The attention fusion takes the slice feature X_S, the full‑image feature X_F, and the previous block output O_{k‑1}; it computes a fused representation X_fusion, learns attention weights W_attn = W(X_fusion), and updates the block output as O_k = W_attn · X_S + O_{k‑1}. This mechanism allows the coarse global context to re‑weight and reorganize fine‑grained local features dynamically.
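The update rule O_k = W_attn · X_S + O_{k‑1} can be illustrated with a minimal NumPy sketch. Several details are assumptions made for the example, since the summary does not specify them: X_fusion is taken to be the channel-wise concatenation of X_S and X_F, W is modelled as a 1×1 convolution followed by a sigmoid, and the product is element-wise. The function and parameter names are hypothetical.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def attention_refine_step(x_s, x_f, o_prev, weight, bias):
    """One attention-fusion update, O_k = W_attn * X_S + O_{k-1}.

    x_s, x_f, o_prev: feature maps of shape (C, H, W).
    weight: (C, 2C) and bias: (C,) stand in for the learned map W.
    """
    # Assumption: fuse the two streams by channel concatenation.
    x_fusion = np.concatenate([x_s, x_f], axis=0)            # (2C, H, W)
    # A 1x1 convolution is a per-pixel linear map over channels.
    logits = np.einsum("oc,chw->ohw", weight, x_fusion)
    logits = logits + bias[:, None, None]
    w_attn = sigmoid(logits)                                  # values in (0, 1)
    # Re-weight the local features and add the previous block output.
    return w_attn * x_s + o_prev

rng = np.random.default_rng(0)
c, h, w = 4, 8, 8
x_s = rng.normal(size=(c, h, w))
x_f = rng.normal(size=(c, h, w))
o_prev = rng.normal(size=(c, h, w))
o_k = attention_refine_step(x_s, x_f, o_prev,
                            weight=rng.normal(size=(c, 2 * c)),
                            bias=np.zeros(c))
```

With all-zero weights and bias the attention map is 0.5 everywhere, so the step reduces to `0.5 * x_s + o_prev`, which is a convenient sanity check.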

Three fusion strategies are compared: simple concatenation, element‑wise addition, and the proposed attention‑based fusion. Concatenation increases channel dimensionality but treats all channels equally; addition is computationally cheap but discards inter‑channel relationships; attention fusion learns adaptive weights, yielding the best performance in all reported metrics.
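The difference between the two baseline strategies is easy to see on toy tensors. The sketch below assumes a `(C, H, W)` layout and uses hypothetical helper names; the attention-based variant is left out because it depends on learned weights.

```python
import numpy as np

def fuse_concat(x_s, x_f):
    """Concatenation: stacks channels, so the output has twice as many."""
    return np.concatenate([x_s, x_f], axis=0)

def fuse_add(x_s, x_f):
    """Element-wise addition: keeps the channel count, needs equal shapes."""
    if x_s.shape != x_f.shape:
        raise ValueError("addition requires identical feature shapes")
    return x_s + x_f

x_s = np.ones((8, 16, 16))        # slice features (toy values)
x_f = np.full((8, 16, 16), 2.0)   # full-image features (toy values)

cat = fuse_concat(x_s, x_f)       # shape (16, 16, 16)
add = fuse_add(x_s, x_f)          # shape (8, 16, 16)
```

Concatenation leaves the mixing of the two streams to the following layer at the cost of doubled channels, whereas addition mixes them with fixed, equal weights.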

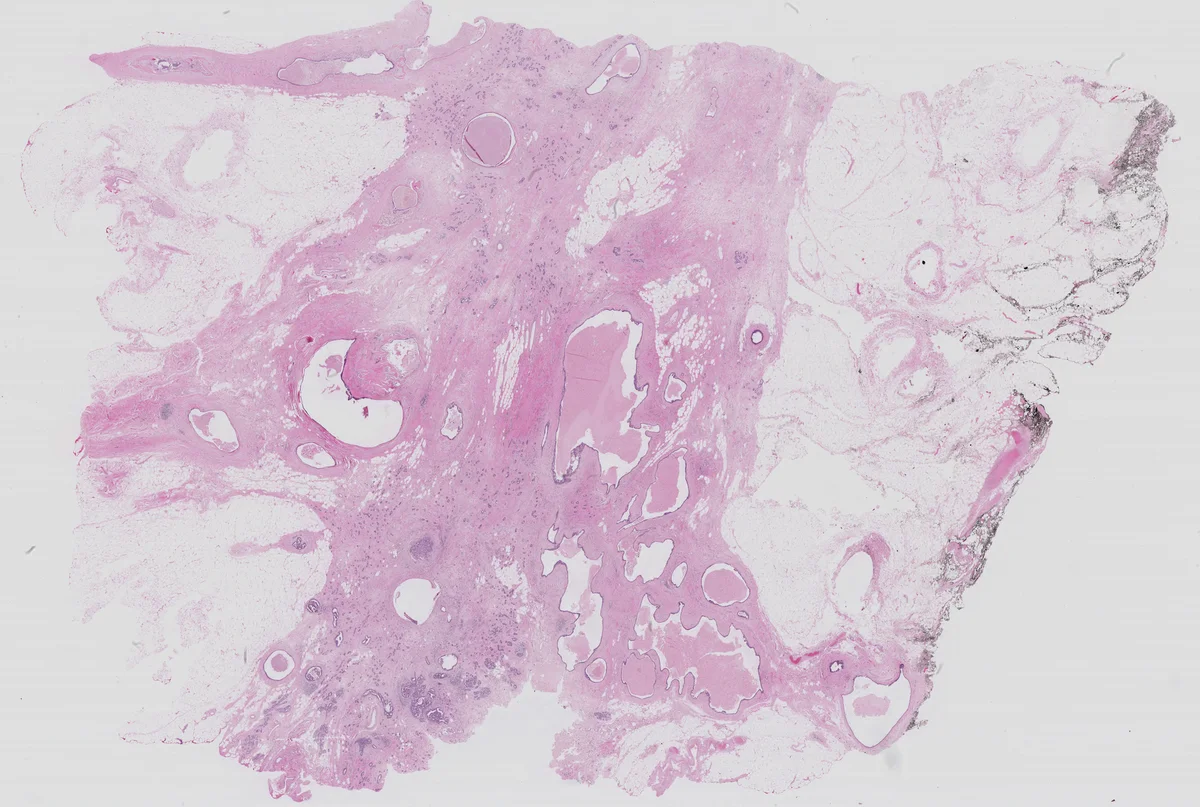

The model is evaluated on the ICIAR2018 dataset, which contains 400 breast histology WSIs annotated with four classes (normal, benign, in‑situ carcinoma, invasive carcinoma). Using five‑fold cross‑validation, the authors report mean Intersection‑over‑Union (MIoU), overall pixel accuracy, and a task‑specific “Score” that penalizes large deviations from ground truth. DA‑RefineNet achieves MIoU = 78.3 % (versus 71.2 % for baseline U‑Net), accuracy = 91.2 % (versus 86.5 %), and Score = 0.84 (versus 0.77). Qualitative results show smoother, more consistent boundaries, confirming that the global context mitigates the patch‑border inconsistencies typical of sliding‑window methods.
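For reference, mean IoU and overall pixel accuracy are commonly computed from a confusion matrix, as in the sketch below. This is the standard definition rather than the authors' evaluation code, and the function names are illustrative. The task-specific Score is not reproduced here because the summary does not give its formula.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """Rows index the true class, columns the predicted class."""
    idx = y_true.ravel() * num_classes + y_pred.ravel()
    counts = np.bincount(idx, minlength=num_classes ** 2)
    return counts.reshape(num_classes, num_classes)

def pixel_accuracy(cm):
    """Fraction of pixels whose predicted class matches the label."""
    return np.trace(cm) / cm.sum()

def mean_iou(cm):
    """Average of TP / (TP + FP + FN) over classes that occur."""
    tp = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - tp
    valid = union > 0                 # skip classes absent from both maps
    return (tp[valid] / union[valid]).mean()

y_true = np.array([[0, 0], [1, 1]])
y_pred = np.array([[0, 1], [1, 1]])
cm = confusion_matrix(y_true, y_pred, num_classes=2)
acc = pixel_accuracy(cm)   # 3 of 4 pixels correct
miou = mean_iou(cm)        # mean of 1/2 and 2/3
```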

Key contributions include: (1) a novel dual‑input framework that expands receptive field without substantial memory increase; (2) an attention‑refine block that effectively merges coarse global and fine local features; (3) an empirical study of fusion strategies demonstrating the superiority of attention‑based fusion. Limitations are acknowledged: the fixed down‑sampling factor may cause information loss for extremely large slides, and the dual‑encoder design increases training time and GPU memory by roughly 30 % compared to single‑input models. Future work is suggested to explore multi‑scale pyramids, dynamic sampling, and more sophisticated attention mechanisms to further balance efficiency and contextual richness.
