Deep Model Predictive Control with Online Learning for Complex Physical Systems

The control of complex systems is of critical importance in many branches of science, engineering, and industry. Controlling an unsteady fluid flow is particularly important, as flow control is a key enabler for technologies in energy (e.g., wind, tidal, and combustion), transportation (e.g., planes, trains, and automobiles), security (e.g., tracking airborne contamination), and health (e.g., artificial hearts and artificial respiration). However, the high-dimensional, nonlinear, and multi-scale dynamics make real-time feedback control infeasible. Fortunately, these high-dimensional systems exhibit dominant, low-dimensional patterns of activity that can be exploited for effective control, in the sense that knowledge of the entire state of a system is not required. Advances in machine learning have the potential to revolutionize flow control given its ability to extract principled, low-rank feature spaces characterizing such complex systems. We present a novel deep learning model predictive control (DeepMPC) framework that exploits low-rank features of the flow in order to achieve considerable improvements to control performance. Instead of predicting the entire fluid state, we use a recurrent neural network (RNN) to accurately predict the control-relevant quantities of the system. The RNN is then embedded into an MPC framework to construct a feedback loop, and incoming sensor data are used to perform online updates to improve prediction accuracy. The approach is validated on a series of fluid flow examples of increasing complexity.

💡 Research Summary

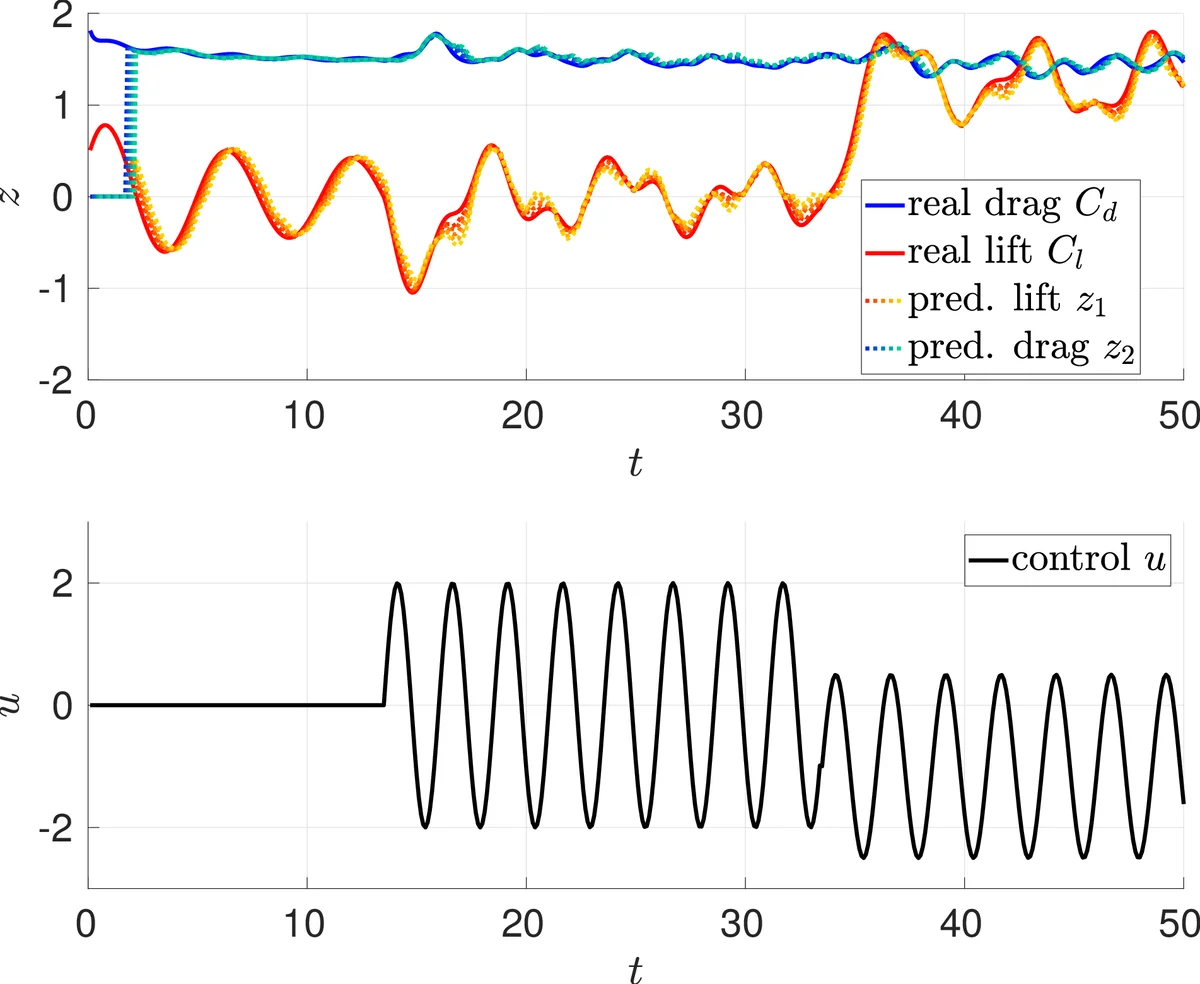

The paper introduces a novel Deep Model Predictive Control (DeepMPC) framework designed to enable real‑time feedback control of high‑dimensional, nonlinear, multi‑scale fluid flows. MPC built on a model of the full state is computationally prohibitive for fluid dynamics, where the discretized state can have millions of degrees of freedom. The authors address this by (1) focusing on a low‑dimensional set of control‑relevant observables, specifically the lift and drag forces on the bodies, and (2) learning a surrogate dynamics model for these observables with a deep recurrent neural network (RNN). The RNN is trained offline on data generated with smoothly varying random actuation and then updated online as new sensor measurements arrive, which helps the model stay accurate under drift or changing flow conditions.
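The summary does not say how the smoothly varying random actuation for the training data is produced. A minimal sketch, assuming random control values drawn at evenly spaced knots and linearly interpolated in between (the function name, knot spacing, and bound `u_max` are hypothetical), could look like this:

```python
import numpy as np


def smooth_random_actuation(n_steps, knot_every=20, u_max=2.0, seed=0):
    """Random but smoothly varying control signal (hypothetical parameters).

    Values are drawn uniformly in [-u_max, u_max] at every `knot_every`-th
    step and linearly interpolated in between, so the input never jumps
    abruptly from one sampling instant to the next.
    """
    rng = np.random.default_rng(seed)
    n_knots = n_steps // knot_every + 2          # enough knots to cover n_steps
    knot_t = np.arange(n_knots) * knot_every
    knot_u = rng.uniform(-u_max, u_max, size=n_knots)
    t = np.arange(n_steps)
    return np.interp(t, knot_t, knot_u)


u = smooth_random_actuation(200)
# Largest change between consecutive steps is bounded by 2*u_max/knot_every.
print(u.shape, np.abs(np.diff(u)).max())
```

Applying such a signal to the flow solver and recording lift and drag would yield input/output sequences for offline training; the interpolation scheme is only one of many possible choices.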

The RNN architecture follows an encoder‑decoder design. The encoder processes a history of delayed observations and control inputs to produce a latent state that captures long‑term dynamics. The decoder consists of N cells, each predicting the observable at the next time step given the latent state and the current control input. This structure allows the network to incorporate both long‑term memory and immediate control effects. Training proceeds in three stages to mitigate exploding/vanishing gradients: (i) a Conditional Restricted Boltzmann Machine initializes the network parameters, (ii) single‑step prediction is learned, and (iii) the full N‑step prediction horizon is trained.
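To make the encoder‑decoder structure concrete, here is a minimal, untrained NumPy sketch of the forward pass. It assumes plain tanh cells, decoder cells that share one set of weights, a linear read‑out, and hypothetical sizes; the summary does not specify cell types or dimensions, and the three‑stage training procedure is not shown.

```python
import numpy as np


class EncoderDecoderRNN:
    """Forward pass of an encoder-decoder RNN (random, untrained weights).

    Assumptions, not taken from the paper: plain tanh cells, weight sharing
    across the N decoder cells, and hypothetical sizes n_z, n_u, n_h.
    """

    def __init__(self, n_z, n_u, n_h=32, seed=0):
        rng = np.random.default_rng(seed)
        s = 0.1
        # Encoder cell: consumes one (z_k, u_k) pair of the delayed history.
        self.We = rng.normal(0.0, s, (n_h, n_h + n_z + n_u))
        # Decoder cell: consumes the latent state and the current control u_i.
        self.Wd = rng.normal(0.0, s, (n_h, n_h + n_u))
        # Linear read-out from the latent state to the observable z_{i+1}.
        self.Wo = rng.normal(0.0, s, (n_z, n_h))
        self.n_h = n_h

    def encode(self, z_hist, u_hist):
        """Compress the delayed observations and inputs into a latent state."""
        h = np.zeros(self.n_h)
        for z_k, u_k in zip(z_hist, u_hist):
            h = np.tanh(self.We @ np.concatenate([h, z_k, u_k]))
        return h

    def predict(self, z_hist, u_hist, u_future):
        """Return predictions z_1..z_N, one decoder cell per future control."""
        h = self.encode(z_hist, u_hist)
        preds = []
        for u_i in u_future:
            h = np.tanh(self.Wd @ np.concatenate([h, u_i]))
            preds.append(self.Wo @ h)
        return np.array(preds)


model = EncoderDecoderRNN(n_z=2, n_u=1)
z_hist = np.zeros((10, 2))   # e.g. 10 delayed (lift, drag) samples
u_hist = np.zeros((10, 1))   # the matching past control inputs
u_future = np.ones((5, 1))   # N = 5 prediction steps
print(model.predict(z_hist, u_hist, u_future).shape)  # (5, 2)
```

The encoder carries the long‑term memory, while each decoder step sees the control input for that step directly, mirroring the split between long‑term dynamics and immediate control effects described above.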

Once trained, the RNN replaces the system map Φ in the MPC optimization problem:

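$$
\min_{u_0,\dots,u_{N-1}} \sum_{i=0}^{N-1} \left\| z_{i+1} - z^{\mathrm{ref}}_{i+1} \right\|^2 + \alpha \left\| u_i \right\|^2 + \beta \left\| u_i - u_{i-1} \right\|^2
$$

subject to $z_{i+1} = \hat{\Phi}(z_i, u_i)$, where $\hat{\Phi}$ is the trained RNN.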
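The objective can be evaluated directly from a predicted trajectory. A minimal NumPy sketch, assuming $u_{-1}$ is the input applied at the previous sampling instant and using placeholder weights `alpha` and `beta` (the paper's values are not given here):

```python
import numpy as np


def mpc_cost(z_pred, z_ref, u, u_prev, alpha=1e-3, beta=1e-2):
    """Evaluate the MPC objective over one prediction horizon.

    z_pred, z_ref: shape (N, n_z), holding z_1..z_N and z_ref_1..z_ref_N
    u:             shape (N, n_u), holding u_0..u_{N-1}
    u_prev:        shape (n_u,), the control u_{-1} applied last time
    alpha, beta:   hypothetical placeholder weights
    """
    tracking = np.sum((z_pred - z_ref) ** 2)
    effort = alpha * np.sum(u ** 2)
    # Differences u_i - u_{i-1}, with u_{-1} = u_prev for the first step.
    du = np.diff(np.vstack([u_prev[None, :], u]), axis=0)
    smoothness = beta * np.sum(du ** 2)
    return tracking + effort + smoothness


# Tracking error 2, effort 0.5 * 2, smoothness 0.25 * 1 (only u_0 - u_{-1} is nonzero).
J = mpc_cost(np.ones((2, 1)), np.zeros((2, 1)), np.ones((2, 1)), np.zeros(1),
             alpha=0.5, beta=0.25)
print(J)
```

Raising `beta` trades tracking speed for smoother actuation, which is the role of the third term in the objective.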

The cost penalizes tracking error, control effort, and rapid input changes. The constrained optimization is solved at each sampling instant using a gradient‑based method such as BFGS, with gradients obtained via back‑propagation through time.
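One way to pose this for BFGS is to eliminate the dynamics constraint by rolling the surrogate forward over the horizon, which leaves an unconstrained problem over $u_0,\dots,u_{N-1}$. The sketch below assumes a hypothetical scalar linear surrogate in place of the RNN so that the backpropagation‑through‑time gradient can be written by hand; it calls SciPy's `minimize(..., method="BFGS", jac=True)` and applies only the first optimized input at each sampling instant (receding horizon). The constants `A`, `B`, `N`, `alpha`, and `beta` are illustrative.

```python
import numpy as np
from scipy.optimize import minimize

# Hypothetical stand-in for the trained RNN: z_{i+1} = A*z_i + B*u_i.
A, B = 0.9, 0.5


def rollout(z0, u):
    """Predict z_0..z_N by iterating the surrogate over the horizon."""
    z = np.empty(len(u) + 1)
    z[0] = z0
    for i, u_i in enumerate(u):
        z[i + 1] = A * z[i] + B * u_i
    return z


def cost_and_grad(u, z0, z_ref, u_prev, alpha, beta):
    """MPC cost and its gradient, computed by backpropagation through time."""
    z = rollout(z0, u)
    e = z[1:] - z_ref
    du = np.diff(np.concatenate([[u_prev], u]))
    J = np.sum(e ** 2) + alpha * np.sum(u ** 2) + beta * np.sum(du ** 2)
    # Gradients of the effort and smoothness terms.
    grad = 2 * alpha * u
    grad += 2 * beta * du
    grad[:-1] -= 2 * beta * du[1:]
    # Backward pass: lam = dJ/dz_{i+1}, accumulated from the end of the horizon.
    lam = 0.0
    for i in reversed(range(len(u))):
        lam = 2 * e[i] + A * lam
        grad[i] += B * lam
    return J, grad


def deep_mpc_step(z0, z_ref, u_prev, N=10, alpha=1e-3, beta=1e-2):
    """Solve one horizon with BFGS and return only the first control."""
    u0 = np.full(N, u_prev)  # warm start from the last applied input
    res = minimize(cost_and_grad, u0, args=(z0, z_ref, u_prev, alpha, beta),
                   jac=True, method="BFGS")
    return res.x[0]


# Closed loop tracking a constant reference of +1 (plant == surrogate here).
z, u_prev = 0.0, 0.0
for k in range(50):
    u_prev = deep_mpc_step(z, np.ones(10), u_prev)
    z = A * z + B * u_prev
print(z)
```

With the real RNN surrogate, the hand‑written backward loop would be replaced by automatic differentiation through the unrolled decoder, and the plant would be the flow solver rather than the surrogate itself.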

Four numerical experiments validate the approach. The first case controls the lift of a single rotating cylinder at Re = 100. The RNN accurately predicts lift and drag several steps ahead, and the DeepMPC successfully forces the lift to follow a piecewise‑constant reference (+1, 0, −1) with smooth actuation. The second set of experiments uses the “fluidic pinball” configuration—three cylinders arranged in a triangle—where the lift of each cylinder is independently tracked. Simulations are performed at Re = 100 (quasi‑periodic), 140 (mildly chaotic), and 200 (strongly chaotic). In the quasi‑periodic case the controller performs comparably to the single‑cylinder scenario. In the chaotic regimes the tracking error grows, as expected due to sensitivity to initial conditions, yet the controller still follows the reference trajectories reasonably well, demonstrating robustness of the learned surrogate model.
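At every sampling instant the controller needs the next N reference values $z^{\mathrm{ref}}_{k+1},\dots,z^{\mathrm{ref}}_{k+N}$. A minimal sketch, assuming the three‑level lift reference (+1, 0, −1) is split evenly over the run and padded with its last value near the end (both the split and the padding rule are assumptions, not details from the paper):

```python
import numpy as np


def piecewise_reference(n_steps, levels=(1.0, 0.0, -1.0)):
    """Hold each reference level for an equal share of n_steps (assumed split)."""
    seg = -(-n_steps // len(levels))  # ceiling division
    return np.repeat(levels, seg)[:n_steps]


def horizon_window(z_ref, k, N):
    """Return z_ref[k+1..k+N], repeating the final value past the end."""
    idx = np.minimum(np.arange(k + 1, k + N + 1), len(z_ref) - 1)
    return z_ref[idx]


ref = piecewise_reference(300)
window = horizon_window(ref, 95, 10)  # spans the +1 -> 0 switch
print(window)
```

Because the window already contains the upcoming switch, the optimizer can start adjusting the input before the reference changes. For the fluidic pinball, one such reference per cylinder could be stacked column‑wise.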

Key contributions of the work are:

- A data‑driven surrogate model that predicts only the low‑dimensional, control‑relevant quantities, eliminating the need for full‑field reconstruction.

- Integration of online learning into the MPC loop, allowing the model to adapt continuously to new sensor data.

- Demonstration that a sensor‑limited, physically realizable setup can achieve high‑performance control of complex flows, bridging the gap between academic benchmarks and practical engineering applications.
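The summary does not detail how the online updates are performed. As an illustration of the idea only, the sketch below swaps the RNN for a hypothetical linear one‑step model and refines it with a stochastic‑gradient (LMS) step after every new measurement; the simulated system, its mid‑run drift from a = 0.9 to a = 0.8, and the learning rate are all assumptions.

```python
import numpy as np


class OnlineLinearSurrogate:
    """Hypothetical stand-in for online RNN updates.

    One-step model z_{k+1} ~ theta @ [z_k, u_k], refined by a single
    stochastic-gradient step on each newly measured transition.
    """

    def __init__(self, lr=0.05):
        self.theta = np.zeros(2)
        self.lr = lr

    def predict(self, z, u):
        return self.theta @ np.array([z, u])

    def update(self, z, u, z_next):
        x = np.array([z, u])
        err = self.predict(z, u) - z_next
        # Gradient of 0.5 * err**2 with respect to theta is err * x.
        self.theta -= self.lr * err * x
        return err


# Simulated "true" system whose dynamics drift halfway through (assumed).
rng = np.random.default_rng(1)
model = OnlineLinearSurrogate()
z = 0.0
for k in range(2000):
    a_true = 0.9 if k < 1000 else 0.8
    u = rng.uniform(-1.0, 1.0)
    z_next = a_true * z + 0.5 * u
    model.update(z, u, z_next)  # learn from the newest sensor sample
    z = z_next
print(np.round(model.theta, 2))
```

After the drift, the parameters re‑converge toward the new dynamics without retraining from scratch, which is the benefit that online learning brings to the MPC loop.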

The authors discuss future directions, including handling measurement noise, scaling to higher Reynolds numbers and three‑dimensional turbulence, and incorporating additional objectives such as energy efficiency or multi‑objective trade‑offs. Overall, the paper showcases how deep learning, when combined with classical optimal control, can extend model‑based control techniques to regimes previously considered intractable.
