A Convex Formulation for Binary Tomography

Binary tomography is concerned with the recovery of binary images from a few of their projections (i.e., sums of the pixel values along various directions). To reconstruct an image from noisy projection data, one can pose it as a constrained least-sq…

Authors: Ajinkya Kadu, Tristan van Leeuwen

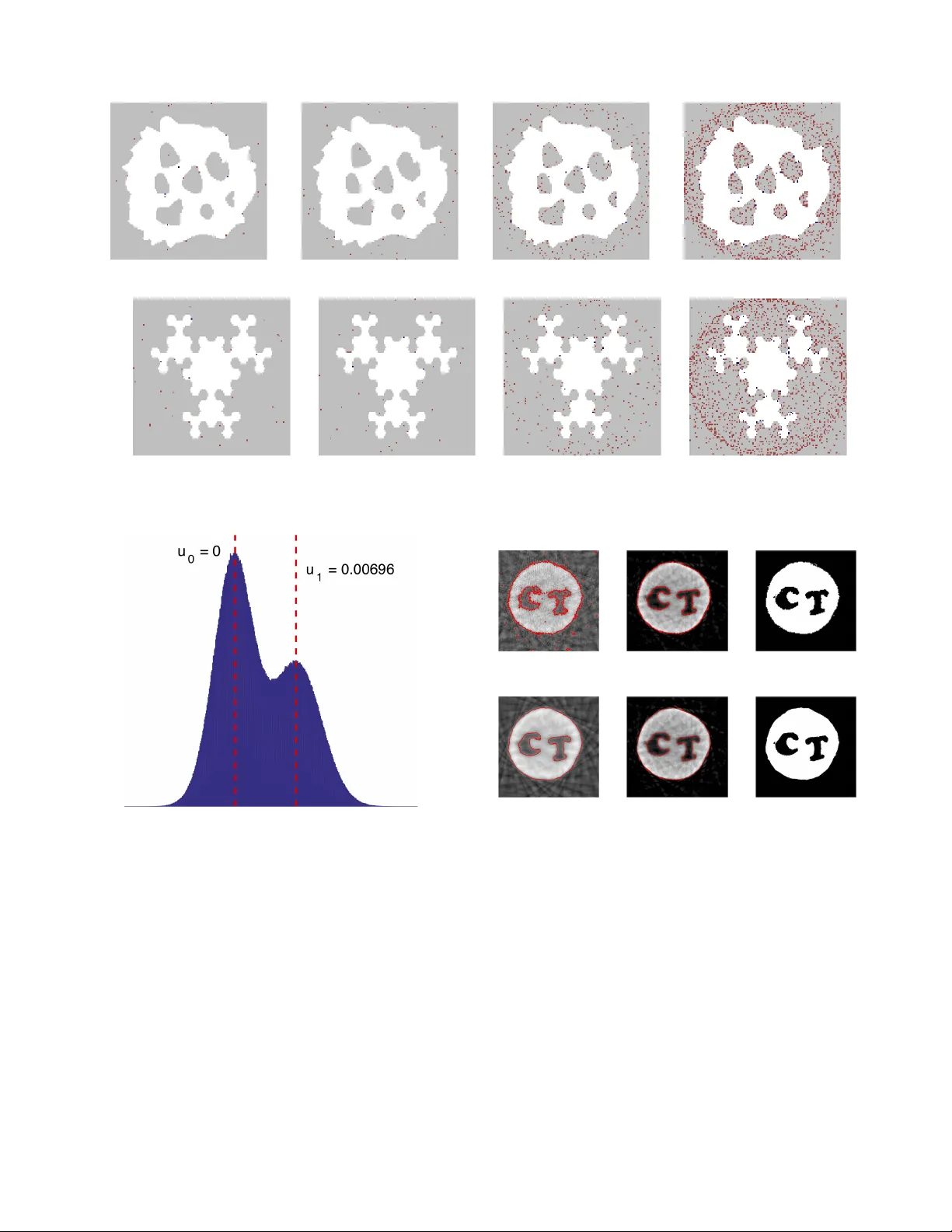

1 A Con v e x F ormulation for Binary T omography Ajinkya Kadu and T ristan van Leeuwen Abstract —Binary tomograph y is concerned with the recovery of binary images from a few of their projections (i.e., sums of the pixel values along various directions). T o reconstruct an image from noisy projection data, one can pose it as a constrained least-squares pr oblem. As the constraints are non-convex, many approaches f or solving it r ely on either r elaxing the constraints or heuristics. In this paper we pr opose a novel convex formulation, based on the Lagrange dual of the constrained least-squares problem. The resulting problem is a generalized LASSO problem which can be solv ed efficiently . It is a relaxation in the sense that it can only be guaranteed to give a feasible solution; not necessarily the optimal one. In exhaustive experiments on small images ( 2 × 2 , 3 × 3 , 4 × 4 ) we find, however , that if the problem has a unique solution, our dual approach finds it. In case of multiple solutions, our approach finds the commonalities between the solutions. Further experiments on realistic numerical phantoms and an experiment on X-ray dataset show that our method compares fav ourably to T otal V ariation and D AR T . The code associated with this paper is av ailable at https:// github .com/ajinkyakadu/BinaryT omo . Index T erms —Binary tomography , in verse problems, duality , LASSO I . I N T R O D U C T I O N D ISCRETE tomography is concerned with the recovery of discrete images (i.e., images whose pixels take on a small number of prescribed grey values) from a few of their projections (i.e., sums of the pixel values along various directions). Early work on the subject mostly deals with the mathematical analysis, combinatorics, and geometry . Since the 1970s, the development of algorithms for discrete tomography has become an activ e area of research as well [1]. It has found applications in image processing and computer vision [2], [3], atomic-resolution electron microscopy [4], [5], medicine imaging [6], [7] and material sciences [8]–[11]. A. Mathematical formulation The discrete tomography problem may be mathematically formulated as follows. W e represent an image by a grid of N = n × n pixels taking values x j ∈ U = { u 0 , u 1 , . . . , u K } . The projections are linear combinations of the pixels along m different (lattice) directions. W e denote the linear transforma- tion from image to projection data by y = Ax , where x j denotes the value of the image in the j th cell, y i is the (weighted) sum of the image along the i th ray and a ij is proportional to the length of the i th ray in the j th cell 1 . A. Kadu and T . van Leeuwen are with the Mathematical Institute, Utrecht Univ ersity , The Netherlands. 1 W e note that other projection models exist and can be similarly represented by a ij . The goal is to find a solution to this system of equations with the constraint that x j ∈ U , i.e., find x ∈ U N such that Ax = y . When the system of equations does not have a unique solution, finding one that only takes values in U N has been sho wn to be an NP-hard problem for more than 3 directions, i.e. , m ≥ 3 [12]. Due to the presence of noise, the system of equations may not have a solution, and the problem is sometimes formulated as a constrained least-squares problem min x ∈U N 1 2 k Ax − y k 2 . (1) Obviously , the constraints are non-con ve x and solving (1) exactly is not trivial. Next, we briefly discuss some existing approaches for solving it. B. Literatur e Review Methods for solving (1) can be roughly divided into four classes: algebraic methods, stochastic sampling methods, (con ve x) relaxation and (heuristic/greedy) combinatorial ap- proaches. The algebraic methods exploit the algebraic structure of the problem and may gi ve some insight into the (non-) uniqueness and the required number of projections [13], [14]. While theoretically very elegant, these methods are not readily generalised to realistic projection models and noisy data. The stochastic sampling methods typically construct a prob- ability density function on the space of discrete images, allow- ing one to sample images and use Marko v Chain Monte Carlo type methods to find a solution [15]–[17]. These methods are very flexible but may require a prohibitiv e number of samples when applied to large-scale datasets. Relaxation methods are based on some form con ve x or non- con ve x relaxation of the constraint. This allows for a natural extension of existing v ariational formulations and iterativ e algorithms [18]–[22]. While these approaches can often be implemented efficiently and apply to large-scale problems, getting them to con ver ge to the correct binary solution can be challenging. Another variant of con ve x relaxation include the linear-programming based method [23]. This method works well on small-scale images and noise-free data. The heuristic algorithms, finally , combine ideas from com- binatorial optimization and iterati ve methods. Such methods are often efficient and known to perform well in practice [24], [25]. A more extensiv e ov erview of various methods for binary tomography and variants thereof (e.g., with more than two grey levels) are discussed in [26]. 2 C. Contributions and outline W e propose a novel, con vex, reformulation for discrete tomography with two grey values { u 0 , u 1 } (often referred to as binary tomography). Starting from the constrained least- squares problem (1) we derive a corresponding Lagrange dual problem, which is con ve x by construction. Solving this problem yields an image with pixel values in { u 0 , 0 , u 1 } . Setting the remaining zero-valued pixels to u 0 or u 1 generates a feasible solution of (1) but not necessarily an optimal one. In this sense, our approach is a relaxation. Exhaustiv e enumer- ation of small-scale ( n = 2 , 3 , 4 ) images with fe w directions ( m = 2 , 3 ) show that if the problem has a unique solution, then solving the dual problem yields the correct solution. When there are multiple solutions, the dual approach finds the common elements of the solutions, leaving the remaining pixels undefined (zero-valued). W e conjecture that this holds for larger n and m as well. This implies that we can only expect to usefully solve problem instances that allow a unique solution and characterize the non-uniqueness when there are a fe w solutions. For practical applications, the most rele vant setting is where the equations alone do not permit a unique solution, but the constrained problem does. Otherwise, more measurements or prior information about the object would be needed in order to usefully image it. W ith well-chosen numerical experiments on synthetic and real data, we show that our new approach is competitiv e for practical applications in X-ray tomography as well. The outline of the paper is as follows. W e first gi ve an intuitiv e deriv ation of the dual problem for inv ertible A before presenting the main results for general A . W e then discuss two methods for solving the resulting conv ex problem. Then, we offer the numerical results on small-scale binary problems to support our conjecture. Numerical results on numerical phantoms and real data are presented in Section IV . Finally , we conclude the paper in Section V . I I . D UA L P RO B L E M For the purpose of the deriv ation, we assume that the problem has pixel values are ± 1 . The least-squares binary tomography problem can then be formulated as: min x , φ 1 2 k Ax − y k 2 , subject to x = sign ( φ ) , (2) where φ ∈ R N is an auxiliary v ariable, and sign ( · ) denotes the elementwise signum function. In our analysis, we consider the signum function such that sign (0) = 0 . The Lagrangian for this problem is defined as L ( x , φ , ν ) , 1 2 k Ax − y k 2 + ν T ( x − sign ( φ )) . (3) The variable ν ∈ R N is the Lagrange multiplier associated with the equality constraint x = sign ( φ ) . W e refer to this variable as the dual v ariable in the remainder of the paper . W e define the dual function g ( ν ) corresponding to the Lagrangian (3) as g ( ν ) , inf x , φ L ( x , φ , ν ) The primal problem (2) has a dual objective expressed as max ν g ( ν ) . As the dual function is always conca ve [27], this provides a way to define a con vex formulation for the original problem. W e should note two important aspects of duality theory here: i) we are not guaranteed in general that maximizing g ( ν ) yields a solution to the primal problem; ii) the reformulation is only computationally useful if we can efficiently e valuate g . The conditions under which the dual problem yields a solution to the primal problem are known as Slater’ s conditions [28] and are difficult to check in general unless the primal problem is con ve x. W e will later show , by example, that the dual problem does not always solve the primal problem. Classifying under which conditions we can solve the primary problem via its dual is beyond the scope of this paper . It turns out we can obtain a closed-form expression for g . Before presenting the general form of g , we first present a detailed deriv ation for in vertible A to provide some insight. A. In vertible A The Lagrangian is separable in terms of x and φ . Hence, we can represent the dual function as the sum of two functions, g 1 ( ν ) and g 2 ( ν ) . g ( ν ) = inf x 1 2 k y − Ax k 2 + ν T x | {z } g 1 ( ν ) + inf φ − ν T sign ( φ ) | {z } g 2 ( ν ) (4) First, we consider g 1 ( ν ) . Setting the gradient to zero we find the unique minimizer: x ? = A T A − 1 A T y − ν . (5) Substituting x ? back in the expression and re-arranging some terms we arriv e at the following expression for g 1 : g 1 ( ν ) = − 1 2 k A T y − ν k 2 ( A T A ) − 1 + 1 2 y T y , (6) where k z k 2 W = z T W z is a weighted ` 2 -norm. Next, we consider g 2 ( ν ) . Note that this function is separa- ble in terms of ν i g 2 ( ν ) = inf φ − N X i =1 ν i sign ( φ i ) . The function − ν sign ( φ ) achieves its smallest value for φ = ν when ν 6 = 0 . This solution is not unique of course, but that does not matter as we are only interested in the sign of φ . When ν = 0 the function takes on value 0 regardless of the value of φ . W e thus find g 2 ( ν ) = −k ν k 1 . (7) Hence, the dual function for the Lagrangian in (3) takes the following explicit form: g ( ν ) = − 1 2 k A T y − ν k 2 ( A T A ) − 1 − k ν k 1 + 1 2 y T y (8) 3 The maximizer to dual function (8) is found by solving the following minimization problem: min ν ∈ R N 1 2 k ν − A T y k 2 ( A T A ) − 1 + k ν k 1 . (9) This optimization problem is famously known as the least absolute shrinkage and selection operator (LASSO) [29] in the statistics literature. It tries to find a sparse vector in image space by minimizing the distance to the back projection of the data in a scaled ` 2 norm. The primal solution can be synthesized from the solution of the dual problem via x ? = sign ( φ ? ) = sign ( ν ? ) . It is important to note at this point that the solution of the dual problem only determines those elements of the primal problem, x i , for which ν i 6 = 0 . The remaining degrees of freedom in x need to be determined by alternativ e means. The resulting solution is a feasible solution of the primal problem, but not necessarily the optimal one. T o gain some insight into the behaviour of the dual objec- tiv e, consider a one-dimensional example with A = 1 : min x ∈{− 1 , 1 } 1 2 ( x − y ) 2 . (10) The solution to this problem is giv en by x ? = sign ( y ) . The corresponding dual problem is min ν ∈ R 1 2 ( ν − y ) 2 + | ν | , (11) the solution of which is given by ν ∗ = max( | y | − 1 , 0) sign ( y ) . Hence, for | y | > 1 , the solution of the dual problem yields the desired solution. For | y | ≤ 1 , howe ver , the dual problem yields ν ? = 0 in which case the primal solution x ∗ = sign ( ν ∗ ) is not well-defined. W e will see in section II-C that when using certain iterative methods to solve the dual problem, the iterations will naturally approach the solution ν ? = 0 from the correct side, so that the sign of the approximate solution may still be useful. B. Main results W e state the main results below . The proofs for these statements are provided in the Appendix section. Proposition 1. The dual objective of (2) for general A ∈ R m × N is given by g ( ν ) = − 1 2 k ν − A T y k 2 ( A T A ) † − k ν k 1 + 1 2 y T y ν ∈ R A , −∞ otherwise wher e † denotes the pseudo-in verse and R A is the r ow-space of A (i.e., the range of A T ). This leads to the following optimization pr oblem min ν ∈R A 1 2 k ν − A T y k 2 ( A T A ) † + k ν k 1 . (12) Remark 1. In case m ≥ N and A has full rank, A T A is in vertible and the general form (12) simplifies to (9) . Corollary 1. The minimization problem (12) can be restated as min µ ∈ R m 1 2 k AA † ( µ − y ) k 2 + k A T µ k 1 , (13) and the primal solution is r ecover ed thr ough x ? = sign ( A T µ ? ) , wher e µ ? is the solution to (13) . Remark 2. F or m ≤ N and A full rank, we have AA † = I and the formulation (13) simplifies to min µ ∈ R m 1 2 k µ − y k 2 + k A T µ k 1 . (14) This form implicitly handles the constraints on the searc h space of ν in Pr oposition 1. It allows us to use the functional form for matrix A thereby r educing the storage and incr easing the computational speed to find an optimal dual variable µ ? . Proposition 2. The dual pr oblem for a binary tomography pr oblem with gre y levels u 0 < u 1 is given by: min ν ∈R A 1 2 k ν − A T y k 2 ( A T A ) † + p ( ν ) , (15) wher e p ( ν ) = P i | u 0 | max( − ν i , 0) + | u 1 | max( ν i , 0) is an asymmetric one-norm. The primal solution is obtained using x ? = u 0 1 + ( u 1 − u 0 ) H ( ν ? ) , wher e H ( · ) denotes the Heaviside function. W e summarize the procedure in algorithm 1 for finding the optimal solution via solving the dual problem. In practical applications, the formulation in step 10 is very useful since the projection matrix A generally has a low rank, i.e . , rank ( A ) < min( m, N ) . Algorithm 1 Dual problem for various cases Input: A ∈ R m × N , y ∈ R m Output: x ? ∈ {− 1 , 1 } N 1: if rank ( A ) = min( m, N ) then 2: if m > N then 3: ν ? , argmin ν 1 2 k ν − A T y k 2 ( A T A ) − 1 + k ν k 1 4: retur n x ? = sign ( ν ? ) 5: else 6: ν ? , argmin ν 1 2 k ν − y k 2 + k A T ν k 1 7: retur n x ? = sign A T ν ? 8: end if 9: else 10: ν ? , argmin ν 1 2 k AA † ( ν − y ) k 2 + k A T ν k 1 11: retur n x ? = sign A T ν ? 12: end if Remark 3. The r ealistic tomographic data contains P oisson noise. In such case, the binary tomography pr oblem takes the constrained weighted least-squar es form [30], [31]: min x , φ 1 2 k y − Ax k 2 Λ , subject to x = sign ( φ ) , (16) wher e Λ ∈ R m × m is a diagonal matrix with elements Λ i > 0 r epr esenting the least-squares weight per pr ojection. The dual objective of (16) is given by g ( ν ) = ( − 1 2 k ν − A T Λ y k 2 B − k ν k 1 + 1 2 y T y ν ∈ R A , −∞ otherwise , 4 − 2 − 1 1 2 1 1 . 5 2 2 . 5 ν − g ( ν ) − 2 − 1 1 2 0 . 5 1 1 . 5 2 ν − g ( ν ) Figure 1. Plot of the dual function g (gray line) corresponding the the primal objective ( x − y ) 2 for y = 1 . 5 (left) and y = 0 . 5 (right) and its approximations (red line) at x = 1 . wher e B , A T Λ A † . The optimization pr oblem for this dual objective is min ν ∈R A 1 2 k ν − A T Λ y k 2 ( A T Λ A ) † + k ν k 1 . (17) If rank ( A ) = m with m ≤ N , the pr oblem (17) reduces to min µ ∈ R m 1 2 k µ − Λ 1 / 2 y k 2 + k A T Λ 1 / 2 µ k 1 , (18) and the primal solution is r ecover ed from x ? = sign ( A T Λ 1 / 2 µ ? ) , wher e µ ? is the solution to (18) . C. Solving the dual pr oblem When A T A is in vertible, the dual formulation (12) can be readily solved using a proximal gradient algorithm ( [32], [33]): ν k +1 , S L − 1 ν k − L − 1 A T A − 1 A T y − ν k , (19) where L = k A − 1 k 2 2 and the soft thresholding operator S τ ( · ) = max( | · | − τ , 0) sign ( · ) is applied component-wise to its input. W e can interpret this algorithm as minimizing subsequent approximations of the problem, as illustrated in figure 1. An interesting note is that, when starting from ν 0 = 0 , the first iteration yields a thresholded version of A † y . As such, the proposed formulation is a natural extension of a naiv e segmentation approach and allows for segmentation in a data- consistent manner . If AA T is in vertible we have AA † = I and it seems more natural to solve (14) instead. Due to the appearance of A T in the one-norm, it is no longer straightforward to apply a proximal gradient method. A possible strategy is to replace the one-norm with a smooth approximation of it, such as | · | = p ( · ) 2 + . As illustrated in figure 2, this will slightly shift the minimum of the problem. Since we are ultimately only using the sign of the solution, this may not be a problem. The resulting objectiv e is smooth and can be solved using any gradient-descent algorithm. W e also note that splitting methods can be used to solve (14). For example, the alternating direction method of mul- tipliers (ADMM) [34] and/or split-Bregman method [35]. Another class of method that can solv e (14) are the primal-dual methods (e.g., Arrow-Hurwicz primal-dual algorithm [36], Chambolle-Pock algorithm [37]). These methods rely on the proximal operators of functions and iterate tow ards finding − 2 − 1 1 2 1 1 . 5 2 2 . 5 ν − g ( ν ) − 2 − 1 1 2 0 . 5 1 1 . 5 2 ν − g ( ν ) Figure 2. Plot of the dual function g (gray line) corresponding the the primal objectiv e ( x − y ) 2 for y = 1 . 5 (left) and y = 0 . 5 (right) and its smooth approximation (red line) using | · | ≈ p ( · ) 2 + with = 0 . 1 . the saddle point of the problem. If the proximal operators are simple, these are computationally faster than the splitting methods. The dual problem (15) for binary tomography problem with grey levels u 0 < u 1 is also solved using proximal gradient method. W e provide the proximal operator for an asymmetric one-norm in the following proposition. Proposition 3. The proximal operator for an asymmetric one- norm function p ( x ) = N X i =1 | u 0 | max ( − x i , 0) + | u 1 | max ( x i , 0) with u 0 < u 1 , is given by P p,λ ( z ) , argmin x 1 2 k x − z k 2 + λp ( x ) = S λu 0 <λu 1 ( z ) , wher e λ > 0 , and S a

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment