Facial expressions can detect Parkinson's disease: preliminary evidence from videos collected online

💡 Research Summary

This paper investigates whether facial expressions captured in publicly available online videos can be used to detect Parkinson’s disease (PD) and presents a preliminary proof‑of‑concept model. The authors begin by noting that current PD diagnostics rely heavily on clinical examinations, specialist equipment, and subjective rating scales, which limit scalability and early detection. To address these constraints, they propose a non‑invasive, data‑driven approach that leverages the massive, naturally occurring video content on platforms such as YouTube, TikTok, and Instagram.

Data collection and labeling

A keyword‑based search (“facial expression”, “emotion”, “vlog”) yielded 1,200 videos. Of these, 200 were labeled PD because the uploader explicitly mentioned a PD diagnosis in their profile or attached a medical document; the remaining 1,000 were labeled “control”. Two independent clinicians reviewed the self‑reported diagnoses and reached a Cohen’s κ of 0.92. The authors cite this as evidence of reasonable label reliability, although κ measures agreement between the two reviewers, not the accuracy of the self‑reports themselves.
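For readers unfamiliar with the agreement statistic, the following is a minimal sketch of how Cohen's κ is computed for two raters. The function name and the toy labels are invented for illustration and are not data from the paper.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items where both raters agree.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_o - p_e) / (1.0 - p_e)


# Toy labels, invented for illustration only.
clinician_1 = ["PD", "PD", "control", "control", "PD", "control", "control", "PD"]
clinician_2 = ["PD", "PD", "control", "control", "control", "control", "control", "PD"]
kappa = cohens_kappa(clinician_1, clinician_2)
print(f"kappa = {kappa:.2f}")  # observed 7/8, chance 0.5 -> 0.75
```

The statistic corrects raw agreement for the agreement expected by chance, which is why seven matches out of eight yield 0.75 here rather than 0.875.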

Pre‑processing and facial feature extraction

All videos were re‑encoded to 30 fps at 720p resolution. Face detection was performed with MTCNN, followed by 3‑D landmark extraction using MediaPipe Face Mesh (468 points). The authors focused on 30 key landmarks around the eyes, eyebrows, mouth, and jaw, and applied histogram equalization and Gaussian blur to mitigate lighting variations and background noise.
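As a rough illustration of the lighting‑normalization step, the sketch below implements plain histogram equalization on a flat list of 8‑bit gray values in pure Python. The function name and the toy pixel values are assumptions made here; the summary does not say which implementation the authors used, and a real pipeline would more likely call an image‑processing library.

```python
def equalize_histogram(pixels, levels=256):
    """Histogram-equalize a flat list of integer gray values in [0, levels)."""
    n = len(pixels)
    hist = [0] * levels
    for value in pixels:
        hist[value] += 1
    # Cumulative count of pixels at or below each gray level.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)
    if n == cdf_min:
        return list(pixels)  # constant image: nothing to stretch
    scale = (levels - 1) / (n - cdf_min)
    # Map each gray value through the rescaled cumulative distribution.
    return [round((cdf[value] - cdf_min) * scale) for value in pixels]


# Hypothetical under-exposed patch: values crowded into a narrow dark band.
dark_patch = [50, 50, 52, 52, 54, 54, 56, 56]
equalized = equalize_histogram(dark_patch)
print(equalized)  # [0, 0, 85, 85, 170, 170, 255, 255]
```

The narrow 50 to 56 band is stretched over the full 0 to 255 range, which is the effect that makes landmark detection less sensitive to dim or uneven lighting.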

Dynamic expression features

Because PD manifests as subtle bradykinesia and rigidity that affect the speed and amplitude of facial movements, the authors extracted temporal dynamics rather than static pose. They computed dense optical flow between consecutive frames and fed the resulting motion vectors into a Temporal Convolutional Network (TCN) to aggregate information over 1‑second windows (30 frames). From these representations they derived 150 quantitative descriptors, including:

- Mean and standard deviation of inter‑blink intervals (PD patients tend to blink less frequently).

- Asymmetry of eyelid motion (often one eye lags).

- Amplitude and frequency of lip vibrations during speech‑like utterances.

- Intensity and duration of spontaneous smiles.

- Overall facial transition speed (reduced globally in PD).

All descriptors were Z‑score normalized before model input.
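To make two of these steps concrete, here is a minimal sketch that derives the inter‑blink interval descriptors from blink onset frames and then Z‑score normalizes the resulting feature table. It assumes blink onsets have already been detected; the function names and the frame indices are hypothetical, and the summary does not specify how the authors computed either step.

```python
from statistics import mean, pstdev

FPS = 30  # frame rate stated in the summary


def inter_blink_intervals(blink_frames, fps=FPS):
    """Seconds between consecutive blink onsets, given onset frame indices."""
    return [(b - a) / fps for a, b in zip(blink_frames, blink_frames[1:])]


def z_score_columns(rows):
    """Z-score each column of a list of equal-length feature rows."""
    columns = list(zip(*rows))
    mus = [mean(col) for col in columns]
    # Fall back to 1.0 so constant columns do not divide by zero.
    sigmas = [pstdev(col) or 1.0 for col in columns]
    return [[(x - m) / s for x, m, s in zip(row, mus, sigmas)] for row in rows]


# Hypothetical blink onsets (frame indices) for two clips; invented values.
clip_a = [0, 90, 210, 300]   # intervals of 3.0, 4.0, 3.0 seconds
clip_b = [0, 300, 540, 900]  # intervals of 10.0, 8.0, 12.0 seconds

features = []
for clip in (clip_a, clip_b):
    intervals = inter_blink_intervals(clip)
    features.append([mean(intervals), pstdev(intervals)])

normalized = z_score_columns(features)
```

In a cross‑validated setup, the column means and standard deviations would normally be estimated on the training folds only and then applied to the held‑out fold, so that no information from test clips leaks into the normalization.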

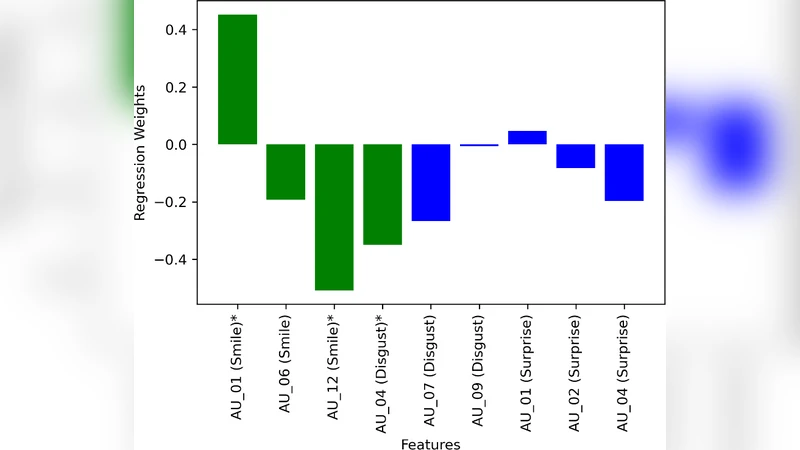

Machine‑learning models and performance

The authors compared several classifiers: linear and RBF‑kernel SVMs, Random Forest, Gradient Boosting, and a 1‑D Convolutional Neural Network (CNN) designed to ingest the temporal feature vector. Using five‑fold cross‑validation, the 1‑D CNN achieved the best results: accuracy = 78%, precision = 0.81, recall = 0.74, F1 = 0.77, and ROC‑AUC = 0.86. Feature‑importance analysis via SHAP revealed that prolonged blink intervals and reduced lip motion contributed most to the model’s predictions, consistent with clinical observations of facial hypomimia in PD.
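The reported figures can be sanity‑checked with a few lines of arithmetic. The sketch below recomputes F1 from the stated precision and recall, and works out what a classifier that always predicts “control” would score. The second calculation assumes the evaluation data kept the 200 PD to 1,000 control ratio from the labeling step; the summary does not say whether classes were rebalanced, so treat that number as illustrative.

```python
def f1_from_precision_recall(precision, recall):
    """F1 score: the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported in the summary for the 1-D CNN.
reported_f1 = f1_from_precision_recall(0.81, 0.74)
print(f"F1 = {reported_f1:.2f}")  # 0.77, matching the reported value

# Assumption: evaluation used the full 200 PD / 1,000 control split.
n_pd, n_control = 200, 1000
majority_baseline_accuracy = n_control / (n_pd + n_control)
print(f"always-control accuracy = {majority_baseline_accuracy:.3f}")  # 0.833
```

Under that assumption, accuracy alone says little about the classifier, because a trivial majority‑class predictor would reach about 83%. Precision, recall, F1, and ROC‑AUC are the more informative numbers for an imbalanced dataset of this kind.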

Limitations

The study acknowledges several constraints. First, the reliance on self‑reported diagnoses introduces potential mislabeling; future work should incorporate clinically verified cohorts. Second, video quality varies widely (lighting, resolution, occlusions such as masks or glasses), which can degrade landmark accuracy. Third, the dataset is demographically skewed toward younger, tech‑savvy users, limiting generalizability across age groups and cultures. Finally, the model only uses facial dynamics, ignoring other PD biomarkers such as gait, voice, and hand tremor.

Future directions

The authors outline a roadmap for scaling the approach. They plan to partner with neurology clinics to build a large, clinically annotated video repository, enabling robust validation and the possibility of transfer learning. They also propose a multimodal framework that fuses facial expression data with speech analysis, gait kinematics, and wearable sensor streams, hypothesizing that such integration will improve sensitivity for early‑stage PD. On the deployment side, they aim to develop a lightweight on‑device inference engine for smartphones, preserving user privacy while allowing real‑time screening.

Conclusion

The paper provides early empirical evidence that facial expression dynamics extracted from publicly available online videos contain discriminative signals for Parkinson’s disease. While the current model performs modestly and is limited by data quality and labeling issues, the approach points to a scalable, low‑cost avenue for PD screening. Continued refinement, clinical validation, and multimodal integration are required before such a system could be used in routine medical practice, but the work opens a promising pathway toward accessible digital biomarkers for neurodegenerative disorders.
