Reconstructing networks

💡 Research Summary

The paper provides a comprehensive overview of network reconstruction, focusing on methods rooted in statistical physics and information theory. It begins by highlighting the pervasive problem of missing or noisy data across various domains—biology, sociology, finance, and e‑commerce—and argues that reconstructing the unknown part of a network is essential for reliable inference and decision‑making. The authors adopt the principle of statistical homogeneity, assuming that observed portions of a network are representative of its overall statistical properties; this assumption underpins all subsequent reconstruction techniques.

The discussion is organized into three scales: macroscopic, mesoscopic, and microscopic.

At the macroscopic level, the paper reviews basic global descriptors such as connectance (link density), degree distribution, assortativity, clustering coefficient, and hierarchical organization. The Erdős‑Rényi (ER) model is presented as the simplest baseline, one that reproduces only the overall link density. Because real networks are typically sparse yet exhibit heavy‑tailed degree distributions, the Chung‑Lu (CL) model is introduced to reproduce the empirical degree sequence in expectation while preserving density. However, CL does not capture degree‑degree correlations or clustering. The Configuration Model (CM) is then described: it maximizes entropy under the constraint of a fixed degree sequence, thereby reproducing degree heterogeneity and providing a more realistic baseline against which higher‑order statistics can be assessed. The authors also discuss how macroscopic reconstruction can be applied to systemic‑risk assessment in financial networks, where only aggregate exposure data are available.
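As a rough illustration of the two simplest baselines named above, the sketch below computes the ER link probability from the observed density and the Chung‑Lu pair probabilities p_ij = k_i k_j / (2L) for an undirected graph without self‑loops. The degree sequence is hypothetical toy data, the function names are illustrative, and the cap at 1 is a common practical convention rather than something taken from the paper.

```python
from itertools import combinations

def er_probability(degrees):
    """Erdos-Renyi link probability that matches the observed link density."""
    n = len(degrees)
    num_links = sum(degrees) / 2            # each link is counted in two degrees
    return num_links / (n * (n - 1) / 2)    # links divided by possible node pairs

def chung_lu_probabilities(degrees):
    """Chung-Lu probabilities p_ij = k_i * k_j / (2L), capped at 1 (assumed convention)."""
    two_l = sum(degrees)
    return {
        (i, j): min(1.0, degrees[i] * degrees[j] / two_l)
        for i, j in combinations(range(len(degrees)), 2)
    }

def expected_degrees(probs, n):
    """Expected degree of each node: sum of link probabilities over its pairs."""
    exp = [0.0] * n
    for (i, j), p in probs.items():
        exp[i] += p
        exp[j] += p
    return exp

degrees = [3, 2, 2, 2, 1]                   # hypothetical degree sequence (toy data)
p_er = er_probability(degrees)              # same probability for every pair
p_cl = chung_lu_probabilities(degrees)      # pair-specific probabilities
exp_k = expected_degrees(p_cl, len(degrees))

print(f"ER probability: {p_er:.2f}")
for k, ek in zip(degrees, exp_k):
    print(f"target degree {k}, Chung-Lu expected degree {ek:.2f}")
```

On a graph this small the Chung‑Lu expected degrees fall short of their targets because self‑loops are excluded; the gap narrows for large, sparse networks.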

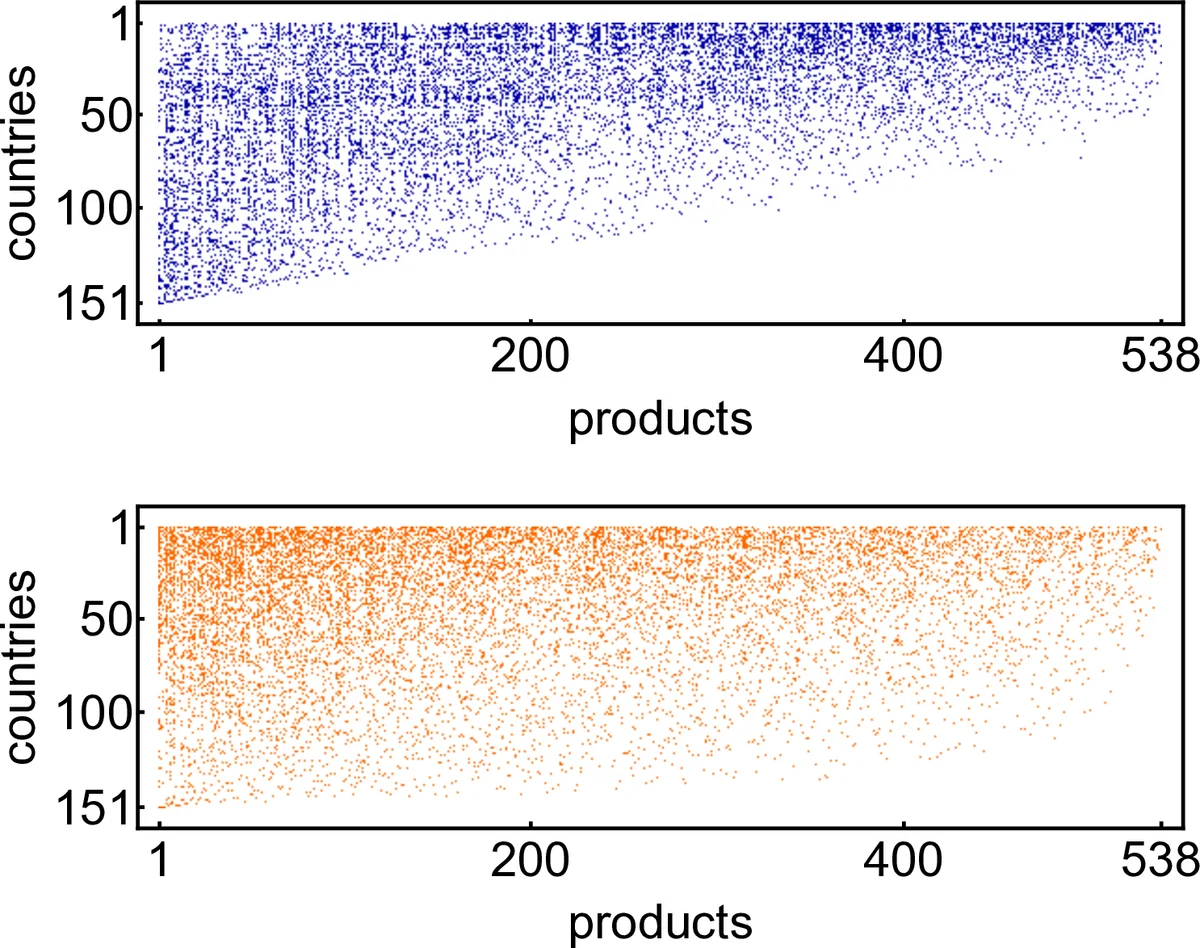

The mesoscopic section focuses on structural patterns that lie between global averages and individual links. Community detection is treated via Stochastic Block Models (SBM) and the degree‑corrected SBM, which infer block memberships and inter‑block connection probabilities. Core‑periphery organization is modeled by separating a densely connected core from a sparsely linked periphery, while the bow‑tie architecture (a strongly connected component, SCC, together with its IN and OUT components) is examined for directed networks. The authors emphasize model selection using the Akaike and Bayesian information criteria (AIC, BIC) to avoid over‑fitting, and they provide a dedicated appendix on bipartite network reconstruction.
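To make the model‑selection step concrete, here is a minimal sketch that scores two candidate partitions of a toy graph with a Bernoulli SBM likelihood and compares them by BIC. It assumes the block memberships are given (no inference is performed), counts only the block‑pair probabilities as parameters, and treats each node pair as one observation; these are simplifying assumptions for illustration, not the paper's procedure.

```python
import math
from itertools import combinations

def sbm_log_likelihood(n_nodes, edges, blocks):
    """Maximized Bernoulli SBM log-likelihood for a fixed block assignment."""
    edge_set = {frozenset(e) for e in edges}
    pairs, links = {}, {}
    for i, j in combinations(range(n_nodes), 2):
        key = tuple(sorted((blocks[i], blocks[j])))
        pairs[key] = pairs.get(key, 0) + 1
        if frozenset((i, j)) in edge_set:
            links[key] = links.get(key, 0) + 1
    loglik = 0.0
    for key, n_rs in pairs.items():
        m_rs = links.get(key, 0)
        if 0 < m_rs < n_rs:                 # empty or full block pairs contribute 0
            p = m_rs / n_rs                 # maximum-likelihood block probability
            loglik += m_rs * math.log(p) + (n_rs - m_rs) * math.log(1 - p)
    return loglik, len(pairs)               # len(pairs) = number of block-pair parameters

def bic(loglik, n_params, n_obs):
    """Bayesian information criterion: lower values indicate a preferred model."""
    return n_params * math.log(n_obs) - 2 * loglik

# Toy graph (hypothetical): two triangles joined by a single link.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
n_nodes = 6
n_obs = n_nodes * (n_nodes - 1) // 2        # one observation per node pair

loglik_one, k_one = sbm_log_likelihood(n_nodes, edges, [0] * 6)
loglik_two, k_two = sbm_log_likelihood(n_nodes, edges, [0, 0, 0, 1, 1, 1])
bic_one = bic(loglik_one, k_one, n_obs)
bic_two = bic(loglik_two, k_two, n_obs)

print(f"one block:  logL={loglik_one:.3f}, BIC={bic_one:.3f}")
print(f"two blocks: logL={loglik_two:.3f}, BIC={bic_two:.3f}")
```

Here the two‑block partition obtains the lower BIC despite its extra parameters, which is the kind of trade‑off the information criteria are meant to arbitrate.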

At the microscopic scale, the paper addresses link prediction, i.e., inferring missing individual edges. Traditional similarity‑based scores (common neighbors, Jaccard, Adamic‑Adar) are compared with model‑based approaches, including probabilistic block models and latent‑space embeddings. A notable highlight is the hyperbolic latent‑space model, which embeds nodes in the hyperbolic plane; distances in this space naturally generate both scale‑free degree distributions and high clustering, matching many real‑world networks. The authors discuss Bayesian inference for noisy observations, and they evaluate prediction performance using ROC‑AUC, precision‑recall curves, and mean average precision (MAP). Applications range from protein‑protein interaction discovery to recommender systems.
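The three similarity‑based scores mentioned above can be sketched in a few lines. The example below uses a hypothetical toy edge list and ranks the unobserved node pairs by their Adamic‑Adar score; the helper names are illustrative and not taken from the paper.

```python
import math
from itertools import combinations

def build_neighbors(edges):
    """Map each node to the set of its neighbors in an undirected graph."""
    neighbors = {}
    for u, v in edges:
        neighbors.setdefault(u, set()).add(v)
        neighbors.setdefault(v, set()).add(u)
    return neighbors

def common_neighbors(nb, u, v):
    """Number of neighbors shared by u and v."""
    return len(nb[u] & nb[v])

def jaccard(nb, u, v):
    """Shared neighbors divided by the size of the combined neighborhood."""
    union = nb[u] | nb[v]
    return len(nb[u] & nb[v]) / len(union) if union else 0.0

def adamic_adar(nb, u, v):
    """Shared neighbors weighted by 1 / log(degree).

    A shared neighbor of two distinct nodes has degree >= 2, so the log is positive.
    """
    return sum(1.0 / math.log(len(nb[w])) for w in nb[u] & nb[v])

edges = [(0, 1), (0, 2), (1, 2), (1, 3), (2, 3), (3, 4)]   # hypothetical toy graph
nb = build_neighbors(edges)
observed = {frozenset(e) for e in edges}

# Candidate links are the node pairs that are not observed as edges.
candidates = [p for p in combinations(sorted(nb), 2) if frozenset(p) not in observed]
ranking = sorted(candidates, key=lambda p: adamic_adar(nb, *p), reverse=True)

for u, v in ranking:
    print((u, v), common_neighbors(nb, u, v),
          round(jaccard(nb, u, v), 3), round(adamic_adar(nb, u, v), 3))
```

In practice such scores would be evaluated by hiding a fraction of known links and checking how highly they are ranked, which is where ROC‑AUC and precision‑based metrics come in.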

The concluding chapter synthesizes the three scales, arguing that they are complementary: macroscopic reconstruction provides a global risk picture, mesoscopic analysis uncovers functional modules and core‑periphery dynamics, and microscopic link prediction refines the network at the finest resolution. The paper also includes appendices detailing bipartite reconstruction and practical guidelines for model selection. Overall, the work serves as a detailed guide for researchers and practitioners seeking to reconstruct complex networks using principled, entropy‑based methods, offering both theoretical foundations and concrete case studies across multiple disciplines.
