Gaussian Process Based Message Filtering for Robust Multi-Agent Cooperation in the Presence of Adversarial Communication

💡 Research Summary

The paper tackles the vulnerability of multi‑agent systems to malicious or faulty communication. It proposes a two‑stage solution that first models the relationship between agents’ observations and their physical positions using a Gaussian Process (GP). Each agent encodes its local observation o_i into a latent posterior q(z_i|o_i) via a neural auto‑encoder and broadcasts this as a message m_i. The GP, equipped with a learnable kernel, captures position‑dependent mutual information among agents, effectively defining a distribution over the mapping from spatial coordinates to latent encodings.
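
The position-to-latent mapping described above can be illustrated with a minimal GP regression sketch. This is not the authors' implementation: the summary only says the kernel is learnable, so the fixed squared-exponential kernel, the hyperparameter values, the function names `rbf_kernel` and `gp_posterior`, and the choice to treat each latent dimension as an independent GP are all assumptions made for illustration.

```python
import numpy as np

def rbf_kernel(xa, xb, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel between two sets of positions (assumed kernel)."""
    sq_dist = np.sum((xa[:, None, :] - xb[None, :, :]) ** 2, axis=-1)
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior(x_train, z_train, x_query, noise=1e-2):
    """Posterior mean and variance of the latent encoding at x_query.

    x_train: (n, d) agent positions
    z_train: (n, k) latent encodings observed at those positions
    x_query: (m, d) positions to predict at
    Each latent dimension is modelled as an independent GP sharing one kernel.
    """
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    k_qq = rbf_kernel(x_query, x_query)

    # Cholesky factorisation gives a numerically stable solve of k_tt^{-1} z.
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, z_train))
    mean = k_qt @ alpha

    # Predictive variance: prior variance minus the part explained by the data.
    v = np.linalg.solve(chol, k_qt.T)
    var = np.diag(k_qq) - np.sum(v**2, axis=0)
    return mean, var
```

Under these assumptions, a query position close to known agents yields a low predictive variance, while a query far from every agent falls back to the prior (zero mean, unit variance), which is the kind of position-dependent structure the second stage relies on.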

In the second stage, a receiving agent i computes a “confidence” weight c_i(j) for each neighbor j by checking how consistent the received message m_j is with the GP‑derived posterior at the relative position x_{ji}. These confidences serve as attention‑like weights in the aggregation step of a Graph Neural Network (GNN): f_i = ∑_{j∈N_i} c_i(j)·φ(m_j), where N_i is the set of neighbors of agent i and φ is the GNN's message transform. When all confidences are near one, the aggregation reduces to the standard unfiltered sum; as c_i(j) approaches zero, neighbor j's contribution to f_i vanishes.
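
The aggregation rule f_i = ∑_{j∈N_i} c_i(j)·φ(m_j) can be sketched in a few lines. The summary does not give the paper's exact confidence formula, so `gaussian_confidence` below is a hypothetical stand-in that scores a message by its squared deviation from a predicted mean; `phi` is likewise an assumed single-layer transform, not the authors' network.

```python
import numpy as np

def gaussian_confidence(message, mean, var):
    """Hypothetical confidence score in (0, 1].

    Returns 1.0 when the message equals the predicted mean and decays
    as the variance-normalised squared deviation grows. This is an
    illustrative stand-in, not the paper's confidence rule.
    """
    z2 = np.mean((message - mean) ** 2 / var)
    return float(np.exp(-0.5 * z2))

def phi(messages, weight):
    """Assumed message transform: one linear layer followed by tanh."""
    return np.tanh(messages @ weight)

def aggregate(messages, confidences, weight):
    """Compute f_i = sum_j c_i(j) * phi(m_j) over the neighbors of agent i.

    messages:    (n_neighbors, k) received messages m_j
    confidences: (n_neighbors,) weights c_i(j), clipped to [0, 1]
    weight:      (k, h) parameters of the assumed transform phi
    """
    confidences = np.clip(confidences, 0.0, 1.0)
    return confidences @ phi(messages, weight)
```

With every confidence equal to one, `aggregate` returns the plain sum of transformed messages; setting one entry to zero removes that neighbor's contribution entirely, matching the two limiting cases described above.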

To evaluate robustness, the authors introduce a taxonomy of adversarial agents: (1) Faulty agents that emit random errors, (2) Naive agents that know the cooperative encoding and policy but are unaware of any detection mechanism, (3) Cautious agents that know a detection mechanism exists and stay within normal communication bounds, and (4) Omniscient agents that have full knowledge of both the cooperative communication protocol and the detection strategy, allowing them to craft optimal attacks.

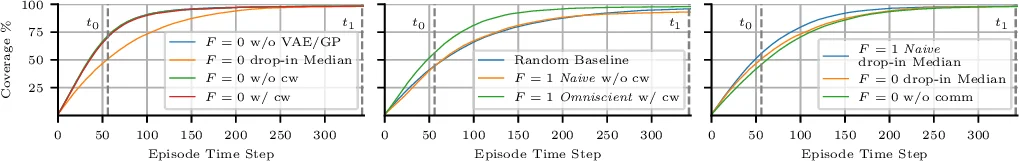

Two experimental domains are presented. The first is a static group‑classification task where spatially proximate agents share strong observation correlations. The GP‑based confidence weighting dramatically reduces the impact of all adversarial types, especially the Omniscient attacker, while preserving near‑perfect accuracy when no adversary is present. The second experiment involves cooperative multi‑agent reinforcement learning, where a rogue agent attempts to manipulate the team for its own benefit. Here, the confidence‑weighted GNN successfully suppresses the misleading messages, maintaining team performance and preventing reward degradation.

Overall, the contributions are: (i) a novel probabilistic model that captures position‑dependent mutual information via a learnable GP, (ii) a systematic taxonomy of adversarial agents, (iii) a low‑overhead confidence‑based message filtering mechanism that integrates seamlessly with existing GNN communication layers, and (iv) extensive empirical validation showing strong robustness across diverse attack scenarios with negligible cost when attacks are absent.
