An Empirical Investigation on the Challenges of Creating Custom Static Analysis Rules for Defect Localization

Background: Custom static analysis rules, i.e., rules specific for one or more applications, have been successfully applied to perform corrective and preventive software maintenance. Pattern-Driven Maintenance (PDM) is a method designed to support the creation of such rules during software maintenance. However, as PDM was recently proposed, few maintainers have reported on its usage. Hence, the challenges and skills needed to apply PDM properly are unknown. Aims: In this paper, we investigate the challenges faced by maintainers on applying PDM for creating custom static analysis rules for defect localization. Method: We conducted an observational study on novice maintainers creating custom static analysis rules by applying PDM. The study was divided into three tasks: (i) identifying a defect pattern, (ii) programming a static analysis rule to locate instances of the pattern, and (iii) verifying the located instances. We analyzed the efficiency and acceptance of maintainers on applying PDM and their comments on task challenges. Results: We observed that previous knowledge on debugging, the subject software, and related technologies influenced the performance of maintainers as well as the time to learn the technology involved in rule programming. Conclusions: The results strengthen our confidence that PDM can help maintainers in producing custom static analysis rules for locating defects. However, a proper selection and training of maintainers is needed to apply PDM effectively. Also, using a higher level of abstraction can ease static analysis rule programming for novice maintainers.

💡 Research Summary

The paper investigates the practical challenges that novice software maintainers encounter when creating custom static analysis rules for defect localization using the recently proposed Pattern‑Driven Maintenance (PDM) approach. The authors motivate their work by pointing out that off‑the‑shelf static analysis tools rely on generic rule sets, which often miss defects that are specific to a particular application or domain. Custom rules can fill this gap, but the process of authoring them is non‑trivial, especially for maintainers who lack deep expertise in program analysis or the target system.

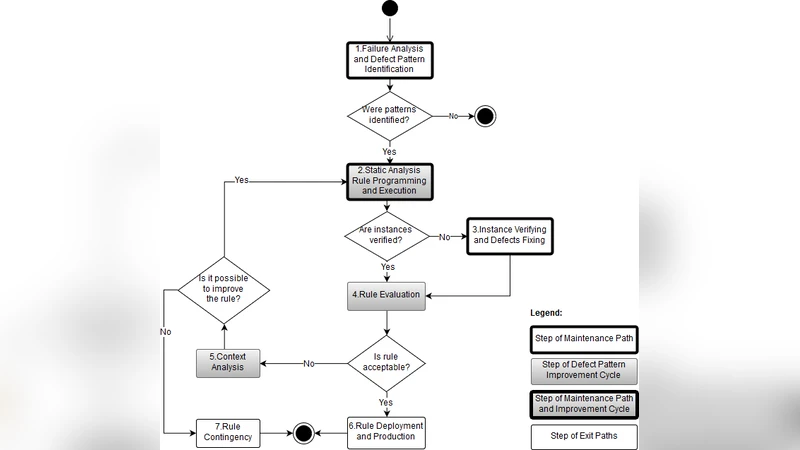

To explore these issues, the researchers conducted an observational study with twelve graduate‑level participants who had basic experience with static analysis tools but no prior exposure to custom rule development. The study was structured around three sequential tasks that mirror the PDM workflow: (1) identifying a defect pattern from bug reports and source code, (2) programming a static analysis rule that captures the identified pattern, and (3) verifying that the rule correctly locates defect instances in the target code base. The participants used a Java‑centric static analysis framework (e.g., Spoon, PMD) and a domain‑specific language (DSL) supplied by PDM to express the rules.

Quantitative data were collected for each task, including time‑on‑task, number of compilation or logical errors, and subjective difficulty ratings. Qualitative data were gathered from think‑aloud comments and post‑task debriefings. The analysis revealed several key findings. First, prior knowledge strongly influenced performance: participants with solid debugging experience and familiarity with the application domain completed the pattern‑identification phase about 30 % faster and produced far fewer DSL errors in the rule‑programming phase. Second, the rule‑programming step (Task 2) was the most demanding. Novices spent an average of 45 minutes learning the DSL and incurred roughly 3.2 compilation errors and 2.1 logical errors per rule, whereas more experienced peers made only about half as many mistakes. Third, overall acceptance of the PDM methodology was high—83 % of participants agreed that a pattern‑driven approach gave them structural insight into defects—but 58 % expressed that the low‑level DSL was a barrier for newcomers, preferring higher‑level abstractions or visual assistance.

Based on these observations, the authors propose practical recommendations. They argue that organizations should carefully select maintainers who already possess strong debugging skills and domain knowledge before assigning them to PDM tasks. Targeted pre‑training on AST manipulation, the specific DSL, and the underlying static analysis engine is essential to reduce the learning curve. Moreover, tool developers should consider augmenting PDM with higher‑level, template‑based rule creation interfaces or visual editors that hide the intricacies of AST traversal, thereby making rule authoring more accessible to novices. Finally, the verification phase should be supported by automated test suites and quantitative metrics (precision, recall) to ensure that newly created rules are both effective and reliable.

The study’s limitations include its modest sample size, focus on a single programming language (Java), and reliance on a specific set of analysis tools. Long‑term effects such as rule maintainability, reuse across projects, and impact on overall maintenance cost were not measured. Future work is suggested to broaden the empirical scope to multiple languages and domains, and to develop a lifecycle model for custom rule management that integrates PDM into continuous maintenance pipelines.

In conclusion, the research provides empirical evidence that PDM can enable maintainers to prototype custom static analysis rules for defect localization, but success hinges on appropriate personnel selection, dedicated training, and tool support that raises the abstraction level of rule authoring. When these conditions are met, even novice maintainers can efficiently produce accurate, application‑specific analysis rules, thereby enhancing the precision of defect detection and supporting more proactive software maintenance practices.