Rapid Exploration of Optimization Strategies on Advanced Architectures using TestSNAP and LAMMPS

Authors: Rahulkumar Gayatri, Stan Moore, Evan Weinberg

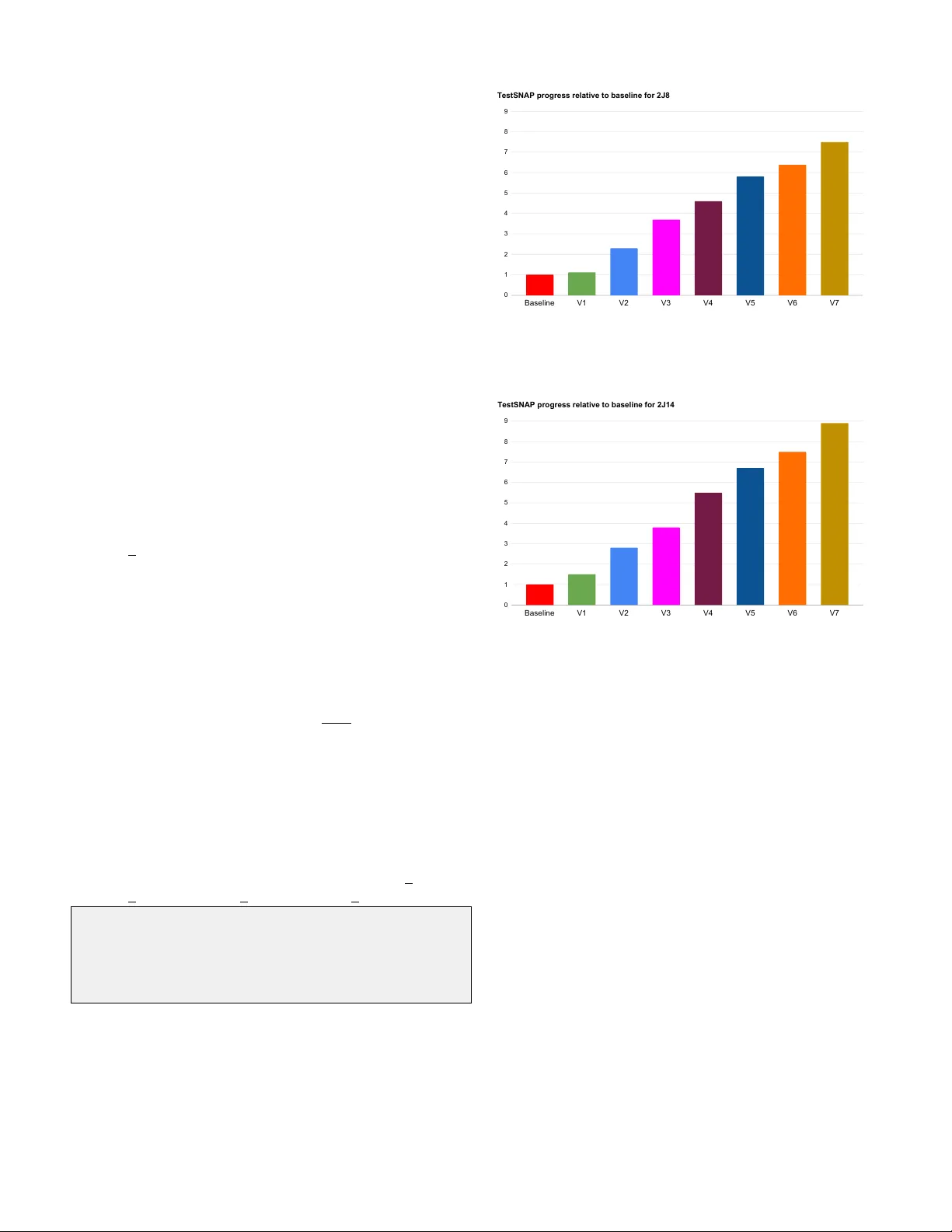

Rapid Exploration of Optimization Strate gies on Adv anced Architectures using T estSN AP and LAMMPS 1 st Rahulkumar Gayatri NERSC Lawr ence Berkele y National Lab Berkeley , USA rgayatri@lbl.go v 2 nd Stan Moore Sandia National Laboratories Albuquerque, USA stamoor@sandia.gov 3 rd Ev an W einberg NVIDIA Corporation Santa Clara, USA eweinber g@n vidia.com 4 th Nicholas Lubbers Los Alamos National Lab Los Alamos, USA nlubbers@lanl.gov 5 th Sarah Anderson Cray Inc Minneapolis, USA saraha@hpe.com 6 th Jack Deslippe NERSC Lawr ence Berkele y National Lab Berkeley , USA jrdeslippe@lbl.gov 7 th Danny Perez Los Alamos National Lab Los Alamos, USA danny perez@lanl.gov 8 th Aidan P . Thompson Sandia National Laboratories Albuquerque, USA athomps@sandia.gov Abstract —The exascale race is at an end with the announce- ment of the A urora and Frontier machines. This next gener - ation of supercomputers utilize diverse hardwar e architectur es to achieve their compute perf ormance, pr oviding an added onus on the performance portability of applications. A solu- tion to this challenge is the evolution of perf ormance-portable frameworks, pro viding unified models for mapping abstract hierarchies of parallelism to diverse architectures. Kokkos is one such performance portable programming model for C++ applications, pro viding back-end implementations for each ma- jor HPC platform. Even with the availability of performance portable frameworks, modern compute resources which feature heterogeneous node architectur es containing multicor e processors and manycore accelerators, challenge application developers to restructur e algorithms to expose higher degrees of parallelism. The Spectral Neighbor Analysis P otential (SN AP) is a machine- learned inter -atomic potential utilized in cutting-edge molecular dynamics simulations. Pre vious implementations of the SNAP calculation showed a downward trend in their perf ormance relati ve to peak on newer -generation CPUs and low perf ormance on GPUs. In this paper we describe the restructuring and opti- mization of SNAP as implemented in the Kokk os CUD A back end of the LAMMPS molecular dynamics package, benchmarked on NVIDIA GPUs. W e identify novel patterns of hierarchical parallelism, facilitating a minimization of memory access over - heads and pushing the implementation into a compute-saturated regime. Our implementation via Kokk os enables recompile-and- run efficiency on upcoming architectur es. W e find a ∼ 22x time- to-solution improvement relativ e to an existing implementation as measured on an NVIDIA T esla V100-16GB for an important benchmark. Sandia National Laboratories is a multimission laboratory managed and operated by National T echnology and Engineering Solutions of Sandia, LLC., a wholly owned subsidiary of Honeywell International, Inc., for the U.S. Department of Energy’ s National Nuclear Security Administration under contract DE-NA-0003525. This paper describes objective technical results and analysis. Any subjective views or opinions that might be expressed in the paper do not necessarily represent the vie ws of the U.S. Department of Energy or the United States Go vernment. Index T erms —exascale, GPUs, programming framew orks, Kokk os, hierarchical parallelism, shared memory , manycore architectur es, multicore architectur es. I . I N T RO D U C T I O N In order to reach exaflop performance while maintaining power constraints, heterogeneous node architectures hav e be- come the norm. The heterogeneous node combination consists of one or more host CPUs, which are usually multicore processors, and one or more accelerators or GPUs also called a device . As of June 2020, six out of the top ten machines in the list of top 500 supercomputers depend on such heterogeneous architectures to achieve their compute performance [1]. The next generation of supercomputers such as Perlmutter , Aurora, and Frontier follow the same path by relying on NVIDIA, Intel, and AMD GPUs, respecti vely , for the majority of their compute bandwidth. The trend of heterogeneous node composition has also led to an increased focus on application de velopment. Application dev elopers are no w forced to re-think their parallelization strategies to effecti vely utilize nearly a 100 × increase in the number of threads av ailable on each node. Apart from effecti v ely utilizing the increase in the computational po wer , programmers now need to parallelize the application code for both multicore and man ycore architectures. This has led to the dev elopment of multiple performance-portability framew orks such as Kokk os [2], Raja [3], OpenMP of fload, etc. The intention of these models is to provide a single coding front- end for the application developers while the frameworks main- tain multiple backend implementations optimized for specific hardware architectures such as CPUs and GPUs. While these framew orks striv e to generate optimized code tailored for the underlying architecture, it is also important for the application de velopers to restructure their code to exploit the massiv e increase in computational power via hardware tailored to exploiting parallelism. The threads on a GPU are organized in a hierarchical fashion. For example on NVIDIA GPUs, O (100 − 1000) threads are grouped as a thread block and share resources among themselves. Similarly on Intel’ s Gen9 GPU architectures, the threads are di vided into thread- groups and threads. Application dev elopers need to design a parallel implementation that can distribute work ef fectiv ely across both lev els of the hierarchy . Additionally , GPUs have their own memory space and memory hierarchies, increasing the complexity of the appli- cation. On GPUs, threads performing fully coalesced memory reads (i.e., access of consecutiv e memory locations) minimize memory transactions and thus minimize latencies. Such a memory access pattern should be a voided on CPUs since separate threads accessing memory locations in the same cache line results in false sharing and thrashing. Compared to CPUs, modern GPUs have a very large ratio of compute throughput to memory bandwidth, also referred to as a high arithmetic intensity (AI). Optimally written kernels on CPUs often become memory bound on GPUs. In these scenarios new strategies which reduce reads are inv aluable: kernel fusion and even redundant computation can be net- beneficial if such optimizations improve the AI of a kernel [4]. Spectral Neighbor Analysis Potential (SNAP) is a compu- tationally intensi ve interatomic potential in the LAMMPS [5] molecular dynamics software package. W ith the introduction of new CPU and GPU architectures, SNAP’ s performance relativ e to the peak flop rating of the architecture showed a downw ard trend on CPUs and low compute utilization on GPUs. In this paper we describe our efforts to improve the performance of SNAP by optimizing on all the points mentioned above. W e explain how we overcame performance deterrents in SNAP to gain a ∼ 22 × performance increase over the existing GPU implementation. I I . T H E S NA P F O R C E K E R N E L Interatomic potentials (IAPs) are a critical part of any classical molecular dynamics (MD) simulation. The use of a classical IAP implies that accuracy-limiting approximations are acceptable. The most important assumptions shared by many IAPs are as follows. First, all-electron mediated inter- actions of atoms can be described by the Born-Oppenheimer potential energy surface. Second, the force on a given atom does not depend on the positions of atoms beyond a certain distance. This latter constraint permits the use of efficient algorithms that are linear scaling in the number of atoms, as well as ensuring that large problems can be ef ficiently distributed ov er leadership computing platforms. Many IAPs capture local interactions using approximations inspired by known physical and chemical phenomena, such as chemical bonding, electrostatic screening, local coordination, etc. The dev elopment of new IAPs follows a decades long trend where much of the ef fort has focused on more accurate, b ut also more complex and computationally expensiv e IAPs [6]. A recent branch of this dev elopment combines strategies of MD and data science, producing machine-learned (ML) IAPs. Machine-learned IAPs translate the atomic neighbor- hood into a set of generalized descriptors . These descriptors are independently weighted to match a database of higher fidelity results (e.g. ab initio quantum electronic structure calculations). A variety of dif ferent descriptors which describe the local en vironment of an atom exist in the literature [7], such as symmetry functions [8], bispectrum components [9], and the Coulomb matrix [10]. The Spectral Neighbor Analysis Potential (SNAP) [11] utilizes a basis expansion of bispectrum components as a descriptor of atomic en vironments. Drautz has recently sho wn that many of these descriptors, including the SN AP bispectrum, share a common mathematical founda- tion in the atomic cluster expansion for the Born-Oppenheimer potential energy function [12]. A. Mathematical Structure of SNAP In the SN AP potential energy model, the total energy of a configuration of atoms is composed as a sum of atomic energies. For each atom i , its energy E i is assumed to be a function of the positions of neighbor atoms out to some finite distance R cut . The positions of neighbor atoms are represented as an atomic density function defined in the 3D ball of radius R cut . In SN AP , this compact domain is mapped to the unit 3-sphere. Expanding the neighbor density as a Fourier series, we obtain the following Fourier coef ficients U j = X r ik , int >; MDPolicyType2D mdPolicy2D({0, 0}, {num_atoms, num_nbor }); parallel_for(mdPolicy2D, KOKKOS_LAMBDA( const int natom , const int nbor) { dulist(natom,nbor) = ...; }); } Listing 4: MDRangeP olicy to collapse atom and neighbor loops in compute dU routine. Line 3 of listing 4 acquires the default ex ecution space for launching the work inside a Kokk os parallel for construct, the NVIDIA GPU in the context of this work. Line 4 shows the declaration of a 2D range policy type in the default ex ecution 0.00 0.25 0.50 0.75 1.00 Atom only Atom+nbor Baseline 2J8 2J14 Baseline vs TestSNAP Fig. 1: Baseline performance compared with T estSNAP after atom and neighbor loop parallelization on V100. space with a 32-bit inte ger iterator . Line 5 creates a 2D policy type where the first and second loop are over num atoms and num nbor respectiv ely . Line 7 shows the launching of the 2D range policy . The results of the two parallelization strategies discussed abov e are shown in Fig. 1 for the 2J8 and 2J14 problem sizes on NVIDIAs V100 architecture. As explained earlier, we present the results of our GPU implementation relativ e to the baseline implementation. Hence anything higher than “1” would imply performance improvement and conv ersely anything lower would mean that our implementation is slo wer than the baseline. In Fig. 1, the first set of bars show the performance comparison of our implementation when only atom loop is distributed across threads of a GPU. For our target problem size we see a 1.5 × and 2.0 × regression for the 2J8 and 2J14 problem sizes. A regression at this stage is reasonable. W e increased our memory footprints to 3 and 5GB for the two problem sizes, respectiv ely , with implications for cache reuse. In addition, relative to the baseline we are exposing less parallelism by not threading over neighbors and j , j 1 , j 2 . The latter is our next point of focus. The second set of bars in Fig. 1 show the performance comparison of atom and neighbor loop parallelization. Refac- toring to use an MDRangeP olicy increases the amount of exposed parallelism by a factor equal to the number of neighbors per atom, here 26. Unfortunately , we now also need to store information between kernel launches as a function of both atom and neighbor number . This increases the memory footprint of [U,dU ,dE]list by a number-of-neighbors factor , again 26, leading to a total memory footprint of 5 GB for the 2J8 problem size and an out-of-memory error for the 2J14 problem size! Hence as can be observed in the second set of bars in the figure, while the performance parity for 2J8 is restored with the baseline code, the comparison for 2J14 is conspicuously missing. For comparison, the baseline code has a GPU memory footprint of 2 GB for the 2J8 problem and 14 GB for the 2J14 problem. There is no trivial solution to the out-of-memory error for the 2J14 problem size. The robust solution to this problem is gi ven by the so-called adjoint refactorization which we describe in section IV. I V . A D J O I N T R E FAC T O R I Z A T I O N The original formulation of the SN AP force calculation relied on pre-calculating and storing the Z matrices for each atom. This avoided repeated calculation of the O ((2 j + 1) 4 ) Clebsch-Gordan products for each of the (2 J + 1) Z matrices. W ith this strategy the total memory footprint per atom scales as O ( J 5 ) . T o a void this issue we combine (5) and (6) and define a new quantity Y that is the adjoint of B with respect to U , Y j = X j 1 j 2 β j j 1 j 2 Z j j 1 j 2 . (7) In this formulation each Z matrix can be computed and immediately accumulated to the corresponding Y j . This re- duces the O ( J 5 ) storage requirement for Z , replacing it by the O ( J 3 ) storage requirement for Y j . As noted in a recent paper by Bachmayr et al. [16], this refactorization is equi v alent to the backward differentiation method for obtaining gradients from neural networks. This separate computation of Y j has the additional benefit of eliminating the sum over j 1 and j 2 from compute dB . Since Y j is neighbor -independent this eliminates an additional O ( N nbor ) of storage and computation relati ve to the previous implementation. W ith this refactorization we can av oid calculating and storing dB prior to the force calculation, an O ( J 3 ) reduction in memory ov erheads. The optimized SNAP force calculation is now formulated as a sum ov er one bispectrum index j instead of three, giving F k = − N X i =1 J X j =0 Y j : ∂ U ∗ j ∂ r k . (8) In practice we store the force contributions to an N atom × N neig h structure dElist . This is because in the full LAMMPS MD workflo w indi vidual dElist components contribute to other quantities of interest, such as the virial tensor . Listing 5 shows the modified T estSN AP algorithm with the adjoint factorization implemented. In summary , we have replaced the routines compute Z and compute dB by compute Y and compute dE , respectively . int natom, nbor; build_neighborlist(); compute_U(); compute_Y(); compute_dU(); compute_dE(); update_forces(); Listing 5: T estSNAP code V . O P T I M I Z A T I O N O F R E FAC T O R E D C O D E The adjoint refactorization reduced both memory o verheads and the computational complexity of the SN AP calculation. 0 1 2 3 4 5 6 7 8 9 Baseline V1 V2 V3 V4 V5 V6 V7 TestSNAP progress relative to baseline for 2J8 Fig. 2: T estSNAP progress relativ e to baseline for 2J8 problem size on NVIDIA V100. 0 1 2 3 4 5 6 7 8 9 Baseline V1 V2 V3 V4 V5 V6 V7 TestSNAP progress relative to baseline for 2J14 Fig. 3: T estSNAP progress relati ve to baseline for 2J14 prob- lem size on NVIDIA V100. W e additionally flattened jagged multi-dimensional arrays re- lated to the j 1 , j 2 , j structures which further reduced memory use. These optimizations reduced the memory requirements for the 2 J = 14 problem size to 12 GB, rendering our algorithm tractable on a V100-16GB. The adjoint refactorization and memory reduction improved performance on CPUs as well giving us a 3 × performance boost on the Intel Broadwell CPU for the 2J8 problem size. W e document next a series of optimizations we performed on the refactored algorithm. A summary of our figure of merit, the grind-time, relati ve to the baseline is gi ven in Fig. 2 and 3 for the 2J8 and 2J14 problem sizes, respectively . The performance numbers shown in the figures are obtained by running T estSNAP on NVIDIA ’ s V100 GPU. The labels on the x-axis correspond to subsection numbers, V1 through V7 , in which we provide detailed descriptions of our optimizations. The height of the bar for any giv en subsection assumes the optimizations from all previous subsections are in place. A. V1 - Atom loop parallelization Follo wing the pattern of our initial T estSNAP Kokk os implementation, our first step is to refactor the algorithm into four discrete kernels, each of which act on all atoms. In summary , for each of compute [U ,Y ,dU,dE] we launch a K okkos::parallel for in which each Kokk os thread (here mapping to a CUDA thread) performs work for a single atom. For further details we refer the reader back to Sec. III-B1 and to the reference code for compute U in listing 3. In particular the Kokkos re-implementation of compute U is unchanged relativ e to T estSN AP as the adjoint refactoring did not effect that kernel. Because this stage is a prescriptiv e refactorization, success was ev aluated by verifying correctness relati ve to the baseline. This is provided by construction in T estSN AP as discussed in Section II. In contrast to the implementation before the adjoint refactorization demonstrated in Fig. 1, we already see a 15% and 50% improvement for the 2J8 and 2J14 problem sizes respecti vely . W e owe this benefit to the reduction in memory transfers, most importantly the elimination of the O ( J 5 ) storage required for Zlist . Both problem sizes already show a speed-up relativ e to the baseline code. B. V2 - Atom and neighbor loop parallelization The next step is to expose additional parallelism over the neighbor dimension in the compute dU and compute dE routines. W e refer the reader back to Sec. III-B2 for the broad motiv ations. In contrast to the T estSN AP case where we used an MDRangeP olicy , here we consider additional methods of parallelizing over the nested atom, neighbor loops. One strategy to consider is assigning one K okk os team, equiv alently CUD A threadblock, to each atom and distributing the neigh- bors across the Kokk os threads, equiv alently CUD A threads, within a block. In our benchmark each atom has exactly 26 neighbors, implying one warp per threadblock (32 threads) would be sufficient. Another strategy , the one we found most effecti v e, is to collapse the neighbor and atom loops and launch a one-dimensional K okkos parallel for . This allows K okkos to manage scheduling work across threads and thread blocks. W e sketch this implementation in listing 6. This strategy begged an opportunity to expose neighbor parallelism in the compute U routine. This requires the in- troduction of atomic additions. As noted in Sec. II, for each atom the compute U routine calculates the sum of expan- sion coefficients U j ov er each neighbor . When we did not thread over neighbors this sum could be safely performed without atomics. Our implementation featuring both atom, neighbor parallelism and atomic additions is giv en in listing 7. void compute_dU { const int nTotal = num_atoms * num_nbor; Kokkos::parallel_for(nTotal, KOKKOS_LAMBDA( int iter) { int natom = iter / num_nbor; int nbor = iter % num_nbor; dUlist(natom,nbor,...) = ... }); } Listing 6: Atom and neighbor loops collapsed in compute dU routine void compute_U { //Kernel 1 Kokkos::parallel_for(nTotal, KOKKOS_LAMBDA( int iter) { int natom = iter / num_nbor; int nbor = iter % num_nbor; for ( int j = 0; j < idxz; ++j) Ulist(natom,nbor,j) = ... //Update Ulist }); //Kernel 2 Kokkos::parallel_for(nTotal, KOKKOS_LAMBDA( int iter) { int natom = iter / num_nbor; int nbor = iter % num_nbor; for ( int j = 0; j < idxz; ++j) Kokkos::atomic_add(Ulisttot(natom,j), Ulist(natom, nbor,j)); }); } Listing 7: 2 kernels in compute U routine In contrast to V1 , the ev aluation of merit is non-trivial because the atomic additions we introduced do ha ve a per - formance penalty relative to non-atomic additions. Nonethe- less, profiler-dri ven optimization indicates this penalty is well amortized by the introduction of additional parallelism. As documented in Figs. 2 and 3 we find a net ∼ 2 × performance improv ement from a verified correct introduction of neighbor parallelism for both the problem sizes. C. V3 - Data layout optimizations The previous two sections document exposing additional parallelism in each routine. Exposing additional parallelism requires a careful reconsideration of data layouts to preserve sequential memory accesses across threads. For GPUs, the data dimension over which the parallelization happens should be stored contiguously in memory . Our T estSNAP implemen- tation originally used a CPU-friendly row-major data layout where the flattened j indices were fastest while the paral- lelization happened over the atom dimension. The strategy of threading ov er atoms lends itself to an atom-fastest data layout on GPUs. Changing to a column-major data layout, and profiling each kernel to verify if the intended change resulted in memory coalescing, sho wed that this transformation only improv ed coalescing in compute Y . The reason for this will be addressed in V4 . For now , we note that this data layout modification in compute Y gav e us a 1.6 × speedup for 2J8 and 1.3 × speedup for the 2J14 problem sizes. D. V4 - Atom loop as the fastest moving index As mentioned earlier in compute [U,dU ,dE] there are still non-coalesced accesses because our con vention for unflattening the atom, neighbor loop was neighbor- fastest. The solution to this problem is tri vially changing to atom-fastest. Comparing the atom and neighor index calculation in listing 6 and listing 8, sho ws us how the atom loop can be made the fastest index. int nbor = iter / num_atoms; int natom = iter % num_atoms; Listing 8: Atom loop as the fastest moving index This loop reversal gav e us another 2 × performance boost. W e verified that these optimizations were implemented correctly by , as with V3 , profiling and checking memory coalescing metrics. The data layout and loop order optimizations documented above ga ve an aggregate ∼ 3.5 × speedup for 2J8 and ∼ 4 × speedup for 2J14 problem size. This is sho wn in bars V3 and V4 of Fig. 2 and 3. E. V5 - Collapse bispectrum loop At this point, the compute Y routine was the most compute intensiv e. T o expose more parallelism in the routine we assigned a single Kokk os team to an atom and distributed the flattened loops ov er j , j 1 , j 2 across the Kokk os threads within a team. As this optimization is prescriptive, success is defined by an improvement in performance. W e benchmarked this optimization and verfied a ∼ 3 × performance boost in the particular kernel and an 80% overall improvement in the performance as can be observed in the V5 bars of the performance figures. F . V6 - T r anspose Ulisttot Coalesced memory access is preferred on GPUs if con- secutiv e threads access consecutiv e memory locations in a data structure. W e were aw are at the conclusion of V3 that in compute Y consecutive threads access strided indexes in Ulisttot . This led to row-major being the optimal access pattern for Ulisttot in compute Y . Howe ver its access in compute U should be column-major to optimize the atomic add calls shown in listing 7. The solution to this problem is to add a transpose of Ulisttot between compute U and compute Y . This is a negligible overhead, 0.2%, compared to the benefit of coalescing the repeated reads on Ulisttot , giving a 15% and 20% performance improvement for 2J8 and 2J14 problem sizes. This optimization is reflected in column V6 of Figs. 2 and 3. G. V7 - 128 bit load/store T argeted profiling of T estSNAP using Nsight Compute showed that the SN Acomplex complex data type was gener- ating two distinct 64 bit loads and stores instead of a single 128 bit transaction. This led to non-coalesced reads and writes on GPUs. T o guarantee the alignment requirements of 128 bit instructions we marked the SN Acomplex with the C++11 alignas(16) specifier . This optimization gave an additional 15% performance improvement for 2J8 and 19% improvement for 2J14. While T estSNAP ga ve us an overall of 7 . 5 × speedup for 2J8 and 8 . 9 × for 2J14, when these optimizations were imple- mented in the LAMMPS production code [17], we achiev ed a 7.3 × speedup for 2J8 and an 8.2 × speedup for 2J14 relati ve to the baseline. V I . A R C H I T E C T U R E S P E C I FI C O P T I M I Z A T I O N S T o this point each of our optimizations were agnostic to running on the CPU or GPU up to dif ferences hidden within implicit conv entions in the Kokk os framework. W e identified that further optimizations were possible on the GPU relative to the CPU because of the required arithmetic intensity (AI) to properly saturate each device. It is well known that GPU devices require a higher AI, equi valently FLOPS/byte fetched from memory , to break out of a memory bandwidth bound regime. In contrast to the previous section, these optimizations were implemented directly in LAMMPS without first being implemented in the T estSN AP proxy . Numerical correctness of the optimizations was v erified by comparing the thermody- namic output (e.g. energy and pressure) of the new v ersion to that of the baseline version over several timesteps. A. Recursive P olynomial Calculations V ia a straightforward analysis, the kernels Compute U and compute dU should be (close to) compute-bound due to the large numbers of floating-point operations per atom, neigh- bor pair . Howe ver , GPU profiling found these kernels were memory bandwidth bound as implemented. After analysis, we determined this is due to repeated loads and stores to Ulist and dUlist , respecti vely , represented for example in listing 7. This is less of an issue on the CPU again because of the lower required arithmetic intensity to saturate the host processor . On the GPU, eliminating these loads and stores to de vice memory is a high priority optimization. W e will use the structure of the hyperspherical harmonic calculation as a motiv ator for our optimizations. The hyper- spherical harmonics are defined by a recursion relation u j = F ( u j − 1 2 ) (9) where F is a linear operator in which each of the (2 j + 1) 2 elements of u j is a linear combination of two adjacent elements of u j − 1 2 . The key observation is that this bounds the size of the state required for one atom, neighbor pair for any giv en value of j to 16 × (2 j ) 2 bytes, the 16 corresponding to a complex double, from the pre vious lev el. This moti v ates using low-latenc y GPU shar ed memory to cache the state, exposed in K okkos as scratch memory . Shared memory is a limited resource per GPU compute unit, which imposes a strict bound on the maximum thread occupancy , limiting the throughput of atom, neighbor pairs. This can be addressed using more CUD A threads per pair , both expediting the calculation and relaxing the occupancy limitations. Extra parallelism is af forded by the structure of (9): for fix ed j the (2 j + 1) 2 elements of u j can be computed independently and thus concurrently . Furthermore, we can exploit the symmetry property of u j to reduce the scratch memory requirements by roughly an additional factor of two. For fixed j , u j is dependent, up to a small overcounting for conv enience of indexing, on ceil ( j + 1 2 ) rows of u j − 1 2 . This reduces the scratch memory ov erheads to (2 j + 1)( floor ( j ) + 1) elements, ag ain times two for the double buf fer . Of important note, this memory layout optimization also carries o ver to Ulist and Uarraytot , roughly halving memory overheads, and also reducing the number of atomic additions required into Uarraytot . As a second, more nuanced optimization, we split the Uarraytot data structure into tw o data structures corresponding to the real and imaginary parts. This is informed by the lack of “ double2 ” atomics, where two consecutiv e double precision values are updated atomically . While this removes the benefit of 128-bit loads and stores, this does allow non-strided atomic additions, net boosting performance. An ef ficient implementation of this pattern is accomplished by assigning one GPU warp per atom, neighbor pair , exposed in K okkos via a V ectorRange construct. Here we parallelize computing the elements of u j ov er the “vector lanes. ” The compute U kernel in isolation achiev es a 5.2 × and 4.9 × speedup for the 2J8 and 2J14 problem sizes, respectively , gi ven these optimizations. This implementation has additional benefits. Compared with listing 7, this implementation fuses the parallel loops for com- puting Ulist and accumulating into Uarraytot . This eliminates the need to store any value to Ulist , allowing us to remove one of two data structures of size O ( J 3 N atom N nbor ) . Furthermore, now that one warp is assigned to each atom/neighbor pair, coalesced memory accesses require a return to a row-major or right layout for Uarraytot , remo ving the need for a transpose kernel before compute Y . W e note that the number of components in a giv en u j is generally not divisible by 32, the warp length, and as such some threads will go unused. While this is not ideal, this load imbalance is a reasonable penalty to pay relati ve to the overall speed-up. These optimizations transfer well to the compute dU kernel. A recursion relation for du j can be written from the deriv ati ve of (9). There are two novel new constraints on the kernel. First, because we hav e eliminated Ulist , we need to recompute values. This is net beneficial due to the relative cost of compute versus loads from memory . Next, because we need to compute dUlist for x, y , z , we need four times as much shared memory . Relativ e to compute U this is a prohibitive occupancy limiter . The solution is to split compute dU into three separate kernels, one per direction. Despite the redundant work this leads to a net speed-up. W e can eliminate the standing need to write dUlist to memory via kernel fusion with update forces . This requires re-introducing a global memory read from Ylist , ho wev er the latency of this read can be hidden behind the computation of dU ; further , because Ylist is a smaller data structure the reads are well cached. This is further improved by noting there is a one-to-one product between components of dU and Ylist in the kernel, letting us carry the symmetry properites of dU ov er to Ylist , again halving memory overheads. Last, because dE is a function of atom, neighbor pairs, it can be accumu- lated within the kernel via a low-o verhead parallel r educe construct. For discussion we will refer to this ne w kernel as compute fused dE . The aggregate benefit of shared memory optimizations, splitting the kernels per -direction, halving memory overheads, and fusing in the force computation is a 3.3 × and 5.0 × speedup for the 2J8 and 2J14 problem sizes, respectiv ely , for the new compute fused dE kernel in isolation. B. Data Layout Optimizations A second point of optimization is improving the data layout of the Ylist data structure. As described in V5 , the current data layout on the GPU has the flattened index j, j 1 , j 2 as the fast- moving inde x and atom number as the slow inde x. While this led to a large improvement in performance, it was not without flaw . As noted in (7), Y is a sum o ver elements of Z j j 1 j 2 , which it- self is a Clebsch-Gordon contraction of elements of U as seen in (2). The Clebsch-Gordon contractions are over a variable number of elements as a function of j, j 1 , j 2 . The conv ention of gi ving each thread of compute U a dif ferent Clebsch- Gordon contraction implies both imperfect read coalescing and a natural load imbalance between different sums. The former issue is a well-understood consequence of using an “array-of-structures” (AoS) data layout for Uarraytot and Ylist , where the “S” corresponds to the quantum numbers, while the “ A ” is for atom number . This layout was beneficial for Uarraytot because, in the optimized implementation of compute U , coalesced reads and writes over quantum numbers were naturally achieved—the “S” incidentally ended up acting as an “ A ” for the purpose of the kernel. On the other hand, compute Y sees no such benefit. Profiling indicates that, while compute Y does indeed lea ve read coalescing lacking, it still achiev es good L1 cache reuse, which sav es performance. This leav es the problem of load imbalance between dif ferent sums. The solution to this problem is changing the data layout of Uarraytot and Ylist to an “array-of-structures-of-arrays” (AoSoA) data layout, and changing the threading pattern appropriately . For the ne w data layout, the inner-most (fastest index) “ A ” is fixed at 32—the length of a CUDA warp. This guarantees perfect read and write coalescing. The intermediate index “S” is the same as before—all quantum numbers. Last, the outer-most “ A ” is ceil ( N atom / 32) . A natural threading pattern follo ws, most easily achiev ed by a MDRangeP olicy as noted in listing 4, e xcept no w it is a rank-3 polic y mapping hierarchically to the data layout above. Last, as compute Y also requires atomic additions, we follow the pattern noted for compute U of spliting the AoSoA data structure into real and imaginary parts. W ith this formulation, all reads and writes are perfectly coalesced, and there is perfect load balancing within a warp. For the compute Y kernel in isolation, this optimization leads to a 1.4x speed-up for both the 2J8 and 2J14 problem sizes, owed to the elimination of load imbalance within a warp. This reformulation to an AoSoA data layout does require the re-introduction of “transpose” kernels, as existed in opti- mization V6 . As was observed there, these additional transpose kernels are a negligible ov erhead relativ e to the improvement offered by the optimization of compute Y . C. Overall Impr ovements Fig. 4 shows the final performance comparison of the newer implementation in LAMMPS [18] over the baseline after all the optimizations discussed in this paper . For the 2J8 benchmark, we see a 19.6 × ov erall speedup ov er the baseline, Performance Comparison of Baseline implementation versus the most optimized 0 5 10 15 20 25 2J8 2J14 Baseline Optimized Fig. 4: Performance boost of ne w implementation compared to the older GPU implementation. whereas in the 2J14 case we observe a 21.7 × speedup. Memory use was also greatly reduced: the 2J8 benchmark now uses only 0.1 GB of GPU memory , while the 2J14 benchmark uses 0.9 GB. The fine-tuned optimizations for the GPU in this section lead to a performance regression on the CPU. F or this reason, in our LAMMPS Kokk os implementation we used div ergent code paths, where the CPU path largely uses only the opti- mizations up through section V. W e note that some aspects of the fine-tuned GPU optimiza- tions do carry over to the CPU codepath. One is e xploiting the symmetries of u j , as well as the implicit extensions to U j , ∂ U j ∂ r k , and Y via the one-to-one contraction with the deriv ati ve of U j . This can still be used to half memory ov erheads across these data structures, which is important gi ven the O ( N atom N nbor J 3 ) memory scaling of Ulist and dUlist in particular . W e note that backporting this optimization to the CPU (non-K okkos) codepath has not yet occurred, b ut will be done in the future. The second optimization of switching to an AoSoA data layout for Ylist also has a generalization to the CPU, where instead of the inner-most “ A ” being of length 32, correspond- ing to the size of the warp, the inner-most “ A ” corresponds to the vector index of a CPU SIMD data type. CPU SIMD optimizations to the SN AP potential will be explored in the future. V I I . C O N C L U S I O N S In this paper we presented our efforts to improve the performance of the SNAP interatomic potential by using the NVIDIA V100-16GB GPU as the benchmark hardware. W e hav e quantified the benefits of a series of algorithmic and implementation strategies, leading up to an aggregate ∼ 22 × speedup over a previous GPU implementation on the same hardware. The fundamental adjoint refactorization of Sec. IV was essential for a viable implementation. K ey implementation optimizations included the counterintuiti ve approach of kernel fission instead of kernel fusion, loop reordering and data access optimizations, and architecture-specific optimizations such as shared memory caching of intermediate computations. Each performance optimization in Sec. V is inherently performance portable. Additional shared memory optimization in Sec. VI are applicable across GPU architectures. The majority of our algorithmic explorations were done in the standalone T estSNAP proxy-app which enabled the rapid conception and prototyping of many different optimizations. W e encourage all dev elopers to dev elop proxy apps to shorten the design/imple- ment/test/revie w cycle as was beneficial here. Since then, all optimizations described here hav e been ported into the public release of the LAMMPS and are freely av ailable. R E F E R E N C E S [1] “T op500, ” 2020, [Online; accessed 19-October-2020]. [Online]. A vailable: https://www .top500.org/lists/2020/06/ [2] H. C. Edwards, C. R. Trott, and D. Sunderland, “Kokkos: Enabling manycore performance portability through polymorphic memory access patterns, ” Journal of P arallel and Distrib uted Computing , v ol. 74, no. 12, pp. 3202 – 3216, 2014. [3] D. A. Beckingsale, J. Burmark, R. Hornung, H. Jones, W . Killian, A. J. Kunen, O. Pearce, P . Robinson, B. S. Ryujin, and T . R. Scogland, “Raja: Portable performance for large-scale scientific applications, ” in 2019 IEEE/A CM International W orkshop on P erformance, P ortability and Pr oductivity in HPC (P3HPC) . IEEE, 2019, pp. 71–81. [4] J. Filipovi ˇ c, M. Madzin, J. Fousek, and L. Matyska, “Optimizing CUD A code by kernel fusion: application on BLAS, ” The Journal of Super computing , vol. 71, no. 10, pp. 3934–3957, 2015. [5] S. Plimpton, “Fast parallel algorithms for short-range molecular dynamics, ” J. Comput. Phys. , vol. 117, no. 1, pp. 1 – 19, 1995. [Online]. A v ailable: http://lammps.sandia.gov [6] S. J. Plimpton and A. P . Thompson, “Computational aspects of many- body potentials, ” MRS Bulletin , vol. 37, pp. 513–521, 2012. [7] A. P . Bart ´ ok, R. K ondor , and G. Cs ´ anyi, “On representing chemical en vironments, ” Phys. Rev . B , v ol. 87, p. 184115, May 2013. [8] J. Behler and M. Parrinello, “Generalized neural-network representation of high-dimensional potential-energy surfaces, ” Phys. Rev . Lett. , vol. 98, p. 146401, Apr 2007. [9] A. P . Bart ´ ok, M. C. Payne, R. Kondor , and G. Cs ´ anyi, “Gaussian approximation potentials: The accuracy of quantum mechanics, without the electrons, ” Phys. Rev . Lett. , vol. 104, p. 136403, Apr 2010. [10] M. Rupp, A. Tkatchenko, K.-R. M ¨ uller , and O. A. von Lilienfeld, “Fast and accurate modeling of molecular atomization ener gies with machine learning, ” Phys. Rev . Lett. , v ol. 108, p. 058301, Jan 2012. [11] A. Thompson, L. Swiler , C. T rott, S. F oiles, and G. T ucker , “Spectral neighbor analysis method for automated generation of quantum-accurate interatomic potentials, ” Journal of Computational Physics , vol. 285, pp. 316 – 330, 2015. [12] R. Drautz, “ Atomic cluster expansion for accurate and transferable interatomic potentials, ” Phys. Rev . B , vol. 99, p. 014104, Jan 2019. [13] S. Moore, C. T rott, and S. Plimpton, “ECP CoP A Milestone Report: Deploy ExaMiniMD optimizations into LAMMPS, ” 6 2018. [14] C. R. Trott, S. D. Hammond, and A. P . Thompson, “SN AP: Strong scaling high fidelity molecular dynamics simulations on leadership- class computing platforms, ” ISC 2014, Super computing, Lectur e Notes in Computer Science , v ol. 8488, p. 19, 2014. [15] “Lammps github commit, ” 2018, [Online]. [Online]. A v ailable: https://github .com/lammps/lammps/commit/39a09d3 [16] M. Bachmayr, G. Csanyi, G. Dusson, S. Etter , C. van der Oord, and C. Ortner, “ Approximation of potential energy surfaces with spherical harmonics, ” 2019. [17] “Lammps github commit, ” 2019. [Online]. A v ailable: https://github. com/lammps/lammps/commit/fe9f7f4 [18] “Lammps github commit, ” 2020. [Online]. A v ailable: https://github. com/lammps/lammps/commit/dae2cb2

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment