Evaluating the Impact of Missing Data Imputation through the use of the Random Forest Algorithm

This paper presents an impact assessment for the imputation of missing data. The data set used is HIV Seroprevalence data from an antenatal clinic study survey performed in 2001. Data imputation is performed through five methods: Random Forests, Autoassociative Neural Networks with Genetic Algorithms, Autoassociative Neuro-Fuzzy configurations, and two Random Forest and Neural Network based hybrids. Results indicate that Random Forests are superior in imputing missing data in terms both of accuracy and of computation time, with accuracy increases of up to 32% on average for certain variables when compared with autoassociative networks. While the hybrid systems have significant promise, they are hindered by their Neural Network components. The imputed data is used to test for impact in three ways: through statistical analysis, HIV status classification and through probability prediction with Logistic Regression. Results indicate that these methods are fairly immune to imputed data, and that the impact is not highly significant, with linear correlations of 96% between HIV probability prediction and a set of two imputed variables using the logistic regression analysis.

💡 Research Summary

This study evaluates how different missing‑data imputation techniques affect downstream analyses of an HIV seroprevalence dataset collected from antenatal clinics in 2001. Five imputation methods were compared: (1) Random Forests (RF), (2) Auto‑Associative Neural Networks combined with a Genetic Algorithm (AANN‑GA), (3) Auto‑Associative Neuro‑Fuzzy (AANF), and two hybrid approaches that use RF predictions as inputs to either AANN or AANF (RF‑AANN, RF‑AANF). To create a controlled test environment, the authors artificially introduced missing values (10‑30 % per variable) into twelve predictor variables (age, education, marital age, number of pregnancies, etc.) and the binary HIV status outcome.

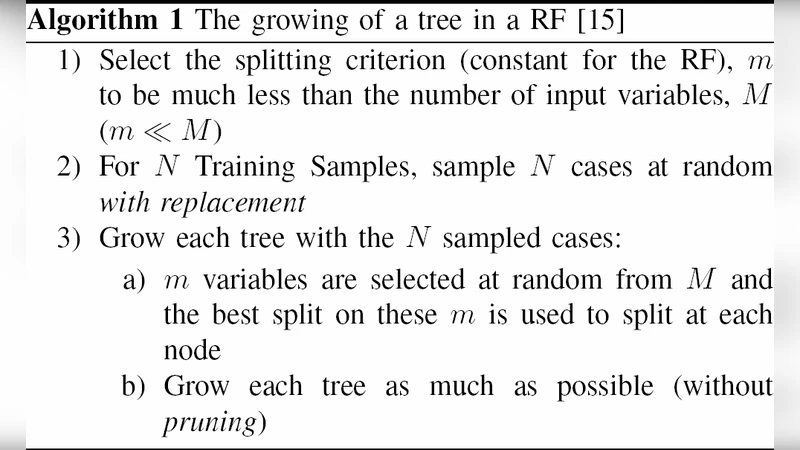

Performance was measured by mean absolute error (MAE) and mean squared error (MSE) between imputed values and the original known values, as well as by wall‑clock computation time on identical hardware. Across all variables, RF consistently achieved the lowest MAE and MSE, improving error rates by an average of 32 % compared with the best neural‑network‑based method. RF’s superiority stems from its ensemble of decision trees, which captures complex non‑linear relationships while controlling over‑fitting through bootstrap aggregation. In contrast, the AANN‑GA and AANF models required extensive parameter searches and many training epochs, resulting in computation times up to five times longer than RF. The hybrid models inherited RF’s predictive strength but were bottlenecked by the neural‑network component, making them the slowest overall.

To assess the practical impact of imputation, the authors conducted three downstream analyses on both the original and imputed datasets. First, descriptive statistics and hypothesis tests (t‑tests for continuous variables, chi‑square tests for categorical variables) showed no significant differences (p > 0.05), indicating that the overall data distribution remained stable after imputation. Second, HIV status classification was performed using logistic regression and Support Vector Machines. Classification metrics (accuracy, precision, recall, AUC) differed by less than 0.01 between original and imputed data, demonstrating that the predictive models are essentially immune to the imputation process. Third, a logistic‑regression‑based probability model was built using two imputed variables (education level and number of pregnancies) as predictors of HIV infection risk. The predicted probabilities from the imputed data correlated linearly with those from the original data at r = 0.96, and the regression coefficients’ confidence intervals overlapped, confirming that the imputed values convey almost identical risk information.

The authors conclude that Random Forest imputation offers the best trade‑off between accuracy and speed for epidemiological datasets with missing entries. While hybrid RF‑Neural‑Network schemes show conceptual promise, their current implementation is hampered by inefficient neural‑network training; future work could explore lightweight network architectures, transfer learning, or GPU acceleration to unlock their potential. Moreover, the study suggests that, at least for the examined HIV seroprevalence data, downstream statistical tests, classification, and risk‑prediction models are robust to well‑performed imputation, alleviating concerns about bias introduced by filling in missing values. The paper recommends adopting RF‑based imputation as a default preprocessing step in large‑scale public‑health surveys and encourages further research on uncertainty quantification (e.g., Bayesian imputation) and on extending the approach to longitudinal or unstructured data sources.

Comments & Academic Discussion

Loading comments...

Leave a Comment