Point Cloud Rendering after Coding: Impacts on Subjective and Objective Quality

Recently, point clouds have shown to be a promising way to represent 3D visual data for a wide range of immersive applications, from augmented reality to autonomous cars. Emerging imaging sensors have made easier to perform richer and denser point cloud acquisition, notably with millions of points, thus raising the need for efficient point cloud coding solutions. In such a scenario, it is important to evaluate the impact and performance of several processing steps in a point cloud communication system, notably the quality degradations associated to point cloud coding solutions. Moreover, since point clouds are not directly visualized but rather processed with a rendering algorithm before shown on any display, the perceived quality of point cloud data highly depends on the rendering solution. In this context, the main objective of this paper is to study the impact of several coding and rendering solutions on the perceived user quality and in the performance of available objective quality assessment metrics. Another contribution regards the assessment of recent MPEG point cloud coding solutions for several popular rendering methods which were never presented before. The conclusions regard the visibility of three types of coding artifacts for the three considered rendering approaches as well as the strengths and weakness of objective quality metrics when point clouds are rendered after coding.

💡 Research Summary

This paper investigates how the rendering stage, applied after point‑cloud (PC) compression and decoding, influences both subjective user quality and the performance of objective quality‑assessment metrics. Recent advances in 3D acquisition have made it possible to capture millions of points, creating a need for efficient compression. The authors focus on the two MPEG standards that have emerged for this purpose: Geometry‑based PCC (G‑PCC) for static and progressive point clouds, and Video‑based PCC (V‑PCC) for dynamic sequences. Both codecs employ different strategies—G‑PCC often increases the number of points to hide artifacts, while V‑PCC exploits temporal redundancy—resulting in distinct geometric distortions such as holes, noisy surfaces, and point duplication.

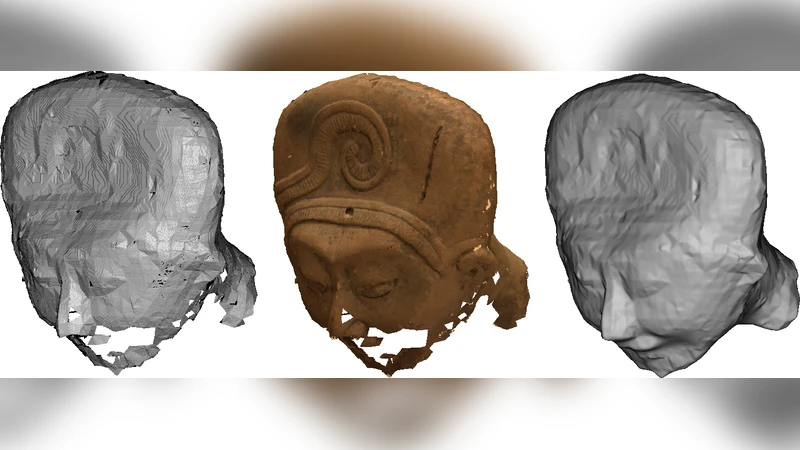

Because a point cloud cannot be displayed directly, a rendering algorithm must be applied after decoding. The study selects three representative rendering approaches that are widely used in the literature: (1) point‑based rendering, where each decoded point is displayed with a fixed size and optional recoloring; (2) mesh‑based rendering, where the point set is first converted into a polygonal mesh (e.g., via Poisson surface reconstruction) and then shaded; and (3) a hybrid method that combines point‑based display with depth‑buffer handling and simple shading. These methods differ in how they expose or conceal geometric artifacts. For instance, mesh‑based rendering can fill small holes through surface interpolation, but it may also amplify errors caused by excessive point duplication, while point‑based rendering shows every missing or noisy point directly.

To assess the perceptual impact, the authors conduct a double‑stimulus impairment scale (DSIS) subjective test with 24 participants. Twelve test conditions are formed by crossing the two codecs, three rendering methods, and two bitrate levels (high and low). Tests are performed on a 30‑inch 2D monitor and on an augmented‑reality (AR) headset, allowing the authors to examine whether display modality further modulates perceived quality. Participants view a reference (original, uncompressed) point cloud and a test version, then assign a mean opinion score (MOS) on a five‑point scale. Results reveal that MOS is far more sensitive to the rendering technique than to the codec itself. G‑PCC achieves the highest MOS when paired with point‑based rendering because its high point density masks holes, whereas the same codec yields lower MOS with mesh‑based rendering due to over‑refined meshes that introduce visual artifacts. V‑PCC performs relatively well on 2D displays but suffers on AR headsets where temporal inconsistencies become more noticeable in mesh form. Overall, the variance of MOS across rendering methods reaches up to 1.2 points, underscoring the critical role of post‑decoding visualization.

The paper also evaluates eight objective quality metrics: classic point‑to‑point mean squared error (PC‑MSE), point‑to‑plane distance (P2plane), Hausdorff distance, several normal‑based distances, and four recent learning‑based metrics trained on synthetic distortions. Correlation analysis shows that distance‑based metrics correlate reasonably well (R² ≈ 0.78) with MOS for mesh‑based rendering, but their correlation drops significantly (R² ≈ 0.45) for point‑based rendering where the visual impact of missing points is not captured by simple Euclidean distances. Normal‑based metrics improve correlation for mesh rendering because they reflect surface smoothness, yet they still miss the perceptual penalty caused by point duplication. The learning‑based metrics, trained on datasets that lack realistic MPEG‑codec artifacts and diverse rendering pipelines, perform poorly across all conditions, indicating a need for training data that includes rendering‑specific distortions.

A major contribution of the work is the release of the “Rendered Point Cloud Quality Assessment Dataset.” The dataset contains the original point clouds, compressed versions from G‑PCC and V‑PCC at two bitrates, rendered images for each of the three rendering methods, the collected MOS values, and the computed objective metric scores. This publicly available resource fills a gap in the community, where most existing datasets either ignore the rendering step or use only simplistic codecs and noise models.

In conclusion, the study demonstrates that rendering after coding is not a neutral post‑processing step but a decisive factor that shapes both human perception and the apparent validity of objective quality measures. Existing objective metrics, largely designed for a single rendering paradigm, fail to generalize across the diverse rendering pipelines used in practice. The authors argue for a joint optimization framework where codecs are designed with awareness of the downstream rendering method, and for the development of new objective metrics that explicitly model rendering‑induced visual effects. Future work should explore adaptive rendering strategies that mitigate codec‑specific artifacts, incorporate color attributes, and extend the analysis to dynamic point‑cloud sequences and stereoscopic displays. The dataset and findings presented here provide a solid foundation for such research directions.

Comments & Academic Discussion

Loading comments...

Leave a Comment