Robust Forecasting

💡 Research Summary

This paper, “Robust Forecasting,” addresses the challenge of forecasting discrete outcomes when the forecaster faces uncertainty about the true predictive distribution. This uncertainty can arise from partial identification of model parameters, concerns about model misspecification, or the possibility of structural breaks at the forecast origin. The authors employ a statistical decision-theoretic framework to derive forecasting rules that are robust to such ambiguity.

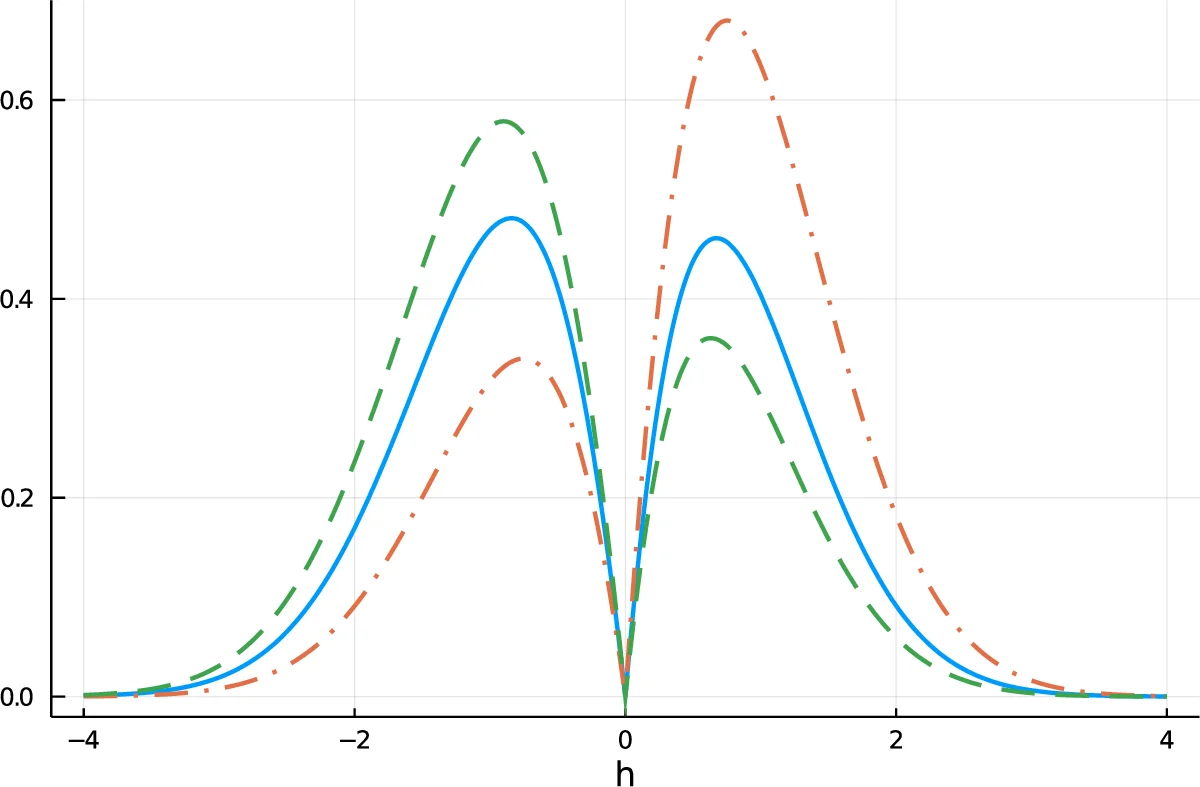

The core of the methodology is to minimize either the maximum risk (minimax loss) or the maximum regret (minimax regret) over the set of plausible forecast distributions, denoted Θ0. For binary forecasting problems under standard loss functions (binary, quadratic, logarithmic), the optimal robust forecast is shown to depend only on the minimum (p_L) and maximum (p_U) conditional probability of the event occurring, computed over all distributions in Θ0. The robust forecast predicts the event if the midpoint of these extremes, (p_L + p_U)/2, exceeds a threshold. This result reduces the complex minimax problem to a small number of convex optimization problems, which can often be solved efficiently using duality methods, particularly for semiparametric models such as dynamic discrete choice panel models.
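The midpoint rule can be illustrated with a minimal Python sketch. It assumes symmetric 0-1 loss and deterministic forecasts, in which case the worst-case risk of predicting the event is 1 - p_L and the worst-case risk of predicting no event is p_U, so the comparison reduces to the midpoint against 1/2. The function names, the finite list of candidate probabilities standing in for Θ0, and the default threshold of 0.5 are assumptions made for illustration and are not taken from the paper.

```python
from typing import Iterable, Tuple


def probability_bounds(probs: Iterable[float]) -> Tuple[float, float]:
    """Return (p_L, p_U) over a finite stand-in for the set Theta_0 (assumption)."""
    values = list(probs)
    if not values:
        raise ValueError("need at least one candidate probability")
    return min(values), max(values)


def max_binary_risk(action: int, p_lo: float, p_hi: float) -> float:
    """Worst-case 0-1 risk of a deterministic forecast over [p_lo, p_hi].

    Predicting 1 errs with probability 1 - p (worst at p_lo);
    predicting 0 errs with probability p (worst at p_hi).
    """
    return 1.0 - p_lo if action == 1 else p_hi


def robust_binary_forecast(probs: Iterable[float], threshold: float = 0.5) -> int:
    """Predict the event (1) when the midpoint of the bounds exceeds the threshold."""
    p_lo, p_hi = probability_bounds(probs)
    return 1 if (p_lo + p_hi) / 2 > threshold else 0


# Hypothetical conditional probabilities implied by three models in Theta_0.
candidates = [0.35, 0.5, 0.8]
forecast = robust_binary_forecast(candidates)
```

With these hypothetical inputs the midpoint is above 0.5, and the worst-case risk of predicting the event (1 - 0.35) is smaller than that of predicting no event (0.8), so the two views agree. Thresholds for other loss functions are not given in this summary and would need to be taken from the paper.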

A key insight of the paper is that in models with non-Markovian dynamics, such as panel binary choice models with unobserved heterogeneity, different parameter values within the identified set (which are indistinguishable on the basis of in-sample data) can generate meaningfully different and unequally accurate forecasts for future periods. This contrasts with models such as VARs, where forecasts depend only on the identified reduced form.

The paper further tackles the practical issue where the set Θ0 itself must be estimated from data. The authors propose evaluating forecasts by their integrated maximum risk/regret, which averages over both the data and the underlying identifiable reduced-form parameter, P. Under this criterion, the optimal forecast is the Bayesian Robust Forecast. It minimizes the posterior maximum risk/regret, effectively averaging out the uncertainty about P using its posterior distribution given the observed data.
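As a rough illustration of posterior averaging, the following Python sketch picks the binary forecast whose maximum 0-1 risk, averaged over posterior draws of P, is smallest. It is a sketch under stated assumptions, not the authors' implementation: `toy_bounds` is a hypothetical map from P to (p_L, p_U), the Beta posterior is a made-up example, and the restriction to 0-1 loss with deterministic forecasts is an assumption.

```python
import random
from statistics import fmean
from typing import Callable, Sequence, Tuple

Bounds = Callable[[float], Tuple[float, float]]


def toy_bounds(p: float) -> Tuple[float, float]:
    """Hypothetical map from the reduced-form parameter P to (p_L, p_U)."""
    half_width = 0.2
    return max(p - half_width, 0.0), min(p + half_width, 1.0)


def posterior_max_risks(draws: Sequence[float], bounds: Bounds) -> Tuple[float, float]:
    """Posterior mean of the worst-case 0-1 risk for predicting 0 and for predicting 1."""
    risk_predict_0 = fmean(bounds(p)[1] for p in draws)        # worst case is p_U
    risk_predict_1 = fmean(1.0 - bounds(p)[0] for p in draws)  # worst case is 1 - p_L
    return risk_predict_0, risk_predict_1


def bayesian_robust_forecast(draws: Sequence[float], bounds: Bounds) -> int:
    """Choose the action with the smaller posterior maximum risk."""
    risk_predict_0, risk_predict_1 = posterior_max_risks(draws, bounds)
    return 1 if risk_predict_1 < risk_predict_0 else 0


# Made-up posterior for P: Beta(31, 11), e.g. 30 successes in 40 trials, uniform prior.
rng = random.Random(0)
posterior_draws = [rng.betavariate(31, 11) for _ in range(2000)]
forecast = bayesian_robust_forecast(posterior_draws, toy_bounds)
```

The averaging happens over the risks, not over a single point estimate of P, which is what distinguishes this rule from a plug-in forecast evaluated at one value of P.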

Finally, the authors develop an asymptotic efficiency theory. They show that forecasts which are asymptotically equivalent to the Bayesian Robust Forecast minimize asymptotic integrated maximum risk/regret. Such forecasts are termed asymptotically efficient-robust. A significant finding is that simple “plug-in” forecasts, which replace P with an efficient first-stage estimator, can be strictly dominated by the Bayesian robust forecast. This suboptimality occurs because the key statistics determining the robust forecast (like p_L and p_U) may be non-differentiable (or only directionally differentiable) functions of P. In contrast, “bagging” predictors, which average forecasts over the bootstrap distribution of an efficient estimator of P, often achieve asymptotic efficient-robustness.
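The contrast between plug-in and bagged forecasts can be sketched in Python as follows. This is one literal reading of "averaging forecasts over the bootstrap distribution", assuming a nonparametric bootstrap of a sample mean as the estimator of P and a midpoint rule with threshold 0.5. The map `toy_bounds`, the sample data, and all function names are hypothetical, and the summary does not specify how the paper constructs the bagged forecast in detail.

```python
import random
from statistics import fmean
from typing import List, Tuple


def toy_bounds(p: float) -> Tuple[float, float]:
    """Hypothetical map from P to (p_L, p_U); clipping at 0 and 1 creates kinks."""
    return max(p - 0.2, 0.0), min(p + 0.2, 1.0)


def plug_in_forecast(p_hat: float) -> int:
    """Midpoint rule evaluated at a single point estimate of P."""
    p_lo, p_hi = toy_bounds(p_hat)
    return 1 if (p_lo + p_hi) / 2 > 0.5 else 0


def bagged_forecast(data: List[int], n_boot: int = 500, seed: int = 0) -> float:
    """Average the plug-in forecast over bootstrap resamples of the data."""
    rng = random.Random(seed)
    n = len(data)
    decisions = []
    for _ in range(n_boot):
        resample = rng.choices(data, k=n)  # draw n observations with replacement
        decisions.append(plug_in_forecast(fmean(resample)))
    return fmean(decisions)


# Made-up sample sitting exactly at the decision boundary: 20 ones out of 40.
sample = [1] * 20 + [0] * 20
plug_in = plug_in_forecast(fmean(sample))
bagged = bagged_forecast(sample)
```

The plug-in forecast is a hard 0 or 1 that can flip with a small change in the estimate of P, whereas the bagged value lies in [0, 1] and varies smoothly with the data near the boundary. How that averaged value is turned into a final forecast under each loss function is left to the paper.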

In summary, this work provides a coherent and computationally feasible decision-theoretic framework for robust discrete outcome forecasting. It offers solutions for handling uncertainty stemming from identification issues, model distrust, and potential breaks, and demonstrates the superiority of Bayesian averaging or bagging approaches over simple plug-in methods in this context.
